Tag Archives: brain

This continues my discussion of A Computational Foundation for the Study of Cognition, a 1993 paper by philosopher and cognitive scientist David Chalmers (republished in 2012). The reader is assumed to have read the paper and the previous post.

I left off talking about the difference between the causality of the (human) brain and having that “causal topology” abstractly encoded in an algorithm implementing a Mind CSA (Combinatorial-State Automaton). The contention is that executing this abstract causal topology has the same result as the physical system’s causal topology.

As always, it boils down to whether process matters.

Continue reading

54 Comments | tags: algorithm, brain, brain mind problem, Church-Turing thesis, computation, computationalism, computer model, computer program, David Chalmers, human brain, human mind, mind, positronic brain, theory of mind | posted in Computers

I’ve always liked (philosopher and cognitive scientist) David Chalmers. Of those working on a Theory of Mind, I often find myself aligned with how he sees things. Even when I don’t, I still find his views rational and well-constructed. I also like how he conditions his views and acknowledges controversy without disdain. A guy I’d love to have a beer with!

Back during the May Mind Marathon, I followed someone’s link to a paper Chalmers wrote. I looked at it briefly, found it interesting, and shelved it for later. Recently it popped up again on my friend Mike’s blog, plus my name was mentioned in connection with it, so I took a closer look and thought about it…

Then I thought about it some more…

Continue reading

11 Comments | tags: algorithm, brain, brain mind problem, computation, computationalism, computer model, computer program, David Chalmers, human brain, human mind, mind, positronic brain, theory of mind | posted in Computers, Philosophy

After a weekend of transistorized baseball, it’s time to get back to wandering through pondering consciousness. I laid down a few cobblestones last week; time to add a few more to the road. Eventually I’ll have something on which I can drive an argument.

There are a number of classic, or at least well-known, arguments for and against computationalism. They variously involve Pixies, different kinds of Zombies, people trapped in different kinds of rooms, and rock walls that compute. (In fact, they compute rooms that trap Pixies. And everything else.)

Today I’m going to ruminate on the world’s most unfortunate file clerk.

Continue reading

91 Comments | tags: brain, brain mind problem, computationalism, consciousness, human brain, human consciousness, human mind, John Searle, mind, The Chinese Room, Theory of Consciousness, theory of mind | posted in Computers, Philosophy, Science

When it comes to consciousness, one of the top challenges is defining what it is. (Some insist it doesn’t even exist, which makes defining it even more of a challenge.) Part of the problem is that there is no single correct definition. There never really has been.

There is also the distinction between sentience (essentially the ability to feel pain as pain) and sapience (roughly: wisdom). Lots of animals are sentient, but sapience seems to be a property of human consciousness.

Which raises the question: Are humans just a point on a spectrum, or is there some sort of “band gap” between higher and lower forms?

Continue reading

24 Comments | tags: brain, brain mind problem, consciousness, human brain, human consciousness, human mind, mind, Theory of Consciousness, theory of mind | posted in Opinion, Philosophy

On the one hand, a main theme here is theories of consciousness. On the other hand, it’s been almost eight years blogging, and I’ve covered my views pretty well in numerous posts and comment threads. Our understanding of consciousness currently seems stuck pending new discoveries, either in answering hard questions, or in providing entirely new paths.

A while back I determined to step away from debates (even blogs) that center on topics with no resolution. Religion is a big one, but theories of mind are another. Your view depends on your axioms. Unless (or until) science provides objective answers, everyone is just guessing.

But it’s been three-and-a-half years, and, well… I have some notes…

Continue reading

8 Comments | tags: AI, brain, brain mind problem, chaos theory, Cogito ergo sum, computationalism, computer model, computer program, consciousness, human brain, human consciousness, human mind, information theory, Isaac Asimov, mind, stored program computer, Theory of Consciousness, Von Neumann architecture | posted in Computers, Opinion, Science

A while back I realized I had an Engineer’s Mind. I’ve always had a sense of that. What I realized was the significance of the Engineer’s Mind category. And of other categories of Mind — for example an Artist’s Mind (which I didn’t discover I also had until high school; see My Life 2.0).

Having a given Mind doesn’t mean one is necessarily good at something (skill takes practice), but it does suggest a predisposition or talent for it. Our minds seem to come pre-wired in two ways: core wiring that makes us human, and “flavor” wiring that gives us (some of our) basic traits. For instance, some people have (or strongly do not have) a Math Mind.

I’ve found Mind a useful metaphor as well as a game to play.

Continue reading

7 Comments | tags: art, artist, brain, engineer, engineering, engineers, human brain, human mind, mind, mind-set, teacher | posted in Basics, Life

Over the last few weeks I’ve written a series of posts leading up to the idea of human consciousness in a machine. In particular, I focused on the difference between a physical model and a software model, and especially on the requirements of the software model.

The series is over and I have nothing particularly new to add, but I’d like to summarize my points and provide an index to the posts in this series. It seems I may have given readers a bit of information overload: too much to process.

Hopefully I can achieve better clarity and brevity here!

Continue reading

30 Comments | tags: AI, algorithm, brain, brain mind problem, chaos theory, computationalism, computer model, computer program, consciousness, human brain, human consciousness, human mind, information theory, mind, My brain is full, stored program computer, Theory of Consciousness, Von Neumann architecture | posted in Computers

Last time I introduced four levels of possibility regarding how mind is related to brain. Behind Door #1 is a Holy Grail of AI research, a fully algorithmic implementation of a human mind. Behind Door #4 is an ineffable metaphysical mind no machine can duplicate.

The two doors between lead to physical models that recapitulate the structure of the human brain. Behind Door #3 is the biology of the brain, a model we know creates mind. Behind Door #2 is the network of the brain, which we presume encodes the mind regardless of its physical construction.

This time we’ll look more closely at some distinguishing details.

Continue reading

14 Comments | tags: AI, algorithm, brain, calculator, computationalism, computer model, computer program, consciousness, enchanted loom, human brain, human consciousness, LTP, neural correlates, qualia, self-awareness, slide rule, software model, synapse | posted in Computers

Last week we took a look at a simple computer software model of a human brain. (We discovered that it was big, requiring dozens of petabytes!) One goal of such models is replicating consciousness — a human mind. That can involve creating a (potentially superior) new mind or uploading an existing human mind (a very different goal).

Now that we’ve explored the basics of calculation, code (software), computers, and (computer software) models, we’re ready to explore what’s involved in attempting to model a (human) mind.

I’m dividing the possibilities into four basic levels.

Continue reading

18 Comments | tags: AI, algorithm, brain, computationalism, computer model, computer program, consciousness, enchanted loom, human brain, human consciousness, human mind, Isaac Asimov, mind, physicalism, positronic brain, qualia, René Descartes | posted in Computers

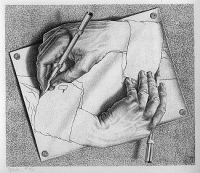

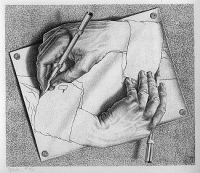

If you have read this blog much, you know that a topic that interests me greatly is the nature of consciousness. How is it that a three-pound clump of cells, a brain, gives rise to the rich experience of consciousness, our minds? Cognitive scientist David Chalmers termed this “the hard problem” of consciousness, and as it stands we really have no idea what consciousness is (and yet we all experience it all the time).

Back in 1979 cognitive scientist Douglas Hofstadter wrote Gödel, Escher, Bach, a book that attempts to answer the question. GEB, as it became known, was a large book most took as a random tour of interesting scientific ideas. But GEB did have a theme, so almost three decades later Hofstadter wrote another (much shorter) book to restate his case.

That book is called I Am a Strange Loop, and it has much worth considering!

Continue reading

46 Comments | tags: brain, David Chalmers, Douglas Hofstadter, experience, GEB, mind, Paris Hilton, Philosophical zombie, Theory of Consciousness | posted in Philosophy, Science
