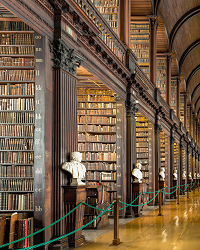

In this corner, philosopher John Searle (1932–), weighing in with what I like to call the Giant File Room (GFR). The essential idea is of a vast database capable of answering any question. The question it poses is whether we would see this ability as “conscious” behavior. (Searle’s implication is that we would not.)

In that corner, philosopher and mathematician Kurt Gödel (1906–1978), weighing in with his Incompleteness Theorems. The essential idea there is that no consistent formal system capable of expressing arithmetic can prove every truth expressible in its own language.

It’s possible that Gödel has a knockout punch for Searle…

As a result of lurking on various online discussions, I’ve been thinking about computationalism in the context of structure versus function. It’s another way to frame the Yin-Yang tension between a simulation of a system’s functionality and that system’s physical structure.

After a weekend of