Tag Archives: algorithm

This is the third post of a series exploring the duality I perceive in digital computation systems. In the first post I introduced the “mind stacks” — two parallel hierarchies of levels, one leading up to the human brain and mind, the other leading up to a digital computer and a putative computation of mind.

In the second post I began to explore in detail the level of the second stack, labeled Computer, in terms of the causal gap between the physical hardware and the abstract software. This gap, or dualism, is in sharp contrast to other physical systems that can, under a broad definition of “computation,” be said to compute something.

In this post I’ll continue, and hopefully finish, that exploration.

Continue reading

9 Comments | tags: algorithm, analog computer, computation, computationalism, computer program, digital computer | posted in Computers

Digital Computer

In the previous post I introduced the “mind stacks” — two essentially parallel hierarchies of organization (or maybe “zoom level” is a more apt term) — and the premise of a causal disconnect in the block labeled Computer. In this post I’ll pick up where I left off and discuss that disconnect in detail.

A key point involves what we mean by digital computation — as opposed to more informal, or even speculative, notions sometimes used to expand the meaning of computation. The question is whether digital computing is significantly different from these.

The goal of these posts is to demonstrate that it is.

Continue reading

7 Comments | tags: algorithm, analog computer, computation, computationalism, computer program, digital computer | posted in Computers

The Age of Fire is a key milestone for a would-be technological civilization. Fire is a dividing line, a technology that gave us far more effectiveness. Fire provides heat, light, cooking, defense, fire-hardened wood and clay, and eventually metallurgy.

The Age of the Electron is another key technological milestone. Electricity provides heat and light without fire’s dangers and difficulties, it drives motors, and enables long-distance communication. It leads to an incredible array of technologies.

The Age of the Algorithm is just as much of a game-changer.

Continue reading

31 Comments | tags: algorithm, computation, computationalism, computer program, digital computer | posted in Computers

In the nearly nine years of this blog I’ve written many posts about human consciousness in the context of computers. Human consciousness was a key topic from the beginning. So was the idea of conscious computers.

In the years since, there have been myriad posts and comment debates. They’ve provided a nice opportunity to explore and test ideas (mine and others’), and my views have evolved over time. One idea I’ve grown increasingly skeptical of is computationalism, but it depends on which of two flavors of it we mean.

I find one flavor fascinating but can see the other as only metaphor.

Continue reading

10 Comments | tags: algorithm, analog computer, brain, brain mind problem, computationalism, digital computer, human brain, human mind | posted in Computers, Philosophy

I cracked up when I saw the headline: Why your brain is not a computer. I kept on grinning while reading it because it makes some of the same points I’ve tried to make here. It’s nice to know other people see these things, too; it’s not just me.

Because, to quote an old gag line, “If you can keep your head when all about you are losing theirs… perhaps you’ve misunderstood the situation.” The prevailing attitude seems to be that brains are just machines that we’ll figure out, no big deal. So, it’s certainly (and ever) possible my skepticism represents my misunderstanding of the situation.

But if so, I’m apparently not the only one…

Continue reading

41 Comments | tags: algorithm, brain, brain mind problem, computationalism, consciousness, human brain, human consciousness, human mind, Matthew Cobb, mind, Theory of Consciousness | posted in Philosophy, Science

In this corner, philosopher John Searle (1932–), weighing in with what I like to call the Giant File Room (GFR). The essential idea is of a vast database capable of answering any question. The question it poses is whether we would see this ability as “conscious” behavior. (Searle’s implication is that we would not.)

In that corner, philosopher and mathematician Kurt Gödel (1906–1978), weighing in with his Incompleteness Theorems. The essential idea there is that no consistent (arithmetic) system can prove all possible truths about itself.

It’s possible that Gödel has a knockout punch for Searle…

Continue reading

24 Comments | tags: algorithm, Incompleteness Theorems, John Searle, Kurt Gödel, The Chinese Room | posted in Philosophy, Science

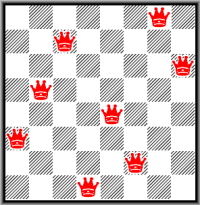

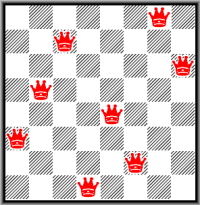

One solution to the puzzle.

I’ve written a lot lately about the physical versus the virtual. I’ve also written about algorithms and the role they play. In this post, I revisit both by exploring what is, for me, an old friend: The Eight Queens Puzzle. The goal is to place eight chess queens on a chessboard such that none can take another in a single move.

The puzzle is simple enough, yet just challenging enough, that it’s a good problem for first-year student programmers to solve. That’s where I met it, and it’s been a kind of “Hello, World!” algorithm for me ever since.

I thought it might be a fun way to explore a simple virtual reality.
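For readers who haven’t met the puzzle, here is a minimal sketch of one common way to solve it: row-by-row backtracking. This is not necessarily the approach the full post takes. The names `solve_queens`, `safe`, and `place` are made up for illustration.

```python
def solve_queens(n=8):
    """Yield every solution as a tuple: index = row, value = queen's column.

    Illustrative backtracking sketch; names are hypothetical, not from the post.
    """
    cols = []  # cols[r] holds the column of the queen already placed in row r

    def safe(col):
        # A new queen in the next row must not share a column or a diagonal
        # with any queen placed so far (rows are distinct by construction).
        row = len(cols)
        for r, c in enumerate(cols):
            if c == col or abs(c - col) == row - r:
                return False
        return True

    def place():
        if len(cols) == n:
            yield tuple(cols)
            return
        for col in range(n):
            if safe(col):
                cols.append(col)    # tentatively place a queen
                yield from place()  # try to fill the remaining rows
                cols.pop()          # backtrack and try the next column

    yield from place()


solutions = list(solve_queens(8))
print(len(solutions))  # 92 distinct placements on a standard 8x8 board
```

Placing exactly one queen per row rules out row conflicts up front, so only columns and diagonals need checking.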

Continue reading

14 Comments | tags: abstract system, algorithm, chess, chess queen, chessboard, computationalism, eight queens puzzle, information system, physical system, virtual reality | posted in Computers

Math version 1.0

This image here of the Mandelbrot fractal might look like one of the uglier renderings you’ve seen, but it’s a thing of beauty to me. That’s because some code I wrote created it. Which, in itself, isn’t a deal (let alone a big one), but how that code works kind of is (at least for me).

The short version: the code implements special virtual math for calculating the Mandelbrot. That the image looks anything at all like it should shows the code works.

Yet according to that image, something wasn’t quite right.
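For context, the ordinary way to calculate the Mandelbrot set is the escape-time iteration sketched below, which uses Python’s built-in complex numbers. This is only the conventional baseline, not the special virtual math the post describes. The function names, the bailout radius of 2, and the plot bounds are illustrative assumptions.

```python
def escape_count(c, max_iter=50):
    """Return how many iterations of z -> z*z + c run before |z| exceeds 2.

    Points that never escape within max_iter are treated as inside the set.
    """
    z = 0j
    for i in range(max_iter):
        if abs(z) > 2.0:
            return i
        z = z * z + c
    return max_iter


def render(width=60, height=24, max_iter=50):
    """Return rows of text: '#' for points inside the set, '.' for outside."""
    rows = []
    for y in range(height):
        im = 1.2 - 2.4 * y / (height - 1)      # imaginary axis, top to bottom
        row = ""
        for x in range(width):
            re = -2.2 + 3.2 * x / (width - 1)  # real axis, left to right
            inside = escape_count(complex(re, im), max_iter) == max_iter
            row += "#" if inside else "."
        rows.append(row)
    return rows


print("\n".join(render()))
```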

Continue reading

9 Comments | tags: algorithm, Mandelbrot, Mandelbrot fractal, virtual reality | posted in Computers

In the last post I explored how algorithms are defined and what I think is — or is not — an algorithm. The dividing line for me has mainly to do with the requirement for an ordered list of instructions and an execution engine. Physical mechanisms, from what I can see, don’t have those.

For me, the behavior of machines is only metaphorically algorithmic. Living things are biological machines, so this applies to them, too. I would not be inclined to view my kidneys, liver, or heart, as embodied algorithms (their behavior can be described by algorithms, though).

Of course, this also applies to the brain and, therefore, the mind.

Continue reading

43 Comments | tags: algorithm, brain, brain mind problem, computationalism, consciousness, human brain, human consciousness, human mind, mind, Theory of Consciousness, theory of mind | posted in Computers

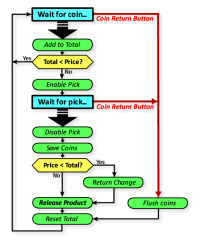

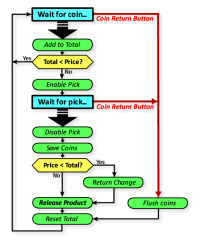

There’s a discussion that’s long lurked in a dusty corner of my thinking about computationalism. It involves the definition and role of algorithms. The definition isn’t particularly tricky, but the question of what fits that definition can be. Their role in our modern life is undeniably huge — algorithms control vast swaths of human experience.

Yet some might say even the ancient lowly thermostat implements an algorithm. In a real sense, any recipe is an algorithm, and any process has some algorithm that describes that process.

But the ultimate question involves algorithms and the human mind.

Continue reading

8 Comments | tags: algorithm, computation, computationalism, digital | posted in Computers
