Math version 1.0

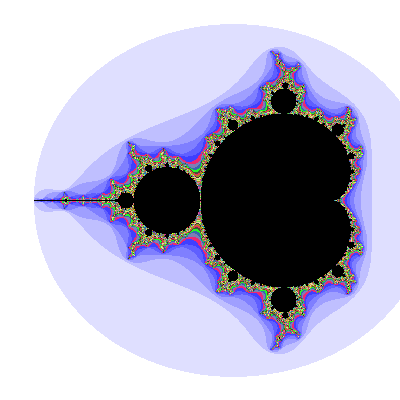

This image here of the Mandelbrot fractal might look like one of the uglier renderings you’ve seen, but it’s a thing of beauty to me. That’s because some code I wrote created it. Which, in itself, isn’t a deal (let alone a big one), but how that code works kind of is (at least for me).

The short version: the code implements special virtual math for calculating the Mandelbrot. That the image looks anything at all like it should shows the code works.

Yet according to that image, something wasn’t quite right.

See the jagged edges on the big blue bands of color around the Mandelbrot set (the round black parts and the “needle” sticking out to the left)? They shouldn’t be jagged.

Not unless every Mandelbrot rendering I’ve seen does something to smooth out the edges (and I don’t believe that).

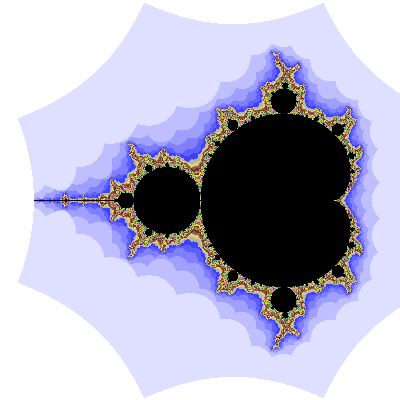

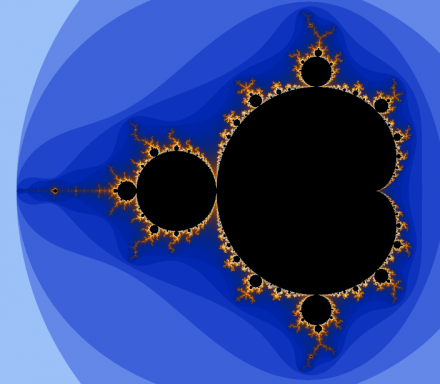

I expected something that looks like the one below (done with Ultra Fractal 6):

There is a coloring algorithm that smooths the banding such that one band blends nicely into another — that’s common to the point of being the default.

Band smoothing results in something like this (also from UF6):

But those ragged edges — something is going on there.

The weird thing: What kind of error gives me ragged edges, but still generates a correct-looking Mandelbrot? A math error seems like it should result in worse damage to the image.

§

I had an idea what might be at fault. A known bug, but I didn’t see how it mattered here (turns out it didn’t).

Plan A was to fix that and try another render, but the code is calculation-intensive: that 200×200 jagged image above took over two hours. If the bug wasn’t the problem, I’d have gained nothing after hours of rendering.

I didn’t have that kind of patience.

Plan B was to investigate the reason for the jagged edges. Understanding the cause should point to the fix. Which it did.

The short version: The math code itself was fine. The jagged edges came from the Mandelbrot generation code.

After 12 hours of overnight calculation, here’s the 400×400 pixel result:

Math version 2.0!

Which looks a lot better.

The scalloping of the edges — also an artifact of the generation code — I can live with because the alternative is horrifying.

§ §

At this point you might be wondering what this post is about and, more importantly, whether it is worth reading.

To be honest, it almost certainly is not.

This is me commemorating a small milestone regarding some code I’ve been working on. Make no mistake: What I’m doing is strictly Amateur Night at the Mandelbrot. I’m dabbling in something that interests me — building ships in bottles, so to speak.

This is not likely to be of much interest to others, so caveat lector.

[That said, I’m not going to geek out much on the code itself. I have a programming blog for that. I am going to geek out on the Mandelbrot a bit.]

§ §

Basic Background #1: The Mandelbrot is generated by doing a repeating calculation (the same calculation) on every point of the image. The calculation repeats until: [A] some max limit is reached; or [B] the calculation “escapes” — reaches a value greater than 2.

Points that escape are not in the Mandelbrot set; points that never escape are. (Which makes the M-set impossible to fully calculate: some points that max out might eventually escape.)
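Here's a minimal Python sketch of that repeating calculation. It assumes the usual Mandelbrot iteration, z → z² + c starting from z = 0 (the post doesn't spell out the formula), and it uses native complex numbers rather than the virtual math. The names `escape_count` and `MAX_ITER` are made up for illustration.

```python
MAX_ITER = 1000  # assumed cap, matching the max=1000 in the pseudocode later in the post

def escape_count(c, max_iter=MAX_ITER):
    """Return the number of repeats before c escapes, or None if it never does."""
    z = 0j
    for n in range(1, max_iter + 1):
        z = z * z + c          # the same calculation, repeated
        if abs(z) > 2.0:       # [B] escaped: distance from the center exceeds 2
            return n
    return None                # [A] hit the max limit, so treat the point as in the set

print(escape_count(0j))        # None: the origin never escapes
print(escape_count(1 + 0j))    # 3: the orbit goes 1, 2, 5
```

Returning None for "maxed out" keeps the two exit conditions distinct, which matters because a maxed-out point is only presumed to be in the set.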

Incidentally, that the same calculation is done on each pixel means the Mandelbrot is embarrassingly parallel — every pixel can be calculated simultaneously. My dream is using a GPU stack to create a highly parallelized Mandelbrot Machine.
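To show what "embarrassingly parallel" means in practice, here's a small sketch that farms out image rows to a worker pool. Everything in it (the function names, the 40×40 size, the coordinate window) is invented for the example, and it uses native complex numbers. Threads are used only because they're simple to demonstrate; in CPython they won't actually speed up this kind of number crunching, which is why processes or a GPU are the real targets.

```python
from concurrent.futures import ThreadPoolExecutor

def escape_count(c, max_iter=100):
    """Repeats needed for c to escape (0 means it never did within max_iter)."""
    z = 0j
    for n in range(1, max_iter + 1):
        z = z * z + c
        if abs(z) > 2.0:
            return n
    return 0

def render_row(row, size=40):
    """Compute one image row; it needs nothing from any other row."""
    y = 1.5 - 3.0 * row / (size - 1)              # imaginary part, +1.5 down to -1.5
    return [escape_count(complex(-2.25 + 3.0 * col / (size - 1), y))
            for col in range(size)]

def render(size=40, workers=4):
    """Hand the rows to a pool; map() returns them in row order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda r: render_row(r, size), range(size)))

image = render()
```

The point is that `render_row` never looks at a neighbor, so rows (or individual pixels) can be computed in any order, on any number of workers, and the image comes out the same.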

§

Basic Background #2: Traditionally, points in the M-set are rendered black: the main cardioid shape, the circular bays, and the threads and mini-brots seen in zooms.

The colorful parts are outside. A traditional method uses the number of calculations it took to escape. This number is then mapped to some color palette. The color of a pixel indicates how long it took to escape.
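A bare-bones sketch of that mapping, assuming the escape count arrives as an integer (or None for points that never escaped). The palette values are invented; any list of colors would do.

```python
# A made-up palette of blues; real palettes usually have hundreds of entries.
PALETTE = [(0, 0, 64), (0, 0, 128), (0, 64, 192), (0, 128, 255), (128, 192, 255)]
BLACK = (0, 0, 0)

def pixel_color(count):
    """Map an escape count to an RGB color; None means the point never escaped."""
    if count is None:
        return BLACK                              # in the set: traditional black
    return PALETTE[(count - 1) % len(PALETTE)]    # cycle through the palette

print(pixel_color(1), pixel_color(6), pixel_color(None))
```

Cycling with the modulo is only one choice; stretching or compressing how counts map onto the palette is where a lot of the fun comes in.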

Most of the fun of Mandelbrot renderings is in the coloring schemes, the palettes, and how palettes are mapped. A full exploration would require a college semester (at least).

§

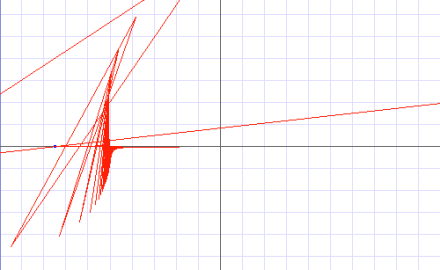

Orbits figure 1.

Basic Background #3: This is geometry on a two-dimensional plane.

That a point “escapes” when the calculation goes above 2.0 refers to its distance from the center.

Each time the calculation repeats, it generates a new point (which is plugged back in for the next repeat).

If the new point is ever further from the center than a distance of 2.0 it “escapes” (it’s been shown that in such cases, the point always diverges to infinity).

Orbits figure 1 shows the “orbits” of a point inside the set. (The original point, the one being calculated, is the blue dot in the upper right. The red lines show how it moves after each calculation.)

As you see, the orbits converge on an attractor and never escape.

Orbits figure 2 shows a point that does escape after jumping back and forth (for quite a while) on the left side:

Orbits figure 2.

When last seen, the point was headed off for the far right. You can see how it starts jumping up and down, which leads to its escape.

(BTW: This particular point [-0.75,+0.001] is interesting and will be the subject of future posts.)
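Collecting an orbit is just the escape loop with a list attached. This sketch assumes the usual z → z² + c iteration from z = 0 and native complex math; the function name, the 5000-repeat cap, and the "inside" sample point are my own picks for illustration, not the values used for the figures.

```python
def orbit(c, max_iter=5000, bailout=2.0):
    """Return the points visited, stopping once one lies beyond the bailout distance."""
    z = 0j
    points = []
    for _ in range(max_iter):
        z = z * z + c
        points.append(z)
        if abs(z) > bailout:
            break
    return points

inside = orbit(-0.1 + 0.1j, max_iter=50)   # arbitrary point well inside the main cardioid
outside = orbit(-0.75 + 0.001j)            # the point mentioned above

print(abs(inside[-1]), len(outside))
```

Plotting consecutive entries of the returned list as connected line segments gives pictures like the orbit figures.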

§

Basic Background #4: Those big bands, points far from the M-set, are regions where the number of calculation repeats is tiny.

The most distant regions are already escaped, so their count is zero (or one if all points get at least one to “prime the pump”). Slightly closer regions escape after one, two, or just a few more, repeats.

Out in the “flats” the change from, say, two to three, is really obvious, so we get those big bands. (Closer in, things get chaotic and fast-changing, and the bands aren’t so apparent.)

(Band smoothing is just a trick to eliminate the edges.)
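For the curious, one widely used smoothing formula turns the whole-number count into a fractional one, based on how far past the bailout the point landed. Whether UF6 uses exactly this is not something I can confirm, so treat it as a sketch. The large bailout of 256 is an assumption that makes the correction behave well.

```python
import math

def smooth_count(c, max_iter=1000, bailout=256.0):
    """Fractional escape count, or None if c never escapes within max_iter."""
    z = 0j
    for n in range(1, max_iter + 1):
        z = z * z + c
        if abs(z) > bailout:
            # The further past the bailout we landed, the more we subtract.
            return n + 1 - math.log(math.log(abs(z))) / math.log(2.0)
    return None

print(smooth_count(3 + 0j), smooth_count(2.5 + 0j))
```

Feed the fractional value into a palette that interpolates between neighboring colors and the hard band edges disappear.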

§ §

Okay, so what happened with my code concerns the check to see if the point has escaped. It needs to be performed after every calculation.

It looks basically like this:

```
loop (max=1000) do
    calculate(point)
    if 2.0 < abs(point) then
        exit point(ESCAPE)
    end if
end loop
exit point(NOT_ESCAPE)
```

But that simple algorithm, especially the 2.0 < abs(point) test, hides something more complicated.

Taking the absolute value of a point gives us a distance. The actual calculation being performed there is:

$$\text{dist} = \sqrt{x^2 + y^2}$$

Which, of course, is just the Pythagorean formula. We want to know if dist is greater than 2.0.

But this adds two multiplications, an addition, and (worst) a square root. (Remember: this is virtual math I have to implement, and implementing a square root routine? No thank you very much!)

So the workaround is to cheat and leverage the fact that a distance of two is guaranteed if x+y is above 4.0 (’cause its square root is 2.0).
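As a side calculation (not from the original code), here is how those quantities relate, taking x and y to be the point's signed coordinates, which is an assumption about how the test was coded. Dropping only the square root gives an exactly equivalent test, at the cost of keeping the two multiplications:

$$
\text{dist} > 2 \iff x^2 + y^2 > 4
$$

The x+y shortcut is a different animal. Because $2(x^2 + y^2) - (x + y)^2 = (x - y)^2 \ge 0$, we get:

$$
x + y > 4 \implies x^2 + y^2 \ge \frac{(x + y)^2}{2} > 8 \implies \text{dist} > 2\sqrt{2}
$$

So x+y above 4.0 does guarantee the distance is above two. The converse fails, though: the point (−3, 0) sits at distance 3 but has x+y = −3. The shortcut only catches escaped points lying in certain directions.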

§

The problem is that the obvious replacement…

if 4.0 < (point.x + point.y) then

exit point(ESCAPE)

…results in the jagged edges. D’oh!

My Plan B involved using native math, which is adequate for rendering so long as we don’t zoom in too much (or at all). The native math is much (much) faster, so I could experiment.

First I confirmed that the usual approach (taking the absolute value) gave expected results:

Complex numbers, version 1. The normal mode.

So far, so good. Using the x+y approach, I got this:

Complex numbers, version 2. Jagged edges!

So, yeah, that approach is the problem (but not the math itself; yay).

But now what? I really don’t want to have to do a distance calculation using my virtual math.

Ah, but if either x or y is above 2.0, that should work…

Complex numbers, version 3. Much better.

But the bands are a little close in.

Turns out much better if I test for x or y above 4.0:

Complex numbers, version 4. A winner!

And that I can live with. An acceptable compromise in band edges balanced by not having to do a distance calculation.

(BTW: any “bailout” value above 2.0 works — higher values just shift the banding. That’s all that happened here.)
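The four escape tests can be tried side by side with native math. This is a sketch, not the code behind the images: it assumes the usual z → z² + c iteration from z = 0, and it assumes the shortcut tests look at the raw signed x and y values (no absolute values taken). The names `count_with` and `TESTS` are made up.

```python
def count_with(test, c, max_iter=1000):
    """Repeats until test(z) first reports an escape, or None if it never does."""
    z = 0j
    for n in range(1, max_iter + 1):
        z = z * z + c
        if test(z):
            return n
    return None

TESTS = {
    "abs > 2":    lambda z: abs(z) > 2.0,                    # version 1: true distance
    "x + y > 4":  lambda z: z.real + z.imag > 4.0,           # version 2: jagged edges
    "x or y > 2": lambda z: z.real > 2.0 or z.imag > 2.0,    # version 3
    "x or y > 4": lambda z: z.real > 4.0 or z.imag > 4.0,    # version 4
}

# Three starting points, all at distance 3 from the center.
for c in (3 + 0j, -3 + 0j, -3j):
    print(c, {name: count_with(test, c) for name, test in TESTS.items()})
```

All three points are the same distance out, so the true-distance test gives each a count of 1. The shortcut tests give 2 or 3 depending on which direction the point lies in, and that direction dependence is what distorts the band edges.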

§ §

This isn’t about my code (or even the Mandelbrot, per se). It’s another exploration of algorithms.

A point illustrated here is that subtle aspects of the code can result in unexpected results in the generated reality. Code is very difficult — perhaps even impossible — to get 100% right.

Even if your code is right, your thinking can be wrong. Good code, wrong idea, it still amounts to a bug — an unexpected (and usually undesired) result.

§

Another point is the need sometimes to implement basic building blocks you then use to create a virtual reality (in this case, a rendering of the Mandelbrot set).

Here I’m implementing a limited set of operations on arbitrary precision real numbers. That’s the virtual math needed for the Mandelbrot reality.
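To give a flavor of what "virtual math" can look like, here's one simple way to get binary fractions of any size in Python: store each number as a big integer scaled by a power of two (fixed point). This is emphatically a sketch under my own assumptions, not the actual implementation; the names `FRAC_BITS`, `to_fixed`, `fx_mul`, and `step` are invented, and the step assumes the usual z → z² + c iteration.

```python
from fractions import Fraction

FRAC_BITS = 160                  # binary fraction bits (an illustrative choice)
ONE = 1 << FRAC_BITS             # the integer that represents 1.0

def to_fixed(x):
    """Convert a number to fixed point (exact for values like 1.5 or -0.75)."""
    return int(Fraction(x) * ONE)

def fx_mul(a, b):
    """Multiply two fixed-point values, dropping the extra fraction bits."""
    return (a * b) >> FRAC_BITS

def step(zx, zy, cx, cy):
    """One z -> z*z + c step on separate real and imaginary parts."""
    return fx_mul(zx, zx) - fx_mul(zy, zy) + cx, 2 * fx_mul(zx, zy) + cy

cx, cy = to_fixed(-0.75), to_fixed(0.5)
zx, zy = step(0, 0, cx, cy)      # first repeat lands on c itself
zx, zy = step(zx, zy, cx, cy)    # second repeat
print(Fraction(zx, ONE), Fraction(zy, ONE))
```

Addition and subtraction are plain integer operations; only multiplication needs the shift to put the binary point back. Note there's no square root anywhere, which is the operation the escape test has to avoid.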

The “arbitrary precision” is what drives the need. The native numbers of most computers are fixed precision. In many cases, the best precision is 53 binary bits, which amounts to 16 or so decimal digits.

Calculating the deeper parts of the Mandelbrot (which is to say: nearly all of it) requires many hundreds of decimal digits of precision. That sort of thing isn’t available normally except in highly specialized packages (such as fractal generators).

Few things in the real world need that kind of monster precision. Only in the abstract world of math can it matter. (Which, once again, is an argument that there’s something not quite right, or at least damned weird, about the real numbers.)

§

It takes 12 hours to calculate a 400×400 image because I’m using 160 bits for binary fractions. That gives me 49 decimal digits.

I kept it low to make it even possible to do the calculation of all the points. My intended use for this code is studying what happens to specific points (like the one I mentioned above).

If, for example, I used 1024 binary fraction bits (a default mode), I’d get 309 decimal digits of precision. (And very slow calculation times.) The code ultimately is limited only by system limits, but for technical reasons I’ve got it throttled at 8,192 bits, a whopping 2,467 decimal digits of precision.
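The bits-to-digits figures quoted here can be reproduced by counting the decimal digits needed to write 2 raised to the number of bits. That's one way to do the conversion, and it's an assumption on my part that it matches how the numbers were originally worked out, but it does give the same values.

```python
def decimal_digits(bits):
    """Count the decimal digits needed to write 2**bits exactly."""
    return len(str(2 ** bits))

for bits in (53, 160, 1024, 8192):
    print(bits, decimal_digits(bits))
# 53 16
# 160 49
# 1024 309
# 8192 2467
```

The rule of thumb behind it: each binary bit is worth about 0.301 decimal digits (the base-10 logarithm of 2).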

It’s a project I’ve had in mind for a long time. Doing it in Python made it easy, but slow. A proof of concept. The next step is doing it in C++ or another low-level language. That’ll make it fast.

The final step is porting that to a GPU and running pixels in parallel!

Stay precise, my friends!

As a bonus, here’s a little movie that animates orbits for a series that follows the cardioid border at different distances (the Trace value — 1.00 would be right on the border). Note how complex the orbits are at close range. (More about this another time.)

∇

January 20th, 2020 at 7:13 am

“This is me commemorating a small milestone regarding some code I’ve been working on.”

One of the things I’ve resolved is not to let pragmatism dictate what I’m interested in. Much of what I post is just me commemorating what I’ve learned, so I totally get where you’re coming from.

Unfortunately, as usual, I have nothing intelligent to say about the mathiness. 🙂

January 20th, 2020 at 10:16 am

“One of the things I’ve resolved is not to let pragmatism dictate what I’m interested in.”

Good for you! I think I’ve suffered from the opposite problem — no pragmatism at all when it comes to pursuing interests. (Hence all the largely ignored maths and baseball posts. 😀 ) My parents were both academics whose minds were fairly disconnected from the pragmatic world. I inherited all that. So it goes.

As for commemorating, yeah, it’s fun to celebrate. A lot of what I post is along the lines of, “Whoa! I think this is so cool I gotta share!” I do find reality fascinating, and it’s safe to say there is nothing that doesn’t interest me. (But one is forced to be selective due to time.)

“Unfortunately, as usual, I have nothing intelligent to say about the mathiness.”

And I tried to be so un-mathy. 😮

The Mandelbrot fascinates me for being so simple at root, yet infinitely complex. And it’s a genuinely algorithmically-defined object. Even as an abstraction, every point represents an iterated calculation — a Turing Halting problem.

It also, I think, participates in what I see as evidence that dimensions are not created equal. My exploration of rotation got me thinking it’s only a coherent concept in two and three dimensions, but it seems incoherent in four or more. (It can be described mathematically, but how coherent is the notion of rotating on two axes at once? That’s what it amounts to.)

Other things seem incoherent in one or two dimensions, which seems to make three a kind of sweet spot. It allows routing of paths (such as nerves and blood vessels or electrical circuits) which suggests Flatland creatures are impossible.

In four (or more) dimensions, the notion of “inside” becomes problematic, so how do you have living organisms with no insides?

Of course, being a 3D being with a 3D brain and 3D history, this could be bias, but at least some of it seems based on objective criteria, so who knows. But the fact is, attempts to extend the Mandelbrot into three dimensions look fascinating (you can find videos), but don’t have anywhere near the compelling attractions of the 2D version.

January 20th, 2020 at 11:04 am

The dimension constraints thing is interesting. At the very least, it may indicate that 4D or higher structures would be far stranger than they’re typically imagined. Maybe a 4D black hole is a meaningless conjecture.

Or like you said, maybe we’re just too bound up in our 3D framework.

January 20th, 2020 at 11:47 am

Or it’s possible a trained mathematician would have a deeper understanding that justifies it all.

Tesseracts are another example that, to me, suggests something about dimensions. A line (1D) is bounded by points (0D); a square (2D) is bounded by lines; a cube is bounded by squares; and a tesseract is bounded by cubes. So boundaries in 4D have to be three-dimensional objects.

In 3D, bounding surfaces are conceptually 2D (although, of course, they have a third dimension). They only extend in two dimensions. A particle near such a barrier sees a “wall” extending along, say, X and Y, which blocks movement in the Z direction. Coordinates with certain Z values would be unavailable, but X and Y are wide open.

In 4D, bounding volumes have to present a wall extending in, say, X, Y, and Z, so a particle near such a barrier would see certain X, Y, and Z, values as out of reach, but any W coordinate is available. Or any other combination — regardless, a 3D barrier only leaves one coordinate free.

In 2D, bounding lines block certain, say, X coordinates, leaving Y free. (In 1D, points block the only dimension, so zero axes of freedom!) It seems only in 3D do we get two degrees of freedom when bounded by a wall. The general rule is walls have one less dimension, and in higher dimensions this one leaves one dimension free.

But in 3D it works out to leave two, which seems significant. (Or I’ve got this all wrong. I need to think about it some more. This was a bit off the top of my head. But I do know tesseracts are a bit weird.)

January 20th, 2020 at 11:57 am

I must have something wrong here. There’s a huge discontinuity regarding 3D when charted like this:

I have to be seeing this wrong…

January 20th, 2020 at 12:00 pm

Maybe it has to do with going from a surface to a volume?

January 21st, 2020 at 10:03 am

Yeah, totally wrong! I was just considering a single wall on a single axis, so of course that’s wrong. The trick is to ask what is the “inside” of a cubic shape in different dimensions. Then it becomes obvious walls are paired and a pair exists for each axis.

A 1D line has one axis, so one pair of “walls” (two given coordinates on the X axis). The inside is the X axis between those points. Specifically {x0 < x < x1}

A 2D square has two axes and two pair of walls (lines crossing those axes at given points). The inside is the region {x0 < x < x1, y0 < y < y1}

A 3D cube has three pair of squares for walls. The inside is the region {x0 < x < x1, y0 < y < y1, z0 < z < z1}

So 4D tesseracts (and higher cubic shapes) just follow that pattern. The inside of a tesseract is a 4D region with four pairs of cubes for walls. Trying to imagine it twists the mind, but it’s sort of, kind of, almost, just barely possible if you squint your mind’s eye.

Mathematically, I’m probably wrong about 4D rotation, too, but it’s sure weird something can rotate on two axes at once. Rotating a 3D object through 4D space allows you to invert it and end up with a “mirror” version. Hard to believe that’s possible, but [shrug].
