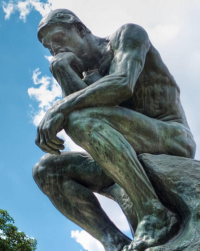

About Wyrd Smythe

The canonical fool on the hill watching the sunset and the rotation of the planet and thinking what he imagines are large thoughts.

It has been a while — three weeks exactly — since the last post here. I haven’t been idle, though; quite the opposite. I reached three-score-and-ten last fall and have taken it as a mile-marker indicating it’s time to get some stuff done.

I hibernated through the winter, but now that spring has arrived, I’m out of my sleepy cave and roaming around organizing things, getting rid of other things, keeping appointments, and (gulp) spending money.

But this Friday Notes edition has a tiny significance that demands my attention.

Continue reading

20 Comments | tags: email spam, encryption, Eric Clapton, omnivore, quantum computing, squirrels, weather | posted in Friday Notes, Movies, Music

This is the last of a series of posts about the #5 Crossbar Switch, an electro-mechanical telephone switching system that uses relay logic.

The first post touched on the evolution of telephone switching and introduced the switch fabric composed of many 10×10 crossbar switches. The second post discussed the line and marker circuits, the latter of which implement the switching logic.

This post — the point of the series — discusses the very clever design that allows a 10×10 crossbar to use only 20 relays (here called “magnets”) to control 100 potential connection points within the switch.
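The arithmetic behind that claim can be sketched as a toy model. This is only an illustration under a loud assumption: one magnet per row and one per column, so that operating a row magnet and then a column magnet closes exactly one crosspoint. The `Crossbar` class and its method names are hypothetical, and the real mechanism is the subject of the full post.

```python
# Toy model (an assumption, not the actual #5 Crossbar design):
# one magnet per row plus one magnet per column addresses every crosspoint.

class Crossbar:
    def __init__(self, rows=10, cols=10):
        self.rows = rows
        self.cols = cols
        self.closed = set()  # crosspoints currently connected, as (row, col)

    def magnet_count(self):
        # Magnets grow with rows + cols ...
        return self.rows + self.cols

    def crosspoint_count(self):
        # ... while addressable crosspoints grow with rows * cols.
        return self.rows * self.cols

    def connect(self, row, col):
        """Operate the row magnet, then the column magnet, closing one crosspoint."""
        if not (0 <= row < self.rows and 0 <= col < self.cols):
            raise ValueError("no such crosspoint")
        self.closed.add((row, col))

    def release(self, col):
        """Releasing a column magnet opens any crosspoint held in that column."""
        self.closed = {(r, c) for (r, c) in self.closed if c != col}


xbar = Crossbar()
print(xbar.magnet_count(), xbar.crosspoint_count())
```

The point of the sketch is the scaling: addressing by row and column needs a number of magnets equal to the sum of the dimensions, not their product.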

Continue reading

3 Comments | tags: relay logic, telephones | posted in Technology

In the first part of this series I touched on the evolution of (landline) telephone switching — which began with operators handling calls manually and which ultimately became the job of computers.

One of the last stages along the way was an electro-mechanical relay-logic marvel of unsurpassed engineering complexity, the #5 Crossbar Switch.

The last post introduced the switch fabric through which calls are routed. This post explores how that fabric is controlled and what happens when we pick up the phone, dial it, and are connected to the remote end.

Continue reading

1 Comment | tags: relay logic, telephones | posted in Technology

My fascination with relay-controlled systems began in the mid-1970s when I stumbled on two sets of bound documents for the PBX in an office that went out of business. The ledger-sized one (17″×11″) had circuit and logic diagrams; the page-sized one (8½″×11″) had descriptions of the diagrams and PBX operation (see SB #61: Tock for more).

I spent many hours studying those books but only ever figured out the basics. These telephone switches are among the most complex electro-mechanical machines we’ve designed.

This series of posts explores this last species of telephone switch controlled entirely by relay logic: the #5 Crossbar Switch.

Continue reading

4 Comments | tags: relay logic, telephones | posted in Technology

I started the Friday Notes series in March of 2021. (I would have mentioned the five-year anniversary in last month’s edition had I noticed it.) Since then (61 posts), I have managed to whittle down long-standing, and in some cases ancient, piles of notes.

The piles aren’t all vanquished; likely they never will be. New notes spring up like mushrooms, so it seems there will always be fodder for future posts.

Or at least endless editions of Friday Notes.

Continue reading

1 Comment | tags: gravity, Neal Asher, Project Hail Mary, weather | posted in Friday Notes

Yesterday I re-posted (with a few small edits) a Substack post from last September about my basic metaphysical stance: physicalism and realism. I’d posted here about the latter back in 2018 [see Realism], but the more recent Substack post reflects eight more years of thought on the matter.

My view has evolved some without really changing. I’m still committed to physicalism and realism. Nothing I’ve learned or heard argued has persuaded me towards idealism or anti-realism.

In this re-post I’m focusing on a couple of philosophical topics that have gotten a little under my skin:

Continue reading

4 Comments | tags: color, idealism, physicalism, realism | posted in Philosophy, Science

During the two years that I was active on Substack I never managed to quite find my “voice” there. I never fixed on exactly what I wanted my Substack blog to be beyond being just a version of this one. That ended up feeling like a dilution.

With a view towards re-concentrating my efforts, I decided to reprise (with minor edits) some of my Substack posts here. I started this last month with The Noise is Deafening, and I’ve got two more somewhat related posts for this week.

The first one is an elucidation of my basic metaphysical stance:

Continue reading

3 Comments | tags: idealism, physicalism, realism | posted in Philosophy, Science

I wrote about Project Hail Mary (the 2021 book by Andy Weir) back in February [see this post]. Last month I wrote a few paragraphs about the movie (written by Drew Goddard and directed by Phil Lord and Christopher Miller).

Project Hail Mary (the 2026 movie) was released to theaters on March 20th. I wanted to be sure to see it on the big screen, so I went to see it on the 22nd. And loved it.

I need to see it again before I can write about it, but for Sci-Fi Saturday I thought I’d post some links to videos that dig into the actual science behind the book and its adaptation.

Continue reading

2 Comments | tags: Andy Weir, Project Hail Mary, science fiction movies | posted in Movies, Sci-Fi Saturday

Last September I posted a bunch of meme images — mostly things I’d done for Substack Notes but a few just because I wanted a nicer version of something I’d seen. Other than occasional comments on interesting blog posts, I’m no longer active on Substack, but I do have some more memes…

Continue reading

Leave a comment | tags: Between the Bridge and the River, Craig Ferguson, Elizabeth Montgomery, memes, Schrödinger's Cat | posted in Society, Writing

It has been a minute or two since the last Science Notes — this subset of Friday Notes where I share bits and pieces of science news that have caught my eye.

In fact, the last of these was back in October, and the reason I didn’t post sooner was that not many articles have caught my eye since. In part because I’ve found myself skipping more and more articles due to lack of interest.

I fear it’s also in part because science has become so broken these days, so lost in fantastical speculation that I’ve begun skipping articles in which the word “might” or “could” plays a prominent role.

Continue reading

Leave a comment | tags: beer, conservative, education, liberal, Science Notes, sleep | posted in Friday Notes, Science
