Tag Archives: consciousness

I cracked up when I saw the headline: Why your brain is not a computer. I kept on grinning while reading it because it makes some of the same points I’ve tried to make here. It’s nice to know other people see these things, too; it’s not just me.

Because, to quote an old gag line, “If you can keep your head when all about you are losing theirs… perhaps you’ve misunderstood the situation.” The prevailing attitude seems to be that brains are just machines that we’ll figure out, no big deal. So, it’s certainly (and ever) possible my skepticism represents my misunderstanding of the situation.

But if so, I’m apparently not the only one…

Continue reading

41 Comments | tags: algorithm, brain, brain mind problem, computationalism, consciousness, human brain, human consciousness, human mind, Matthew Cobb, mind, Theory of Consciousness | posted in Philosophy, Science

In the last post I explored how algorithms are defined and what I think is — or is not — an algorithm. The dividing line for me has mainly to do with the requirement for an ordered list of instructions and an execution engine. Physical mechanisms, from what I can see, don’t have those.

For me, the behavior of machines is only metaphorically algorithmic. Living things are biological machines, so this applies to them, too. I would not be inclined to view my kidneys, liver, or heart, as embodied algorithms (their behavior can be described by algorithms, though).

Of course, this also applies to the brain and, therefore, the mind.

Continue reading

43 Comments | tags: algorithm, brain, brain mind problem, computationalism, consciousness, human brain, human consciousness, human mind, mind, Theory of Consciousness, theory of mind | posted in Computers

As a result of lurking on various online discussions, I’ve been thinking about computationalism in the context of structure versus function. It’s another way to frame the Yin-Yang tension between a simulation of a system’s functionality and that system’s physical structure.

In the end, I think it does boil down to the two opposing propositions I discussed in my Real vs Simulated post: [1] An arbitrarily precise numerical simulation of a system’s function; [2] Simulated X isn’t Y.

It all depends on exactly what consciousness is. What can structure provide that could not be functionally simulated?

Continue reading

7 Comments | tags: Alan Turing, algorithm, computationalism, consciousness, human brain, human consciousness, human mind, John Searle, semantic vectors, Theory of Consciousness, Turing Test | posted in Science

Philosopher and cognitive scientist Dave Chalmers, who coined the term hard problem (of consciousness), also coined the term meta hard problem, which asks why we think the hard problem is so hard. Ever since I was introduced to the term, I’ve been trying to figure out what to make of it.

While the hard problem addresses a real problem — how phenomenal experience arises from the physics of information processing — the latter is about our opinions regarding that problem. What it tries to get at, I think, is why we’re so inclined to believe there’s some sort of “magic sauce” required for consciousness.

It’s an easy step when consciousness, so far, is quite mysterious.

Continue reading

34 Comments | tags: brain mind problem, consciousness, David Chalmers, human brain, human consciousness, human mind, mind, René Descartes | posted in Philosophy

Indulging in another round of the old computationalism debate reminded me of a post I’ve been meaning to write since my Blog Anniversary this past July. The debate involves a central question: Can the human mind be numerically simulated? (A more subtle question asks: Is the human mind algorithmic?)

An argument against is the assertion, “Simulated water isn’t wet,” which makes the point that numeric simulations are abstractions with no physical effects. A common counter is that simulations run on physical systems, so the argument is invalid.

Which makes no sense to me; here’s why…

Continue reading

55 Comments | tags: algorithm, computation, computationalism, computer model, consciousness, human mind, mind, simulations, software model, Theory of Consciousness, theory of mind | posted in Computers

Over the last few days I’ve found myself once again carefully reading a paper by philosopher and cognitive scientist, David Chalmers. As I said last time, I find myself more aligned with Chalmers than not, although those three posts turned on a point of disagreement.

This time, with his paper Facing Up to the Problem of Consciousness (1995), I’m especially aligned with him, because the paper is about the phenomenal aspects of consciousness and doesn’t touch on computationalism at all. My only point of real disagreement is with his dual aspects of information idea, which he admits is “extremely speculative” and “also underdetermined.”

This post collects my reactions and responses to his paper.

Continue reading

81 Comments | tags: brain, consciousness, David Chalmers, human brain, human consciousness, human mind, mind, Theory of Consciousness, theory of mind | posted in Philosophy

Did someone say walkies?

I’m spending the weekend dog-sitting my pal, Bentley (who seems to have fully recovered from eating a cotton towel!), while her mom follows strict Minnesota tradition by “going up north for the weekend.” So I have a nice furry end to the two-week posting marathon. Time for lots of walkies!

As a posted footnote to that marathon, this post contains various odds and ends left over from the assembly. Extra bits of this and that. And I finally found a place to tell you about a metaphor I stumbled over long ago and which I’ve found quite illustrative and fun. (It’s in my metaphor toolkit along with “Doing a Boston” and “Star Trekking It.”)

It involves the idea of making a bad ROM call…

Continue reading

7 Comments | tags: consciousness, human brain, human consciousness, human mind, mind, Theory of Consciousness, theory of mind | posted in Computers, Life

Last Friday I ended the week with some ruminations about what (higher) consciousness looks like from the outside. I end this week — and this posting mini-marathon — with some rambling ruminations about how I think consciousness seems to work on the inside.

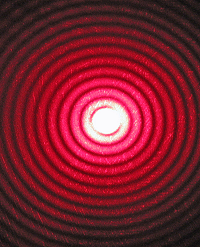

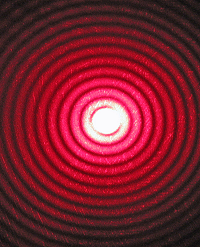

When I say “seems to work” I don’t have any functional explanation to offer. I mean that in a far more general sense (and, of course, it’s a complete wild-ass guess on my part). Mostly I want to expand on why a precise simulation of a physical system may not produce everything the physical system does.

For me, the obvious example is laser light.

Continue reading

37 Comments | tags: brain mind problem, computationalism, consciousness, human brain, human consciousness, human mind, interpretation, laser light, mind, positronic brain, Theory of Consciousness, theory of mind | posted in Computers, Philosophy, Science

I’ve been on a post-a-day marathon for two weeks now, and I’m seeing this as the penultimate post (for now). Over the course of these, I’ve written a lot about various low-level aspects of computing, truth tables and system state, for instance. And I’ve weighed in on what I think consciousness amounts to.

How we view, interpret, or define, consciousness aside, a major point of debate involves whether machines can have the same “consciousness” properties we do. In particular, what is the role of subjective experience when it comes to us and to machines?

For me it boils down to a couple of key points.

Continue reading

17 Comments | tags: algorithm, brain mind problem, computationalism, consciousness, human brain, human consciousness, human mind, interpretation, mind, positronic brain, Theory of Consciousness, theory of mind | posted in Computers, Philosophy, Science

Philosophical Zombies (of several kinds) are a favorite of consciousness philosophers. (Because who doesn’t like zombies. (Well, I don’t, but that’s another story.)) The basic idea involves beings who, by definition, [A] have higher consciousness (whatever that is) and [B] have no subjective experience.

They lie squarely at the heart of the “acts like a duck, is a duck” question about conscious behavior. And zombies of various types also pose questions about the role subjective experience plays in consciousness and why it should exist at all (the infamous “hard problem”).

So the Zombie Issue does seem central to ideas about consciousness.

Continue reading

5 Comments | tags: brain mind problem, consciousness, human brain, human consciousness, human mind, mind, Philosophical zombie, qualia, Theory of Consciousness, theory of mind | posted in Computers, Philosophy, Science
