Over the last three posts I’ve been exploring the idea of system states and how they might connect with computational theories of mind. I’ve used a full-adder logic circuit as a simple stand-in for the brain — the analog flow and logical gating characteristics of the two are very similar.

In particular I’ve explored the idea that the output state of the system doesn’t reflect its inner working, especially with regard to intermediate states of the system as it generates the desired output (and that output can fluctuate until it “settles” to a valid correct value).

Here I plan to wrap up and summarize the system states exploration.

Today is different from yesterday. My brain is different today from yesterday.

I have memories now that I didn’t have 24 hours ago. For instance, I know the Twins lost to the Angels last night. I clearly have brain states I have never had before.[1]

This introduces something I bumped into while writing the previous post. I want to call it “system states” vs. “states of the system” but that’s ambiguous (and unhelpful) terminology.

So let me explain.

§

Consider a car engine: It has a variety of parts that move; those parts have different states they can be in.[2]

But those states are strictly cyclical. We could almost think of the parts as having “orbits” they follow. If we pick any point along their movement and call it the “Start” point, they will return to that point again and again.

The system states are finite and fixed. There are only so many states (finite) and those states don’t change (fixed).

The state diagram (or table) of a system is usually finite and fixed. There are only so many states the system can be in, and in most systems, many of those states are revisited constantly.

Just as the pistons in your car engine constantly cycle through the same states.

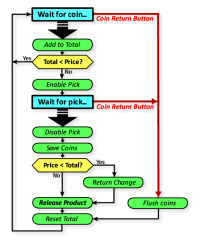

Or as the coin machine represented in the state diagram at the top of this post cycles through its states.

§

The reason the nomenclature is so unhelpful is that “states of the system” can apply to the previous section just as easily as “system states” does.[3]

What I mean by states of the system is how I have mental states today that I didn’t yesterday. These states are new, and unique, even unpredictable.

These states aren’t cyclical. I’ll never return to the exact mental states of yesterday.

(Which is a mixed blessing, is it not?)

Compare this to the car engine. Its states are fixed, but it receives new gas and air for combustion. And it wears over time, which changes its states slightly. It certainly affects its behavior.

And, obviously, a car engine can go to new places quite easily. (It’s kind of its whole role in life.)

§

The conundrum isn’t getting a system to generate new states. A random number generator does that.

Any complex system, operating in the real world, according to some set of rules (a finite, fixed set of system states), can generate new and unique states of the system (merely in virtue of moving around).
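As a rough illustration of the distinction, here is a minimal sketch of a toy engine. The four stroke names and the `metres_per_stroke` figure are assumptions invented for the example, not measurements of anything. The rules (system states) are a fixed cycle of four, yet the states of the system keep being new simply because the thing moves.

```python
from itertools import cycle, islice

# The finite, fixed, cyclical system states: a piston's four strokes.
STROKES = ("intake", "compression", "combustion", "exhaust")


def run_engine(steps, metres_per_stroke=0.5):
    """Yield (system state, state of the system) pairs.

    metres_per_stroke is a made-up figure, purely for illustration.
    """
    distance = 0.0
    for stroke in islice(cycle(STROKES), steps):
        distance += metres_per_stroke
        yield stroke, distance


history = list(run_engine(12))
system_states = {stroke for stroke, _ in history}
states_of_the_system = {distance for _, distance in history}

print(len(system_states))         # the same four strokes, revisited
print(len(states_of_the_system))  # a new position at every step
```

Twelve steps revisit the same four strokes three times over, but no position ever repeats.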

So the problem isn’t generating new states. It’s understanding how the system states work to generate new states.[4]

Trying to figure out a stateful system navigating through a complex dynamic environment can be a difficult proposition (in large part because the interactions with that dynamic environment complicate things).

The size of the state spaces involved makes it beyond formidable.

Possibly effectively impossible, but never underestimate conscious intelligence.

§

I’ve always found a little ironic the debate about consciousness being held by… instances of consciousness.[5]

It’s like choosing to write a blog post about the lack of free will. Or choosing to comment on that post.

(Been there, done that, from both sides now, and still, somehow… it does seem sometimes there is an element of determinism to it all. 😃)

Still, the state space is effectively infinite, which certainly feels pretty free.

§ § §

Another thing about state engine systems is how abstract they are. They’re kind of the opposite of functionalism.

Functional software models attempt to replicate aspects of a system’s functionality. The full-adder routines directly modeled the truth table, or the logic, or the addition process, or even the physical operation of the circuit.

But the FSM algorithm, any state-driven algorithm, is about as abstract and unrelated (directly) to the thing it represents as it’s possible for code to get.

This is exactly why I found it so useful. Its basic abstract nature makes it apply to many different kinds of input processing. Most language parsers are, effectively, FSMs.

All interpretation of the system, all its functionality, is in the table of system states.
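To make that concrete, here is a minimal sketch of a table-driven recognizer for signed integers. The names `INTEGER_TABLE`, `classify`, and `run` are hypothetical, chosen only for this example. The driver knows nothing about integers; swap in a different table and classifier and the same loop recognizes something else.

```python
def classify(ch):
    """Map a raw character onto an input class the table knows about."""
    if ch.isdigit():
        return "digit"
    if ch in "+-":
        return "sign"
    return "other"


# All the "meaning" lives here: {state: {input class: next state}}.
INTEGER_TABLE = {
    "start": {"sign": "signed", "digit": "number"},
    "signed": {"digit": "number"},
    "number": {"digit": "number"},
}
ACCEPTING = {"number"}


def run(table, accepting, classifier, text, state="start"):
    """Generic driver: follow the table one input at a time."""
    for ch in text:
        # Any input the table does not list sends us to a dead "reject" state.
        state = table.get(state, {}).get(classifier(ch), "reject")
    return state in accepting


print(run(INTEGER_TABLE, ACCEPTING, classify, "-42"))
print(run(INTEGER_TABLE, ACCEPTING, classify, "4-2"))
```

The first call accepts and the second rejects, and nothing in `run` itself explains why. You have to read the table.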

§ §

When we talk about playing back recorded mental states, we generally mean playing back the states of the system.

Like a video recording, we have a series of snapshots, ordered in time, taken at close enough intervals to preserve reality well enough to effectively recreate those moments in time.[6]

The same questions apply to any putative brain recording. (Not to mention questions about data size, format, and storage. Let alone how it’s played back.)

And note that none of this is related to the brain’s actual system states, only the captured states of the system.

As mentioned last time, these recorded states of the system don’t offer interaction capabilities any more than a video recording does.

Interaction requires knowing the system states so new states can be generated. We’d need to know how to build the car engine, not play back a movie of it operating.
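A toy sketch of the difference, using a hypothetical push-button light (the `RULES` table and both function names are invented for the example): a recording is just a list of snapshots, and it cannot respond to anything the original run didn't include.

```python
# System states: the rules. {state: {input: next state}}
RULES = {"off": {"press": "on"}, "on": {"press": "off"}}


def record(rules, state, inputs):
    """Run the real system, snapshotting each state of the system in order."""
    snapshots = [state]
    for item in inputs:
        state = rules[state].get(item, state)  # unknown inputs change nothing
        snapshots.append(state)
    return snapshots


def play_back(snapshots, inputs):
    """Replay a recording. The inputs are ignored; that is the point."""
    return list(snapshots)


recording = record(RULES, "off", ["press", "wait", "press"])
new_inputs = ["wait", "wait", "wait"]

print(play_back(recording, new_inputs))    # same movie, whatever we do
print(record(RULES, "off", new_inputs))    # the rules respond to the new inputs
```

Only the version holding the rules produces a result that depends on what we do to it.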

§

The problem is that figuring out system states can be a challenge.

In part because system states are so abstract. States (of either kind) are such tiny parts of the system, and there’s no organizational hierarchy, no levels of complexity.

The states comprise the whole in one jump.

(It’s how you get Pixies. If the states exist as a sequence, the whole exists. The other question about Pixies is: Which states are we talking about? The system states that comprise the Pixie itself experiencing… being in a rock wall, I guess. Or the states of a Pixie dreaming of riding unicorns? Or, equally, of being chased and eaten by dragons.)

And to the extent the brain is an analog system (like a car or full-adder), there is the additional question of how often to take snapshots. What exactly is a brain state (of either kind)?

§

You can skip the rest of the post if you want. It’s just show-and-tell.

I wanted to show you what a state table for the coin machine state diagram up top might look like:

"Wait for coin":

(COIN) "Check Price" {Add to Total}

(Coin Return) "Wait for coin" {Flush Coins}

(otherwise) "Wait for coin" {Pause}

"Check Price":

(Total < Price?) "Wait for coin" {}

(otherwise) "Wait for Pick" {Enable Pick}

"Wait for Pick":

(Pick) "Check Change" {Save Coins; Disable Pick}

(Coin Return) "Wait for coin" {Flush Coins; Disable Pick}

(otherwise) "Wait for Pick" {Pause}

"Check Change":

(Price < Total?) "Release Product" {Return Change}

(otherwise) "Release Product" {}

"Release Product":

() "Wait for coin" {Release Product; Reset Total}

Functions:

{Add to Total}

{Reset Total}

{Flush Coins}

{Save Coins}

{Return Change}

{Enable Pick}

{Disable Pick}

{Release Product}

{Pause}

Inputs/Events:

(COIN)

(Coin Return Button)

(Pick Product Buttons)

This is very high-level, and assumes a great deal, but it provides a flavor of what I mean by system states. (Compare this with the diagram at the post’s top.)

There are five system states, each with a double-quoted name. Following each state name is a list of links to possible next states.

Moving from state to state requires an input or event to match a link on the list. The lists have an “otherwise” last item in case an input or event doesn’t find a match.

Each link on the list has three fields. The first, in parentheses, is the condition an input or event must match. The second, in double-quotes, is the name of the next state. The third, in braces, is a function to call if this link is taken.

Note that an empty condition matches anything. Also note that links don’t always need to perform a function; sometimes they just get the system to the next state.

To indicate the work involved, I’ve also listed the functions that need to be implemented. Their behaviors should be implicit in their names. I’ve also listed the I/O events the system needs to handle.
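For the curious, here is one way the table might be turned into running code. This is only a sketch under several assumptions: coins arrive as a `"COIN"` event carrying a value, any product button is a plain `"Pick"` event, the functions just write to a log instead of driving hardware, and {Flush Coins} is assumed to also clear the total. The names `CoinMachine`, `TABLE`, `WAITING`, `on`, and `otherwise` are invented for the example.

```python
WAITING = {"Wait for coin", "Wait for Pick"}  # states that pause for input


def on(name):
    """Condition that matches one named input or event."""
    return lambda machine, event: event == name


def otherwise(machine, event):
    """Catch-all condition; also stands in for the empty () condition."""
    return True


# Each link: (condition, next state, names of functions to call).
TABLE = {
    "Wait for coin": [
        (on("COIN"), "Check Price", ["add_to_total"]),
        (on("Coin Return"), "Wait for coin", ["flush_coins"]),
        (otherwise, "Wait for coin", ["pause"]),
    ],
    "Check Price": [
        (lambda m, e: m.total < m.price, "Wait for coin", []),
        (otherwise, "Wait for Pick", ["enable_pick"]),
    ],
    "Wait for Pick": [
        (on("Pick"), "Check Change", ["save_coins", "disable_pick"]),
        (on("Coin Return"), "Wait for coin", ["flush_coins", "disable_pick"]),
        (otherwise, "Wait for Pick", ["pause"]),
    ],
    "Check Change": [
        (lambda m, e: m.price < m.total, "Release Product", ["return_change"]),
        (otherwise, "Release Product", []),
    ],
    "Release Product": [
        (otherwise, "Wait for coin", ["release_product", "reset_total"]),
    ],
}


class CoinMachine:
    def __init__(self, price):
        self.price, self.total, self.coin = price, 0, 0
        self.pick_enabled = False
        self.state = "Wait for coin"
        self.log = []  # what the hardware would have been told to do

    # The functions named in the table.
    def add_to_total(self):
        self.total += self.coin

    def reset_total(self):
        self.total = 0

    def flush_coins(self):
        self.log.append(f"flush {self.total}")
        self.total = 0  # assumption: flushing also clears the total

    def save_coins(self):
        self.log.append("save coins")

    def return_change(self):
        self.log.append(f"change {self.total - self.price}")

    def enable_pick(self):
        self.pick_enabled = True

    def disable_pick(self):
        self.pick_enabled = False

    def release_product(self):
        self.log.append("release product")

    def pause(self):
        pass

    def step(self, event=None, coin=0):
        """Feed one input or event, then follow links until the machine waits."""
        self.coin = coin
        while True:
            for condition, next_state, functions in TABLE[self.state]:
                if condition(self, event):
                    for name in functions:
                        getattr(self, name)()
                    self.state = next_state
                    break
            event = None  # an input is consumed by the first link it takes
            if self.state in WAITING:
                return self.state


machine = CoinMachine(price=75)
machine.step("COIN", coin=50)  # not enough yet
machine.step("COIN", coin=50)  # enough: pick buttons enabled
machine.step("Pick")           # vend, make change, start over
print(machine.log)
```

Notice that the `step` loop is tiny and generic. Everything specific to a coin machine sits in `TABLE` and in the nine little functions.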

§

The point is to show how involved and abstract an FSM is from the actual process. It consists of a set of separate, unordered, linked states that, combined, comprise a functioning system.

It’s an incredible way to program a lot of simple to medium-complex things, but once a system has lots and lots of states… yikes!

Stay stateful, my friends!

[1] Sad how many of those daily brain states we can’t hang onto for long. Even the really good ones can get lost over time. Fun having them, though!

[2] Here we’ll ignore that engine parts move in an analog manner. In a truly analog system, states are snapshots of ‘where things are’ from instant to instant because analog systems don’t always have formal states.

[3] These labels are entirely arbitrary and could just as readily be used vice versa. In fact, I decided to reverse my use of them while writing the post.

[4] One issue with the brain’s system states is the roughly one-hundred-billion neurons with, on average, 7000 connections to other neurons. Even the synapses themselves have myriad individual states.

In terms of just the neurons, if we grant neurons just 10 possible states from not firing to firing max rapidly (and 10 may be low-balling it), then brains have a theoretical system state space size of:

$10^{100{,}000{,}000{,}000}$

Which is a pretty big number. (A one with a hundred-billion zeros after it.) If we consider the state space of the (let’s call it 500 trillion) synapses and grant them the same low-ball 10-level variation, the state space size is:

$10^{500{,}000{,}000{,}000{,}000}$

Which is a considerably larger number. (And the real numbers are likely much higher because neurons and synapses likely have more than just 10 levels of variation.)
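The arithmetic behind numbers like these is simple: a system of N independent units with L levels each has L^N combined states, so the power of ten is N × log10(L). With 10 levels that exponent is just the unit count. A small sketch (the function name is invented for the example) shows how quickly the exponent grows if the levels are less low-balled:

```python
import math


def state_space_exponent(units, levels):
    """Return log10 of levels**units: the power of ten of the state space."""
    return units * math.log10(levels)


print(state_space_exponent(3, 10))     # three 10-level units: a thousand states
print(state_space_exponent(1000, 10))  # at 10 levels, exponent equals unit count
print(state_space_exponent(1000, 20))  # doubling the levels adds about 30%
```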

[5] I’m especially amused by illusionism to the extent it suggests I’m having the subjective experience of having an illusion of subjective experience.

To the extent it suggests our subjective experience of consciousness isn’t the complete, or even terribly accurate, picture, this seems fairly obvious.

[6] Note all the caveats: How often do we save those states? How much data must we capture each time? How do we store the data? How do we play it back?

[?] Lord help me, but I do love footnotes!

∇
