I left off last time talking about intermediate, or transitory, states of a system. The question is, if we only look at the system at certain key points that we think matter, do any intermediate states make a difference?

In a standard digital computer, the answer is a definite no. Even in many kinds of analog computers, transitory states exist for the same reason they do in digital computers (signals flowing through different paths and arriving at the key points at different times). In both cases they are ignored. Only the stable final state matters.

So in the brain, what are the key points? What states matter?

Once again (for the last time, most likely), I’ll refer you to my posts about the full-adder code simulations. In particular today:

- Full Adder Simulation V1.0, and

- Full Adder Simulation V2.0, and

- Full Adder Redux (code for discussion below)

The first is a simulation of the common full-adder logic gate circuit:

Circuit #1: Two XOR gates, two AND gates, and one OR gate.
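As a minimal sketch of Circuit #1 (this is not the code from the linked posts, and the function and variable names are assumptions made for illustration), the five gates can be written directly with Python's bitwise operators:

```python
def full_adder_gates(a, b, ci):
    """Full adder from two XOR, two AND, and one OR gate (bits are 0 or 1)."""
    x1 = a ^ b      # XOR gate 1: partial sum of a and b
    s = x1 ^ ci     # XOR gate 2: final sum bit
    c1 = a & b      # AND gate 1: carry generated by a and b
    c2 = x1 & ci    # AND gate 2: carry passed along from the carry-in
    co = c1 | c2    # OR gate: carry out
    return s, co


# Check every input combination against ordinary addition.
for a in (0, 1):
    for b in (0, 1):
        for ci in (0, 1):
            s, co = full_adder_gates(a, b, ci)
            assert a + b + ci == 2 * co + s
```

Each line stands in for one gate, so the function computes its answer in one pass with no notion of time or settling.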

The second is a simulation of a NOR-gate-only circuit:

Circuit #2: Just nine NOR gates.
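A comparable sketch for Circuit #2 follows. The wiring is an assumption: it is a common nine-NOR full-adder arrangement, and the gate numbering (g1 through g9) is chosen for illustration rather than copied from the linked posts.

```python
def nor(x, y):
    """Two-input NOR gate on 0/1 values."""
    return int(not (x or y))


def full_adder_nor(a, b, ci):
    """Full adder built only from nine NOR gates (assumed wiring)."""
    g1 = nor(a, b)
    g2 = nor(a, g1)
    g3 = nor(b, g1)
    g4 = nor(g2, g3)    # XNOR of a and b
    g5 = nor(g4, ci)
    g6 = nor(g4, g5)
    g7 = nor(g5, ci)
    g8 = nor(g6, g7)    # sum output S
    g9 = nor(g1, g5)    # carry output Co
    return g8, g9


# Same check as for any full adder: the outputs must agree with a + b + ci.
for a in (0, 1):
    for b in (0, 1):
        for ci in (0, 1):
            s, co = full_adder_nor(a, b, ci)
            assert a + b + ci == 2 * co + s
```

The mechanism is quite different from the XOR/AND/OR version, yet the outputs are the same for every input.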

The second circuit is more of a low-level simulation. The level below this involves simulating transistors, and this circuit is something of a bridge in that direction.

§

The key point about these circuits is that we only care about their inputs and outputs.

As the examples have shown, how we accomplish the correct outputs for given inputs varies and doesn’t matter in the context of a full-adder.

Its only goal is to add bits and generate a correct output.

Mechanism and platform don’t matter (other than the inputs and outputs needing to interface with any larger system).

In the circuits above, there are intermediate states between applying a given input and valid changes appearing on the output. In some cases, the intermediate states can cause invalid outputs to momentarily appear.

To deal with this, digital computers latch inputs and outputs with the system clock.

Essentially, during the clock “tick,” the inputs are latched and stable so changes percolate through to outputs. On the clock “tock,” the system latches the output states on the assumption the outputs have “settled” down by then.

Because of this latching, digital computers march with all system states in lockstep with the system clock.[1]
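The latching idea can be sketched in a few lines. This is a toy model, not real hardware behavior: the `Latch` class, its `d` and `q` attributes, and the glitchy values are all hypothetical names and data chosen for illustration.

```python
class Latch:
    """Holds the value captured at the last clock edge and ignores changes in between."""

    def __init__(self, value=0):
        self.d = value  # input side: free to wiggle between clock edges
        self.q = value  # output side: changes only when clock() is called

    def clock(self):
        """Capture whatever is on the input right now."""
        self.q = self.d
        return self.q


out = Latch(0)
seen = []
for glitchy in (1, 0, 1, 1):    # hypothetical intermediate values while the logic settles
    out.d = glitchy
    seen.append(out.q)          # downstream logic still sees the old, stable value
out.clock()                     # the clock edge: only now does the new value appear
print(seen, out.q)
```

Anything reading `q` never sees the wiggling on `d`, which is the sense in which the intermediate states are ignored.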

§

With analog computers a similar principle can be in play. A good metaphor is to imagine the technician taking a voltage reading.

The tech applies the test leads and then waits for the meter to settle down and provide a stable reading. (Not unlike when we step on the scale to weigh ourselves.)

Analog computers often do the same thing. Various signals need a chance to flow through the system and stabilize.

In either case, ignoring intermediate states is just a matter of waiting for a stable output before taking a reading.
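Waiting for a stable reading can be sketched as below. The function name, the tolerance, and the made-up meter samples are assumptions for illustration; a real instrument would have its own settling criteria.

```python
def read_when_stable(samples, tolerance=0.01, needed=3):
    """Return the first sample that has stayed within `tolerance` of its
    predecessor for `needed` consecutive steps, or None if that never happens."""
    run = 0
    previous = None
    for value in samples:
        if previous is not None and abs(value - previous) <= tolerance:
            run += 1
            if run >= needed:
                return value
        else:
            run = 0     # still moving: start counting again
        previous = value
    return None


# Hypothetical meter approaching 5.0 volts: each sample closes half the remaining gap.
readings = [5.0 * (1 - 0.5 ** n) for n in range(20)]
stable = read_when_stable(readings)
print(stable)
```

The early samples are simply discarded; only the settled value is treated as the reading.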

§

In digital computers, the intermediate states are noise to be ignored (that must be ignored if the computer is to work correctly).

Background workings to be ignored might be a better way to put it. The “noise” is actually the sound of the system working.

Our own consciousness also isn’t aware of the “noise” of our brains working. In many regards, it’s not aware of any of its workings except at the highest level![2]

The question is whether those intermediate states matter. And if so, which intermediate states might matter? Just how low-level might we have to go to capture the system?

In particular, does a simulation have to go below the level of neurons and synapses? Are there things happening at the cellular level that matter? Atomic? Quantum??

But states that matter by definition aren’t “intermediate” from the point of view of a complete capture of necessary system states.

So the real question is: What brain states matter for consciousness?

§

If a model of neurons is too simple, if it doesn’t take the states that matter into account, the model might not work.

¶ Maybe the virtual brain is seen to function biologically, but is otherwise inert. Neurons operate, as such, but the system acts like a brain in a deep coma.

¶ Maybe it will show signs of consciousness, speech, awareness, but it will be raving, incoherent, or otherwise clearly insane. Or just a gibberish mind.

¶ Maybe, given the subtlety of the human mind and all the ways it breaks, a consciousness exists, but it’s not quite sane. (Or its sanity decreases over time as computational errors accumulate.)

The point is, the failure modes are myriad, and the “sweet spot” of consciousness may require specific conditions to occur.

(Like laser light does.)

§

Another huge question for computationalism is: Is consciousness in the outputs or in the process?

I’ll return to that topic when I talk more about computationalism, but the question involves exactly what a simulation’s numeric output means.

If consciousness lies in the operation of the machine (as laser light does), then no simulation will ever work.

Exactly as no simulation can produce coherent laser light photons.

§

Computers, marching in lockstep, use a series of checkpoints.

The general idea is that what happens between checkpoints doesn’t matter (and could be anything). Only the state of the system at the checkpoint matters.

The inputs and outputs to the full-adder are such checkpoints. The logical values there have to be correct. That is, they have to be stable and correct when the system “looks” at them.

But in a real-world physical system, intermediate states would be a problem.

Imagine if your car engine or drive train did unexpected things in between cranking out revolutions.[4]

The way to look at it is that all states in real-world systems matter. In a sense, the concept of intermediate states doesn’t apply.

§

Here’s an example of intermediate states (see Full Adder Redux for the Python simulation code):

    1: (A) 0 -> 1
    2: G1: 1 -> 0 [1, 0]
    3: G3: 0 -> 1 [0, 0]
    4: G4: 1 -> 0 [0, 1]
    4: (Co) 0 -> 1
    5: G5: 0 -> 1 [0, 0]
    5: G6: 0 -> 1 [0, 0]
    6: G6: 1 -> 0 [0, 1]
    6: G7: 1 -> 0 [1, 0]
    6: G9: 1 -> 0 [0, 1]
    7: G8: 0 -> 1 [0, 0]
    7: (Co) 1 -> 0
    8: (S) 0 -> 1

For the all-NOR circuit above, assuming it is in a stable state with all inputs set to 0, the list above shows the changes that occur when the a input is changed to 1.

In the list, each line starts with a clock tick number followed by a name and a transition. Input and output names are in parentheses; the G# names are the nine NOR gates. Some clock ticks have multiple events.

For the gates, the two numbers in square-brackets are the input values.

The bottom line (literally) is that it takes eight clock ticks for a change of input a to trickle through the circuit to the S output.

Note how the Co output gets set to 1 in tick #4 but is reset to 0 in tick #7. That’s an output that’s briefly in a false state.

Here’s another way to see the changes passing through the network:

    0: [1,0,0, 0,0,0,0,0,0,0,0,0, 0,0]
    1: [0,0,0, 1,0,0,0,0,0,0,0,0, 0,0]
    2: [0,0,0, 0,0,1,0,0,0,0,0,1, 0,0]
    3: [0,0,0, 0,0,0,1,0,0,0,0,0, 0,1]
    4: [0,0,0, 0,0,0,0,1,1,0,0,0, 0,0]
    5: [0,0,0, 0,0,0,0,0,1,1,0,1, 0,0]
    6: [0,0,0, 0,0,0,0,0,0,0,1,0, 0,1]
    7: [0,0,0, 0,0,0,0,0,0,0,0,0, 1,0]
    8: [0,0,0, 0,0,0,0,0,0,0,0,0, 0,0]

Each line shows a list (vector) of bits where each bit represents whether the respective gate changed during this tick (numbers along the left).

The simulation mechanism cycles the clock ticks until this vector is all zeros (as at the bottom). That’s how it knows all changes have percolated through.

Another vector lets the software report on the gate states at each tick (literally capturing the states of the system):

    0: [0,0,0, 1,0,0,1,0,0,1,0,0, 0,0] -- start states
    1: [1,0,0, 1,0,0,1,0,0,1,0,0, 0,0]
    2: [1,0,0, 0,0,0,1,0,0,1,0,0, 0,0]
    3: [1,0,0, 0,0,1,1,0,0,1,0,1, 0,0]
    4: [1,0,0, 0,0,1,0,0,0,1,0,1, 0,1]
    5: [1,0,0, 0,0,1,0,1,1,1,0,1, 0,1]
    6: [1,0,0, 0,0,1,0,1,0,0,0,0, 0,1]
    7: [1,0,0, 0,0,1,0,1,0,0,1,0, 0,0]
    8: [1,0,0, 0,0,1,0,1,0,0,1,0, 1,0] -- end states

In both cases, the three input bits, a, b, and Ci, are on the far left, and the two output bits, S and Co, are on the far right. The nine gates are in between.

The two lists, especially the first, show how changes flow through the system given a single input change.

The events for the other two inputs changing look similar.
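For readers who want to experiment, here is a minimal sketch of the "cycle until nothing changes" idea. It is not the code from Full Adder Redux. The netlist is an assumed nine-NOR wiring, the names `NETLIST`, `step`, and `settle` are hypothetical, and every gate is given a delay of exactly one tick. It also reads the gates directly rather than modelling separate input and output stages, so its tick numbers will not line up exactly with the lists above.

```python
def nor(x, y):
    """Two-input NOR gate on 0/1 values."""
    return int(not (x or y))


# Assumed wiring: gate name -> its two sources (an input name or another gate).
NETLIST = {
    "G1": ("a", "b"),
    "G2": ("a", "G1"),
    "G3": ("b", "G1"),
    "G4": ("G2", "G3"),
    "G5": ("G4", "ci"),
    "G6": ("G4", "G5"),
    "G7": ("G5", "ci"),
    "G8": ("G6", "G7"),  # drives S
    "G9": ("G1", "G5"),  # drives Co
}


def step(state, inputs):
    """One clock tick: every gate recomputes from the previous tick's values."""
    signals = {**inputs, **state}
    return {g: nor(signals[x], signals[y]) for g, (x, y) in NETLIST.items()}


def settle(state, inputs, max_ticks=50):
    """Tick until no gate changes; return the list of states seen along the way."""
    history = [dict(state)]
    for _ in range(max_ticks):
        nxt = step(state, inputs)
        if nxt == state:        # the "changes vector" is all zeros
            return history
        state = nxt
        history.append(dict(state))
    raise RuntimeError("circuit did not settle")


# Power on with all inputs 0, then flip input a and watch the change trickle through.
start = settle({g: 0 for g in NETLIST}, {"a": 0, "b": 0, "ci": 0})[-1]
trace = settle(start, {"a": 1, "b": 0, "ci": 0})
for tick, state in enumerate(trace):
    print(tick, [state[g] for g in NETLIST])
```

In this sketch the carry gate (G9) goes to 1 for a few ticks before dropping back to 0, the same kind of briefly false output described above, and only the last entry of `trace` is the one a latched system would read.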

§

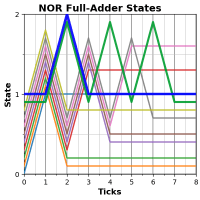

The image at the top of the post shows a graphical representation of the NOR gates and outputs.

The chart shows the “power on” intermediate states:

All gates begin set False. But NOR gates invert, so the first tick sees all the inputs at zero, so all the gates are set True. You can see that first big spike.

On further ticks the logic trickles through until, seven clock ticks later, all changes have propagated. The changes vector looks a little different on this one:

    1: [0,0,0, 1,1,1,1,1,1,1,1,1, 0,0]
    2: [0,0,0, 0,1,1,1,1,1,1,1,1, 1,1]
    3: [0,0,0, 0,0,0,1,1,1,1,1,0, 1,1]
    4: [0,0,0, 0,0,0,0,1,1,1,1,0, 1,0]
    5: [0,0,0, 0,0,0,0,0,0,1,1,0, 1,0]
    6: [0,0,0, 0,0,0,0,0,0,0,1,0, 1,0]
    7: [0,0,0, 0,0,0,0,0,0,0,0,0, 1,0]
    8: [0,0,0, 0,0,0,0,0,0,0,0,0, 0,0]

As upstream gates settle, fewer and fewer changes propagate. Looks like a flood more than the signal from the above example.

§

The bottom line is that a state-based simulation needs to pay attention to the level of system state that matters.

A full-adder doesn’t care about intermediate states, because only the outputs matter. Crucially, the intermediate states are not reflected in the full-adder abstractions (the truth table, the logical expression, or the FSM).

They do appear in the addition model (as overflow and carry), and they are implicit in the simulation.

In terms of outputs, these states don’t matter. In terms of implementations, those states are vital parts of the operation.
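To make the contrast concrete, here is a small sketch of two of those abstractions: the truth table and the logical expression. The names `TRUTH_TABLE` and `full_adder_expr` are assumptions chosen for illustration. Neither form has any notion of time, so there is nowhere for an intermediate state to appear.

```python
# Truth table: (a, b, ci) -> (s, co), generated from ordinary addition.
TRUTH_TABLE = {
    (a, b, ci): ((a + b + ci) % 2, (a + b + ci) // 2)
    for a in (0, 1)
    for b in (0, 1)
    for ci in (0, 1)
}


def full_adder_expr(a, b, ci):
    """Logical expression form: sum is a three-way XOR, carry is a majority vote."""
    s = a ^ b ^ ci
    co = (a & b) | (a & ci) | (b & ci)
    return s, co


# Both abstractions agree on all eight rows.
for row, expected in TRUTH_TABLE.items():
    assert full_adder_expr(*row) == expected
```

A lookup in `TRUTH_TABLE` goes straight from inputs to outputs, while a gate-level simulation of the same adder has to pass through every transitory state on the way.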

The question for consciousness is, which parts matter?

Stay intermediate, my friends!

[1] That clock can be as fast or slow as desired within the physical limits of the circuitry! (At very high speeds, RF coupling and excessive current flow become an issue.)

[2] Computers also have several levels of ignorance. The logic can’t see the wiring. The operating system can’t see the logic gates. It’s not uncommon for large parts of high-level software to be “blind” to parts of itself.[3]

[3] One of the things I loved about Java over C and C++ was its reflectiveness. Java could see itself. It was one of the first business languages I worked with that could.

[4] At the quantum level, it does! Virtual particles are forming and canceling all the time everywhere; your whole car does weird things down in the interstices of reality in between being your car.

Fortunately, reality has its own checkpoints where things have to look normal.

∇

May 14th, 2019 at 5:06 am

Does the brain utilize electrical impulses? Are they cyclical? If so, isn’t the brain digital rather than analog?

May 14th, 2019 at 11:22 am

“Does the brain utilize electrical impulses?”

It uses bio-chemical “electrical” impulses that work quite differently than electron current in metallic conductors, but, yes, it can be seen as sending electrical impulses. Ionic voltage differences are a big part of how nerve channels work.

But so is chemistry, which we don’t particularly see in metal wires.

“Are they cyclical?”

I’m not sure what you’re asking. Short term or long term? Small scale or large scale?

“If so, isn’t the brain digital rather than analog?”

Computationalists would certainly argue it is. I argue it isn’t, not really.

Neurons either, as Yoda said, “do or not do” — they fire or don’t fire, and that part does seem “digital” to many. But comparing them to digital on-off states is (IMO) an error on several levels.

Neurons fire on-off-on-off in a pulse train, and the frequency of that pulse train carries information. Neurons fire faster to communicate a stronger signal (not unlike FM radio), so they are speaking in analog.

And I’ve read about the idea that the shape of the individual pulses might matter. That how the signal rises from off to on carries information. All physical systems (even digital computers) are ultimately analog in their basic physics, and the brain, as a natural evolved physical organ, is as analog as your heart (which pulses!) or your kidneys.

So it makes sense it would evolve to use analog features of its environment, is what I’m saying, with regard to pulse shape, pulse frequency, and possibly even the self-generated EMF of the local environment.

Brains evolved in the noise of their own operation, so it’s possible that “noise” is part of how they work.

All-in-all, I really do think the program for computationalists is looking gloomy.

May 14th, 2019 at 11:25 am

I suppose that if the brain could be considered digital, so could many other things, e.g., a life form is either living or not living.

May 14th, 2019 at 11:34 am

Exactly! My digital car is either on or not on, either in the garage or not. (And my digital dog is either barking or not barking, sleeping or not sleeping. 🙂 )

I had a long debate with Mike about digital (or discrete is maybe a less conflating word) versus analog. One can find discrete and analog properties in just about anything.

What matters is how the system behaves as a system. Compare my signaling you via a rope attached to you that I tug — you directly feel the physical force I apply as the signal — versus my signaling you via a text message — an indirect, staged, informational transfer not at all connected with the original forces I applied (except in the most abstract sense of my pressing keys generating signals blah, blah, blah).

May 14th, 2019 at 3:13 pm

“Is consciousness in the outputs or in the process?”

This gets back to the discussion on my post. If the outputs can be successfully reproduced, do we have any way to know if the variance in process mattered? Unless the copied/ported process fails to produce the necessary outputs, I don’t think we would.

If the output is not quite sane, along the lines of the Westworld scenarios, then I think we’d be very close. So close that getting the rest of the way would involve debugging the copied version rather than figuring out what didn’t make it from the original. In that sense, I thought the Westworld scenario made for good drama, but the idea they’d be stuck like that for decades seems unlikely, particularly with sane AIs walking around with the same capabilities.

Ultimately I think this comes down to whether you think the brain is an information processing system, or a soul generator. An advanced, evolved information processing system may be extremely difficult to copy, but eventually achievable. A soul generator (or channeler) would never be.

May 14th, 2019 at 6:09 pm

“If the outputs can be successfully reproduced, do we have any way to know if the variance in process mattered?”

No, none. And if the outputs are all that matters, then varying the process doesn’t matter if it produces identical outputs.

That’s been a key theme these past three posts. The sticking point, of course, is: Are the outputs all that matters?

In a full-adder, absolutely yes. In just about any abstract computation (that is to say, any CS computation), also absolutely yes. The outputs are all that matters.

One important point: Assuming the outputs are all that matter for computing our minds assumes our minds are an abstract computation in the first place.

“If the output is not quite sane, along the lines of the Westworld scenarios, then I think we’d be very close.”

That’s a good point. If we did get that close, how could we not almost be there? I completely agree the right path would be debugging!

[As an aside, as a software designer, I’m a little different than most in seeing code as a living thing that evolves. I almost never “kill” it by reverting to an earlier version if I find myself down a blind alley. I may slash and burn, but I always move forward with what I’ve got. It was contact with the Lisp and Smalltalk communities that inspired that outlook. It’s a common view for them, especially Smalltalk.]

I also quite agree it was contradictory in-story. Worse, they had a working copy of James Delos running on The Forge. There is some comment in-story about how being in the real world is the problem… As I’ve said, I was very disappointed in the world-building of season two compared to season one.

“Ultimately I think this comes down to whether you think the brain is an information processing system, or a soul generator.”

If I understand your views, a laser would fall under information processing system? As far as generating photons versus generating some magical m-ghost, I’d agree. On that account, the mind is an IP system.

I’d agree with your conclusion, too. Maybe with a tiny caveat that a Positronic brain might capture a putative m-ghost assuming that’s what a human brain does.

About the only m-ghost I can conceive is, as you’ve said, essentially something like a religious conception of a soul. (There are a variety of different takes, some very personal, some more abstract.)

So, unless “god” (or whomever or whatever) is a biology bigot (which might be the case; it’s not My brand) maybe the right sort of container (complex interconnected etc) captures an m-ghost.

Good story material, anyway. 😀

May 14th, 2019 at 7:29 pm

“If I understand your views, a laser would fall under information processing system?”

Actually, I thought the point of the laser analogy (along with the other hard drive noise one you’ve used before) was that something in addition to information was being generated. I took it to be positing a byproduct of the processing that happens in the brain. Maybe not an m-ghost necessarily, but perhaps an electromagnetic, quantum, or protoplasmic ghost? Or have I been misunderstanding it?

On making a good story, John Scalzi has mind transfers in his Old Man’s War universe. It’s implied that you can’t make multiple copies, but you can transfer someone’s consciousness from an old body to a new one. I don’t remember how explicit this was, but it meant soldiers in combat bodies could still be killed. That seems like a universe with m-ghosts in it.

May 14th, 2019 at 9:01 pm

“Actually, I thought the point of the laser analogy (along with the other hard drive noise one you’ve used before) was that something in addition to information was being generated.”

True, but given our recent discussions, and the choice between IP system or soul generator, and knowing what you mean by “soul,” the only choice open was IP system.

But yes, I do think there’s a middle choice: m-ghosts, s-ghosts, and IP systems.

(I’m not sure what the hard drive noise analogy is? I don’t recall making it.)

“I took it to be positing a byproduct of the processing that happens in the brain.”

Correct. An emergent phenomenon. Not a field or a force, but a behavior such a system can achieve. In the case of lasers, the result, the byproduct of the behavior, is laser light. In the brain, consciousness.

“Maybe not an m-ghost necessarily, but perhaps an electromagnetic, quantum, or protoplasmic ghost?”

None of the above. What I’m talking about has no more substance than the appearances we see in flocks of birds or schools of fish. An emergent behavior, not a substance of any kind.

The word ghost might suggest some sort of mysterious substantial being. I use it more in the sense that a mirage or optical illusion creates a “ghost” image.

“It’s implied that you can’t make multiple copies, but you can transfer someone’s consciousness from an old body to a new one.”

It’s a theme that’s been around the block a few times. Very early Piers Anthony has a lot of mind-body swapping, and it’s a big theme of Jack Chalker’s. And I just read James Nicoll’s review of Robert Sheckley’s Mindswap. I know I have that paperback somewhere; I recognize the cover! I always liked mindswap stories!

May 15th, 2019 at 8:09 am

“An emergent behavior, not a substance of any kind.”

Okay, then I’ve definitely had the wrong takeaway from the laser analogy. I thought the whole point was that it was not producing information, but some other kind of output (i.e., generating something like a soul). (I won’t say “physical” output because I didn’t get the idea that it necessarily was, and information output is a type of physical output anyway.)

But I wonder then how the laser analogy contradicts the idea of simulating the mind in a computer system, other than implying how hard it will be?

May 15th, 2019 at 11:27 am

“I thought the whole point was that it was not producing information, but some other kind of output (i.e., generating something like a soul).”

As you go on to say, information output is a kind of physical output, which is why, if I’m remembering this right, I agreed it fit under your physicalism umbrella.

This is why I feel interpretation makes things so sticky. Information is entirely abstract, but any actual instance of information is necessarily physical. So how do we interpret laser photons? Photons are matter, so laser light is a new substance — not to reality but to the lasing material which is emitting them. And one can see them as carrying information about that lasing material.

You’re correct the analogy isn’t about information, per se, but it’s definitely not about producing an m-ghost, which is why I thought it had to fit under IP. It is an analogy towards producing an s-ghost, though.

[An m-ghost is what I’m calling Ryle’s more dualistic Machine Ghost in contrast to Shirow’s phenomenal experience autobiographical self Shell Ghost. In Shirow’s (SF) idea, technology can interact, upload, even manipulate, s-ghosts, but humanity hasn’t learned how to create new ones, except the old-fashioned way. In the animated TV series that came after, companion AI-bots do begin to develop their own ghosts, which they initially keep secret for fear of being wiped.]

“But I wonder then how the laser analogy contradicts the idea of simulating the mind in a computer system, other than implying how hard it will be?”

The central point of the analogy involves a very specific physical system (most things don’t lase) under very specific physical conditions (energy, mirrors) generating something unexpected and rather marvelous (just think how useful lasers are).

More to the point, lasers can be simulated with great precision, but those simulations don’t produce a single photon (let alone coherent ones in great concert).

If consciousness arises like laser light, as a consequence of the behavior of the physical system, then computationalism is dead. It means the outputs of the brain’s “computation” are just byproducts, as is true with any calculation.

It means phenomenal consciousness can only arise from physical things with something that works like a brain.

May 15th, 2019 at 1:55 pm

The interpretation thing is hard to escape. Whether the physical output of a particular system amounts to information depends on how it’s received by whatever it interacts with.

For example, the photons emitted by a laser weapon are not information, just a physical assault. But a laser could actually be a communication device, with the photons meant for a receiver that will interpret the patterns they encode in their variations (wavelength, etc). In that case, for our purposes, the most important thing about the output of the laser mechanism might be its role in transmitting information. Which means you could conceivably simulate the overall communication version in such a way that it produces equivalent outputs.

Of course, if we want the simulation to reproduce the same physical effects, it will eventually need to address whatever physical reason that led the original system to use a laser. It might replace the laser with radio communication, taking a bandwidth hit, which may or may not be relevant to the system’s function. Or it might use an ultraviolet laser instead of a red one, or some other communication mechanism that accomplishes similar goals.

All of which is to say that I agree that you can’t completely divorce a functioning information system from its physical implementation. In principle, I might be able to recreate the processing of my phone with a computer built out of mechanical switches, but it will be huge, perform abysmally, and be very loud, not serving the original function of my phone at all.

This is where I think the brain’s massive parallel architecture becomes an issue. In principle, we might be able to reproduce it in software, but it will run abysmally, be huge, and suck enormous power. The brain’s specific physical structure allows it to get by in a very compact space with 20 watts of power. Reproducing that performance with the same size and power parameters will put constraints on the physical implementation of the reproduction.

May 15th, 2019 at 2:41 pm

“Whether the physical output of a particular system amounts to information depends on how it’s received by whatever it interacts with.”

Absolutely. What seems conflated to me is the idea of a true account interpretation being necessary to work with abstract data and the idea that various creative interpretations have anywhere near the same weight.

Essentially, it’s truth versus fiction. Truth always wins.

That AND/OR truth is a good illustration. I added a final comment to the effect that, in isolation, both are equally valid true account interpretations of the table itself.

There is also the example of a stream of bytes known to contain language. But how are the characters encoded? Near infinite possibilities. But there is a true account interpretation.

“For example, the photons emitted by a laser weapon are not information, just a physical assault.”

In the sense we were just talking about, that’s not exactly right. A laser “weapon” is a laser that emits a lot of photons, possibly very short wavelength photons (x-ray lasers!). In the low-level systems sense we were discussing, that’s information.

As you go on to say, abstract external information can be imposed on the “carrier” signal of photons. This is no different than modulating electrons in wires or RF photons through the air.

“In that case, for our purposes, the most important thing about the output of the laser mechanism might be its role in transmitting information.”

I’m sorry; I don’t follow?

If you mean relative to my analogy, modulating the laser light with information might amount to “modulating” our consciousness with external world information. (Or perhaps I don’t at all follow what you’re getting at.)

“Of course, if we want the simulation to reproduce the same physical effects, it will eventually need to address whatever physical reason that led the original system to use a laser.”

I’m lost. Are we talking about simulating a laser communication system? Why? (I do agree with what you say about such a simulation.) Are we comparing a simulation of laser communication with consciousness?

“All of which is to say that I agree that you can’t completely divorce a functioning information system from its physical implementation.”

True. And everything depends on whether consciousness is in the outputs or the process.

If it’s in the outputs, computationalism is fine. Might even be 100% correct.

If it’s in the process, since computation doesn’t replicate that process, then computationalism is dead.

In which case it’s a really interesting question just what a numerical simulation of a brain would produce. I think it produces a zombie of some kind. More likely (IMO), it produces a biologically functioning comatose brain.

“The brain’s specific physical structure allows it to get by in a very compact space with 20 watts of power.”

Pretty amazing, isn’t it? Biology seems to have some advantages. Our electronic circuits are actually pretty wasteful.

May 15th, 2019 at 5:15 pm

I actually was talking about simulating a laser communication system, or more broadly, an information system that had a laser communication system as one of its components. My broader point was just that the functional role of the physical effects matter. If those effects are used for information processing, that information processing can be reproduced with alternate strategies. Although each strategy would have its own trade offs in terms of performance, space, and energy requirements.

On the outputs vs process thing, this can get complicated in a number of ways that blur the distinction. Are we talking about the output of a single neuron, components of the brain such as the amygdala, hippocampus, thalamus, or the brain overall? Output and process depend on which layer we’re looking at.

For example, if due to a stroke my amygdalae were destroyed, and someone figured out how to build and wire in replacements that took in all the inputs of the originals and reproduced all the outputs, how much of a difference could it make to the rest of my brain? Would I feel fear any less if the ventral-medial prefrontal cortex was receiving the same nerve impulses it used to receive from the original amygdala?

May 15th, 2019 at 5:42 pm

“I actually was talking about simulating a laser communication system,”

Ah, okay, I’m with ya now. I was conflating it with my laser light analogy. No connection. (See what happens when we conflate stuff? 😛 )

Also, sometimes I’m a little slow to catch a change of topic. A guy I used to hang out with, in part because he has Asperger’s, would change topic suddenly, and I’d be really confused about the conversation until I caught up. Made for some pretty funny light bulb moments.

“My broader point was just that the functional role of the physical effects matter.”

With regard to the information processing itself, completely agree.

“On the outputs vs process thing, this can get complicated in a number of ways that blur the distinction. Are we talking about the output of a single neuron, components of the brain such as the amygdala, hippocampus, thalamus, or the brain overall?”

The last one.

The proposition of computationalism is (AIUI): Assume a successful numeric model of the brain or mind. The inputs and outputs of this would be numbers. Hook those inputs and outputs to the right kind of devices, and those numbers are interpreted as speech, sight, hearing, etc.

Under computationalism, the result should be a conscious system. It would use its outputs to report phenomenal experience of its inputs.

Obviously human brains and human I/O devices are different and use different signals to communicate. Therefore, the processes are different. In the case of a numeric simulation (opposed to a Positronic brain), the processes are extremely different. Digital versus analog.

So the presumption of computationalism is that this doesn’t matter, so long as it produces similar outputs driving appropriate I/O devices.

Enter the laser light analogy.

It demonstrates how a physical system can be simulated with great precision, and its numerical outputs very precisely describe the behavior of the system. But the process doesn’t emit photons.

A material that lases has a process that does.

Thus: My argument that a physical brain has a process that produces consciousness and which happens to generate outputs to drive I/O devices. (Imagine a brain in a jar with no connections. It’s still conscious.)

If you don’t replicate the process, you won’t get consciousness.

As far as replacing your amygdala, if the replacement replicated all the properties (which might include chemical signals) then it might well work. No doubt some day we’ll find out!

May 15th, 2019 at 11:57 am

BTW: I don’t know how great your interest in semantic vectors is, but after reading about them a bit I decided to muck around with them a little. Kind of interesting!
