As a diversion for the weekend: Have you ever wondered why computers run so hot? No? Okay, I’ll tell you. It’s actually kind of a hoot. (We’ll get back to the more serious topic of algorithms and AI, and wrap up that series, next week.)

You kind of have to wonder. Humankind has gone from oil and gas lamps to incandescent tungsten filaments, then to fluorescent lights, and now to LEDs. The trend here seems to be towards cooler, more efficient light sources. But computers seem to need bigger and bigger fans!

The short answer: It’s all those short circuits!

Millions (if not billions) of short circuits happening several billions of times per second!

It’s like an army of tiny imps running around inside your computer dropping crowbars across the power rails (but just for a nano-jiffy; they pick them right back up).

If you work much with computers, you probably know that the heat is correlated with the computer’s (clock) speed. The faster a computer runs, the hotter it runs.

When you know what’s going on under the hood, the heat makes perfect sense. It’s due to how the transistors in modern computer chips work. (And has nothing to do with imps.)

To make sense of it, let’s back up and talk about what a transistor is, especially with regard to computers.

Don’t worry, we’ll keep it really simple; it’s Saturday!

What’s more, talking about them in the context of computers turns out to be a much easier discussion for the same reason a lot of “computer stuff” is actually very simple when you come down to it: It’s all just ones and zeros.

(Information theory tells us that, at least when it comes to information, it’s always just ones and zeros. A Turing Machine demonstrates this concretely with its tape.)

I mentioned transistors when we talked about creating a computer model of a computer (in The Computer Connectome).

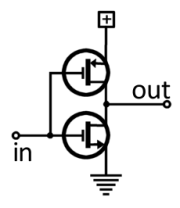

Transistor and its symbol.

At the time, we treated them as tiny black boxes with three connections (leads). Now we need to look inside the box.

The transistor symbol shows the three leads as lines leading away from the circle symbolizing the transistor.

(For the record, clockwise from left side: base, collector, emitter)

Basically, think of them as light dimmers. Current enters from the lower right (the emitter) and exits from the upper right (the collector).

A much smaller amount of current gets siphoned off and exits stage left (through the base). The actual amount exiting the base proportionally controls how much can leave the “main” exit.

By varying the amount of base current, you control the collector current. Just like turning a light dimmer up and down.

There’s a lot more to the picture when we use them as dimmers, but that’s the analog world. The digital world is much simpler (just ones and zeros).

Computers rip out the light dimmers and install simple switches. You can have any light setting you want so long as it’s either on or off.

Now, either some current leaves the base or not, which switches the transistor fully on or fully off, respectively. That’s binary logic in action. And it makes designing circuits way easier!

Your average computer these days has a CPU chip with billions of transistors. Other chips inside the box also have high transistor counts.

For a number of engineering reasons, these chips generally use a certain kind of transistor, called a field-effect transistor — FET (“phet” or “eff-ee-tee”). [One of the key reasons is that FETs are voltage-based devices, whereas the transistors just discussed are current-based.]

In particular, again for reasons, computer logic chips use a certain kind of FET, called a metal-oxide semiconductor FET — MOSFET (“moss-phet”). [These are the ones that have to be handled carefully. A static zap can destroy them.]

C-MOS configuration.

But none of that matters. What matters to us is that they use a certain MOSFET configuration, called complementary MOSFET — which fortunately has the mercifully short nickname, CMOS (“sea-moss”).

It matters because the CMOS configuration has a funny thing about it.

As you can see from the diagram, it consists of two MOSFET transistors connected in a totem-pole fashion.

The little plus-in-the-box is the positive power supply, and the triangle of horizontal lines is ground (zero volts), so the two transistors bridge the power rails.

If they were both turned on, that would be a dead short!

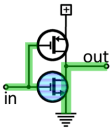

“One”

As it stands, if one is on while the other is off, the output is connected to either ground or plus voltage. Those are the binary “one” and “zero” values.

The input to these is, likewise, a connection to ground or plus voltage.

If you look closely, the two transistor symbols aren’t the same. The little arrows are in different places and point different directions. That’s because the transistors are different; they have reversed polarity!

It’s like one is the anti-matter version of the other. Or the Bizarro version. They operate the opposite of each other, and this is critical!

“Zero”

The input signal connects to both, but since they are opposites, an input that turns on one turns off the other. This ensures that both transistors aren’t on at the same time.

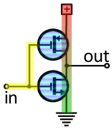

Normally. And that’s the rub.

For a very brief instant — a nano-jiffy — when they switch, both transistors are on. There is a dead short through them.

Doing it that way allows them to switch much faster (because reasons), but it means they generate heat during the switch.
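For the curious, here is a toy sketch of that overlap. It assumes each transistor is a simple threshold switch, and the supply and threshold voltages (`VDD`, `VTN`, `VTP`) are made-up illustrative values, not numbers from any real chip. The point is only that, as the input sweeps from one rail to the other, there's a middle band where both switches count as "on".

```python
# Toy model of a CMOS pair as two threshold switches.
# All voltages below are illustrative assumptions, not real chip values.
VDD = 1.0  # positive supply rail, in volts (assumed)
VTN = 0.3  # input level above which the lower (N-type) transistor turns on (assumed)
VTP = 0.3  # how far below VDD the input must be for the upper (P-type) one to turn on (assumed)


def nmos_on(vin):
    """Lower transistor: connects the output to ground when the input is high."""
    return vin > VTN


def pmos_on(vin):
    """Upper transistor: connects the output to the plus rail when the input is low."""
    return vin < VDD - VTP


def inverter_state(vin):
    """Describe what the pair is doing for a given input voltage."""
    n, p = nmos_on(vin), pmos_on(vin)
    if n and p:
        return "short"     # both on: a path straight across the power rails
    if p:
        return "one"       # output tied to the plus rail
    if n:
        return "zero"      # output tied to ground
    return "floating"      # neither on (does not occur with the values above)


if __name__ == "__main__":
    # Sweep the input from ground up to the plus rail in 0.1 V steps.
    for step in range(11):
        vin = step / 10
        print(f"{vin:.1f} V -> {inverter_state(vin)}")
```

At the two extremes only one transistor conducts, which is the normal "one" or "zero" case. Real transistors turn on gradually rather than snapping at a threshold, so treat this as a cartoon of the idea rather than a circuit simulation.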

How much heat depends on the number of transistors (of course) and also how often they switch, which is where clock speed comes in.

Dead Short!

The more often they’re switching, the more often they’re shorting. Computers today switch billions of times per second.

And they have billions of transistors (but not every transistor necessarily switches at each clock tick).

But millions and millions do each billionth of a second, and all those shorts generate significant amounts of heat. That’s why modern computers need heat fins and big fans.

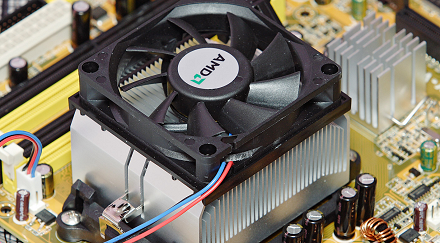

(Once, just the computer box itself had a fan, but over time the CPU chips needed their own little, or not so little, fans.)

Sit and watch your computer do nothing for 60 seconds. If only half your transistors switch every clock tick, assuming a billion transistors and a 2 GHz clock, that’s 60 quintillion dead shorts.

No wonder the poor darlings need fans to cool off!
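For anyone who wants to check the 60 quintillion figure, here is the arithmetic as a few lines of Python. The inputs are just the guesses from the paragraph above (a billion transistors, half of them switching each tick, a 2 GHz clock, 60 seconds); the variable names are mine.

```python
# Back-of-the-envelope count of "dead shorts" in one idle minute.
# Every input here is a rough guess taken from the text, not a measurement.
transistors = 1_000_000_000   # a billion transistors
switching_fraction = 0.5      # half of them switch on each clock tick
clock_hz = 2_000_000_000      # 2 GHz clock
seconds = 60                  # one minute of watching the computer do nothing

switching_per_tick = int(transistors * switching_fraction)
shorts = switching_per_tick * clock_hz * seconds

print(f"{shorts:,}")  # prints 60,000,000,000,000,000,000
```

That's 6 followed by 19 zeros, or 60 quintillion on the short scale.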

November 7th, 2015 at 5:05 pm

I thought you said it was Saturday? 😉

November 7th, 2015 at 6:52 pm

Is it not Saturday where you are?

November 7th, 2015 at 7:19 pm

In my undergraduate days, my school had a water cooled mainframe. If Moore’s Law is petering out, I wonder if we might see a return to large systems which need that kind of cooling.

November 8th, 2015 at 6:00 am

Do you think that the shift to cloud-based computing will mean that most of the world’s computing goes on in huge data-centres, with a return to mainframe-style machines?

November 8th, 2015 at 9:24 am

Not sure if I’d go that far. There are a lot of phones, tablets, and personal computing devices in the world, and most of cloud computing still farms out the most intensive processing to the user’s local device. Real attempts to re-centralize processing never seem to live up to the promise.

It seems like data center processing will always be more expensive than local processing, so we’ll likely save it for stuff that must happen centrally. Right now, that’s mostly traditional server processing. If quantum computing continues to require cryogenic conditions, that might be where it has to happen.

November 8th, 2015 at 9:52 am

What he said! 🙂

November 8th, 2015 at 9:49 am

The cooling system is certainly a big part of any large data center!

(Back in The Human Connectome there’s a picture of a guy walking through a large data center (CERN, in fact). Those big blue things you see on the wall are, I think, the A/C units. (It’s possible they’re fire control of some kind.))

I remember how we were so bemused that Crays lived in a bath of Freon.

November 8th, 2015 at 6:01 am

I always wondered why CPUs get hotter when they run demanding apps. Presumably it’s because more of the transistors are switching state each clock cycle?

November 8th, 2015 at 10:02 am

That and some systems can speed up or slow down their clock depending on system load. Some can even adjust their voltage, which also affects the heat produced.

Think about a browser, for example. Once you’ve opened a page and are reading, the app isn’t doing much. It’s mostly just waiting for you to press a key. Other than background processes and services, the threads of the app may all be blocked in wait states.

Compare that to, say, when I render a 3D scene and the CPU use jumps to 100%. Very calc intensive, 3D.

(It’s possible my estimate of half the transistors switching on every clock tick was too big in a truly idle system. It was just a WAG. But the CPU is constantly running code, and modern PCs have lots of services, so maybe it wasn’t too far off. At the same time, right now for instance, the System Idle Time process has 99% of the CPU’s attention, and I suspect there’s a blocking wait for interrupts in that process. Of course, every key I press here generates an interrupt and wakes it up a little. 🙂 “What? Huh?? Oh, he pressed another key. I wish he’d type faster. This is so boring!”)

November 8th, 2015 at 6:03 am

Good article, Wyrd. Are you going to write about reversible computing next?

November 8th, 2015 at 10:04 am

ROFL! No, not an area that interests me too much, nor an area I have much background with. For something like this, I generally stick with topics I know pretty well and have explored pretty thoroughly.

November 8th, 2015 at 10:12 am

I did read an article about (deliberately) inaccurate computing intended to speed things up. Part of the difficulty in getting more speed has to do with making sure you get all the data correct. If you’re willing to sacrifice some of that, you can run faster.

The first response to an idea like that (at least on my part) was: W.T.F?

What possible value is there in fast, but inaccurate, answers? But the more I think about it, if the error can be restricted to the really high precision parts of a calculation (degrading their precision slightly), perhaps that’s okay if speed is really crucial. If a window positions itself a pixel or two off of where you placed it, is that really a problem?

Obviously there are situations where precision needs to rule, but others where maybe it doesn’t.

What makes this relevant is that humans also aren’t perfect computers. We make way more errors than they’re talking about allowing. So WRT complex intelligent systems, maybe a little error turns out to be an important ingredient. This ties in with other theories of consciousness I’ve heard about that address the fact of our imprecision and consider it important to the process.

November 8th, 2015 at 12:06 pm

N.B. brains get hot too. 🙂

November 8th, 2015 at 12:46 pm

True! Different reasons, of course.

(I don’t know if you’ve read Terry Pratchett’s Discworld novels (only the best fantasy SF ever), but on the Discworld Trolls are silicate life forms who are normally as dumb as, well, a bag of rocks, but if their silicon-based brains cool to fridge or winter temps, they become brilliant! 🙂 )

November 8th, 2015 at 12:47 pm

Although,… when you get right down to it, essentially: work produces heat. That’s just thermodynamics.

January 16th, 2024 at 4:51 pm

[…] both electricity and water (for cooling). Computers run hot because their operation involves trillions of tiny brief short circuits. In particular, two types of computing are energy hogs: AI training and cryptocurrency […]