Last Friday I ended the week with some ruminations about what (higher) consciousness looks like from the outside. I end this week — and this posting mini-marathon — with some rambling ruminations about how I think consciousness seems to work on the inside.

When I say “seems to work” I don’t have any functional explanation to offer. I mean that in a far more general sense (and, of course, it’s a complete wild-ass guess on my part). Mostly I want to expand on why a precise simulation of a physical system may not produce everything the physical system does.

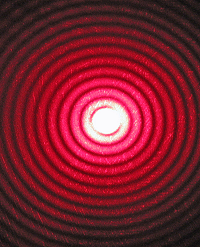

For me, the obvious example is laser light.

[I can hear the groans. “Again with the laser light thing.”]

Yep, again with the laser light thing.[0]

Because the thing is, it does seem a pretty good response to the best assertion of computationalism: that a sufficiently precise numeric model can produce everything produced by the system it models.

In my view it depends on where the system actually is: in the behavior or in the outputs. And that depends on understanding the system.

Ultimately, it depends on understanding what consciousness really is.

(And I don’t think we’re close.)

§ § §

A long wait state.

Admittedly, the point I’m making isn’t obvious.[1]

I’ll begin with a very simple example: a computer simulation of a vending machine.

I discussed the machine in a previous post. [See Final States, State of the System, and Turing’s Machine.]

The vending machine has a very small number of system states. Mostly, it’s waiting for the user to insert coins or push buttons.

However, over the course of its life, it has a very large number of states of the system. Each time someone interacts with the machine, it generates new states as it cycles through the appropriate system states for the interaction.

If we want to simulate the vending machine, we can either simulate the system states — model the machine’s nature — or we can simulate its operation over time — model the machine’s trajectory.

In either case, we start with some kind of model of the machine.

We try to identify its system states — essentially what the machine can do, but also how it does it. System states are tightly constrained by the machine’s intent. The state diagram (for the vending machine) is small and limited.

Once we have this model, we can “run” it by assuming various kinds of inputs and cycling through the system states. If we record those states as they happen, we end up with a states-of-the-system transcript.

We could replay that transcript to recreate the “life” of the machine during that time.

And note that replaying it doesn’t require the model, just the playback mechanism. By analogy, watching a play requires the actors be present. Watching a movie of that play does not.
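A minimal sketch of that distinction, in Python. The state names, the price, and the coin values are assumptions made up for illustration (the post doesn't specify them). The thing to notice is that `run` needs the model (`step`), while `replay` only needs the recorded transcript.

```python
# Hypothetical cartoon vending machine. States, price, and inputs are
# illustrative assumptions, not the state diagram from the earlier post.
PRICE = 50  # cents (assumed)

def step(state, credit, event):
    """The model: maps (system state, credit, input) to the next state."""
    kind, value = event
    if state in ("WAITING", "HAS_CREDIT") and kind == "coin":
        return ("HAS_CREDIT", credit + value)
    if state == "HAS_CREDIT" and kind == "button" and credit >= PRICE:
        return ("DISPENSING", credit - PRICE)
    return (state, credit)  # any other input is ignored

def run(events):
    """Run the model on inputs and record a states-of-the-system transcript."""
    state, credit = "WAITING", 0
    transcript = [(state, credit)]
    for event in events:
        state, credit = step(state, credit, event)
        transcript.append((state, credit))
        if state == "DISPENSING":
            # Product (and any change) handed over; back to waiting.
            state, credit = "WAITING", 0
            transcript.append((state, credit))
    return transcript

def replay(transcript):
    """Playback uses only the recording; step() is never called."""
    return [f"{state} (credit {credit})" for state, credit in transcript]

events = [("coin", 25), ("coin", 25), ("button", None)]
transcript = run(events)
lines = replay(transcript)
print("\n".join(lines))
```

Deleting `step` after the run would break `run` but not `replay`, which is the play-versus-movie point in miniature.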

§

Note that this model is very simplified.

Some simplicity is for purposes of this illustration of a stateful system — for instance, which product is selected has no bearing on the example but would need to be part of a more realistic model.

There are also error conditions, such as not being able to make change, or being out of a product. These would be part of a real model.

The model also ignores such things as the machine heating up or cooling down, or wear and tear on the components, or vandalism. These might not be part of a model if they weren’t of concern in terms of desired results.

So clearly the level of detail necessary in any model depends on what the model should accomplish. My vending machine model only needs to accomplish a demonstration of a state system, so being a cartoon of a real vending machine is good enough.

§ §

Computationalism seeks a model sufficiently detailed to create what is, effectively, a person.[2]

It obviously needs to be a pretty good model.

It requires understanding the system being modeled and the ability to compute it. (Knowing the model doesn’t mean we can compute it.)

The central question is: Can a physical system have properties that a simulation cannot replicate?

On some level, the answer is, “Obviously, yes.” The laser analogy makes this clear. A simulation of a laser cannot emit photons. Only a certain kind of physical system behaving in a certain kind of way can emit photons.

The laser model simulates that behavior. The model generates outputs (list of numbers) that we interpret as describing the behavior of the physical system.

The outputs represent the states of the system — the trajectory of system states over time. We can almost think of them as very detailed “Dear Diary” entries.

§ §

If we faithfully replicate the physical aspects of a system, then it seems reasonable the copy should have the properties of the system it mirrors.

For instance, if we made a tiny vending machine, with all working parts, we’d expect it to work just like a vending machine, except tiny.[3]

But if we model a system numerically, how can we know if our model contains the necessary properties? A numerical model can easily ignore something a physical model takes for granted.

For example, early 3D CAD-CAM programs had no awareness of different moving parts intersecting the same physical space at the same moment.

Simple 3D systems don’t mind “solid” objects overlapping even in a static model. You can put one object inside another object without the world blowing up.

In more complex models, calculations detect the overlap and react in some way. They may not act to prevent the overlap. They may just inform the user of what parts overlapped at what point of the cycle.

In the real world, when parts overlap, either the prototype makes crunching sounds, or it just stops. Physical parts cannot overlap. Attempts to make them do so are generally catastrophic.

The point is that the behavior of overlapping physical objects must be built into the model. And the model doesn’t care if the parts overlap — it’s just a matter of one kind of numerical result versus another.
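To make that concrete, here is a small Python sketch. The `Box` class, the part names, and the dimensions are all hypothetical. It is not how any particular CAD package works, just an illustration that "overlap" is nothing more than a numeric comparison the model performs only if someone writes it.

```python
from dataclasses import dataclass

@dataclass
class Box:
    """Axis-aligned box: a hypothetical stand-in for a 'solid' part."""
    lo: tuple  # (x, y, z) minimum corner
    hi: tuple  # (x, y, z) maximum corner

def overlaps(a, b):
    """True if the two boxes share volume on all three axes."""
    return all(a.lo[i] < b.hi[i] and b.lo[i] < a.hi[i] for i in range(3))

def moved(box, dx):
    """Return a copy of the box shifted along the x axis."""
    return Box((box.lo[0] + dx, box.lo[1], box.lo[2]),
               (box.hi[0] + dx, box.hi[1], box.hi[2]))

piston = Box((0, 0, 0), (1, 1, 1))
wall = Box((2.5, 0, 0), (3, 1, 1))

# A naive model would just keep moving the piston through the wall.
# An "aware" model reports which steps of the cycle have overlapping parts.
collisions = [t for t in range(5) if overlaps(moved(piston, 0.5 * t), wall)]
print(collisions)
```

Nothing crunches and nothing stops. The model simply produces a list of step numbers instead of an empty list.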

§ § §

Do the outputs of the vending machine model faithfully describe the machine’s behavior?

For some definition of faithfully, yes, they do. They can be as faithful to the reality as the blueprint for the machine. In both cases, abstract information in a specific format represents the vending machine. The more detailed the representation, the more accurate the model.

But the simulation doesn’t dispense sodas or chips. It only tells us about dispensing them (using lists of numbers).[4]

Likewise, the laser simulation doesn’t emit any photons; it can only tell us about them, perhaps in great detail.

And also likewise, a brain simulation tells us, with lists of numbers, what the brain is doing. (Given a good enough model.)

Can it tell us that the brain is having phenomenal experience? Can it tell us the brain is experiencing consciousness?

§

The bedeviling thing about phenomenal experience is that it is subjective.

We can’t tell if someone else is having a subjective experience; we can only rely on what they tell us. Which means there is always room for doubt when dealing with an unknown intelligent system.[5]

A general proposition is: Any system that can act sufficiently conscious (however we choose to define it or measure it) is conscious (by fiat).

I’m fine with that. I think it’s currently science fiction, and I think no such system can be created, both in principle and practically, but if one were? I’d have no problem working with Lt. Cmdr. Data, nor any doubts about his “humanity.”

I’m just skeptical it will ever happen. (For reasons I’ve explored over the last 14 days.) We certainly aren’t anywhere close.

§ §

In any event, the hope of computationalism is that, given a good enough model (which I think is a huge ask), it will describe a brain having conscious thoughts in response to inputs fed to the model.

The outputs will tell various devices to speak, write, even move.[6]

But what is the model really simulating? What do the outputs really tell us?

A good enough model should definitely tell us about a biologically working brain. The basic functions of the body should be easy enough to simulate.

Models that describe the heart pumping, for example, would lack for little in what they tell us about the heart.

But does simulating the brain result in subjective consciousness? Does it reside in something a numerical simulation can capture?

The challenges are formidable and possibly computationally out of reach, even if possible in principle. My only point throughout is that I’m very dubious about “in principle.”

Stay skeptical, my friends!

[0] And again with the footnotes!

[1] And, of course, could be wrong. (The only thing I’m always right about is that I could be wrong.)

[2] The whole point of the Turing Test is not being able to tell the difference between a person and a machine.

[3] It would be adorable! I want a tiny working vending machine! And tiny coins to feed it. And tiny sodas and itty-bitty bags of chips!

[4] These outputs could tell another machine to dispense sodas and chips. In fact, most vending machines today do have an electronic “brain” with a model of vending machine behavior, and it does tell the machine what to do.

[5] Illusionists doubt we’re having subjective experience!

[6] Belch, fart, spit, blow their “nose,” clear their “throat,” and excuse themselves for a much-needed bathroom break. (“My back diodes are swimming!”)

∇

May 24th, 2019 at 3:36 am

FYI: I’ll only be available now and then until Monday. I’m around, but I’ll be preoccupied dog-sitting.

May 24th, 2019 at 5:39 pm

I think I’d find the various examples of physical systems whose effects can’t be reproduced computationally more compelling if someone could identify what outputs of the brain can’t be reproduced, at least beyond vague speculations.

The brain receives electrochemical signals from sense organs, engages in selective and recurrent propagation of those signals, and outputs electrochemical signals to muscles and organs. It also receives and produces pure chemical signals in the form of hormones. But the very name “signal” is a universally accepted interpretation of these physical actions as being about information.

If the brain sent out lasers, bottled soda, or some kind of physical effect which didn’t depend on the interpretation of that effect by other systems, it would be a lot harder to think of it as doing information processing, or of reproducing its operations in a computer. But the brain seems to depend on other systems for its physical effects, much as a computer depends on its I/O systems.

I understand the concerns about analog processing and electrical interference, but if the brain were so sensitive to variations in that processing, I think it would be impossible to think near anything that generated any kind of electric field, such as a cell phone, much less inside an MRI machine. It seems much more likely the brain’s information processing systems compensate for these noise sources, at least within certain limits. (Obviously electrodes and TMS exceed those limits.)

Ultimately I think the question of whether a machine can be conscious is a red herring, a hopelessly subjective question. A more grounded question is can a machine, an engineered system, reproduce the decision making capabilities of an evolved system such as an animal, particularly the human animal?

It seems clear we can already reproduce the decision making capabilities of simple animals like cnidarians, sea slugs, or pond snails. Autonomous systems seem to be getting at lobster or crab levels. Humble beginnings to be sure, but we started at worm level.

Only time will tell.

May 25th, 2019 at 12:14 pm

“I think I’d find the various examples of physical systems whose effects can’t be reproduced computationally more compelling…”

By “reproduced” you mean simulated numerically, so on one account (mine), there is no computation that reproduces the effects of a physical system (except for computations that simulate other computations).

But on the other account (yours), if the numbers match reality well enough, the model is 100% successful.

And we inevitably get back to the central sticking point: [You] Blah, blah, blah, outputs! [Me] Blah, blah, blah, process!

“…if someone could identify what outputs of the brain can’t be reproduced, at least beyond vague speculations.”

A fair point, indeed. We’re confounded by two things: We only have the one example of brains, and they turn out to be really hard to figure out. And we’re so far away from being able to test the software, because that’s really hard to figure out, too.

So,… damnit, vague is all I got.

All I can say is that, for me, it was really thinking about the laser analogy. It was, aptly, like the proverbial light bulb going on.

Everything depends on whether describing the brain numerically gives rise to consciousness. It does not give rise to photons (or any other physical phenomena), so I think it’s reasonable to consider it might not give rise to consciousness.

The flip side (which I fully acknowledge!), is that, say, a bridge simulation can accurately describe the various stresses put on the system, and the numbers reflecting those stresses would match the actual physical forces that could be measured on the bridge.

I can see it working either way, which is the source of my skepticism in the face of rampant computationalism. (I’m sure I’d do a lot less preaching if everyone didn’t seem so damn certain. 🙂 I’ve railed against gnosticism (certainty) all my adult life.)

“If the brain sent out lasers, bottled soda, or some kind of physical effect which didn’t depend on the interpretation of that effect by other systems,”

Huh. I never really put it together why the emphasis on interpretation was so central.

I may need to chew on this particular point more, but my initial impression is that this assumes the brain is computing something that needs interpretation in the first place. (And again we’re confounded by only having the one example of a really complex natural system.)

Something about the idea of one part of a natural system “interpreting” the “signals” of another part of the system doesn’t feel right. (I’m fine with signals. I just quoted it to acknowledge what you said about it; I agree.)

When a tornado rips through town, are the trees and houses “interpreting” the signals of the tornado? One could say so poetically or metaphorically, but is that really the same as what happens when the video system interprets bit patterns as ASCII and displays characters?

On some level, even the use of “signals” for chemical signals can be misleading in the context of electrical signals. There are ways in which both are the same and ways in which both are different. (And different usually wins because of entropy.) Treating them as identical might not always work out.

“But the brain seems to depend on other systems for its physical effects,”

Do you distinguish between systems in the brain versus, say, the neurons that go to muscles and sensors? (As you know, there’s a pretty direct path to the brain through the nose.)

“I understand the concerns about analog processing and electrical interference,”

The analog concerns would be separate from EMF concerns. The MRI point is a good one. Radio signals in general, even. If our brains depended too much on those kinds of signals, you’d think we’d know it by now.

(Some do feel living under powerlines is a problem, although there doesn’t seem any hard science behind it. I can see 60 Hz having potentially more effect than HF RF, which might simply not couple to the brain very well. Wouldn’t it be interesting if living in the bath of RF that we do has had some effect? Could it account for Trump? 😀 )

“Ultimately I think the question of whether a machine can be conscious is a red herring, a hopelessly subjective question.”

I know you do. You’re an instrumentalist. And a relativist (at least more so than me). 😀

I’m an objectivist (and something of an absolutist), and I don’t give a damn what the instruments say. I want to know what’s going on under the hood. 😛

As to your more grounded question, I’ll go along with it. (I still want to know what’s going on under the hood!)

May 25th, 2019 at 2:52 pm

The question of interpretation is an interesting one. When does a physical reaction, or series of reactions, amount to interpretation? I wonder if there’s a philosophy of interpretation out there somewhere. But my initial thought is it matters if there can be multiple reactions to the causal force.

So in the case of a tornado, we don’t seem to have a situation where some trees and houses aren’t affected by it, or are affected in different ways. The power and force don’t allow for a lot of variation. The only thing that seems to matter is how strong the structure is in relation to the wind.

But in the case of anything we are tempted to call a “signal”, I think the effect is far more varied. A video controller is ultimately going through physical reactions, but another piece of equipment that receives the exact same signal won’t respond in the same manner. In the case of biological systems, the effect of a neurotransmitter or neuromodulator is up to the recipient, as is the effect of a hormone. In these cases, the same molecule can affect different systems in different ways. In those scenarios, the word “interpret” feels right to me.

Of course, we could insist that interpretation can only be done by a conscious entity. If so, then any talk of electronic equipment “interpreting” a signal would have to be considered metaphorical. But the same metaphors could also be used in biological systems.

“Do you distinguish between systems in the brain versus, say, the neurons that go to muscles and sensors?”

Well, there is the central nervous system and the peripheral nervous system, with the brain and spinal cord being the CNS, and everything else being the peripheral system, although the boundary isn’t a clean one. (For example, the retina is part of the central nervous system.)

And there are sensory neurons, motor neurons, and interneurons. Sensory neurons respond to some sort of physical impingement from the environment, such as a photon striking a cone or rod cell, certain molecules landing on a taste bud, or pressure through the skin, but immediately signal to interneurons. Motor neurons receive their signals from interneurons and transmit across the neuromuscular junction to muscle fibers, where the signal is amplified as necessary to cause contraction.

(Obviously this is a massive simplification. For example, interneurons are typically split into sub-categories, including communication and computation neurons, the latter I know you’ll have issues with. And in reality there are dozens of different types of neurons, by some accounts, hundreds.)

“(As you know, there’s a pretty direct path to the brain through the nose.)”

Definitely. It’s an unusual relationship, the only sense that goes directly to the cerebral cortex. Vision goes through the thalamus and brainstem simultaneously, but the rest of the senses come up linearly through the brainstem, thalamus, and then the cortex.

This weird pathway for smell implies the earliest vertebrate forebrains were “smell brains”, which is why I posted a while back wondering if smell was the killer-app that led to consciousness.

“You’re an instrumentalist. And a relativist (at least more so than me).”

I am those things. People also often call me a nihilist, a label I’d accept descriptively, but not normatively. They also call me a lot of other things, not all of which I answer to. 🙂

May 26th, 2019 at 11:33 am

“I wonder if there’s a philosophy of interpretation out there somewhere.”

A philosophical interpretation of interpretation? That sounds exactly like something philosophers would do! 😀

To one point you raised, I’m fine saying electronic gear interprets electrical signals. In fact, that’s exactly what I’d say occurs. (FWIW, it does so because it was intelligently designed to do so. James and I have been having a debate about the role of The Designer.)

“But my initial thought is it matters if there can be multiple reactions to the causal force.”

That does seem a good start. Might it be useful to think about the definition of “signal” as well?

I still wonder whether tornadoes have signals, but like the idea of there only being one “interpretation” of its forces.

Now, the “signals” (and I think we can be clear those are signals) to the video card definitely require interpretation to have any meaning at all. As you suggest, sending those signals to a hard drive card isn’t going to work out well.

Ha! We have something new to argue about: Do sense neurons and muscles constitute IO devices that interpret a set of signals — which other organs would not understand — versus a view of the whole system as (in a crude metaphor) “ropes” tugging on, or being tugged by, the brain — the point being a “tug” is a “tug” that’s going to be meaningful to whatever receives it.

Rather than bit patterns which have to be decoded, are nerve impulses largely the same? If an evil mad scientist rewired someone’s CNS, would an attempt to blink the eyes move a finger? Would the nerve impulses that trigger eye muscles also trigger finger muscles?

In this context, it occurs to me “signals” might be understood as a distinct signaler using some means to pass information to a signalee over a distance or through a barrier.

That eliminates the tornado, but keeps chemical signals and the way nerves work. (And, obviously, electrical.)

So there definitely is a signaler/signalee role for the brain.

“Well, there is the central nervous system and the peripheral nervous system…”

Yes, right. I was wondering how you saw it. Consciousness does seem to reside in the brain, but I do wonder about the ways the brain extends itself through the body. The nose being an extreme, and as you say, ancient, example.

I do wonder what role the physicality of the body might play in consciousness. (Not a biological body, necessarily, but something with mobility and a sense of directly experiencing the environment. Maybe proprioception is an important aspect of consciousness?)

Could there be more to the phrase “feeling alive” than we suspect?

“People also often call me a nihilist,”

Can’t say I see you that way, but atheism is sometimes linked with nihilism. [shrug]

May 26th, 2019 at 4:02 pm

I looked up Merriam-Webster’s definitions for “signal”. It gives a number of them. One that I found interesting is their 2b one, “something that incites to action.”

Maybe another criteria is whether the causal force supplies all the energy for the effect it causes. So a tornado’s effects on trees and buildings doesn’t incite anything in them, it just directly manipulates them.

But a signal to a video controller is a fairly low energy impulse. The controller draws its energy to perform its work from its power source. Likewise, the motor neuron signal to a muscle fiber is also relatively low energy. Most of the energy for the fiber’s contraction comes from its internal ATP energy stores, then from the muscle’s intracellular fluid, and finally from the bloodstream.

But to your point, one big difference between a technological computer and a nervous system is that nervous systems never evolved a databus. Everything is based on connections. (Well, not everything, since hormones are transmitted through the bloodstream, but their effects tend to be broad rather than targeted.) Once a particular motor neuron is excited, it’s only going to excite a certain set of muscle fibers.

“If an evil mad scientist rewired someone’s CNS, would an attempt to blink the eyes move a finger?”

Interesting question. Setting aside all the practical concerns (if two neurons are attached, could they function as one neuron?), in principle, yes.

Interestingly, we might have natural versions of this to some degree. People suffer from things like synesthesia where sensory modalities become crossed, or allochiria where signals from one side of the body are confused with signals from the other side. Although I’m not aware of anything like this in the motor output systems.

May 27th, 2019 at 12:04 pm

“Maybe another criteria is whether the causal force supplies all the energy for the effect it causes.”

Oh, I like that! That fits all cases I can think of that I would label “signal” and excludes those I wouldn’t.

“…nervous systems never evolved a databus.”

😀 I do love the idea of having one, though. Think it would be in chunks of ten “bits” rather than eights?

[I wrote an online essay once about how I thought, in the Star Trek era, computer engineers might have started using 10-bit bytes, 20-bit words, 40-bit dwords, and so on. I mean, why not? A little more room in the “byte” and chunks of ten are much easier to translate mentally. 10 bits = 1 Kilo, 20 bits = 1 Meg, 30 bits = 1 Gig, 40 bits = 1 Tera, and so on. Very handy.]

“Everything is based on connections.”

Yeah. My “ropes and tugs.”

Say… Multiple nerves go to each muscle group, don’t they? Maybe there is a little bit of a parallel highway thing going on. You mentioned chemical messengers, which is also a bit parallel (and a bit, as you say, broadcast).

“Although I’m not aware of anything like this in the motor output systems.”

Yeah… Well, thinking about birth defects that would miswire, it occurs to me that might not matter unless it was catastrophic. Our whole process of learning to move and walk and talk involves training our NN to operate our muscles (tug on the ropes). When I tug this rope, that happens.

Maybe it doesn’t make that much difference exactly how the wiring starts out, so long as it’s roughly right.

James invoked a discussion about teleonomy in the Giant File Room, and, while reading the Wiki entry, something struck me.

Teleonomy raises the issue of natural forces over time creating a “useful” end goal. I’ve raised the metaphor of a watershed evolving over time in comparison to biological evolution in general.

In some sense, the current form of the watershed was always there. The various qualities of the land predetermine how the watershed is likely to form. The more “random” forces of weather also shape, so there is a chaotic element.

Which got me thinking about how inevitable we might be given the various qualities of the “land” that formed us combined with various “random weather” forces.

What if we get out there and find lots of bipeds that look like us. Star Trek was always right! It wasn’t just a budget limitation. 😀

More seriously, just how inevitable might we be?

(This came off my typing: “so long as it’s roughly right.” The idea there might be only a chaotic attractor for how nerves form: roughly right, which is a-okay. I’ve been likewise chewing on the idea of humanity being in a chaotic attractor zone of evolution.)

May 27th, 2019 at 5:20 pm

“computer engineers might have started using 10-bit bytes, 20-bit words, 40-bit dwords, and so on.”

Seems like some of the old mainframe and mini-computer systems played around with stuff like that, although maybe I’m misremembering. But it seems like powers of 2 is easier for digital systems.

“Say… Multiple nerves go to each muscle group, don’t they?”

Seems like each motor nerve synapses on a plate that connects to a muscle fiber group. At least for striated muscles. Might be different for the smooth muscles in places like the heart.

“Maybe it doesn’t make that much difference exactly how the wiring starts out, so long as it’s roughly right.”

Although it makes you wonder about people who are congenitally clumsy. Maybe their neural wiring got messed up in development.

“More seriously, just how inevitable might we be?”

It’s hard to say. There are a lot of contingent events in our evolutionary history, things that if they didn’t happen, we wouldn’t be here. The development of the primate body plan required the development of dense forests to make that body plan adaptive. The development of great apes then required the receding of those forests to drive us to the ground, and eventually the savannas. And something (maybe tall grass) drove us to walk upright, freeing our arms for carrying and manipulating things.

But are there other body plans that could build a civilization? As I noted on another thread, when thinking about the brain, we have to remember the hand. Our opposable thumb is as important as our wits. So it’s hard to imagine another body plan doing it. But I think we should be cautious in assuming our lack of imagination means there aren’t other paths.

But I suspect those paths are profoundly rare.

May 27th, 2019 at 5:56 pm

“Seems like some of the old mainframe and mini-computer systems played around with stuff like that, although maybe I’m misremembering.”

Six wasn’t uncommon, and some systems had nine. Eight really is just a historical standard that’s now actually official. Eight may have won out because of hexadecimal.

In the six-bit systems, engineers used two digits of octal to easily express bit patterns. With eight-bit systems, hex fills the same need. In both cases, the mapping is exact — no “left over” values or duplicate values.

The downside of ten-bit systems is lack of an easy way to express bit patterns, but part of my thesis is that the need to express bit patterns is increasingly obsolete. CSS color codes are about the only place I use it anymore.

One could use base 32, which encodes five bits per digit. 0-9, A-V. Weird, but doable, and technology seems to be expanding what minds can do.
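For the curious, a quick Python sketch of the three mappings. The sample bit patterns and the `to_base32` helper are made up for illustration. The digit set is the 0-9, A-V one mentioned above, which happens to match what Python's built-in `int(text, 32)` accepts.

```python
BASE32_DIGITS = "0123456789ABCDEFGHIJKLMNOPQRSTUV"  # 0-9, then A-V

def to_base32(value, width_bits):
    """Render value as base-32 digits, five bits per digit (hypothetical helper)."""
    out = []
    for _ in range(width_bits // 5):
        out.append(BASE32_DIGITS[value & 0b11111])  # lowest five bits
        value >>= 5
    return "".join(reversed(out))

six_bits = 0b101110        # 101 110       -> two octal digits
eight_bits = 0b10111001    # 1011 1001     -> two hex digits
ten_bits = 0b1011100110    # 10111 00110   -> two base-32 digits

octal_text = format(six_bits, "02o")
hex_text = format(eight_bits, "02X")
base32_text = to_base32(ten_bits, 10)

print(octal_text, hex_text, base32_text)
print(int(base32_text, 32) == ten_bits)  # round trip through the built-in parser
print(2 ** 10, 2 ** 20)                  # the "Kilo" and "Meg" of 10-bit chunks
```

In all three cases the digits line up exactly with the bits, with nothing left over, which is the property that made octal and hex attractive in the first place.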

“Although it makes you wonder about people who are congenitally clumsy.”

Interesting point. When I was in high school, I decided I was unacceptably (to me) clumsy and started actively concentrating on being more graceful. A few years after I began that, I realized I was much better.

Of course, high school also implies adolescent clumsiness, so who knows. It was something I focused deliberately on, for whatever that’s worth.

“The development of the primate body plan required the development of dense forests to make that body plan adaptive.”

Right, but I think that’s the thing. Aren’t forests kind of an inevitable form of plant species? And then, in turn, wouldn’t savannas also be?

Being upright has cooling and visibility advantages, so some form of tallness seems convergent. Bipedal means a smaller footprint and more flexibility maybe? We can climb, swim, run, walk, stand, do stairs, do ladders, etc. Animals can do some of those things better, but bipeds might do them all adequately?

“Our opposable thumb is as important as our wits.”

Manipulation (and fire) seem pretty important. Manipulation lets us experiment with our thoughts much better.

I can see tentacles. (And, hmm, cephalopods are pretty smart.)

I do think that will be a hallmark of any intelligent civilization we find: they’ll manipulate the hell out of their environment. (Heh! Which goes back to my belief intelligence is loud.)

May 27th, 2019 at 6:58 pm

Seems like if you had enough of a memory intensive application, bit patterns might become important again. But they’re definitely not as pervasive as they once were.

“Of course, high school also implies adolescent clumsiness, so who knows.”

I read something years ago about how the Soviets trained their Olympic athletes. They’d develop the initial coordination skills young, but as they went through their growth spurt, they’d have them focus exclusively on strength and flexibility training until their growth had stabilized. The thinking was that teaching coordination during that period was pointless.

On being upright, the trick is to find a reason we evolved that way but that the other great apes didn’t. It might be as simple as our ancestors being in a region where the fruit was higher up. Of course, testing any of these theories is the hard part.

Cephalopods are a body plan a lot of science fiction plays with. (Uplifted octopuses feature heavily in the novel I’m currently reading.) But most of that fiction ignores the fact that what makes that body plan effective is a liquid environment.

Ants, spiders, crabs, and similar multi-armed body plans also get a lot of attention, the idea being all those limbs make up for lack of a hand. But I wonder if they do. How would a spider or a crab craft a complex tool? And is that question just anthropocentric?

May 27th, 2019 at 7:40 pm

“Seems like if you had enough of a memory intensive application, bit patterns might become important again.”

Oh, they’ll always be important at a low level. It is funny how rarely I use hex or bit-level stuff anymore, though. No one bothers to pack bit flags in a control word anymore. We just use individual, easily addressed variables.

Many of the higher level languages don’t even have the bit-twiddling capacity that, say, C and C++ have. They don’t distinguish between bit-level AND, OR, XOR and logical versions (which, oh my, can surprise you sometimes 🙂 ).
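A minimal Python sketch of the two ideas above: packing flags into a control word, and the surprise when a logical operator stands in for a bitwise one. Python is used here only because it keeps both kinds of operator, so the difference is visible. The flag names are made up for illustration.

```python
# Hypothetical flag names, purely for illustration.
FLAG_READY = 0b001
FLAG_ERROR = 0b010
FLAG_BUSY = 0b100


def pack(ready: bool, error: bool, busy: bool) -> int:
    """Pack three booleans into a single integer control word."""
    word = 0
    if ready:
        word |= FLAG_READY
    if error:
        word |= FLAG_ERROR
    if busy:
        word |= FLAG_BUSY
    return word


def is_set(word: int, flag: int) -> bool:
    """Test one bit of the control word with bitwise AND."""
    return (word & flag) != 0


control = pack(ready=False, error=True, busy=True)  # 0b110

# Bitwise AND compares bit by bit, so READY is correctly reported as clear.
bitwise_result = control & FLAG_READY

# Logical `and` only checks truthiness: both operands are nonzero,
# so the expression evaluates to the second operand, which is truthy.
logical_result = control and FLAG_READY
```

Here `bitwise_result` is zero while `logical_result` is nonzero, which is exactly the kind of silent mismatch that can bite when the two operator families get mixed up.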

“On being upright, the trick is to find a reason we evolved that way but that the other great apes didn’t.”

It’s like our intelligence in that they seem to have the potential, but in much reduced form. It’s one of my favorite questions: What accounts for that gap?

“But most of that fiction ignores the fact that what makes that body plan effective is a liquid environment.”

Good point. Elephant trunks show us that short thick ones might work. And the ends of their trunks are a bit prehensile.

“How would a spider or a crab craft a complex tool?”

Yeah, I don’t see lots of limbs as necessarily helpful. It’s the ability to do fine manipulations with some degree of strength that matters. The thing about bones and muscles is leverage, but we can also be delicate and fine.

(There’s also that old joke about how the centipede walked fine until someone asked it how it kept all those legs straight. Poor thing never moved an inch after. Couldn’t stop thinking about it. 😀 )

“And is that question just anthropocentric?”

I don’t think so. We have enough different species on Earth to do some projection, and (as I’ve mentioned before) our love of science fiction also shows we have some degree of imagination outside our species.

I think merely asking the question seriously is a good start on mitigating the issue.

May 27th, 2019 at 6:50 pm

This is a continuation of the next post, given that Wyrd and I tend to get into heavy stuff, though that post was supposed to be light: https://logosconcarne.com/2019/05/25/bad-rom-call/#comment-29177

On algorithms, yes I’m going purely abstract. Cool as it might be, the Antikythera mechanism would be a poor example of an algorithm. I’m talking about language here rather than substance. “X + Y” is more like what I mean by algorithm. But I can see how an algorithm could be interpreted from the Antikythera as well.

And yes, the physicality of I/O is what gives computation meaning to us. I get no meaning until my screen lights up, the music plays, my keyboard works, and so on.

You know, one danger however is that some rat bastard might come along and say, “Hmm…. You seem to have defined “computer” so that even technological computers don’t really exist as such either in any absolute capacity. That’s interesting given how convinced you are that intelligent entities like us may create ‘computers’, though nature never does…”.

(Aw crap, this was supposed to be lighthearted weekend fare!) 🙂

May 27th, 2019 at 7:27 pm

“Cool as it might be, the Antikythera mechanism would be a poor example of an algorithm.”

Why is that?

You go on to refer to language and equations. But there are very obvious algorithms that can be written for the Antikythera (just as there are very obvious algorithms behind an electronic calculator).

If you’re requiring written algorithms for “computation” then you’re essentially using the CS definition.

If the Antikythera is a “poor example” of something with an algorithm, what’s a good one?

“You know, one danger however is that some rat bastard might come along and say,…”

I have no idea what this paragraph is getting at. Please speak plainly. I’m old.

May 27th, 2019 at 8:44 pm

Wyrd,

If something is possible to write for the Antikythera to function by in a language based sense, then that’s what I mean by “compute”. Otherwise no.

As for the next issue, this was a bit of humor and I meant no offense. But I think now that I had it wrong there. It’s not that brains have sources of input and output which thus render them non-computers, since the machines that we build have those as well. It’s that they have no written instructions by which to function. They’re not teleological information processors. Regardless, as established earlier I mean to speak in terms of “brain function” rather than “computation”.

May 27th, 2019 at 9:30 pm

“If something is possible to write for the Antikythera to function by in a language based sense,”

It’s a calculator, so, yes, absolutely something (an algorithm) can be written.

“I meant no offense.”

Oh, none taken. I just had no clue what you meant.

“It’s not that brains have sources of input and output which thus render them non-computers”

Hold on, I’m confused. I thought having I/O was part of your definition of computation? Here you seem to be saying that having I/O renders it not a computer?

“It’s that they have no written instructions by which to function. They’re not teleological information processors.”

Are you also making the claim no algorithm can be written? That’s one of the key questions of computationalism.

See, the Antikythera is a (mechanical) computer because there is an obvious and fairly simple algorithm that describes its behavior, and because the information the algorithm produces is essentially indistinguishable from what the Antikythera itself produces.
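As a rough illustration of how simple such an algorithm can be, here is a sketch of one relationship the mechanism is known to track: the Metonic cycle, in which 19 years correspond to 235 synodic (lunar) months. This is an assumption-laden toy, not a model of the actual gear train; the function names are hypothetical.

```python
from fractions import Fraction

# Metonic cycle: 235 synodic months in 19 years.
SYNODIC_MONTHS_PER_YEAR = Fraction(235, 19)


def months_elapsed(years_turned: Fraction) -> Fraction:
    """Synodic months corresponding to a given number of years cranked."""
    return years_turned * SYNODIC_MONTHS_PER_YEAR


def whole_months(years_turned: Fraction) -> int:
    """Completed months only, as a pointer on a month dial would show."""
    return int(months_elapsed(years_turned))


one_year = months_elapsed(Fraction(1))
full_cycle = months_elapsed(Fraction(19))
```

Exact fractions stand in for gear ratios here, since a gear train multiplies by a ratio of tooth counts rather than by a rounded decimal.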

For the brain, there is no obvious algorithm, and it’s not clear whether simulating the physical system produces the same information as the physical system. (It might.)

“Regardless, as established earlier I mean to speak in terms of “brain function” rather than ‘computation’.”

“Lay on, Macduff, and damn’d be him that first cries, ‘Hold, enough!'” 😀

May 29th, 2019 at 8:07 am

“Hold on, I’m confused. I thought having I/O was part of your definition of computation? Here you seem to be saying that having I/O renders it not a computer?”

Ah, that should explain some things. Yes my point has been that any machine with input and/or output sources will thus not be “computational” in that regard. We call my phone a computer, though in a pure sense it’s somewhat computer and somewhat not since it incorporates input and output accessories. Such things do not “compute”.

So given that our so-called computers aren’t really computers, I briefly decided that this helps the computational case for brains since they have non-computational I/O sources just as well. But then I realized that acknowledging similarities with a non constituent trait shouldn’t demonstrate pro constituency!

“Are you also making the claim no algorithm can be written? That’s one of the key questions of computationalism.”

Well no, I was merely saying that I presume nature built us rather than any sort of intelligent being. Given these origins I wouldn’t think that such an algorithm could be written however. As you come to grasp my model of brain function however, I think you’ll see that it doesn’t support such potential.

“Lay on, Macduff, and damn’d be him that first cries, ‘Hold, enough!’”

I’m a bit rusty on my Shakespeare, but I’ll interpret this as “So bring it on man!” Yes I’ll do my best as time permits.

From 3.5 billion years ago a vast microbial mat of life developed in our oceans. Though evolution should have been occurring continuously, apparently its most revolutionary period came about 541 million years ago and lasted for about 20 million years. Apparently most major animal phyla emerged during this “Cambrian explosion”.

Here we not only see standard genetic based organism instruction, but the rise of central organism processors in more advanced multicellular organisms. This is all standard history course. I mention it however to get into what I call “non-conscious brain function”.

I suspect that at some point, and possibly still during the Cambrian, a second mode of brain function evolved as well. The deficiency of the first alone, I think, is that in more open environments sufficient instructions can’t be provided to effectively deal with novel challenges. Thus I believe that a mechanism of agency was required in order to facilitate autonomy. And what brought agency? Sentience I think. Without an interested party it seems to me that there can be no agent based decisions. So I’m saying that more advanced brains evolved to produce sentience, and that this became a functional second mode of brain function that’s produced by the first. As I define the term this is “conscious function”.

So how might this value dynamic have effectively evolved? Evolution of course builds things by trial and error. Thus all sorts of extraneous traits can ride along, some of which may find uses under the proper circumstances. Well I’m saying that a purely epiphenomenal sentience must have evolved that couldn’t have wasted much energy. (Apparently for evolution the hard problem of consciousness is not a hard problem.)

Thus normal organism function remained, though something extraneous could feel bad or good. This is where “value” would have entered existence, and I call this a conscious state even though initially functionless. There is something that it is like to exist as such.

Then at some point one of these extremely primitive conscious entities must have found itself in charge of making functional decisions. Instead of a standard non-conscious mechanism, this conscious part must have functioned to relieve itself of perhaps pain. Furthermore this must have provided advantages given the need for autonomy, and so continued to evolve with all sorts of effective conscious traits. Note that here we have not only standard non-conscious function, but that this produces sentience which drives the conscious entity to do whatever it can to feel as good as it can. So effectively there is a main non-conscious mode of brain function, and then a tiny auxiliary conscious form that the main one fosters to provide the autonomy that can’t otherwise exist. And while you and I should only have access to the tiny conscious form of function, the vast non-conscious brain should be doing the vast majority of work.

That’s the basics, and with sentience providing nothing less than value in an otherwise valueless world. So what essential characteristics of conscious function do I identify, fostered through non-conscious function, that even permitted the human to evolve? Before getting into this sort of thing I’ll stop for questions about what I’ve said so far.

May 29th, 2019 at 11:25 am

“Ah, that should explain some things.”

I’m afraid it muddies the waters even more. Please give me your definition of what a computer is. (Feel free to go back to that other thread where I first asked the question.)

But, please, a precise definition of computing.

“As you come to grasp my model of brain function however, I think you’ll see that it doesn’t support such potential.”

If so, that would be rather extraordinary.

“Without an interested party it seems to me that there can be no agent based decisions.”

That seems to depend on how one defines “interested party.” Would you call a bacterium moving along a food gradient an “interested party?”

“So I’m saying that more advanced brains evolved to produce sentience, and that this became a functional second mode of brain function that’s produced by the first. As I define the term this is ‘conscious function’.”

What could brains with only the first functional mode do versus brains with the second functional mode? What extra abilities did the second mode confer?

What is the difference between a bacterium following a food gradient and a mammal following a food scent? Yes, there is something it is like to be the animal, but how does that really change goal-oriented behavior?

You certainly don’t seem to be suggesting sentience is just along for the ride. If an organism needs fuel to survive, what does “being hungry” really add if a non-sentient organism can also seek fuel based on its first brain function?

(There is a view that does suggest our entire consciousness is just along for the ride. Our unconscious, fully determined brains do it all; we just watch the movie.)

“That’s the basics, and with sentience providing nothing less than value in an otherwise valueless world.”

But the world does have values not related to how I feel about them, doesn’t it? Doesn’t sunshine have value for plants and all animals? Likewise water, gravity, air,… lots of things have value not related to opinions on that value.

I guess my final question at this point is: That’s a nice “just so” story about how consciousness came to be, and sentience almost has to play some sort of role, but… so what?

What does that have to do with our consciousness now, let alone computationalism? I assume you find p-zombies incoherent?

May 30th, 2019 at 2:09 am

Wyrd,

You’ve accused me of constructing a “Just so story”, as well as remarked “So what?” I’ve noticed that when people take this tone with me, in most cases I later realize that it was my fault. Furthermore my ideas seem difficult enough to understand for people who are sympathetic with me. People with the converse perspective however should have little potential to grasp my ideas.

Right now I’m most interested in whether or not this situation is fixable. I certainly hope so! Regardless I’ll give your current questions a try.

What is my specific definition for computer? Beyond the broad conceptual grasp that people have in general, I don’t have such a definition. I will however accept any provided definition when I’m trying to understand what someone is saying.

It’s true that many do use the brain/computer analogy, and that I don’t mind it myself in a non-rigorous or general sense. It’s not “true” however. It’s just an association that may or may not be useful in various specific ways.

Furthermore note that I’m not currently speaking with you here about the brain “computing”. As we’ve discussed, I’m speaking with you about “brain function”. The topic at hand is how this contributes to organism function.

Agreed that it depends upon definition. As I like to define the term, no I wouldn’t call a bacterium moving along a food gradient an “interested party”. Sure it may look to us that way, but if there is nothing that it’s like to be such an organism (as is generally presumed), then an interested party will not exist. Here sentience completely defines “interested party”.

I’m saying that there is something fundamentally different from an organism reacting to processed inputs in a certain way (like a flower opening to sunlight), versus doing something which might relieve pain. The first seems fine in more closed environments. Inputs here lead to associated outputs. But in more open environments I suspect that evolution simply couldn’t account for enough contingencies to make its organisms function sufficiently.

Note that in more and more open environments our robots function worse and worse. Could enough raw computing power be used to overcome such hurdles? My ideas suggest not in the end. Regardless I believe that evolution got around this issue by creating sentience, or an agent motivated by a punishment/reward dynamic. The non-conscious brain didn’t need to account for the discussion we’re having for example. Instead we figure this out ourselves given our desires to feel good and not bad. Take away our sentience and you thus take away personal existence for each of us, I think. That’s what I consider different about the first and second forms of brain function — the second is autonomous while the first is not.

Do I find philosophical zombies incoherent? Instead I’d say that if my pet theory does happen to be effective, then they shouldn’t be possible.

May 30th, 2019 at 12:35 pm

“People with the converse perspective however should have little potential to grasp my ideas.”

I think you might be confusing not agreeing with and not understanding. It’s possible to fully grasp someone’s point and still not agree with their analysis.

There is a common misapprehension people have that, if they explain themselves clearly enough, then everyone will see their point and agree with them. That simply isn’t the way it works. One’s worldview, one’s starting axioms, seriously determine what ideas seem reasonable or not.

(Mike and I realized quite some time ago that, despite how much we have in common, in some areas we have very different axioms and worldviews. Mike is more of a relativist than I… or I’m more of an absolutist than he… it’s relative. 🙂 Point is, we’ll never agree on some things even though we do grasp each other’s positions.)

“What is my specific definition for computer? […], I don’t have such a definition.”

Ah. Well, then I withdraw the question. We’ll speak of them no more.

“Here sentience completely defines ‘interested party’.”

Got it.

So your statement is: “Without [sentience] it seems to me that there can be no agent based decisions.” Is that correct?

How are you defining “agent” — is a bacteria an agent? Is my thermostat an agent?

“Note that in more and more open environments our robots function worse and worse.”

I see your “open” versus “closed” environment idea as needing definition and examples. What constitutes an “open” environment such that autonomous — I want to use the word “agent” here — organisms can’t… thrive? survive?

Specifically, what kinds of situations would early sentience handle that organisms lacking it couldn’t?

“Could enough raw computing power be used to overcome such hurdles? My ideas suggest not in the end.”

How so? What do you see as the problem? (This is why your understanding of “computer” matters. 😉 )

“Regardless I believe that evolution got around this issue by creating sentience,…”

Your phrasing suggests Intelligent Design, but I’m sure you can’t mean that, so what do you mean that evolution “created” sentience? Evolution has no goals! (This kinda mirrors the conversation with James next door about teleonomy.)

“Take away our sentience and you thus take away personal existence for each of us, I think.”

To what extent do you see as different, the following:

Essentially, it seems to me, you’re in the position of having to explain why philosophical zombies can’t work.

You can’t reply: “Because they lack sentience!” — that’s a given. The pressing question you need to answer is why that matters.

Why can’t a correctly programmed robot (with no sentience) do everything a sentient being can do? Why is sentience (or phenomenal experience) necessary to function?

May 31st, 2019 at 4:38 am

“I think you might be confusing not agreeing with and not understanding. It’s possible to fully grasp someone’s point and still not agree with their analysis.”

Oh definitely! Except that you admittedly don’t yet grasp major elements regarding my positions. In truth what I most seek is not for others to agree with me, but simply to grasp my various positions and how they’re related. That’s my true goal. And yes I suspect that such people would tend to agree with me, which remains to be seen. It’s a person who grasps my ideas that should be able to provide me with the most effective criticism — here I might be given insights from which to alter or abandon suspect elements.

Like you I consider Mike and me quite well aligned in most regards. If we’re right about that then this should be the case for us as well. There’s one difference between Mike and me that I only recently grasped explicitly. Of course he’s been talking about consciousness as a less than useful distinction for years. But until your “Giant File Room” post I didn’t quite realize that he’d actually call a Chinese room “conscious”. Perhaps I just didn’t want to believe it? Anyway you and I seem far more aligned about this.

“So your statement is: “Without [sentience] it seems to me that there can be no agent based decisions.” Is that correct?”

Yep.

“How are you defining “agent” — is a bacteria an agent? Is my thermostat an agent?”

Well an agent would be something slightly beyond just sentient. It would need to be functionally so, which is to say have the capacity to promote its own sentient based interests somewhat. So bacteria and thermostats would fail to qualify for lack of sentience, though something sentient and powerless will also fail the “agent” distinction.

“I see your “open” versus “closed” environment idea as needing definition and examples. What constitutes an “open” environment such that autonomous — I want to use the word “agent” here — organisms can’t… thrive? survive?

Specifically, what kinds of situations would early sentience handle that organisms lacking it couldn’t?”

An extreme example of a closed environment would be the game of chess. An extreme example of an open environment would be existing as a functional human. So apparently I’m referring to a gradient of variables — chess has few while human life has lots. It should thus be far more possible to write effective instructions for successful chess playing, than instructions for successful human proliferation.

If we go back to my pet theory regarding the rise of sentience, the theme is that evolution effectively could “provide” (and yes I mean teleonomically rather than teleologically) operations for most everything in more closed environments. Plants wouldn’t need a second mode of brain function (or even a first!). But as various organisms during the Cambrian started eating not just the microbial mat, but each other, they should have needed to develop instructions from which to eat but not be eaten under various “sensed” circumstances.

My own idea however is that in the war for genetic survival, environments gained more variables, or thus became more open. Well here it should naturally be harder to “write” effective instructions. Too many contingencies to account for. My proposed solution is that evolution effectively wrote the best instructions that it could, and this included creating an “agent” to do what it couldn’t directly. Here instead of providing instructions evolution could say, “This sort of thing will cause you to suffer, while this other sort of thing will feel good. Thus it’s in your personal interest as an agent to figure out various things that I can’t effectively design into you.” So autonomy might have emerged by means of such a workaround.

Of course I could be wrong about this. It might be possible for philosophical zombie to exist and thus function just fine in highly open environments. Evolution doesn’t seem to have built any though. Furthermore in practice when we try to develop machines that function well in more open environments, they seem to have more problems.

Regardless if you’re able to grasp this explanation, right or wrong, there’s a good deal more that I have to say. I’d like to get into not just the “Why?” but also the “What?” of consciousness. I consider that to be the main question. (Conversely the “How?” of consciousness doesn’t bother me.)

“Your phrasing suggests Intelligent Design, but I’m sure you can’t mean that, so what do you mean that evolution “created” sentience?”

I just mean that this aspect of reality “evolved”. I consider this to be some seriously special “stuff”! One of the reasons that it’s hard for Mike to grasp my ideas, I think, is because he has a hard time acknowledging that nature is creating something quite unique here, or all that’s valuable to anything throughout all of existence. Sentience can be simulated to an arbitrary degree of prediction, though no simulation of sentience will contain its real world properties — there’s nothing which hurts or feels good. And with nothing that hurts or feels good there is no associated function. It’s similar to how you cannot walk across a simulation of a bridge.

May 31st, 2019 at 12:38 pm

“Except that you admittedly don’t yet grasp major elements regarding my positions.”

You regard sentience as the primary source of consciousness, its “fuel.”

“So apparently I’m referring to a gradient of variables — chess has few while human life has lots.”

As an aside, among the many billions of humans, how many play chess well compared to how well they manage to navigate life? Why did it take so long to build a successful chess-playing machine? Or what about Go, which is even harder? Yet billions of people manage life just fine.

When a person is born, does their life really have as many possible futures as any chess or Go game? In some ways, yes, in some ways, no. It’s actually kind of hard to compare the relative complexities.

Both chess and life are pretty complex. OTOH, we have managed to make a chess-playing machine. We’re still out of sight on making an AGI machine…

Let’s pin chess as an example of a task that doesn’t require sentience. If anything, “feelings” might affect play negatively. I think you’ll agree a chess-playing {thing} doesn’t require sentience.

So the question can be framed: Exactly why does anything else? What really makes any other task different from playing chess such that it requires sentience?

Why would avoiding being eaten be that different from avoiding losing your queen?

“It should thus be far more possible to write effective instructions for successful chess playing, than instructions for successful human proliferation.”

Depends on what you mean by “successful human proliferation.” The mathematics of proliferation are well-known and very simple. Some of the earliest computer models were population models.
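To give a sense of how little math a basic population model needs, here is a minimal sketch using discrete logistic growth. The choice of the logistic model, the function names, and the parameter values are all assumptions for illustration; nothing here is taken from any particular early program.

```python
from typing import List


def logistic_step(population: float, growth_rate: float, capacity: float) -> float:
    """Advance one generation: growth slows as the population nears capacity."""
    return population + growth_rate * population * (1.0 - population / capacity)


def simulate(initial: float, growth_rate: float, capacity: float,
             generations: int) -> List[float]:
    """Return the population at every generation, starting with the initial one."""
    history = [initial]
    for _ in range(generations):
        history.append(logistic_step(history[-1], growth_rate, capacity))
    return history


# Illustrative values only: 10 individuals, 50% growth, room for 1000.
history = simulate(initial=10.0, growth_rate=0.5, capacity=1000.0, generations=40)
```

With these values the population climbs quickly at first and then levels off near the carrying capacity, which is the whole model in one line of arithmetic.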

[An (adult) engineer I knew in college back in the 1970s used to play this game on the University’s mainframe. It was a Medieval “Civilization” type game. He’d enter a lot of data (on punch cards!) about resources and movements, and submit the card deck for a batch run. The next day he’d pick up his printout of what had happened to the population and go another round.]

We can currently simulate human organs pretty well. Consciousness is a hard one, but most of the other stuff isn’t that hard.

“My proposed solution is that evolution effectively wrote the best instructions that it could, and this included creating an “agent” to do what it couldn’t directly.”

Dude, that reeks of Intelligent Design. You’re assigning goals to evolution. It doesn’t have any.

“So autonomy might have emerged by means of such a workaround.”

But bacteria have as much “autonomy” as anything else does, don’t they? Theirs is based on simple mechanisms, while ours is based on complex ones, including sentience, but the autonomy exists in both cases.

I think what you’re trying to say is that sentience confers the ability to deal with more complex situations, but I don’t see how that follows. Intelligence is what’s required.

In fact, aren’t we a bit more intelligent (rational) when we’re a bit less sentient (emotional)?

“It might be possible for philosophical zombie to exist and thus function just fine in highly open environments.”

Your “pet theory” requires that you address why p-zombies can’t work. If you can’t, your theory fails.

I don’t think you can point to our lack of progress with machines. We only just created chess- and go-playing machines (after, what, maybe 60 years of effort?). Many believe we’ll create machines that come pretty close to us. Or even exceed us.

So I think you have a long way to go to justify sentience as the source or “fuel” of our consciousness. (I suspect it all co-evolved as a part of how evolution can generate complexity from basic rules and energy. The only “driver” was the evolutionary process itself.)

“…nature is creating something quite unique here…”

That much we can agree on!

May 31st, 2019 at 2:24 pm

Were the dinosaurs sentient? (In general, are lizards sentient?)

If the dinosaurs were sentient, it doesn’t seem to have advanced them much in millions of years. Lizards have been around since then, and (if they’re sentient) it doesn’t seem to have done much for them. Lizards are some of the least conscious animals.

Or, if they aren’t sentient, are you saying sentience arose much later in animal evolution? That it didn’t arise until mammals?

June 1st, 2019 at 6:13 pm

Wyrd,

I’m going to continue speaking of evolution in a way that reeks of intelligent design. Why? Simply because the English language evolved to make this convenient and the converse inconvenient. I’d rather not also accuse you of whacking a straw man here! I’m as strong a naturalist as you’ll meet.

Hey that’s pretty good! Still the challenge is to gain a working level grasp of my ideas rather than simply lecture level. And how could we assess such mastery? To the extent that you could read a given article (regarding psychology for example), and then predict the sorts of things that I’d say about it, you’d have a working level grasp of my ideas. Of course I haven’t yet gotten into the vast majority of my ideas in even a lecture level capacity. We’re currently at a prequel stage addressing the “Why?” of sentience/consciousness.

I’ve provided a reason that sentience is needed for more advanced forms of life, and thus why philosophical zombies aren’t possible. But perhaps I haven’t yet done so well enough? Hopefully the following account will be sufficient.

I’m pleased that you understand that a chess playing machine needn’t be sentient. So why does a more “open” environment, such as for the human or even one of those primitive organisms by which sentience began, require sentience for their function? What is the effective defining difference between an “open” and a “closed” environment?

Here it may be helpful for me to list some of the organisms that I suspect reside on each side. I consider mammals, birds, reptiles, and most or all fish to effectively function in open environments and to thus not be suited for philosophical zombie recreation. I consider microorganisms, plants, and fungi to function in closed environments, and thus to effectively be philosophical zombies. I’m less confident about insects, though lean towards the sentient side. To me flies seem sentient. Ants? Though apparently quite “robotic”, I’m not confident enough to assert that they lack all sentience at this point.

Regardless, what’s the difference between the two sides? The difference I propose is that in closed environments at a given moment, there are effective non-agent based instructions that may be developed, though in open environments there are not.

Clearly such instructions can be provided for the game of chess. (And I could change this to Tic-Tac-Toe with any objections.) The same could be said for microorganisms, plants, and fungi I think. They needn’t be sentient for there to be productive non-sentient based mechanisms from which to propagate their gene lines. Of course the things that they do are insanely more involved than simply playing chess, but still. The point is that each moment there are non-agent based responses which promote gene line propagation.

Just as all of our robots aren’t sentient, clearly evolution should have been able to develop central organism processors that aren’t sentient. But once these organisms started requiring strategies from which to eat and not be eaten under countless circumstances, the theory is that their environments began to “open”. While evolution could still say, “If [this, this, this…] then [this, this, this…]”, the thought is that effectiveness diminished since adding more and more “set pieces” couldn’t productively go on forever. That would be where “If…then…” sets of instructions were unable to sufficiently promote genetic survival. Here an agency mode of operation seems to have filled the void. And given the apparent prominence of sentience today, quite extensively so.
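The worry about piling up “If…then…” set pieces can be put in rough numbers. The sketch below assumes, purely for illustration, that a situation is described by a handful of independent yes/no conditions and that each combination needs its own hand-written response. The function name and the placeholder responses are hypothetical.

```python
from itertools import product


def build_rule_table(num_conditions: int) -> dict:
    """Build one response per combination of yes/no sensed conditions."""
    table = {}
    for situation in product((False, True), repeat=num_conditions):
        # Placeholder response; a real set piece would be a specific action.
        table[situation] = "flee" if any(situation) else "graze"
    return table


# Number of rules needed as the count of sensed conditions grows.
sizes = {n: len(build_rule_table(n)) for n in (2, 4, 8, 16)}
```

Each added condition doubles the table, so sixteen conditions already call for tens of thousands of rules. That shows only how fast an exhaustive table grows under this assumption; it says nothing about whether cleverer non-sentient mechanisms could compress it.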

That’s my answer for why it’s impossible to build something without phenomenal experience that functions like a human — “set piece answers” in open environments couldn’t forever remain effective. Sure they could work somewhat, though agency worked better and so came to dominate. And note that the purpose of these agents is not to pass on their gene lines, but simply to feel as good as possible each moment. Here evolution simply needs to figure out a fitting way to punish organisms given that they incur damaged bodies (or whatever), or reward organisms that effectively spread their seed (or whatever). Thus the sentient being is motivated to figure out what to do in a personal capacity, or teleological function.

Here’s a somewhat concise heuristic of my point: There are effective set piece answers from which to avoid losing your queen. There aren’t effective set piece answers from which to avoid being eaten.

As defined here, bacteria use set piece answers exclusively. Conversely sentient beings have set piece answers… as well as agency. I believe that there are biological mechanisms which address amazingly complex dynamics, far beyond what the human should ever grasp. So it’s not exactly complexity that I’m speaking of. Instead it’s a capacity for set piece answers to do the job (and the “job” here is genetic proliferation). There should be no set piece answers from which to think the things that you’re thinking right now. Thus there should be no potential for something to function like you in a non-teleological way. And what’s the source of your teleology? To feel good and not bad.

Agreed. We’ll need effective reductions of this as well however. Thus my efforts.

You conclude by referencing life as though its goal is to become advanced. In truth “success” here may effectively be defined as genetic proliferation; otherwise it’s hypothetical rather than actualized.

I wonder if you’re familiar with the scientist who in recent years established that the human brain has 86 billion neurons rather than the 100 billion long presumed? I found her Brainscience interview quite encouraging. By minute 11 she notes how it’s productive to consider evolution as “change” rather than “progress”. It’s not progress that the human evolved, but rather just an event that occurred given the circumstances. The human can survive right now, and so like other forms of life it does. Some forms of life are foundational however, unlike us. We’ll surely be a fast blip on the screen of life.

https://hwcdn.libsyn.com/p/f/2/d/f2d638af6d0a7cdd/133-BS-SuzanaHerculano-Houzel.mp3?c_id=15053915&cs_id=15054289&expiration=1559431655&hwt=9a0eb57e7e23c7fe51db317888a8965a

June 2nd, 2019 at 5:47 pm

“…And how could we assess such mastery? … I’m pleased that you understand…”

FTR: I don’t respond well to verbiage that treats me like a trained dog. Please just stick to your points and don’t try to characterize me.

“…organisms that I suspect reside on each side.”

So the dividing line is pretty much at insects, with insects perhaps falling on the sentient side.

You’ve equated sentience and consciousness, so same dividing line, I assume.

But don’t all these organisms exist in the same environment? “The” environment, so to speak? What makes some of them in “closed” environments while others are in “open” ones?

Why have some of those organisms progressed so much while others haven’t changed in millions of years? You say lizards are sentient, but it doesn’t seem to have “fueled” much consciousness in them.

“Clearly such [effective non-agent based] instructions can be provided for the game of chess.”

This perhaps gets at the crux of something. Chess is fairly easy to learn to play, but very difficult to learn to win. Winning at chess (against a good player) is as “open” as anything I can imagine.

It is not (even for software) a matter of set pieces, but of learning to play.

“That’s my answer for why it’s impossible to build something without phenomenal experience that functions like a human — ‘set piece answers’ in open environments couldn’t forever remain effective.”

Yet there is a huge ground between “set piece answers” and sentience-based consciousness (as neural nets, for one, demonstrate).

At first I wrote “sentience-based reasoning” but that seemed an oxymoron: reasoning is an intellectual process that doesn’t require sentience. To me it shows the disconnect between sentience and intellect.

To me, intelligence is what’s required to handle increasingly complex situations, and I consider the accident of intelligence in evolution as conferring the ability to take on progressively more complex situations: tool building, communicating.

In my view sentience just came along for the ride and, because it is a part of our primitive past, can be a hindrance at times. Pain killers have been big business all throughout human history.

“There are effective set piece answers from which to avoid losing your queen. There aren’t effective set piece answers from which to avoid being eaten.”

I disagree. I think both depend on your wits in the moment. Neither need depend on your sentience. There is a perfectly rational analysis: Eaten=no perpetuation.

June 3rd, 2019 at 9:45 am

Of course. Sorry. I didn’t quite see that coming.

Well we do all share the same planet, though we make our livings in very different ways. The frogs outside obviously need to function as they do rather than as we do in order to pass on their genes given their particular niche. But could we write an instruction manual for frogs to function effectively in their niche? I don’t think so. In fact I don’t think even evolution was able to put together such a plan for them. I suspect that it could do so for microorganisms, plants, and fungi, and certainly the game of chess if it were in the mix, though I suspect that frogs exist in environments that are too “open”. So instead I’m proposing that evolution made some things feel bad for them, like body damage, and other things feel good for them, like eating nutritious food. Given such phenomenal experiences it was then in an agent’s interest to figure out what to do in certain capacities.

Well I only suspect that they’re sentient. And if so it may be that they already fill their niche pretty well. Evolution isn’t out to make things any more intelligent than their various niches require.

Well my point here is that instructions can exist to play chess pretty well. (If not then we could consider tic-tac-toe.) Not so I think for advancing a lizard’s gene line. Thus evolution may have only provided most of these instructions and then left the lizard to figure certain things out, motivated by its desire to feel good and not feel bad. Thus here we have agency.

I think I see what you’re saying, along with how emotion can cloud rationality and all that. But if you lacked all sentience, would an agent still exist? If you could not feel bad or good, would you do anything at all? I suspect that you’d lose your motivation from which to consciously function. This is why I say that sentience effectively exists as the “fuel” which drives conscious function. The popularity of pain killers demonstrates my point. Nature has built us to survive by trying to feel good, though we fight against nature here given how badly it hurts us.

Well computers seem to do a pretty good job of playing chess, and even without any “wits” that I know of. In the end however the point is not for you to agree with my “Why?” of consciousness explanation, but rather to grasp it. Unfortunately it’s been difficult for me to help others understand this position. I surely haven’t been expressing it well enough yet. I do suspect that discussion with you has been helping me though. Then the question beyond would be if we should go beyond the “Why?” and get into the “What?”

June 3rd, 2019 at 3:18 pm

“In fact I don’t think even evolution was able to put together such a plan for [frogs].”

But that’s exactly what it did do (though this creationist phrasing is misleading).

There is no “plan.” Ur-frogs turned into frogs due to accidental mutations that happened to be advantageous for the mutants. (Compared to most mutations, which are harmful.)

You want to place “sentience” in the central role of “goal” for intelligent behavior, and as I said when this started, on some level that’s obviously true. At the level you’re using “sentience” it basically means “desire” or “want” — it forms a gradient between feeling “good” and feeling “bad” with a motivation to move along that gradient.

I don’t at all dispute that goal-oriented behavior evolved from want or desire. I think that’s obvious. I’m just not sure I call that “sentience.”

[FWIW: To me “sentience” involves the concepts of misery and joy, and I don’t think fish or frogs have them. That kind of sentience came much later and is a precursor to consciousness (in the general self-awareness sense).]

“Evolution isn’t out to make things any more intelligent than their various niches require.”

The thesis is that sentience is a driver towards consciousness. Why aren’t all sentient creatures so driven?

“Not so I think for advancing a lizard’s gene line.”

You can’t have it both ways, my friend. If sentience is such a driver, you have to account for why all sentient creatures aren’t much more conscious. (But if lizards and such aren’t sentient, then “open” environments don’t seem to require sentience.)

“But if you lacked all sentience, would an agent still exist?”

I think, at least in a limited sense, the answer has to be yes. Agency can have any goal.

(This gets at why I think low-level sentience isn’t so helpful. One can define any goal of agency as desiring to “feel better” or “feeling bad” about not accomplishing the goal. Such an agent can even be programmed to express itself as “feeling bad” or “feeling good.” One can say the same about plants seeking sunlight.)

“Well computers seem to do a pretty good job of playing chess, and even without any ‘wits’ that I know of.”

Those “wits” come from the millions of games they’re trained on. How those systems work is very similar to how humans play chess.

“Then the question beyond would be if we should go beyond the ‘Why?’ and get into the ‘What?’”

What about the what? 🙂

June 5th, 2019 at 8:15 am

So you tend to interpret sentience in a higher order way than what I mean to convey? Unfortunately I’ve had trouble finding a sufficiently acceptable term here. Before 2014 (and thus before my blogging) I adopted the term “sensation” rather than sentience for this, and in contrast with the term “sense”. Consider toe pain for example. The part of this which hurts would be “sensation” under my scheme, though the part that provides information, such as that the toe seems to be the source of the pain, would be “sense”. Or the scent of something would provide sensation to the extent that it feels good/bad, as well as sense to the extent that it imparts information such as what probably caused it. I stopped using sensation this way however because it was hard for people to alter their existing conceptions of the term.

Next I went with the “utility” term, as in Jeremy Bentham’s utilitarianism. Given that even modern economists use the term, why not? But of course in common speech people generally mean “useful” here rather than “feeling good/bad”. Furthermore the utilitarian ideology seems to have a moralistic quest, though my own ideas are amoral.

Another way to go would be with “hedonism”, though this is commonly interpreted as living in an extravagant or excessive way, as in the life of a rockstar. Conversely epicureanism is quite modest, though it harbors all sorts of ancient Greek stipulations (not to mention the modern “foodie” association).

For a while I tried the “affect” term, as in “affective neuroscience”. It’s quite esoteric however. Valence is used in these fields as well, and I do like its association with the “value” term. It’s similarly esoteric however. Either works when I’m speaking with Mike, though I’ll need others to grasp what I’m saying as well. I’ve actually been quite pleased with sentience, though if it’s often considered “higher order” then that’s problematic for my purposes.

What do you think about qualia? I once favored this term, but decided that people might interpret it too broadly. That is, they might take it to include not just the punishment of toe pain, but the informational “sense” part as well. So not just the disgust of a strong whiff of human feces, but also a probable identification of an environmental cause. I might have been too hasty about this however. What do you think about qualia for my purposes? Do you consider it any lower than sentience?

Regarding my “Why?” of consciousness speculation, it seems to me that much of our differences are definitional. This might be expected given that I’m interested in lower order consciousness while your posts here concern human specialness. If we’re going to get into my “What?” of lower consciousness as well however, I’d like to begin with something that I brought up with Mike earlier.