No, sorry, I don’t mean the Bletchey Bombe machine that cracked the Enigma cipher. I mean his theoretical machine; the one I’ve been referring to repeatedly the past few weeks. (It wasn’t mentioned at the time, but it’s the secret star of the Halt! (or not) post.)

No, sorry, I don’t mean the Bletchey Bombe machine that cracked the Enigma cipher. I mean his theoretical machine; the one I’ve been referring to repeatedly the past few weeks. (It wasn’t mentioned at the time, but it’s the secret star of the Halt! (or not) post.)

The Turing Machine (TM) is one of our fundamental definitions of calculation. The Church-Turing thesis says that all algorithms have a TM that implements them. On this view, any two actual programs implementing the same algorithm do the same thing.

Essentially, a Turing Machine is an algorithm!

The abstract Machine that Turing conceived is a way of defining what can be calculated or — more specifically — what you can write an algorithm to do. The idea is instrumental in his halting proof.

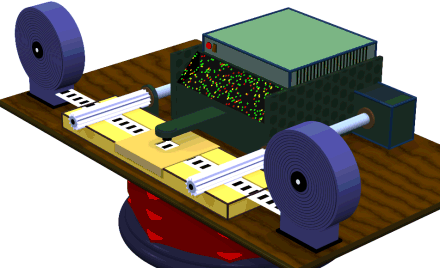

It is possible to build actual (or virtual) Turing Machines. Creating a virtual one in software is a common exercise for undergraduate Computer Science students. Those with a mechanical bent sometimes make physical TMs!

Or virtual 3D models of Turing Machines!

One problem is that a TM assumes infinite resources, so you can never make a perfect one. Compounding the problem, as calculation devices go, they’re hideously inefficient, so they really need infinite resources (and infinite time)!

Conceptually a TM is a tape (infinite in length!) along which a machine moves (or the tape moves past the machine). The tape, at regular intervals, is marked or not. The machine controls tape movement (back and forth), can read the marks, and can change them (from marked to not or vice-versa).¹

What makes each TM an algorithm is an internal state table telling the machine what to do next depending what’s on the current position of the tape.

Which may cause you might ask: “Hey, wait! If the tape only has a mark or not, how much can the machine have to do? I see only two options.”

You are correct. There are only two options. Marked; not marked. The magic lies in what we might think of as the “back trail” — all the steps the machine does to get to that point on the tape.

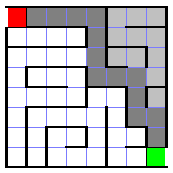

A simple maze.

To illustrate this, imagine we’ve been placed in a maze, at the entrance (the red square in the upper left).

We’re going to use the left-hand rule to find the exit.

We’ll assume our starting position is facing outwards (to the left, or west, on the maze map, as if we’d backed into the maze).²

The algorithm goes like this:

- Turn 90° to our right.

- Facing a wall? If Yes Goto #1 (else continue).

- Go forward one space.

- Is this the exit? If Yes Solved! (else continue).

- Turn 90° to our left.

- Goto #2.

It’s the equivalent of walking through the maze with our left hand always touching the wall on the left. The first couple of steps into the maze look like this:

- Turn right (now facing north).

- Facing a wall? Yes. Goto #1.

- Turn right (now facing east).

- Facing a wall? No! Continue.

- Go forward one space.

- Is this the exit? No. Continue.

- Turn left (now facing north again).

- Goto #2.

- Facing a wall? Yes. Goto #1.

- Turn right (now facing east).

- Facing a wall? No! Continue.

- Go forward one space.

It’s taken a lot of algorithm steps to move two cells into the maze, but eventually the algorithm will take us to the exit (green square in the lower right).

The algorithm explores all the grey squares. The darker ones are the direct path to the exit, which is always the inner edge of the explored area.

The point is that at any given point in the maze, we’re faced with the question, “Am I looking at a wall?”

That’s the same question as, “Is there a mark on the tape?”

But there was also the question, “Am I at the exit?”

That can be rephrased as, “Am I looking at a wall (with an exit)?” Now the overall question is, “Am I looking at a wall? If so, does that wall have an exit?”

This actually models the point about “back trail” in the small. To ask whether the wall is an exit, we first must ask whether it’s a wall. The mark meaning “exit” doesn’t mean anything except following a mark meaning “wall”.

In the large, the maze shows how following a series of (pairs of) marks not only travels a path through the maze but locates the exit.³

More to the point, where we are in the maze reflects all the steps taken to get to that point. This is indicative of any process. The state constantly evolves and — at any given point — reflects the steps that got it there.⁴

Something else to note is that, while the algorithm had only six steps, the execution of that algorithm involves a lot of steps. Those six steps get repeated many times, a common characteristic of algorithms.⁵

§

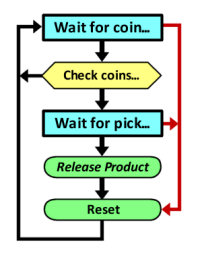

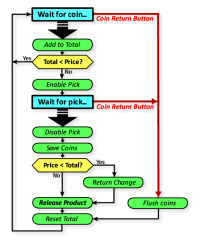

We can consider a somewhat more involved example, the algorithm behind a (fairly simple) vending machine.

The flowchart is shown to the right.

The maze-solving algorithm we just considered had two decision points (wall? exit?). It also had steps done between those points.

Very often, when we characterize a system, we only care about the system’s state when we need to make a decision. The intermediate states between decisions don’t matter because they don’t affect anything. We can ignore them.

This is the key difference between a typical flowchart (such as we’ve been looking at so far) and a typical state diagram (which we’ll get to in a bit).

The vending algorithm also has two decision points (in yellow). The first asks whether enough coins have been tendered for a purchase. The second asks whether change is owed.

But this algorithm has two very important other points: Wait states (in cyan). The maze algorithm never waited, it just plowed through the maze.

A long wait state!

Conceptually, a wait can be viewed as a constant loop — the very loop drivers hate from passengers: “Are we there yet?” “Are we there yet?” “Are we there yet?” “Are we there yet?”

At some point the answer is, “Yes, damnit!” and the loop exits.⁶

The main point here is that the vending algorithm has two stable states (the wait states) and a number of transitory states. A stable state is a state where a process stops until some event prompts (or allows) it to continue.

The system performs transitory steps “at its own speed” (so to speak) but stops at stable steps. This is another characteristic of algorithms.

§

So far we’ve looked at flowcharts — which are state diagrams — but often include more transitory states (especially if important to understanding the algorithm).⁷

State diagrams often lump transitory states between stable states into a single task performed between those stable states. That reduces the diagram to functional skeleton that outlines the algorithm’s behavior.

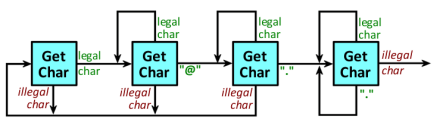

Below is a simple state diagram for an algorithm that identifies valid email addresses in text. Each state (cyan box) waits for another character of text. (We can imagine, for example, it’s monitoring someone’s typing.)

Any illegal character — one that couldn’t possibly be in an email address — resets the process to the beginning. So long as it gets legal characters, it progresses through the states.

If it gets to the final state (far right), the next illegal character it gets signals the end of the email. (Even a space will do it.)

Each state change (black lines with arrows) involves some number of transitory states. For example, the algorithm might be filling a buffer with the characters it finds. A jump back to restart needs a step clearing that buffer.

This isn’t a perfect algorithm (but a better one has more boxes and lines). For instance, it believes an email address can end with a period! And it has no problem with multiple periods with nothing between them. Both are illegal in an email address.

§

This may seem to have gotten away from Turing’s Machine, but each of the three examples we’ve studied is a Turing Machine.

This may seem to have gotten away from Turing’s Machine, but each of the three examples we’ve studied is a Turing Machine.

The Machine part is trivial. It just reads, moves, or writes, the tape.

It’s the flowchart wired into the machine that makes it do what it does. The combination of that flowchart and a machine that executes it defines what we mean by calculation or computation.

[If you’d like to know more about how the flowchart is wired into the TM, I’ve just started a series on my programmer blog that explores state engines (aka TMs). Most (or at least much) of the first article should be reasonably accessible even to non-programmers.]

[1] If that sounds familiar, it may be from all the times I’ve said that computers read numbers and write numbers (and do math). Reading and marking the tape is the same thing. Also, that the tape is marked or not corresponds directly to binary one and zero. This is computing at its lowest level!

[2] As is common with maps, left is east, up is north, and so forth.

[3] Assuming paths do not reconnect once they branch away — that generates loops in the maze graph which can defeat the left-hand rule.

[4] There are multiple paths that can take you to a given spot in a maze, so a given state does not necessarily imply a specific back trail of states.

[5] In fact, the ability to iterate (loop) is a required characteristic of code — of anything capable of expressing an algorithm.

[6] Some computer algorithms (parts of them) operate exactly like that. The code enters a loop of constantly asking if input is available.

A better architecture allows the process to enter a quiescent “sleep” state. Then input interrupts wake it up to process the input.

[7] We’re talking about flowcharts used for visualizing and discussing algorithms, so irrelevant details are just noise. The abstract flowchart for a full algorithm necessarily has all the steps.

November 11th, 2015 at 4:21 pm

Question for the day: Is it the way machines can do some of the same tasks humans do that so convinces people machines must be able to actually be us?

The idea certainly predates computers. Ancient Hebrew mythology has golems, living clay beings, brought to life essentially by sacred words inside them.

The Tin Man in Oz is a silly reference to a long-standing icon of the mechanical man. It always seemed pretty clear that gears and cogs couldn’t emulate a real person, not even really tiny ones. (Really tiny gears and cogs, I mean.)

Computers do seem magical to people in a way that, say, cars do not (although there isn’t actually all that much difference). It’s possible we’re more used to mechanical things now.

There is also that cars are easier. Gears and cogs are easier. You can see them working. Logic gates, let alone algorithms, not so much. There is more arcane mystery there. More chance that something magical could spring forth.

I guess there are 10 types of people. Those who think binary is just a bunch of bits and logic, and those who think that those things can do self-aware consciousness. 🙂

November 12th, 2015 at 2:23 pm

I think the reason why the tin man is seen as being “like” a physical body is because both are made of moving parts. And the reason why computers are seen as being “like” a brain is because both seem to be inert, both use electrical signals internally and as input/outputs, both store data, and both seem to follow ordered sequences of thought/computation.

November 12th, 2015 at 4:53 pm

That’s an interesting point! That may be why many draw a line from computers to brains.

As you suggested before, I know too much about computers to see those things as being very computer-like. They’re analogous, certainly, but function so differently. (I suppose it brings us back to the crux: Is the analogy (model, simulation, whatever) the same as the thing?)

I think, too, people do draw a line from “people use logic and information” to “computers use logic and information.”

November 17th, 2015 at 7:54 pm

I am not as able to grasp all of your posts but did enjoy the movie which did explain Alan Turing trial and error machine which “beat” or was able to translate the Enigma Machine. Benedict Cumberbatch and Keira Knightley were good in their roles as were the side characters and members of the team. “The Imagination Game” was the film’s title, W.S. Hope you are well.

November 18th, 2015 at 10:38 am

Yeah, I’m looking forward to seeing the film when it comes around on cable. The YouTube Numberphile group did a lot of videos about the Enigma and the Bombe. For instance, did the movie touch on how a key part of cracking Enigma was realizing that a letter never mapped to itself? In other words, an “A” in the message was never an actual “A” it was always one of the other letters.

November 18th, 2015 at 10:47 am

The Imitation Game is excellent. I don’t know how accurate its portrayal of the code breaking is, but yes, I think it mentioned that aspect.

November 18th, 2015 at 11:03 am

I’d like to believe they were accurate on the stuff they showed, but sadly that isn’t always the case. I always expect stuff to be cut out, and there are necessary changes due to cinema versus text, but with factual topics, you hope they use the real ones!

Speaking of historical scientific figures, have you seen Einstein and Eddington? Neat movie. (And Eddington, like Turing, was also gay. Worse, he was collaborating with a German scientist who was apparently saying that the British legend Isaac Newton had gotten it wrong.)

November 18th, 2015 at 11:45 am

I’ll look out for it.

June 13th, 2024 at 5:18 pm

[…] Turing’s Machine or State Engines, part 1, for more about state […]

June 13th, 2024 at 5:53 pm

[…] I discussed the machine in a previous post. [See Final States, State of the System, and Turing’s Machine.] […]

June 13th, 2024 at 5:58 pm

[…] need to presume you (the reader) have some grasp of an FSA (or FSM). [If not, see: Turing’s Machine or these … three … […]

July 2nd, 2024 at 2:28 pm

[…] it was his talking about how Life can calculate anything, that it’s a kind of Turing machine, and how it is therefore subject to the Turing Halting […]

July 2nd, 2024 at 3:39 pm

[…] instructions. It tells the computer how to move from state to state. An algorithm always has a TM (Turing Machine) that represents […]