In this corner, philosopher John Searle (1932–), weighing in with what I like to call the Giant File Room (GFR). The essential idea is of a vast database capable of answering any question. The question it poses is whether we see this ability as “conscious” behavior. (Searle’s implication is that we would not.)

In that corner, philosopher and mathematician Kurt Gödel (1906–1978), weighing in with his Incompleteness Theorems. The essential idea there is that no consistent (arithmetic) system can prove all possible truths about itself.

It’s possible that Gödel has a knockout punch for Searle…

This post isn’t really about the GFR and its application as a thought experiment for consciousness. That’s been well explored. Seeing it in terms of Gödel, though; that seems a new twist.

[At least to me; this is likely plowed ground. I’d say “no doubt some ancient Greek thought of it first,” but Gödel came two millennia after them. (And even so, one probably did.)]

Just seeing those two philosopher names in juxtaposition may have made your light bulb go on. If so, bear with me while I lay some groundwork for any novitiates.

§ §

Firstly, John Searle and his Giant File Room.

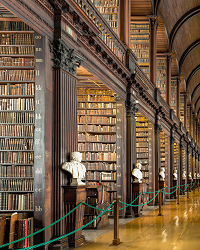

We can imagine the Librarian of a vast Library — something on the order of the Library of Babel (as famously described by Jorge Luis Borges). The Librarian uses the Library to answer any question we ask.

A further wrinkle is that the Librarian doesn’t speak the language used in the Library, including in our questions. Instead, a special Librarian protocol links the text of our question with its correct answer. (Think of it as a magic Dewey Decimal system.)

Searle’s implication is that the Librarian seems to understand our questions, because we always get correct answers. But — according to Searle — there is no understanding present. Certainly the Librarian doesn’t understand our questions, and it’s hard to argue an inanimate object (such as a collection of books) is conscious.

Overall, Searle is saying a computer wouldn’t be conscious, even though it seems to understand our questions.

At the moment, the question is theoretical, since we don’t have systems complex enough (or the full understanding required to even design them). But it is certainly a question we’ll face down the road.

§

Secondly, Kurt Gödel and Incompleteness.

This one is a little harder. It’s important to emphasize this applies strictly to systems capable of doing arithmetic.

The basic idea is that an arithmetic system is logical and, assuming you do the math correctly, always gives true (logical) answers. The old hope for such systems is that they can mechanically crank out all possible truths.

Gödel dashed that hope. Arithmetic systems cannot enumerate all possible true statements.

The limit is theoretical, not merely effective or practical. There is no way, even in principle, to accomplish it.

[However: A more complex system can prove all possible truths about a less complex system, but still cannot prove all truths about itself. This continues up the complexity scale. No system can prove all truths about itself. I find the open-endedness comforting.]

§ §

Okay, so how do Searle and Gödel connect? And what’s the knock-out punch?

Well, consider what happens if we ask the Librarian mathematical questions…

§

The basic premise is that the Library acts as a lookup system. By fiat (and probably in truth), there is no understanding in the process of looking up — of indexing — an answer to a given question.

[The understanding is in the design and construction of the Library, how it came to be capable of answering questions.]

As a lookup system, it would seem these have to be separate questions:

- What is two plus two?

- What is one plus three?

Even if they have the same answer. And there are an infinite number of such questions for which the answer is four. Likewise an infinite number of questions about all numbers!
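To make the point concrete, here is a minimal sketch of a pure lookup "library" for addition. The question format (digits rather than words), the function names, and the tiny operand range are all assumptions made for illustration; nothing here is meant as how the Library would really be built.

```python
# Hypothetical illustration: every question is enumerated ahead of time
# and stored as a separate key, even when answers coincide.

def build_lookup(max_operand: int) -> dict[str, int]:
    """Enumerate every 'a plus b' question with operands up to max_operand."""
    table = {}
    for a in range(max_operand + 1):
        for b in range(max_operand + 1):
            table[f"What is {a} plus {b}?"] = a + b
    return table


def answer_by_lookup(table: dict[str, int], question: str):
    """Return the stored answer. The question text is only an index key."""
    return table.get(question)  # None if the question was never enumerated


table = build_lookup(3)
same_answer = [q for q, ans in table.items() if ans == 4]
```

With operands limited to 0 through 3 the table already needs 16 entries, three of which map to four. Any question outside the enumerated range simply has no entry, which is the weakness the rest of the post leans on.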

Which, at first blush, seems like it might be okay. The whole premise is that we can — at least in theory — enumerate all possible questions and create the protocol that links them to correct answers.

But Gödel showed this isn’t possible, even in theory.

§

As a context, imagine one of those city-to-city mileage charts, such as usually found in a road atlas.

These charts are tables of rows and columns. A given set of major cities is listed twice, once along the rows, once along the columns. To find the distance between any two cities, locate one on a row and the other on a column, and find where they intersect. That table cell shows the mileage.

No calculation or geographical knowledge required. Just look up the cities and look up their intersection.
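As a rough sketch, such a chart is just a nested table. The city names and distances below are placeholders invented for the example, not real data.

```python
# Hypothetical mileage chart: names and numbers are made up for illustration.
MILEAGE = {
    "Alpha": {"Alpha": 0, "Beta": 120, "Gamma": 340},
    "Beta": {"Alpha": 120, "Beta": 0, "Gamma": 260},
    "Gamma": {"Alpha": 340, "Beta": 260, "Gamma": 0},
}


def distance(row_city: str, column_city: str) -> int:
    """Find the row, find the column, read the cell. Nothing is calculated."""
    return MILEAGE[row_city][column_city]


miles = distance("Alpha", "Gamma")
```

The function knows nothing about geography. It only indexes into data that someone else worked out beforehand.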

So easy a simple computer could do it.

Likewise, a simple computer — a hand calculator — can be programmed with the rules of mathematical symbol manipulation (perhaps along with some trig and log tables for lookup of the tetchier stuff).

And, indeed, no understanding is required in these simple lookup devices.

§

We might try to include those mathematical symbol manipulation rules in our Library protocol. (The Aristotelian view sees math as nothing more than that anyway.)

Doing so would allow the Librarian to answer most mathematical questions.

But here’s the gotcha: Gödel showed that any consistent symbol manipulation system is necessarily incomplete. Any rule-based math system we create for the Librarian will have that flaw.

I’ll note that Gödel’s idea of incompleteness is sometimes applied as a metaphor to non-arithmetic systems. While the poetry may be apt (or not), the technical points usually miss the mark, sometimes badly.

Applying Gödel to the Library, however, is not an error or a metaphor, because we are talking about a mathematical system — more specifically, a computer system.

Because a computational system (or the Librarian’s protocol) is fully deterministic — we can always predict outcomes — Gödel absolutely applies. (As does Turing’s Halting Problem, which is essentially the same thing.)

Logic is math and both are subject to Gödel’s limits. Any purely logical, or purely mathematical, system is necessarily incomplete.

A computer, therefore, is necessarily incomplete.

§

The deeper question is: Is a human also incomplete that way? Is the human brain, as some hold, ultimately just a mechanical device fully limited by Gödel and Turing?

Or do our brains, because they are not computers, work in a way that transcends the limits of math and logic — which is to say, the limits of computation?

As I mentioned, it is usually considered a category error to apply Gödel to real life (or any non-arithmetic system), but if our brains really are computational devices, then Gödel has to apply.

An alternate view sees the brain as an analog signal processing system (of mind-boggling complexity) not necessarily subject to computational limits.

§

To tie this together with recent posts, the difference here involves algorithms and virtual versus concrete reality.

The Turing limit obviously directly concerns algorithms, but it may not be obvious that Gödel does, too. The process of enumerating true statements is fundamentally algorithmic.

For that matter, mathematics is deeply algorithmic. (Algorithms are a form of mathematics, as demonstrated by lambda calculus. Formal logic, in general, is explicitly mathematical.)

What links math, logic, and algorithms, is that they are all abstractions.

They are all descriptions of real things.

And those descriptions, those abstractions, are subject to the informational limits proved by Gödel and Turing.

As I said, the deep question is whether a concrete physical object such as a brain reifies mathematical computational abstractions.

If it does, it’s the only object nature has ever produced that works that way.

Stay non-Gödelean, my friends!

∇

February 2nd, 2020 at 11:26 am

I have no great urge to unpack Searle’s GFR or to debate computationalism. If possible, I’d like to discuss the idea of Gödel juxtaposed with Searle’s Room.

It doesn’t actually resolve the issue, of course. Those who believe the brain is a “computer” can accept the GFR (the whole system) as conscious, and those who reject computationalism can accept the GFR as not conscious. Win-win. 😀

But does Gödel highlight the issue or not change anything at all?

February 2nd, 2020 at 12:02 pm

FTR: This post grew from speculations about implementing multiplication as a lookup process rather than the usual arithmetic process.

The latter is famously computationally intense because it requires m×n computations (where m and n are the number of digits in the two operands). Doing it with a lookup table reduces the number of operations considerably.
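For reference, here is a small sketch of the usual schoolbook method with a counter for the single-digit products, to show where the m×n figure comes from. The function name and the decimal-string interface are assumptions for the example.

```python
def schoolbook_multiply(a: str, b: str) -> tuple[int, int]:
    """Multiply two decimal digit strings.

    Returns (product, digit_products), where digit_products counts the
    single-digit multiplications performed.
    """
    xs = [int(ch) for ch in reversed(a)]  # least significant digit first
    ys = [int(ch) for ch in reversed(b)]
    result = [0] * (len(xs) + len(ys))
    digit_products = 0
    for i, x in enumerate(xs):
        carry = 0
        for j, y in enumerate(ys):
            digit_products += 1
            total = result[i + j] + x * y + carry
            result[i + j] = total % 10
            carry = total // 10
        result[i + len(ys)] += carry  # this slot is still empty at this point
    product = int("".join(str(d) for d in reversed(result)))
    return product, digit_products


product, count = schoolbook_multiply("123", "45")
```

A three-digit by two-digit multiplication performs six digit products, one for every pairing of digits.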

Thinking about that led to thinking about implementing math via lookup in general, and that led to thinking about Searle’s GFR doing mathematics.

And then Gödel stopped by to say hello…

February 2nd, 2020 at 12:07 pm

Isn’t this question at the heart of Penrose’s argument?

https://en.wikipedia.org/wiki/The_Emperor%27s_New_Mind

February 2nd, 2020 at 12:26 pm

Very much so. (That book was a key part of my reconsideration of computationalism, which up to then I’d mostly accepted as likely. (I was also influenced by Asimov and SF robots, in general! 🙂 ))

February 2nd, 2020 at 12:41 pm

Ha! And you just proved the point about someone having already plowed the ground! 😀

February 2nd, 2020 at 4:37 pm

I think Gödel does apply to the GFR, and to the brain, and any type of AI system. But it’s worth remembering what Gödel’s theorems are (in a rough English translation):

So, the system will always have processing that it itself can’t validate, and it will never be able to validate itself, with certitude.

The last point is important. As Turing himself pointed out, if the system can operate in terms of probabilistic knowledge, then there’s nothing stopping it from doing these validations probabilistically. Given its gappy, noise-riddled senses, probabilistic processing describes most of what the brain does, and most of what ANNs do.

So, if we can live with some fallibility in the GFR, then Gödel isn’t a showstopper. On the other hand, if we insist that it must be able to infallibly validate itself or every last bit of its own content, then it would be, but we’d be asking something of it we couldn’t expect from any other system.

February 2nd, 2020 at 8:24 pm

“I think Gödel does apply to the GFR, and to the brain, and any type of AI system.”

We can certainly agree on the first and third (assuming software AI).

Obviously I’m not so sure about the second (or AI implemented in a physically isomorphic analog system — the Positronic brain). Gödel applies to numeric (discrete) systems. I’m not sure it applies to, for example, an analog radio or sound system.

“As Turing himself pointed out, if the system can operate in terms of probabilistic knowledge, then there’s nothing stopping it from doing these validations probabilistically.”

There are a number of caveats to that, mainly to do with the difficulty of doing that with discrete deterministic analytic systems. It highlights the apparent resource difference between what physical systems accomplish naturally (therefore “easily” from our point of view) versus replicating that system analytically. Like an analytical approach to solving N-body problems versus how orbiting objects solve them by doing them.

I do agree that whatever brains do, it’s chaotic and noisy. Above all, it’s analog, which immediately raises analytic issues. (And it’s where we differ, since I’m not at all sure Gödel applies to analog systems.)

“So, if we can live with some fallibility in the GFR, then Gödel isn’t a showstopper.”

True, and I’ve argued before that we shouldn’t expect the GFR to deliver perfect answers. (Because humans don’t, and the presumption is the GFR stands in for a human.)

I do think the issue of mathematics in the GFR raises interesting issues with regard to a strict lookup approach versus the need for more complex algorithms. There are some interesting questions about how much is expected of the Librarian.

And, if nothing else, it emphasizes the challenge (if not impossibility) of a GFR capable of answering all reasonable questions via lookup. At the center of Gödel’s result is the in principle impossibility of enumerating all formulas (mathematical questions).

There is another possibility I think is cute:

Gödel’s result applies to consistent arithmetic systems. Granting for the moment our brains are arithmetic systems, what if they’re not consistent ones? What if, in all their complexity, consistency was sacrificed as not valuable?

Maybe being able to sometimes prove 1=2 is a survival trait. (Captain Kirk certainly seems to have demonstrated that.)

I’ve also argued in the past that, if software consciousness is possible, then I suspect it will require some randomness or uncertainty to function correctly. Just consider how a perfect memory is said to be debilitating. Getting over pain or trauma requires forgetting.

If computational minds are possible, it just might require some “sloppy” computation. 😀

February 3rd, 2020 at 1:17 pm

I’m not versed in the actual mathematics of Gödel, so my ability to discuss it intelligently is limited. I’m going off of the write-ups and comments of various mathematicians and AI experts.

I would note that both brains and computers have inherent limitations on how much they can model their own operations, limitations that likely swamp Gödelian limitations, so it may be that worrying about Gödel is sour grapes.

It’s also worth noting that artificial neural networks are largely probabilistic systems. But it is a good point that building a stochastic system on top of a system (hardware) engineered to remove indeterminacy does take more effort than if it’s incorporated from the beginning. Of course, doing so allows us to control exactly how much randomness actually makes it into the overall resulting system.

The big issue for digital systems, it seems to me, is their power consumption. A major advantage of analog systems is efficiency. It might be what eventually drives the industry in the direction of neuromorphic hardware.

February 3rd, 2020 at 2:34 pm

As you may know, Gödel’s Incompleteness result and Turing’s Halting result both use the same diagonalization technique Cantor invented to prove the reals are a larger set than the rationals (despite both being “infinite” in size).

Cantor showed it’s not possible to enumerate the reals (whereas we can enumerate the rationals). Turing used the idea to prove that the set of programs that halt cannot be enumerated, and Gödel used it to prove the set of true theorems cannot be enumerated.

What cannot be enumerated cannot be found (since a brute force way to find things is to enumerate all possibilities). So, ultimately, there’s no way to prove into what class program P, or statement S, falls.
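The diagonal move itself is easy to show in miniature. The sketch below is only a finite illustration of the idea, not a proof: given any listing of binary rows, it builds a row that differs from the i-th row at position i, so the new row cannot appear anywhere in the listing. The function name and sample rows are made up for the example.

```python
def diagonal_escape(rows: list[list[int]]) -> list[int]:
    """Build a binary row that differs from rows[i] at position i.

    Assumes every row is at least as long as the number of rows.
    """
    return [1 - rows[i][i] for i in range(len(rows))]


# A hypothetical attempt at a "complete" listing of 4-bit rows.
listing = [
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 1, 0, 1],
    [1, 0, 1, 0],
]
escaped = diagonal_escape(listing)
```

Whatever rows are supplied, the constructed row disagrees with each of them in at least one place. Cantor applied the same move to infinite listings.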

With Gödel, the first theorem is the key result. The second one refines this to say that any system capable of proving itself consistent cannot actually be consistent. Not only can a consistent arithmetic system not prove all truths, it can’t even prove itself consistent.

Kind of the ultimate mathematical Heisenberg. 🙂

“…so it may be that worrying about Gödel is sour grapes.”

I tend to agree. This post is specifically about how Gödel might apply to Searle’s GFR or, by extension, how it might apply to a lookup-based system in general. And it isn’t really about approaching Gödelean limits so much as the difficulty of a lookup system doing math.

(This post sprang from ruminations about implementing multiplication via lookup for my arbitrary precision binary fraction code. Multiplication is a Cartesian product and computationally intense. Lookup makes it more of an O(n) operation. But as a general (i.e. non-binary) approach, it seriously explodes in size requirements. A common tradeoff is time versus size, and lookup requires size. Which got me thinking about the GFR doing math.)

((Talk about size: The technique I’m using requires a 256×256 lookup table with each cell requiring two bytes. The table is a byte multiply table. Requires 128K of memory. Not a huge deal these days, but my “16K used to be all the memory we had” soul still cringes.))
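A rough sketch of what such a byte multiply table might look like, mainly to check the size arithmetic. The flat layout, the names, and the little-endian helper are assumptions for illustration and not the code described in the comment.

```python
from array import array

# 256 x 256 products stored flat as unsigned 16-bit values.
# Index layout (an assumption for this sketch): (a << 8) | b
BYTE_PRODUCTS = array("H", (a * b for a in range(256) for b in range(256)))


def multiply_bytes(a: int, b: int) -> int:
    """Look up the product of two byte values instead of computing it."""
    return BYTE_PRODUCTS[(a << 8) | b]


def multiply_le(xs: bytes, ys: bytes) -> int:
    """Multiply two little-endian byte strings using only table lookups
    for the byte-by-byte products (shifts and adds combine the pieces)."""
    total = 0
    for i, x in enumerate(xs):
        for j, y in enumerate(ys):
            total += multiply_bytes(x, y) << (8 * (i + j))
    return total


cells = len(BYTE_PRODUCTS)
result = multiply_le((1000).to_bytes(2, "little"), (5000).to_bytes(2, "little"))
```

At two bytes per cell, 65,536 cells come to 131,072 bytes, which matches the 128K figure. The largest entry, 255 × 255 = 65,025, just fits in 16 bits.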

Anyway, what this all may demonstrate is why earlier AI methods failed. They were essentially lookup-based. Or rule-based, which amounts to the same thing. This proved too limited. It’s Gödel in the small — the difficulty of listing all possible rules ahead of time.

Now we train systems on “real world” data and let them learn. Which works, but tends to make our AI systems inscrutable like bio-intelligence. (Perhaps intelligence will always be a little inscrutable and irreducible. Maybe it’s too holistic to ever be fully deconstructed. And it’s in those limits that Gödel and Turing rear their heads.)

“It’s also worth noting that artificial neural networks are largely probabilistic systems.”

You’re referring to their outputs? Yeah, in many forms they’re little more than holographic search engines. New input creates a point in their phase space that has some probability of belonging to a known class. We do something similar when we’re not sure what we’re seeing or hearing.

“The big issue for digital systems, it seems to me, is their power consumption.”

That’s a direct consequence of the deeper issue that analytic systems work differently from physical systems. In the latter, the “computing” is done at the quantum (or possibly atomic) level. In the former, much larger systems have to account for it. (In addition to getting the simulation correct in the first place.)

So far we lack the computing power to fully simulate even a single proton, so we have a ways to go when it comes to that level of simulation.

“A major advantage of analog systems is efficiency. It might be what eventually drives the industry in the direction of neuromorphic hardware.”

I know we disagree on this, but the other major difference there is structure. The thing about neuromorphic hardware (or just neural nets, for that matter) is they replicate structure.

And as you know, I think that massive parallel asynchronous network is crucial. IIT is right about that much (if nothing else 😀 ).

February 3rd, 2020 at 6:40 pm

I actually didn’t know that about how Gödel reached his conclusion. Interesting. Thanks!

In terms of analog computing, I’ve been reading ‘The Principles of Neural Design’ by Peter Sterling and Simon Laughlin. They drop down to the protein and molecular level, describing protein circuits and analyzing them in terms of Shannon information theory. It requires an understanding of organic chemistry, but they describe proteins as finite state machines.

(Proteins are fascinating. Cells may be the basic unit of life, but proteins seem like the basic unit of functionality (although RNA has its tricks too). They often operate near the thermodynamic limit. People are working to develop nanorobotics, but life got there billions of years ago.)

On structure, as I’ve noted before, we’re not really all that far apart. While I think alternate structures can in principle perform the same function, performance and power efficiency may require structures similar to the brain, although technological solutions may present different optimization points in the balance between speed, parallelism, and power efficiency.

February 3rd, 2020 at 9:37 pm

Gödel, Turing, Cantor, Heisenberg, and Planck, all made important statements about fundamental ontological limits. I find it comforting that reality can’t ever be fully known. 🙂

“…they describe proteins as finite state machines.”

Yeah, at that level, things get discrete and it’s hard not to see them as little machines. I, too, have long been fascinated by RNA, DNA, and proteins (although biology and chemistry aren’t sciences I’ve particularly pursued).

They make an interesting argument for the VR hypothesis. DNA is digital data. If one were designing a VR with “living” things capable of creating new “living” things, one might very well use an architecture where design data is combined in a random way to create a similar but unique pattern.

Not to mention the interesting question about how such a system even got started.

“On structure, as I’ve noted before, we’re not really all that far apart.”

😀 For the last couple of years I’ve been lulled into thinking that, but recently realizing you do maintain a commitment to what I think of as “strong” computationalism shows there’s a bit of daylight between us. (The strong form asserts the brain is a computer and the mind an algorithm.)

However:

“While I think alternate structures can in principle perform the same function,”

I call that “weak” computationalism — the idea that a software model can accomplish the same result as what it models. I’m skeptical but see it as possible, so there’s a lot less daylight there.

February 4th, 2020 at 7:41 am

“I find it comforting that reality can’t ever be fully known.”

What about that do you find comforting?

Personally, I don’t, and hope there’s always someone probing the boundaries. There have been too many “we’ll never know” type statements in history that eventually turned out to be wrong for me to be comfortable with any form of mysterianism.

If by “weak” computationalism you mean an understanding that the nervous system isn’t doing computation, then that’s definitely not me. I think computation is the right way to think about what it does. My alternate structures comment was just referring to different ways of doing that computation.

February 4th, 2020 at 9:24 am

“What about that do you find comforting?”

I prefer a reality with a bit of ineffable mystery. I think living in a clockwork reality where everything can potentially be known and understood is boring. Like a joke for which you know the punchline. Or a mystery novel where you know who done it.

Back in college, a teacher told us that Eastern storytelling, especially Japanese storytelling, is far more open-ended than Western storytelling. Western storytelling has endings that resolve things, and leans toward happy endings (unless telling morality parables). Eastern storytelling is more prone to leave things unresolved. (And less prone to happy endings. Hero and Ju-On are good examples.)

The teacher mentioned that many westerners aren’t comfortable with unresolved stories (one more inscrutable thing about the east). I realized then that I am. I’d learned about unreliable narrators by then and found that idea fascinating. Back in college I also studied filmmakers such as Nicolas Roeg who have the storytelling philosophy: ‘You don’t always know what’s going on in life, so you won’t always know what’s going on in my movies.’ (The Man Who Fell to Earth is a good example. Very confusing movie. For that matter, so is Kubrick’s 2001.)

Bottom line, mystery, mystique, even mysticism… all add a bit of spice. When, and if, science ever demonstrated reality was more clockwork, I’d have to give up these possibly romantic ideas, but the jury is still out, and Heisenberg and friends seem to have already demonstrated that reality is not clockwork and we can’t know everything.

“I don’t, and hope there’s always someone probing the boundaries.”

Absolutely. I hope humans always do probe the boundaries. Curiosity is vital.

“There have been too many ‘we’ll never know’ type statements in history that eventually turned out to be wrong for me to be comfortable with any form of mysterianism.”

I distinguish between scientific findings that say certain things are out of reach per physics versus areas where we have no such understanding. (In some areas we have no understanding at all!)

There’s nothing wrong with, for example, exploring ways around the speed of light, but the understanding has to be that current physics says it’s impossible even in principle. One is always free to say, “Yeah, but… maybe not?”

Put it this way: I see a difference between “We’ll never know,” and “We know we can’t know.” The former just speaks to ignorance, and I agree it’s often proved wrong.

“If by ‘weak’ computationalism you mean an understanding that the nervous system isn’t doing computation”

With the caveat that by “computation” we’re talking about something a TM can do, then yes. Weak computationalism is a commitment to software simulations resulting in consciousness without a commitment to how the brain produces it.

Weak computationalism would place a brain simulation on the same level as a heart simulation or a tree simulation.

Strong computationalism is the commitment that:

“I think computation is the right way to think about what [the brain] does.”

Understood. 😉

My attempts to get you to justify that belief usually end the discussion. The perception I’m left with is that it’s axiomatic for you based on circumstantial perceptions (such as equating neurons with logic gates or making an analogy with computer memory).

As I mentioned, I had a belief that, after years of discussion, we’d met in the middle on weak computationalism. You’d said things along the lines of the brain not being a TM or not being a “conventional” computer, which seemed to acknowledge the brain is not a computer. But a few months ago you said something that made me realize you’re still committed to the strong form, which means all those years of conversation were not at all persuasive to you, and I find that disappointing.

“My alternate structures comment was just referring to different ways of doing that computation.”

Yeah, I picked up on that. 🙂 That’s why I said there actually is a bit of daylight between us on the topic.

February 4th, 2020 at 11:31 am

“My attempts to get you to justify that belief usually end the discussion.”

Wyrd, you’ve seen the reasons for my position many times, as the reference you make to them shows. The nature of that reference highlights the reason I usually move on. My perception is that this is something you are intensely passionate about, and my experience is that it’s too easy for things to become heated.

You’re right, there is daylight between us, and we probably should just accept that. And I admit I was complicit in veering into this subject above. Sorry! My bad. 🙂

February 4th, 2020 at 1:14 pm

In my view, there really isn’t any reason we can’t debate this. I can only assure you I’m not someone who gets “intensely passionate” about intellectual issues. All I’m intending to offer is effective debate. (However, many have accused me of “judo” or other martial arts. What can I say, I’m a master debater. 😉 )

FWIW, my take on this is that I’ve offered strong counter-arguments to those things you’ve offered as reasons for your view. It seems you deny the validity of those arguments, but while I can cite your views, I cannot cite why you deny my arguments. My perception is the debate never gets that far.

But, yeah, I pretty much gave up when I realized you were still committed to strong computationalism and nothing I’d ever said was persuasive. Given our common interests, though, it’s inevitable it’ll come up now and again.

At least I’m not confused about your position. 😀

February 4th, 2020 at 7:59 am

“I find it comforting that reality can’t ever be fully known.”

Or could reality be whatever we do know?

More weakly, the relationship between knowledge and reality is complicated like the sound of the tree falling in the forest that nobody hears.

February 4th, 2020 at 9:49 am

“Or could reality be whatever we do know?”

Haven’t we done idealism already? 😀

In a sense, of course that’s true, but I distinguish between reality (which is external to me) and my model of it. I don’t label that model “reality” though — I know it’s too prone to illusion and error. It’s reality seen through a dark fractured glass. (See also: Plato’s Cave.)

It’s my reality, but it’s not the reality.

“More weakly, the relationship between knowledge and reality is complicated…”

Indeed (and hence epistemology)!

“…like the sound of the tree falling in the forest that nobody hears.”

I always thought that one was definitional. It just depends on what you mean by “sound” — physical vibrations or what those vibrations engender in something that “hears” them.

February 4th, 2020 at 9:56 am

Like I said, the relationship is complicated. That there is a reality external to you is part of your model of reality.

February 4th, 2020 at 10:03 am

It’s something I take as axiomatic, certainly, but if I don’t, I’m stuck with solipsism.

February 4th, 2020 at 10:29 am

Life is much easier with assumptions, isn’t it?

February 4th, 2020 at 10:35 am

Put that way it sounds a little pejorative. I’d say life is impossible without some axioms. Any judgements would be on what axioms one chooses.

February 4th, 2020 at 11:32 am

My axiom is that we can’t know reality. We only know a representation of reality.

February 4th, 2020 at 11:46 am

And you form no axioms about reality? (You do seem to admit it exists.)
