This is the third post of a series exploring the duality I perceive in digital computation systems. In the first post I introduced the “mind stacks” — two parallel hierarchies of levels, one leading up to the human brain and mind, the other leading up to a digital computer and a putative computation of mind.

In the second post I began to explore in detail the level of the second stack, labeled Computer, in terms of the causal gap between the physical hardware and the abstract software. This gap, or dualism, is in sharp contrast to other physical systems that can, under a broad definition of “computation,” be said to compute something.

In this post I’ll continue, and hopefully finish, that exploration.

I’ll mention that, in this series, I am not particularly arguing for or against computationalism — the notion that a conscious mind can be either computed or simulated by a digital computer. I am focused mainly on the difference between digital and analog computation.

That said, I am skeptical of computationalism, and part of the reason does involve the difference I perceive between digital and analog systems. Nevertheless, nothing here can be said, on its own, to rule out some form of computationalism. The truth is that we don’t understand how mind emerges from brain function well enough to know either way.

So without further ado, let’s jump into the gap…

§ §

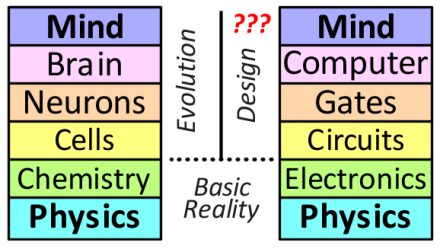

For reference, here again are the mind stacks:

They are meant to illustrate different “zoom levels” of brains and computers. See the first post for an explanation of these levels. I originally created the diagram for discussions about computationalism, but in these posts I use it to contrast two different kinds of systems: physical (analog) and digital (numeric).

In this case, the term analog is a bit misleading. I use it here as a shorthand in contrast to digital — which itself is shorthand for digital computer. The more accurate terms would be physical versus numeric computation, but the former is also a bit misleading because all computation is physically implemented. Another contrast might be physical versus abstract, but now both terms seem too broad.

Bottom line, here analog means any system that falls under the large umbrella of computation but which isn’t digital. The latter term here refers to what computer science defines as (and most people mean by) “computer” — essentially a numeric calculator; something that merely does math.

Also recall that in the second post I replaced the Mind level in the right stack with Tetris (or any other actual software). The focus here is on digital computing and, in particular, its dual nature.

§

In both stacks, with one exception that is the point of these posts, there is a clear causal relationship between levels. Each physically depends on the level beneath. An implicit assumption is that an upper level is predicted by a lower level.

For instance, Chemistry depends on Physics — so does Electronics. In turn, Cells and Circuits depend on the levels below them, and likewise Neurons and (logic) Gates. Finally we have the complete systems of Brain and Computer.

As an aside, in both cases there is a bit more to the picture. Brains also depend on blood vessels and other aspects of biology. Likewise, computers depend on power supplies and other aspects of electronic systems. In the context of this discussion, these can be ignored.

The one exception is what happens in the Computer level.

§

An important point here is that, unless one subscribes to some form of brain/mind dualism (a view that mind is more than the sum of brain functions), Mind also is a direct consequence of Brain.

In fact, a major goal of neuroscience is determining the correlations between brain function and mind. The presumption is that a direct correspondence between the physical activity of the brain and autobiographical conscious mind does exist.

In contrast, the physical operation of a computer only predicts the behavior of the hardware itself. A digital computer can run any software made for that system, and that software may be simple or complex, effective or broken. It might cause the computer to model a Tetris game, a web browser, or a spreadsheet. Or it might put the computer into a useless loop requiring a reboot.
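As a rough illustration of that point, here is a toy sketch (not a model of any real CPU). The `run` function stands in for the fixed hardware, and the instruction names `add` and `mul` are invented for this example. Reading `run` tells you how instructions get executed, but nothing about what any particular program will compute.

```python
def run(program, value):
    """A fixed 'machine': it steps through whatever instruction list it is given."""
    for op, arg in program:
        if op == "add":
            value += arg
        elif op == "mul":
            value *= arg
        else:
            raise ValueError(f"unknown instruction: {op}")
    return value


# Two different "software" loads for the same unchanged machine.
double_then_inc = [("mul", 2), ("add", 1)]
inc_then_double = [("add", 1), ("mul", 2)]

print(run(double_then_inc, 5))  # 11
print(run(inc_then_double, 5))  # 12
```

The machine is identical in both calls; only the list of numbers and symbols fed to it differs, and that list is where the behavior lives.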

Contrast that with any analog system, be it a radio, a motor, or a hydraulic lift. An analysis of such systems tells us exactly what they do at their highest level. We can, in most cases rather easily, predict what such systems do.

A digital computer is just an electronic abacus. It manipulates numbers. Crucially, those numbers have no intrinsic meaning; they are entirely arbitrary. Any meaning comes from the intent of the software designer; meaning is external to the system.
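A small sketch may help show what "no intrinsic meaning" looks like in practice. The four byte values below are arbitrary choices for this example. The same bit pattern reads as a large integer, as the number 42.0, or as two text characters, depending entirely on the interpretation the programmer picks.

```python
import struct

# Four arbitrary bytes; the bit pattern itself carries no meaning.
raw = bytes([0x42, 0x28, 0x00, 0x00])

as_int = struct.unpack(">I", raw)[0]    # read as a big-endian unsigned 32-bit integer
as_float = struct.unpack(">f", raw)[0]  # read as a big-endian IEEE 754 single-precision float
as_text = raw[:2].decode("ascii")       # read the first two bytes as ASCII characters

print(as_int)    # 1109917696
print(as_float)  # 42.0
print(as_text)   # B(
```

Nothing in the hardware prefers one of these readings over the others; the choice sits in the software and, ultimately, with its designer.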

That is the digital dualism.

§ §

I mentioned above that analog can be a misleading term when used in contrast to digital computation and that the more accurate terminology is physical in contrast to numeric or abstract.

We often associate digital with discrete and analog with continuous. Certainly the term digital, which basically means finger, does imply discrete, and digital computers are discrete devices in their operation. In fact, most such devices use binary numbers, which is about as discrete as things get.

But physical digital computers, electronic or otherwise, in the context of these posts, have analog hardware. It’s only their mode of operation — that dual second layer — that’s actually discrete.

Consider an abacus. The stones slide up and down on rails and while being moved occupy all positions along the rail. The physical abacus is an analog system, but the stones only have meaning when either all the way up or all the way down.

Leaving a stone in the middle, which is physically possible, makes its meaning undetermined in terms of the calculation being performed on the abacus.

Likewise, electronic digital computers use components that, as do all physical systems, operate in a fundamentally analog fashion. Such devices are designed in a way that, metaphorically, ensures the “stone” is either all the way up or all the way down. The transistors of a computer, like the stones of an abacus, do occupy all the in-between states, but only very rapidly while shifting from one state to the other.
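The idea can be sketched as a threshold rule applied to a continuous quantity. The function name and the two threshold voltages below are illustrative assumptions, not taken from any particular device. A voltage near the bottom counts as 0, a voltage near the top counts as 1, and anything in between is the "stone left in the middle" with no defined meaning.

```python
def read_logic_level(voltage, low_max=0.8, high_min=2.0):
    """Map a continuous voltage to a discrete bit, or None if it falls in between.

    The thresholds are illustrative assumptions for this sketch.
    """
    if voltage <= low_max:
        return 0
    if voltage >= high_min:
        return 1
    return None  # in transition: physically real, but meaningless to the calculation


print(read_logic_level(0.2))  # 0
print(read_logic_level(3.3))  # 1
print(read_logic_level(1.4))  # None
```

The physical signal passes through every value on its way from low to high; the discreteness belongs to the reading rule, not to the electrons.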

So the physical digital computer, be it pen and paper, an abacus, or an electronic device, is an analog system, but its operation — its manipulation of numbers — is discrete.

That, again, is the digital dualism.

§ §

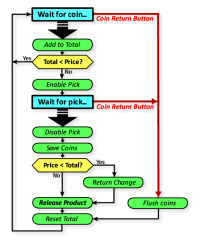

I want to contrast digital computers with analog systems that not only are discrete in operation, but which can be described by an algorithm. One good example is an old-fashioned vending machine.

I say “old-fashioned” because modern vending machines are likely to be controlled by a specially programmed embedded digital computer that implements the vending algorithm.

The point here is that a vending machine has states and moves from state to state based on inputs from the user of the machine, which is why there is an algorithm that describes the operation.

As such, it’s legitimate to see it as a computer — even as a digital computer due to its discrete states.

But as just discussed, the actual operation of the machine is physical and — during transitions between states — decidedly analog.

More to the point, there isn’t the pronounced dualism of digital systems. Examining the machine tells us exactly what it does. There is no separation between an abstract manipulation of numbers and the physical meaning of the system. The machine is what it does.

Further, the algorithm is directly implemented by the hardware. There is no list of instructions being executed by a CPU. There is no ability for the machine to run a different algorithm.
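For readers who like to see the algorithm written down, here is a minimal sketch of one that could describe such a machine. The states, inputs, and 75-cent price are all hypothetical choices for this example. In a mechanical vending machine this table would not exist as data anywhere; it would be the arrangement of levers, cams, and coin chutes.

```python
# Hypothetical machine: accepts quarters, item costs 75 cents.
# Each entry maps (current state, user input) to (next state, output).
TRANSITIONS = {
    ("0c", "quarter"): ("25c", None),
    ("25c", "quarter"): ("50c", None),
    ("50c", "quarter"): ("0c", "dispense item"),
    ("0c", "refund"): ("0c", None),
    ("25c", "refund"): ("0c", "return 25c"),
    ("50c", "refund"): ("0c", "return 50c"),
}


def operate(inputs, state="0c"):
    """Walk the state table for a sequence of user inputs; collect any outputs."""
    outputs = []
    for user_input in inputs:
        state, output = TRANSITIONS[(state, user_input)]
        if output is not None:
            outputs.append(output)
    return state, outputs


print(operate(["quarter", "quarter", "quarter"]))  # ('0c', ['dispense item'])
print(operate(["quarter", "refund", "quarter"]))   # ('25c', ['return 25c'])
```

Note the irony: writing the table as code already places it in the dual layer of a digital computer, where it could be swapped for any other table. The physical machine has no such option.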

There is no real dualism in any physical system. Not even in digital computer hardware itself. The dualism comes from the abstract numerical calculations that derive meaning externally. Only digital computation, regardless of physical implementation, has this property.

§ §

To wrap this up, a point about power or energy. There is the suggestion that power is an aspect of this, and I’ve realized it’s actually another aspect of the duality of digital systems.

The power of any system is due to its physical dynamics. There are cases of both digital and analog systems where a given system “computes” the same result but with widely different power levels depending on the physical implementation.

Digital computers based on tubes or relays require considerable power, but equivalent systems using CMOS require much less. Our phones and pads run on batteries. Yet all can accomplish the same computations.

On the analog side, a crystal radio uses only the power of the RF signal, but a tube-based superhet (superheterodyne) version uses considerably more power to do the same thing.

So power is a function of how the physical system is implemented, but digital systems are still the only ones with a dual layer. Per the Church-Turing thesis, the abstract layer is fully decoupled and has nothing to do with the power used by the system.

Yet, because analog systems are concrete, those that require power cannot accomplish their task without it. An engine hoist, for instance, cannot work without sufficient power. Neither can a tube-based digital computer.

§ §

Lastly, a point has been raised about “decision points” but I don’t have clarity on exactly what the relevance or application is.

Certainly any algorithm — or any physical device that can be described by an algorithm (such as a vending machine) — can have decision points. Most do, especially ones of any complexity.

It strikes me that physical systems don’t themselves have decision points. Physics is deterministic — a physical system operates according to physical laws — there are no decisions involved.

But consider the vending machine. While its physics are determined, the abstraction of its operation — its algorithm or flowchart — does have decision points (canonically, the IF-THEN-ELSE construct of digital computer programs).

This does seem to blur the line between physical and digital and deserves further consideration. One difference I think I see is that a vending machine is described by an algorithm, whereas in digital systems the algorithm, including its IF-THEN-ELSE constructs, is the operational dual layer.

At the very least, digital systems are characterized by having massively more such decision points than any analog system. Many, even most, analog systems (radios, for instance) don’t really have any to speak of. Vending machines, and others with more discrete operation, are the exceptions, but they pale in comparison to digital computers.

§ §

This, I think, does end this series exploring the dualism of digital computers.

Down the road I may explore some aspects of pancomputationalism that I think have some relevance. There are some intriguing points and nuances with regard to digital computation.

Stay uncomputable, my friends! Go forth and spread beauty and light.

∇

April 8th, 2021 at 6:27 pm

A thought while reading…

Currently, the AGIs that are being built are creating neural networks that cannot be understood by humans. They are so vast and interconnected that we would need another software program to try and deduce their construction.

Then, I thought, what if we took biological constructs, neurons, and built up a simple bio-circuit and triggered computations using them. We could build up to a certain point and fully understand how those neurons were interacting to produce the output.

Then we build a dynamic, self-assembling neural engine that constructed interconnected computation nodes from cells so complex that we would not be able to comprehend them.

We attach a voice to both the comp-bot and the bio-bot and ask them, “Are you conscious?” What might we say about their responses?

April 8th, 2021 at 6:35 pm

And this just flowed through my inbox: https://www.axios.com/newsletters/axios-science-3a36b6ee-60bb-486e-a847-b64e579454e8.html

April 8th, 2021 at 8:01 pm

Okay. To be honest, I don’t get much bang from articles with headlines containing the words “might” or “could” or “in the future” — I have a hard enough time wrapping my head around what is!

April 8th, 2021 at 7:58 pm

I’m sorry, but I’m not quite following. I do understand that ANNs of even just a few hundred nodes do things we don’t fully understand. I’m not clear on why a biological version would be more understandable. (There is research involving neuromorphic components that more closely resemble human neurons, but I don’t know how closely they mimic the behavior.)

How would a self-assembling system work? Purely through software reconfiguration, or would it have the ability to physically configure itself?

One consideration is that, as far as I know (and, admittedly, it’s not a field I’m watching closely), our ANNs have, at best, on the order of hundreds of nodes, which is a far cry from the 100-billion neurons in a human brain. Being able to ask questions like, “Are you conscious?” is very far off in the future, so who knows what either network will answer!

April 9th, 2021 at 9:53 am

I suppose I was trying to point out the opacity of current advanced NNs and how such systems, multiplied by orders of magnitude, will mimic the brain.

I tend to believe that consciousness is merely a numbers game, that we romanticize our ability of mind. We may not have long to wait for a simulated mind…

Quote:

GPT-3 has generated a lot of discussion on Hacker News. One comment I found particularly intriguing compares human brain with where we are with the language models: A typical human brain has over 100 trillion synapses, which is another three orders of magnitudes larger than the GPT-3 175B model. Given it takes OpenAI just about a year and a quarter to increase their GPT model capacity by two orders of magnitude from 1.5B to 175B, having models with trillions of weight suddenly looks promising.

If GPT-2 was “too dangerous to release,” and GPT-3 almost passed the Turing test for writing short articles. What can a trillion parameter model do? For years the research community has been searching for chatbots that “just works,” could GPT-3 be the breakthrough? Is it really possible to have a massive pre-trained model, so any downstream tasks become a matter of providing a few examples or descriptions in the prompt? At a broader scale, can this “data compilation + reprogram” paradigm ultimately lead us to AGI? AI safety needs to go a long way to prevent techniques like these from being misused, but it seems the day of having truly intelligent conversations with robots is just at the horizon.

Unquote

https://lambdalabs.com/blog/demystifying-gpt-3/

April 9th, 2021 at 12:03 pm

Okay, I follow. One thing I’ve thought ever since I read Penrose’s The Emperor’s New Mind is that, eventually, we will build a machine that either simulates or replicates the human brain. We’ll turn it on and then we’ll see.

That said, I’m not sure we’ll live to see it; I don’t think it’s “just at the horizon” but quite some time off. As I understand it, even something as advanced as GPT-3 is still pretty stupid and that such systems are fooled in ways that wouldn’t give a two-year-old pause. (There’s been a lot of research into how easily ANNs are thrown off by adversarial inputs.)

ANNs are indeed very powerful in certain domains — they are essentially very powerful search engines that use a vastly multi-dimensional configuration space. I’m just not sure that actually leads to AGI, because I suspect there’s a lot more to it than that.

But we’re all just guessing at this point. The proof will be in the pudding!

April 8th, 2021 at 8:06 pm

I will say that my guess is that a system based on signal processing (similar to how the brain works) stands a better chance of working than one that simulates behavior through numerical modeling. As I understand it, unless using neuromorphic chips (which I don’t know much about), the ANNs currently in use are using numerical models that run on digital computers.

I am fairly skeptical of these being able to produce consciousness, and it’s also possible the computational resources are too intense for them to scale up to the size probably needed. My understanding is that neuromorphic chips are a possible way to accomplish the goal by more closely replicating neuron function.
