The Age of Fire is a key milestone for a would-be technological civilization. Fire is a dividing line, a technology that made us far more effective. Fire provides heat, light, cooking, defense, fire-hardened wood and clay, and eventually metallurgy.

The Age of the Electron is another key technological milestone. Electricity provides heat and light without fire’s dangers and difficulties, it drives motors, and enables long-distance communication. It leads to an incredible array of technologies.

The Age of the Algorithm is just as much of a game-changer.

A primary characteristic of these Ages is the sweeping technological change involved, the broad range of new technologies that arise from a single new tool.

The three I mentioned don’t form an exclusive list by any stretch. We might, for instance, equally consider the Age of the Plow or the Age of Human Flight. I mention the first two only as crucial stepping stones to the third.

Algorithms enable computation, and to some extent what I’m calling the Age of the Algorithm is more properly called the Age of the Computer. But a computer without an algorithm is just a boat anchor, and the focus here is on algorithms.

That said, Age of the Computer is the more appropriate name, since the true dividing line is the electronic machines that perform complex algorithms at high speed and with complete accuracy. In reality, the idea of an algorithm predates computers considerably. An algorithm is simply a set of instructions for doing something (long division, for example).
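To make "a set of instructions" concrete, here is a minimal sketch of schoolbook long division in Python. The function name `long_division` and the restriction to non-negative whole numbers are assumptions made for illustration; the point is only that the same steps work on paper, with no machine involved.

```python
def long_division(dividend: int, divisor: int) -> tuple[int, int]:
    """Schoolbook long division, one digit at a time. Returns (quotient, remainder)."""
    if dividend < 0 or divisor <= 0:
        raise ValueError("this sketch handles dividend >= 0 and divisor > 0 only")

    quotient_digits = []
    remainder = 0
    for digit in str(dividend):
        # "Bring down" the next digit of the dividend.
        remainder = remainder * 10 + int(digit)
        # Count how many times the divisor fits, by repeated subtraction.
        count = 0
        while remainder >= divisor:
            remainder -= divisor
            count += 1
        quotient_digits.append(str(count))

    return int("".join(quotient_digits)), remainder


print(long_division(1234, 7))  # (176, 2), since 7 * 176 + 2 == 1234
```

Nothing in those steps depends on electronics. A computer simply runs through them faster and without slips.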

So while it really is the electronic calculators that have changed the game, without algorithms they’re just metal, plastic, and sand. Besides, Age of the Algorithm appeals to my alliterative appreciation.

§

Two important phrases from the above are “high speed” and “complete accuracy” — these are the real game-changers.

A “computer” used to mean a person who did math — presumably someone who was well-trained and good at it, because it’s easy to make mistakes doing math by hand. The very first computing machines were purpose-built to generate accurate tables for logarithms, trigonometry (sines and cosines), and navigation.

General purpose computing, and the programming languages that enabled it, catapulted these systems into the stratosphere. Our ability to compute complex and elaborate functions, accurately and quickly, is a step into a world as new as the one with electric lights or the one with fire.

As the computing industry matured, another important attribute changed the game: the small size of calculating devices. Digital watches became a thing. Now we carry extremely powerful computers around in our pockets (along with large digital libraries, video cameras, display screens, online access, GPS, and advanced communications).

Quite some distance from the first human-controlled fire.

§ §

Back in 2019 I wrote a series of posts about virtual reality. (I’ve written even more extensively about algorithms.) Then 2020 derailed so many things, and that series has been sitting on a siding ever since.

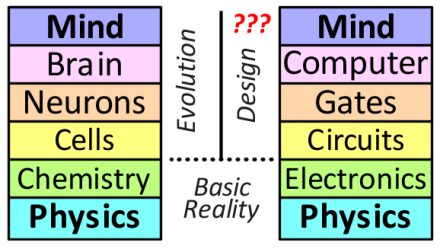

Picking up the thread, a lot centers on this diagram:

Which seeks to compare levels of organization of two implementations of consciousness — one we know exists and one we can currently only speculate about.

I’m referring to the box in the upper right, the one labeled “Mind” — we have no idea whether an algorithm can produce a mind. Put another way, we have no idea if our minds are the consequence of an algorithmic process. The question is one of the more contentious ones among those who study consciousness.

For the record, I’m in the Very Skeptical camp. I’ll need to see it to believe it. Currently I file the idea as non-physical (and possibly wishful thinking).

At issue here is what I perceive as a causal disconnect — because algorithms — in the Computer level of the right-hand stack. Not everyone agrees there is such a disconnect.

§

A complicating factor is that we don’t understand exactly how the left-hand stack produces a mind. There are many theories, and even stronger beliefs, but so far no one actually knows.

That lack of knowledge makes it challenging to determine whether a computation can result in a mind. When it comes to computers, there are many things we can simulate, but also many things we cannot. A way it’s often put is that, ‘no matter how good a water simulation is, it still won’t get you wet.’

(I have frequently drawn a parallel with lasers, which we can simulate with great precision, but no such simulation produces laser light. The key question is whether mind emerging from the brain is like laser light emerging from a lasing material.)

§

I have a sense this will take multiple posts to cover, so here I’d like to focus on the diagram and explain the two stacks and their organization.

The idea is that each layer of the two stacks has a perceived correlation with the matching layer in the other stack. The left stack organizes a biological being into a hierarchy of levels; the right stack does the same with a computer. Each level represents the whole, but looked at with differing levels of detail.

Each layer emerges from, and supervenes on, the layers below it.

At the very bottom, basic physics, which includes quantum and classical physics. At this level, there is very little difference between the two — physics is physics. I suspect this is where the conflation begins, with the perception that everything is just physics.

True, but using physics to simulate something is different from using physics to build something. (Again, simulating water versus getting wet.)

§

The second layer, still within the realm of basic physical reality, is what we might think of as the higher level physics necessary for any biology or any electronic device. Chemistry has always been with us. Electronics came into being in the Age of the Electron.

[As an aside to those who hold that quantum mechanics plays no role in the brain, or in biology or botany in general, we often forget that chemistry is quantum. We’re just so used to chemistry that we don’t think of it that way anymore.]

The third layer starts to differentiate basic physics into the building blocks for building brains and computers, but note that all biology uses cells, and all electronic devices use circuits. Nothing in this third layer really speaks to brains or computers.

§

The fourth layer, however, does. Neurons are a specific kind of cell, key to making a brain. Logic gates, likewise, are a specific kind of circuit, key to building a computer.

This layer is the source of considerable contention. Many see them as comparable — that neurons are just a kind of logic gate.

I couldn’t disagree more. (I’ve argued extensively against the view.)

To me, conflating them is like conflating classical computing bits with quantum computing qubits. They are alike only in the vaguest sense. Neurons are as far beyond logic gates as qubits are beyond bits.

If one insists on comparing the brain to a computer, a far better parallel would be to see each neuron as a computational device. The brain, then, is a massively linked network of such computational devices. (I don’t have a reference, but I recently saw someone arguing that the brain might be comparable to the entire internet.)

The problem is that neurons, while they do have an “on” and “off” state (firing or not firing), seem to carry considerable analog information in the pulse timing and duty cycle of the “on” state. I’ve even seen claims that the pulse edges — their rise and fall timing — may carry information.
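A toy sketch may help show what gets lost when a pulse train is reduced to "on" or "off". This is not a neuron model. The `Pulse` class, the helper functions, and both pulse trains are hypothetical, invented only to show that two signals can look identical to a gate-like view while differing in duty cycle and timing.

```python
from dataclasses import dataclass


@dataclass
class Pulse:
    start: float  # onset time, in seconds
    end: float    # offset time, in seconds


def ever_fired(pulses):
    """The gate-like view: a single bit saying whether the unit fired at all."""
    return len(pulses) > 0


def duty_cycle(pulses, window):
    """Fraction of the observation window spent in the 'on' state."""
    return sum(p.end - p.start for p in pulses) / window


def mean_interval(pulses):
    """Average time between successive pulse onsets (needs at least two pulses)."""
    starts = [p.start for p in pulses]
    gaps = [later - earlier for earlier, later in zip(starts, starts[1:])]
    return sum(gaps) / len(gaps)


# Two made-up pulse trains observed over the same one-second window.
train_a = [Pulse(0.0, 0.1), Pulse(0.5, 0.6)]
train_b = [Pulse(0.0, 0.2), Pulse(0.25, 0.45), Pulse(0.5, 0.7)]

for name, train in (("A", train_a), ("B", train_b)):
    print(name, ever_fired(train), duty_cycle(train, 1.0), mean_interval(train))
```

Both trains report the same single bit, yet their duty cycles and onset intervals are quite different. If that continuous detail carries information, a one-bit summary throws it away.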

One is, I think, far better off to view the neurons as analog signal processing nodes (or complex computational devices) than logic gates. I once read a paper by a neurophysicist who wrote that the synapse (which is just a component of a neuron) is the most complex biological machine in the body.

So no: Neurons are not (at all) like logic gates.

What probably adds to the conflation is that they do appear at the same organizational level. They are the basic building blocks of a brain, just as logic gates are the basic building blocks of a computer.

§

But since the thesis here is that brains are not computers, it’s a given that neurons are not logic gates.

Which brings us to the penultimate organizational level, the brain and the computer. Given that computers were sometimes called “thinking machines,” it’s not hard to see why so many conflate them. Our pursuit of Artificial Intelligence only strengthens that belief.

And it is intriguing that our greatest success with AI, such as it is, has been in very crude and reduced approximations of the brain’s neural network. Such networks have shown amazing promise for many kinds of categorization and identification tasks (and more).

But they still aren’t “thinking machines” — not even close. They have no sense, common or otherwise, and are trivially fooled.

§

The top level remains a mystery, both in how mind emerges from brain, and (more so) how and whether it can emerge from computation.

Given that we don’t know how mind emerges from brain, it’s almost silly to even be talking about simulating it with a computer. It’s a case of guesswork, of blind leading blind.

§ §

As mentioned above, based on past discussions, a key point of contention involves what I perceive as a causal disconnect in the Computer level. (The virtual reality posts I wrote in 2019 were leading up to discussing this point.)

This post is long enough that I’ll only introduce it here.

There is a chain of causality or purpose that extends from the lower levels of the left-hand stack all the way up to the brain (and, one assumes, the mind). The higher the level of organization, the more specific the system is to its purpose. Neurons, with their synapses, dendrites, and axons, are very specific to brain function.

In turn, the brain has a single causal purpose — the analysis and modeling of the body and environment. In more advanced brains, it enables thought and consciousness. It does nothing else. It has no other purpose. (In fact, brains place considerable cost burden on their owners.)

Computer hardware, however, does any task that can be determined by its software. There is no necessity that it do anything at all (when turned off, it doesn’t). Computers are explicitly general purpose devices. That is a big part of their value.

More to the point, there is a vast causal gap between the operation of the hardware levels and what the software does. A key point in computation is that the hardware doesn’t matter. One can perform any computation with pen and paper. Or an abacus.

The most powerful computers aren’t really doing anything more. They just do it really, really fast. Never forget they are just super calculators.

Stay algorithmic, my friends!

∇

[Part Two: Digital Difference] ⊕ [Part Three: Digital Dualism]

March 25th, 2021 at 1:33 pm

I’ve seen a number of articles now with various people in the field of the study of consciousness pushing back on the brain=computer notion.

I’ve posted about some of what I’ve seen, most recently in Brain Background, but also just over a year ago in Brains Are Not Computers.

I’ve tried to find the article I read earlier this week that compared the brain to the entire internet, but (a) haven’t found the one I read, and (b) it appears to be an idea that’s been around for a few years. This article in LiveScience dates to 2005.

It’s nice to know I’m not the only one who doesn’t see the comparison.

March 25th, 2021 at 1:35 pm

FTR: It’s software simulations that I’m skeptical about. I’ve long thought a “Positronic brain” (a structurally isomorphic device) would probably work. I don’t think biology is crucial, but, as with lasers, I do think physical structure is.

As such I call my view structuralism.

March 25th, 2021 at 6:50 pm

We’ve been round on this many times. I think this time I’ll just ask, if you don’t think the nervous system is doing computation, what do you think is happening with all that electrochemical signaling?

This reminds me that prior to all this job stress, I was reading a book on computational neuroscience, which I need to get back to.

March 25th, 2021 at 7:46 pm

Aw, you don’ wanna come out and play? 😦

You’ve kind of answered your own question, and I’ve answered it many times. Signal processing is not digital computation.

We can accomplish signal processing through digital computation, but there’s a reason vinyl records are still a thing and that there are still recording studios that are all analog. (I know of at least one that uses tubes, not transistors.) For that matter, the famous “golden record” was also analog, although the reason has more to do with the ease of an alien civilization recognizing and playing an obvious analog signal over some putative digital format.

More to the point, as mentioned in the post, brain operation is distinctly analog, not digital.

March 25th, 2021 at 8:48 pm

Just trying to explore rather than debate.

I don’t know too many neuroscientists who argue that the brain is doing digital computation, at least not pure digital. But as I recall, in our previous discussions, you didn’t recognize the concept of analog computation. (Although I think you mentioned it recently in connection with quantum computing.)

So what do you see this network of interneurons doing? What would you say is going on with the cascades, loops, and selective excitation and inhibition? When particular neurons in the visual cortex get excited by edges, or corners, or other visual features, how would you characterize what’s happening?

March 25th, 2021 at 9:19 pm

I’m not sure exactly what you mean by “analog computation” but I’ve always said the brain is an analog device. Let me ask this: Is an analog radio, or an audio amplifier, an analog computer? As opposed to more formal devices, called analog computers, which use various programmable circuits to determine output values?

My answer to your questions is, again: analog signal processing. Which I see as “computation” only in the broadest sense of the word. Cascades, loops, and selective responses, are very much a characteristic of analog signal processing.

I don’t really have a problem with calling this a form of computation (in the broadest sense), but I do think it’s important to appreciate the difference between what we casually call “computing” and such analog signal processing.

Analog computation (including radios and amplifiers) involves a physical chain of direct causality. As a simple example, a voltage divider, which “computes” a ratio, does so in consequence of the voltage drop across the divider.

Digital computing involves calculation with numbers — numbers that require an external map defining what those numbers mean. If one actually watches the flow of numeric computation inside a digital computer, there is nothing that innately distinguishes those numbers as any particular computation. It might be Tetris, a web browser, an AI network, a text editor, or just the operating system taking care of business. There’s no way to tell without an external map.
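As a rough illustration of that external map, here is a small Python sketch. The four bytes and the choice of interpretations are arbitrary assumptions picked for the example. The only library used is the standard `struct` module.

```python
import struct

# Four arbitrary bytes. Nothing in them says what they "mean".
raw = b"ABCD"

# The same bytes read through four different external maps.
interpretations = {
    "uint32 (little-endian)": struct.unpack("<I", raw)[0],
    "float32 (little-endian)": struct.unpack("<f", raw)[0],
    "two uint16 values": struct.unpack("<HH", raw),
    "ASCII text": raw.decode("ascii"),
}

for label, value in interpretations.items():
    print(f"{label}: {value!r}")
```

The bit pattern is identical in every case. Whether it is one large integer, a fraction, a pair of small integers, or text depends entirely on the map applied from outside.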

I see those as vastly different processes, and these days it’s only the latter that most people mean when they say “computer” — some form of super calculator. As such, the notion that brain=computer seems misleading if people are actually referring to signal processing.

March 25th, 2021 at 9:44 pm

To be as clear as possible, what I’m very skeptical about is that the brain is algorithmic.

March 26th, 2021 at 7:15 am

On radios and amps, I’m a pancomputationalist, so I think any system does at least some computation. What I think sets apart systems we commonly think of as computational is a high ratio of causal differentiation to energy magnitude. Old style radios and amps have a higher ratio than, say, a forklift, but a much lower one than something that would commonly be referred to as a computer. (Although technically a smartphone is a radio, so the boundaries aren’t sharp.)

So what in particular about algorithms do you object to? Is it their (usually) deterministic nature? Would you describe what happens in an ANN (at the neural network level) as algorithmic?

March 26th, 2021 at 8:37 am

Can you explain what you mean by “high ratio of causal differentiation to energy magnitude” and how it applies to radios/amps vs. forklifts vs. digital computers? I’m afraid it doesn’t mean anything to me, so I don’t know how to respond.

Another question: How “far apart” (in some conceptual “computational device space”) would you say that analog computational devices are from digital ones? How much of a gap do you perceive?

Things I can respond to:

I would say that smartphones contain radios — ones that are almost certainly digitally implemented — but they are (in my book) definitely (digitally) computational.

As far as I know, all implementations of ANNs are also (digitally) computational and implemented with algorithms.

I don’t object to algorithms in themselves (far from it!), but I’m skeptical the brain — a decidedly analog device — uses them to function. I don’t believe anything natural does in terms of its basic function, but more complex brains are able to define and use them. As I mentioned in the post, the idea is ancient. That old beer recipe we touched on recently is an algorithm.

March 26th, 2021 at 10:12 am

On causal differentiation, another way to think of it is the amount of information the system deals with. Information is often characterized as a difference that makes a difference. There are a lot more differences in a system we’re tempted to label as computational.

Yet another way may be to think in terms of the number of decision or selection points the system has. A forklift has very few of these, the causal sequences are more sequential, and the energy output is the whole point. A traditional radio has more decision points, based on what station it’s dialed to, the volume set, etc, although a large part of the traditional radio is still geared toward energy output (the signal to the speakers).

But a computational system has vastly more decision points, and it typically has complex looping mechanisms to further magnify the number of decisions. All of this typically takes place at a low energy level, since the magnitude of the energy output isn’t the point, so much as the selection of which output(s).

On analog and digital, at the level of the raw system dynamics, the only difference is that the selection thresholds in one are more continuous while they’re discrete in the other. That makes the discrete systems more deterministic, less subject to noisy stochastic conditions. The design of analog systems has to make tradeoffs on how much to compensate for noise, with the maximum noise compensation being a move to discrete mechanisms, at a cost in efficiency. And we can bring stochasticity into a discrete system when desired. So I don’t see the vast conceptual chasm between them that you do. (I don’t anticipate you and I will find agreement on this anytime soon. 🙂 )

On algorithms, fair enough. But would you agree the brain uses physics and chemistry and operates according to known physical laws?

March 26th, 2021 at 11:40 am

I’ll start by saying that, yeah, I do think pancomputationalism conflates things that have significant differences, so I agree we probably won’t agree on this. 😀

I would like to understand some aspects of this better, though, so I have questions. I need to get ready to go for my first vaccine shot (in less than two hours), and I want to think about this a bit, so I’ll pick it up later today. (Assuming I’m able!)

In the meantime, I’d like to try to sum up why I see analog and digital systems as “far apart” in the broad computational space.

Digital systems do their work by crunching numbers that are arbitrarily defined. They do explicit mathematics on those numbers. These systems convert inputs to arbitrary numbers with no innate correlation to those inputs, do math on those numbers, and then convert those numbers to some form of useful output.

In contrast, analog systems do their work through a series of proportional direct causal chains that extend from input to output. Any conversion is from one proportional system to another, and the representations are innately of the same kind. (For instance, sound waves are converted to voltages that closely resemble them, those voltages are converted to record grooves or magnetic domains which also resemble the inputs, and finally to a speaker cone that recreates the sound waves. Throughout the system, the signal directly represents the information.)

I do see those as vastly different modes of operation.

March 26th, 2021 at 11:51 am

Good luck with the shot!

March 26th, 2021 at 12:04 pm

Thanks! I’m on my way in 45 minutes. I forgot to answer your last question. Definitely the brain operates according to physical laws. Doesn’t everything? 🙂

March 26th, 2021 at 3:07 pm

The shot was… kind of fun, actually. I had a good time! (See this comment for details. Suffice to say, extremely well-run operation!)

March 26th, 2021 at 4:11 pm

Okay, as I said, I have questions. I hope you don’t mind if I use quote mode; it’s meant for clarity not confrontation.

“There are a lot more differences in a system we’re tempted to label as computational.”

Do you mean differences between real-world analog inputs (and outputs) versus their digital representations? If so, I agree there is considerable difference.

I would go so far as to say the digital representation, in being numeric and arbitrary, is a different class of information from analog representations. In analog systems the information always resembles its inputs. For me this is a key point in the gap between analog and digital systems.

If you mean a different kind of difference, then I’m not following, and you’ll need to expand a bit.

“Yet another way may be to think in terms of the number of decision or selection points the system has.”

I would like to drill down on what you mean by decision point. In the radio and forklift examples those are all input settings. I may not understand what you mean, though, because I’d say forklifts have more input settings — the operator has many controls available. A crystal radio, as a minimal example, has only one: what frequency it’s tuned to. (A volume control adds only one more.)

Given these operator-controlled input settings, would you agree these systems make no decisions of their own?

OTOH, biological systems do seem to decide how to grow in response to the environment. (It fascinates me during my walks how trees that are sheltered on one side have branches that have turned towards the sunlight. They literally seem to be reaching for sunlight.)

And there are mechanical systems that can react to run-time conditions. A thermostat is a simple example, but there are more complex ones. The relay logic of a phone switching system is an interesting and complex example.

When it comes to algorithms, “selection” is a required aspect. To be considered a programming language requires that capability. (Which is why HTML is just a computer language but JavaScript is a programming language.) As you say, the nature of program code greatly magnifies the use of these.

Bottom line, I would agree that “a computational system has vastly more decision points.” My question then might be, why doesn’t “vastly more” imply a significant difference? Would you disagree that decision points in a (digital) computer are a different class than those in analog systems (in virtue of their necessity and amount)?

“All of this typically takes place at a low energy level, since the magnitude of the energy output isn’t the point, so much as the selection of which output(s).”

I agreed at first, but as I started writing I began to question it. For instance, analog music players (vinyl or tape) versus digital (CDs, MPEGs). Both output the same thing — same power, so to speak(er). 🙂 And I would suspect internal power use is comparable. Or consider a crystal radio, which is powered only by the RF it picks up. It’s a very low-power device.

Digital systems, as I’m sure you know, can consume a fair amount of power. (To the point people are starting to complain about how not-green server farms and AI systems are.) For that matter, consider tube-based early computers — serious power hogs. One can also construct a digital computer from relays, which also requires an awful lot of power.

So is power really much of a factor either way?

“On analog and digital, at the level of the raw system dynamics, the only difference is that the selection thresholds in one are more continuous while they’re discrete in the other.”

Indeed the very same transistor circuits can be designed to as faithfully as possible follow a varying analog voltage or, with different bias values, essentially over-amplify inputs to either all-on or all-off. Looked at very closely, even discrete circuits have rise and fall times, so depending on how closely we look at the raw dynamics, they can have a lot in common.

Even so, these are distinctly different classes of operational modes. Even at the raw level, ignoring the high-level aspects, there is a significant gap between these modes.

But it occurs to me that the high-level operation is even more significant. Even if we conflate the low-level dynamics, in analog systems, the low-level dynamics are the dynamics of the system. There is no high-level abstraction on top of that.

In digital systems however, there is, and that high-level abstraction — that numerical model of reality — is what matters. (Famously, in computing, implementation is irrelevant!) The high-level abstraction is the point of the system.

This, in fact, is what I was leading up to in the post. There is the system operational dynamics, but on top of that is the high-level numerical abstraction.

That’s the primary difference in these systems. Digital systems are two systems — a low-level one and a high-level one. Analog systems are just one level. That, I think, is key to why I see them as so different.

“That makes the discrete systems more deterministic,…”

Forklifts are pretty determined (in both senses of the word)! 😀 😀

For that matter, so are relay logic systems. Discrete operation isn’t really the point. That digital systems crunch numbers and have that second level is, along with the notion of algorithms that define how the numbers are crunched.

“But would you agree the brain uses physics and chemistry and operates according to known physical laws?”

As I said earlier, definitely! The caveat might be that we may not know all the laws that apply or understand what roles they play. But I’m assuming this conversation is completely within the bounds of physicalism.

March 27th, 2021 at 6:56 am

What I described, in my mind, applies equally to both analog and digital systems.

The main point about the selection points is that the causal flow changes depending on the state of the system. We can regard operators setting them as input to the system. Systems we typically regard as computational just have a lot more of this state dependent causal flow, with no sharp boundary in the scaling.

Selection output is a thing with music players, which I think just illustrates that the boundaries here aren’t sharp.

As a pancomputationalist, I find the view that the laws of physics are nature’s algorithms compelling. So your thinking for the ANNs is largely my thinking for the organic variety. Certainly the organic variety is far more complex, for now.

March 27th, 2021 at 11:43 am

I confess I’m dismayed by your dismissive reply and surprised by what seems to be largely an appeal to your own intuition. I think I’ve earned a more thoughtful response.

You ignored my questions and what I said was the primary difference: That digital systems are dual — low-level dynamics comparable to analog systems plus a high-level abstraction that is, in fact, the whole point of the system. That is the hard boundary between the two types.

March 27th, 2021 at 3:31 pm

Sorry Wyrd. As I noted above, I’m not really up for another round on this debate. Maybe I shouldn’t have weighed in on this thread.

March 27th, 2021 at 5:49 pm

I suppose I should take it as a compliment that I’m apparently such a threat to your beliefs that I can’t even ask questions, but mostly I just think it’s a pity. 😦

March 30th, 2021 at 12:38 am

Bookmarks:

Computation in Physical Systems § 3.3.

The Computational Theory of Mind

The Philosophy of Computer Science

May 20th, 2021 at 10:01 pm

Kinda dropped the ball on these. Need to resume!

April 6th, 2021 at 8:09 pm

I… phew… great topic. Certainly one with lots of interesting areas to explore. I’ll just say that I don’t know much of anything in this area with certainty, except to say that I have very strong intuitions that I don’t believe the mind is the product of algorithms, and I don’t believe the brain is exclusively an apparatus for computing, although it certainly can do plenty of that in some structuralist biological way like you describe.

I think there are myriad interwoven levels to a human being that are valid in particular terms and yet also unified. At one level, there are certainly things within the body that the brain monitors and regulates. These have nothing to do with being conscious, as we can be sleeping, in a coma, wide awake but concentrating all of our conscious resources on taking a physics exam, etc., and yet the body is regulated.

On the other hand, we have memories and the brain appears in some way to archive, store, and relate these artifacts of experience. I don’t know if the actual content of experience is stored or filed away in the brain, or at the level of mind and the brain is simply a storage device that mind accesses, but this is where it starts to get pretty fuzzy for me.

But then there is the level where I think quite clearly mind transcends the brain. I can only give examples and they will never be air tight evidence, I suppose, but if they are not admitted as evidence one is forced to become dismissive. Let’s take the book Black Elk Speaks, a popular recounting of Nick Black Elk’s life. There are many stories in there of Black Elk’s visionary experiences, but one stands out that I’ll use as an example. He went to Europe at some point as part of Buffalo Bill’s traveling western show, and became separated from the group. Lost in Paris, a French family took him in. He became ill at breakfast one day and collapsed–he only breathed occasionally and they felt sure he was dead–and had a vision in which he traveled back over the ocean riding a cloud and looked down on his family, including the tepee where his mother lived. He felt sure she looked up at him, but then the cloud whisked him back across the ocean to Paris and he awoke. When he returned (probably a year or two later) the camp was where he’d seen it–everything was as he’d seen it. His mother said she had a dream he was looking down on her from a cloud, and upon hearing this, he shared his memory of the vision. There are countless stories like this in countless cultures, and I think the cumulative body of evidence is quite clear that mind as a whole is a venture that exceeds the domain of individual brains…

And I think all of these things are true at some level. So I think the notion that we can somehow replicate a brain through computation is a bit like saying we can replicate the Earth without gravity. There’s just more to it than that and we don’t even know what we’re trying to replicate. There’s a belief that only certain things are so, and that if only those things are so then certain other things can be concluded, but it’s built on notions that are inconsistent with the basic life experiences of millions of past human beings. And I think it falls short, ultimately.

It’s all true at some level but I think there are more levels than we understand, and I think they are all integrated or related somehow. In a sense, there’s an inseparability to things that we, in our modernity, seem loath to accept.

Michael

April 7th, 2021 at 8:57 am

Hey Michael! As you say, there is much here to explore, and I think it’s safe to say no one actually knows anything, but we do all have our strong intuitions. Since we both agree mind is not algorithmic, let me start there.

FWIW, I divide the notion of computation of consciousness (“computationalism”) into two types, strong computationalism and weak computationalism.

The former is the notion that the mind is an algorithm running on brain “hardware” — that there is some equivalent Turing Machine that computes consciousness. I am very skeptical of strong computationalism on a number of counts, and I’ve written a lot of posts about it here.

The latter is the notion that a digital computer running a simulation of a brain — as one might run a simulation of a heart or liver — would produce outputs that we could identify as conscious thought. I’m still skeptical about this, but, given a sufficiently accurate simulation, I don’t have a strong argument about what would happen instead. My guesses are: The simulation would (1) indicate a living brain organ, but with no sign of conscious thought; (2) produce the equivalent of mental white noise; (3) produce only chaotic thought fragments; (4) produce thoughts, but be an insane mind; (5) produce thoughts, but of little or no account (like someone with brain damage or almost no IQ).

There is also the possibility that the computing requirements for the simulation are too formidable to ever meet. Simulations always take a lot more resources than what they simulate. There is also the fact that software is extremely hard to get right (current wisdom is that we never really can), so that may also limit what’s physically possible.

Part of the problem is that we don’t know how far down the simulation needs to go. To the level of neurons and synapses, certainly, but does it also need to account for the myelin sheathing or the glial cells? Does it need to simulate the internal structure of the cells? Does it need to get down to the level of chemistry? Or atoms? Or quantum interactions? We just don’t know. Each emergent level may depend in important ways on the exact behavior of the level beneath it, and simulating, say, neuron function might miss key aspects that contribute to that function. That is, it may not be possible to fully simulate neurons without also simulating lower contributing layers.

The vexing thing is we don’t understand brain function as we do hearts and livers.

So I’m very skeptical and will need to see a simulation (or computation) working to believe it. Obviously, I could be wrong, and someday there will be such a thing.

The other option is a physically isomorphic constructed brain: a machine that is structurally identical to the brain. It would have actual neurons and synapses (and whatever else turns out to be necessary). I’ve long believed something like that (Isaac Asimov’s “positronic” brain, for example) might work. I call that position “structuralism”: the belief that the structure of the brain is important. I don’t see that biology is necessarily necessary.

WRT memories, brain damage can affect memories, so I suspect our memories are stored in the brain. Drugs that make us unconscious — that shut down our minds — don’t destroy memories. And while I’m spiritually something of a dualist, my physicalist side can accept that there is no dualism between brain and mind, that mind is just what brains do. As you say, things get fuzzy at this point.

WRT what is often called “astral projection” or “ESP” let me start with my skeptical side. Firstly, I’ve been reading a lot of Agatha Christie lately, and both her famous detectives (Hercule Poirot and Jane Marple) speak a lot about not believing what people say. People mistake things and even lie for various reasons. And stories evolve in the retelling. Given stories about angels, ghosts, and UFOs, I’m skeptical of what people claim they experienced.

One issue I have with astral projection stories involves the mechanism of perception. We see things because photons hit our retinas. So I question how invisible ghosts or disembodied projections manage to see things. I also know that the human mind, even during normal dreaming, can visualize scenes of its imagination. There are many stories about unconscious patients hovering above operating rooms, but they never seem to see anything they couldn’t have seen otherwise. For example, if there was an unexpected object on top of a light fixture, and someone reported seeing that, it would be very good evidence in favor, but I’m not aware of any such. (Not unlike how psychics never seem to see major unexpected events — none predicted 9/11, for instance.)

I think it’s far more likely Nick Black Elk simply had a dream and so did his mother. The problem I have is, again, what is the mechanism that accounts for such an experience? (I’d also like to know if there was a correlation of the days each experienced their dream.)

OTOH I have my own experiences along these lines, and they don’t involve dreams, which makes it all the more mysterious. I had a college friend — we’d had a brief time of being intimate, but it hadn’t sparked, and we’d gone back to being friends — and one night I got the strong urge to give her a call. It’d been a couple or few weeks since we’d talked, but this feeling was more than just, “It’s been a while since I talked to Val, I should call her.” It was a feeling that I needed to call her right now. As it turned out, she’d just had a major life-changing event shortly before I called. (She’d discovered she was gay. Been out with another woman, they’d come back to her place, started fooling around, but then the woman stopped it because she was in a committed relationship. Val’s life suddenly made sense, but she was also devastated that the woman had left.) I drove over to her place to help talk her down from that double high.

Another occasion that stands out is the time, from my second story office window, I saw the gal who cut my hair (there was a salon on the first floor) walk from her car to the building. I just knew she was hurting badly. I called her and sure enough, she’d had a bad experience. That one could be written off to my perception of her body language as she walked, but it still stands out as a bit weird how strong the perception was. (She and I had become friends and sometimes went out together, so she was more than just someone who cut my hair.)

So who knows? Especially in the case of Val, perhaps our minds were somehow entangled. That could be even stronger with family members and strongest between mother and child. If an EEG can pick up brain signals from outside the skull, who can say how far that influence extends? And, getting back to spirituality and duality, maybe the world is far deeper than we yet realize. As you say, variations of these stories exist across cultures and history. Does all that smoke imply a fire?

Physics explicitly says everything is connected on some level. Do our complex brains tap into that in some way? There does seem a lot that needs explaining, and our appreciation of what hard factual science can do may have blinded us to more subtle mysteries. It’s ironic that the same philosophers who proclaim the provisional nature of science seem to disdain the mystical.

April 7th, 2021 at 7:15 pm

Lots to unpack here. I greatly appreciate the reply. I love it when a comment begets a spontaneous post!

So I’m really in sync with you on most of what you shared about minds and brains and computationalism. And with memory as well. If an artificial brain were to produce a mind, then I tend to agree with the structuralist approach you described. My own intuition/bias is that simulating the high level without actually including the lower levels is inherently incomplete somehow, so it may be that you just can’t build an artificial mind that is truly identical to a human one. We could say we understand everything neurons and glial cells do, but there’s always sort of a question there: do we actually understand everything they do? Or do we just understand what we’ve witnessed to date?

As to the part about astral travel and such, I greatly appreciate that you have your vantage, but also an openness. The challenge is that every individual example of something can be questioned. I see this a lot as a debate strategy. If Natasha makes a general point that Neil disagrees with, then Neil says: give me an example. Not wishing to be caught without evidence, Natasha obliges. Neil then performs an ad hoc debunking of the example, thereby ostensibly disproving the entire general principle because one particular case is not airtight. If Neil is the more proficient debater on the fly, Natasha is sunk. Also, no example of subjective experience will ever be objectively airtight, so this is a great way to ostensibly disprove something without needing to address every possible instance of it.

I think that at some level there are components of what we call mind that are absolutely brain-dependent. But I think there’s a reason for that. It’s perhaps too long for a comment, but in order for unity to produce individual experience, then some mechanism is required to create a partitioning. The body is this mechanism. And it works very well. It’s really a matter of choice about where to apply one’s skepticism–and in my mind there is a wide range of alternatives here that are valid at the individual level. I’m not here to suggest you’re mistaken. But I think to be fair to myself I have to acknowledge my own choices are different here.

I really appreciate you sharing those personal stories, Wyrd, so let me reciprocate. For a period of time (about fifteen years of my life) I participated in Lakota ceremony. A group of 20 to 30 of us, led by an elder, spent two weeks a year up in the woods for vision quest ceremonies. This is actually how I met my wife. So one year before I met her she was “up” praying, and she was “shown” that another individual who was also up, in his own particular site, was having great difficulty. (If you’re not familiar, during a vision quest you have a site that is basically 10′ x 10′ that you never leave for days at a time. No food or water, etc.) This other individual was physically quite remote from her. When she returned to camp she discovered that on the very same night she was shown this, he did indeed succumb to a very difficult trial, and gave up. He walked away, back to camp, and left.

Now we could debate what she may have heard in atmospheric acoustics or something. We could try to explain it. But the simplest explanation is that this is how this has worked for thousands and thousands of years. During this time, there was also a great deal of chaos in the camp itself. There was thus a “gap” in the community alignment that perhaps contributed to or fostered this moment of difficulty. The elder was quite heartsick afterwards, because when he was a younger man, he was on his own vision quest and nearly died. And in his experience, the camp intuited what was happening, and the elder who put him up rallied the camp, and they came together and rallied for him. He said that when this happened he was able to get back on his feet, but he was a great distance away, up on the side of a mountain.

Each individual story can be debunked, but there are millions of them. They’re just not written down. Most of those millions of people have little need to prove anything to anyone. They know and accept what is true for them, and I’m good with that. There simply is (in my opinion) connectivity that transcends what we currently know as science, and you’ve perhaps glimpsed it yourself. It’s not to say I’m right or wrong. But I’m extremely reluctant to believe that my western-trained mind can actually comprehend what it’s truly like in other cultural contexts, when the definitions of what is real are quite different than our own. Efforts to explain on our own terms fall short of the reality. The only way to know for sure is to enter it and see.

Michael

April 7th, 2021 at 8:54 pm

It does sound like we’re very much in sync with regard to brains, mind, and computation. I also agree that the question of how deep a simulation would have to be also exists for a positronic brain. It may well be that only biology has sufficient detail at all levels. That said, I think it might be easier to leverage other physical principles than to define a digital simulation, but that’s sheer guesswork on my part.

I admit we’re not quite as in sync regarding, for lack of a better term, mystical experience, but nor are we necessarily all that far apart. The difference may be that I bring the same skepticism I have for computationalism to most matters with open questions.

While I agree there is a long history of mystical experience, that history includes accounts of ghosts, angels, and demons (and UFOs), and I’m very aware of the power of human imagination, even very sincere imagination. We also tend to remember events that strike us as out of the norm while discarding those times when, metaphorically, we heard a noise but nothing was there.

I’m very skeptical of astral projection (or ghosts) for reasons mentioned, but I’m much less skeptical of our ability to mentally entangle with others (as I also mentioned). I’ve experienced that sort of thing myself, and the human mind is a deep mystery with capabilities we don’t yet understand.

On the other side of the equation, I’m not fully taken by scientism either. I absolutely believe there is more to the picture than our sciences can ever determine, especially with regard to the human mind. Our perceptions only give us what I think of as a “wireframe” model of reality — I believe it resembles the real thing, but much is missing.

All that said, the undeniable thing for me is that science and physics are reliable and effective, whereas most mysticism just isn’t. It’s hard to separate the almost certainly not true (astrology and fortune-telling, for example) from what may well be valid. I do think some forms of mind entanglement may fall into the valid category, though.

Very interesting tales of your Lakota experiences. 15 years is impressive. I’ve been very taken with the accounts of the Navajo in Tony Hillerman’s novels and have often thought (or fantasized) of picking up and moving to New Mexico. I do love the American Southwest desert country, and Navajo culture fascinates me.

April 7th, 2021 at 9:05 am

BTW and FWIW: This is part one of an intended three-part series. Part two is (the) Digital Difference. I’m working on part three (Digital Dualism) today; hoping to publish tomorrow.

April 7th, 2021 at 6:44 pm

Will catch up to your other posts at some point soon, Wyrd! Thank you!

April 8th, 2021 at 1:01 pm

[…] is the third post of a series exploring the duality I perceive in digital computation systems. In the first post I introduced the “mind stacks” — two parallel hierarchies of levels, one leading up […]

October 4th, 2021 at 7:28 am

[…] I, II & III) which was a follow-up to last spring’s Digital Dualism trilogy (parts: 1, 2 & 3). The first trilogy was a continuation of an exploration of computer modeling I started […]

November 22nd, 2021 at 11:58 am

There is a view that a computer running software is just a tool configured a certain way. That’s not wrong, but I think it can miss a crucial difference between algorithms and our usual sense of tools. The difference lies in what the tool can do on its own.

The use of all tools begins with intentional action. In a computer, it’s pressing the [Enter] key or touching the screen. With a hammer, it’s taking aim and swinging.

Then the tool does something. A hammer pounds on a nail; a wrench tugs on a bolt; a needle pulls thread through cloth; a wheelbarrow carries a heavy weight. A computer does your taxes. Or sorts your photos. Or acts as the opponent in a game. Or manages millions of phone calls, or routes internet packets.

A tool extends a person. A computer can replace a person.

I don’t mean that in the “takin’ our jobs!” sense, although that’s true, too. I mean that algorithms allow a computer to perform a multi-step task that a human would do. Many of the things computers do now (taxes, phone calls, etc.) were done by humans in the past. (All a computer does is manipulate numbers. Anything a computer can do, a human can do with a pen and paper.)

The core of the difference lies in that most machines, from simple levers to complex mechanical calculators, are strictly causal analog objects. They are limited to direct impulse, without which the mechanism becomes inert. In a mechanical clock, a wound spring provides impulse to the gear train and drives the clock. In an electric clock, current provides the impulse.

But an algorithmic device divides into two crucial subsystems: Instructions and an engine. That’s what puts such systems in a different class. (Turing’s Universal Computers.)
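To make the split between instructions and engine a bit more concrete, here is a minimal sketch in Python. Everything in it is invented for illustration: the `run` function, the four-instruction set (`SET`, `ADD`, `DEC`, `JNZ`) plus `HALT`, and the `MULTIPLY` program are hypothetical and don't correspond to any real machine. The engine knows nothing about multiplication; it only knows how to step through whatever list of instructions it is handed.

```python
def run(program, registers):
    """A tiny hypothetical 'engine': executes instructions until HALT."""
    pc = 0  # program counter: index of the next instruction to execute
    while True:
        op, *args = program[pc]
        if op == "HALT":
            return registers
        elif op == "SET":    # SET reg, value: store a constant in reg
            registers[args[0]] = args[1]
            pc += 1
        elif op == "ADD":    # ADD dest, src: dest = dest + src
            registers[args[0]] += registers[args[1]]
            pc += 1
        elif op == "DEC":    # DEC reg: reg = reg - 1
            registers[args[0]] -= 1
            pc += 1
        elif op == "JNZ":    # JNZ reg, target: jump to target if reg != 0
            pc = args[1] if registers[args[0]] != 0 else pc + 1
        else:
            raise ValueError(f"unknown instruction: {op}")


# The 'instructions': multiply a by b using repeated addition.
# Assumes b is a non-negative integer (a negative b would never reach zero).
MULTIPLY = [
    ("SET", "result", 0),     # 0: result = 0
    ("JNZ", "b", 3),          # 1: if b != 0, enter the loop
    ("HALT",),                # 2: b was 0, so we are done
    ("ADD", "result", "a"),   # 3: result += a
    ("DEC", "b"),             # 4: b -= 1
    ("JNZ", "b", 3),          # 5: repeat while b != 0
    ("HALT",),                # 6: done
]

print(run(MULTIPLY, {"a": 6, "b": 7})["result"])  # prints 42
```

Hand the same engine a different list and it does something else entirely, which is the sense in which the instructions, rather than the mechanism, carry the task.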

As such, I see algorithms as a class of mathematical objects that seem very much the product of an intellect. (This is one place where the Blind Watchmaker argument may be correct.) The empirical experience that leads to algorithms is abstract and high-level. Euclid’s Algorithm, for example, is not just itself abstract — it’s about something abstract (the GCD).
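For readers who haven't met it, Euclid's Algorithm can be sketched in a few lines of Python. This is the common remainder-based form (Euclid's own version used repeated subtraction), and the function name `gcd` is just a label chosen here; it assumes non-negative integers.

```python
def gcd(a, b):
    """Greatest common divisor of two non-negative integers (Euclid's Algorithm)."""
    # Repeatedly replace the pair (a, b) with (b, a mod b).
    # When the remainder reaches zero, the other number is the GCD.
    while b != 0:
        a, b = b, a % b
    return a


print(gcd(1071, 462))  # prints 21
```

Tracing it by hand: (1071, 462) becomes (462, 147), then (147, 21), then (21, 0), so the answer is 21. Nothing in those steps refers to anything physical, which is the point about abstraction.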

So I’m hard-pressed to see algorithms occurring naturally, is what I’m saying. 😉 I think they’re necessarily a product of an intelligence.