Is that you, HAL?

Last time, in Calculated Math, I described how information — data — can have special characteristics that allow it to be interpreted as code, as instructions in some special language known to some “engine” that executes — runs — the code.

In some cases, the code language has characteristics that make it Turing Complete (TC). One cornerstone of computer science is the Church-Turing thesis, which says that all TC languages are equivalent. What one can do, so can all the others.

That is where we pick up this time…

Recall how a mathematical expression represents a numeric value. (In some cases, an expression can have multiple values. For example, √4 is both +2 and -2.) An expression expresses a value in some language we’ve defined for that purpose.

Real sheep…

The numeric value an expression represents is abstract.

A specific case may represent the value in some physical way (actual number of sheep, actual number of gallons), but these are just instances of the underlying mathematically defined numerical abstraction.

Likewise, code is a language designed to express an abstract algorithm in a concrete way. Different languages have different design goals. They can be human-friendly (easy to read) or computer-friendly (easy to parse). They can be terse or verbose; they can be rich or simple.

The same is true of expressions. Here’s the math expression from last time in five forms (the first is the one from that post):

(3 + 4) × (9 - 7)

(3) (4) {+} (9) (7) {-} {*}

multiply: add: 3 4 subtract: 9 7

multiply(add(3,4), subtract(9,7))

number(3).add(4).multiply(number(9).subtract(7))[1]

We can view mathematical expressions as code strings specifying how to calculate the represented value.

…abstract numbers.

In this regard they are like the table on the rice box — used by the knowledgeable cook to make rice. A knowledgeable engine (perhaps a math student) reads the code string and calculates the described value.

This is that key distinction between the value represented by the code string and the abstract process of calculating the answer described by that string. The arithmetic processes are disconnected and different from the actual values!

This code string versus value distinction recapitulates the difference between the code to represent — to model —something and the thing being represented (modeled).

So, expressions and algorithms can be expressed in a variety of languages, but in both cases the language merely implements an underlying abstraction. All code is in reference to the engine that can run it. (In fact, some programming languages are defined explicitly in terms of the abstract virtual machine that can execute it.)

The value of an expression is its underlying abstraction. Because code is much more complex than an expression (for one thing, code may contain hundreds or thousands of expressions within it), the underlying abstraction is much more complex.[2]

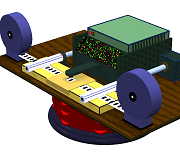

My (virtual) Turing Machine.

We describe algorithms abstractly in two key ways: Turing Machines (TM) and lambda (?) calculus.

That these two are equivalent is an example of Church-Turing (C-T) thesis and again shows the abstract nature of an algorithm.

Turing Machines are a lot more fun, and since they do the same thing as lambda calculus, we’ll focus on them. But lambda calculus is important for a key reason: It’s a formal system in mathematical logic for expressing computation! As such, it has value in formal proofs about computation.

The key point here is that an algorithm — that software — is math.

§

Okay, so what is a Turing Machine?

I plan to go into more detail in a Sideband post (or perhaps on my programming blog), so for now I’ll say that it’s an abstract computer (a model of computation itself) that represents a given algorithm. Every algorithm has a TM that represents it.

The software world is said to be abstractions on top of abstractions on top of abstractions (repeat several times). Each TM has an abstraction behind it, formally called a state diagram, but you can think of it as a flowchart.

If you haven’t created a flowchart yourself, it’s likely you’ve at least seen them. They’re common enough to be used for visual humor![3]

If you haven’t created a flowchart yourself, it’s likely you’ve at least seen them. They’re common enough to be used for visual humor![3]

Note, too, how crucial the idea of a conditional branch (yellow box left) is here. Recall from last time that selection is a key attribute of code!

One thing about a TM, though — it’s a single-purpose machine. It only does what its “program” allows. (A TM is an implementation of its state diagram — typically as a table of rules about moving from one state to another.)

You might think this sounds fairly useless, but it’s exactly what’s going on in your car, microwave oven, electronic calculators and clock radios, vending machines, elevators, and lots of other places. They are all run by a computer — a (finite) state machine — designed to do the one thing it does.

But not necessarily!

The next step along our journey is the idea of a Universal Turing Machine (UTM) — a general-purpose abstraction of computing.

A TM, as mentioned, depends on two key abstractions: The general idea of a TM, and the state diagram (flowchart) that defines a specific TM.

A UTM is a machine that first loads a provided state diagram (usually a table) and then executes the algorithm that defines.

This is the state diagram for the Divide program discussed in the Halt! post. Given 1 and 3, it will loop forever!

This is also known as a Stored Program (SP) computer, and it’s exactly how all PCs, laptops, mainframes, tablets, and smart phones, work. All of them contain a general-purpose machine — the CPU and the O/S — that loads an application and then runs it.

It’s possible that some of the single-purpose devices mentioned earlier actually have a general-purpose chip along with a single hard-stored program controlling the device. That’s increasingly common.[4]

Remember that TMs and UTMs are abstract. We can talk about the “implementation” of a TM, but we’re still talking in strictly abstract terms. We don’t consider actual physical TMs.[5]

However, an important next step involves an abstract architecture that has a concrete implementation in just about every digital computer there is (certainly in all the PCs, laptops, and other boxes we think of as computers). It’s called the Von Neumann architecture.[6]

In future posts, I’ll be taking a look at this common computer architecture in particular with regard to characterizing its complexity. Down the road, we’ll compare that to the complexity of the human brain (guess who wins hands down)!

In the meantime, here’s the bottom line for this time:

Algorithms are abstract and agnostic!

By agnostic I mean that calculation is irrespective of the physical natures of the platform, code, or data, or how long a calculation takes. All such implementations are equal so long as the system is Turing Equivalent.

Just as you can “calculate sums” with your fingers, pen and paper, chalk and board, piles of rocks, an abacus, clever collections of gears, or with transistor logic, algorithms likewise have myriad physical implementations.

None of which changes that an algorithm is an abstract mathematical entity.

The other crucial point is that an algorithm requires an engine to execute it. That’s where we pick up next time.

I’ll leave you with this flowchart used instinctively by expert engineers the world over:

[1] Expert Level Question: In this last example, why the number() function for some, but not all, numbers?

[2] In fact, implementing a language design is notoriously challenging because it’s hard to reify a complex abstraction 100% correctly.

[3] So are Venn diagrams! Searching Google Images for [funny flowcharts] or [funny Venn diagrams] results in lots of hilarious hits!

[4] Sometimes an off-the-shelf general purpose chip and some programming is cheaper than designing and building a special purpose chip. It also allows the possibility of updating or changing how the device functions.

[5] For one thing, while being a universal model of computation, they are hideously inefficient. And they’re designed on the presumption of infinite resources. So they’re really imaginary. No one builds one except as a demo.

[6] Which, incidentally, is why you need to be careful about referring to a Von Neumann machine. That can refer to a computer using his architecture, or to various kinds of self-replicating machines, such as nano-kind and the interstellar probes.

∇

October 23rd, 2015 at 12:01 am

If anyone is staring at the flowchart for the Divide program trying to figure it out, here’s the Python code that implements the algorithm:

def divider (a, b, d=10): if b == 0: raise ValueError('Division by ZERO!') q = [0] while a: # Can we remove more b's from a?... if b <= a: # Yes, so remove one more... q[len(q)-1] += 1 a -= b else: # No, so next digit... a *= d q.append(0) return (q, a)(Python is such a sweet language!)

July 2nd, 2024 at 3:00 pm

[…] tricky, let’s start with that. For a detailed look, see my posts Calculated Math and Coded Math, but […]