Tag Archives: binary

Back in 2014 I decided that a blog that almost no one reads wasn’t good enough, so I created a blog that no one reads, my computer programming blog, The Hard-Core Coder. (I was afraid the term “hard-core” would attract all sorts of the wrong attention, but apparently those fears were for naught. No one has ever even noticed, let alone commented. Yay?)

In the seven years since, I’ve only published 83 posts, so the lack of traffic or followers isn’t too surprising. (Lately I’ve been trying to devote more time to it.) It also doesn’t help that the subject matter is usually fairly arcane.

But not always. For instance, today’s post about Unicode.

Continue reading

24 Comments | tags: alphabet, binary, computer programming, letters, Unicode | posted in Computers

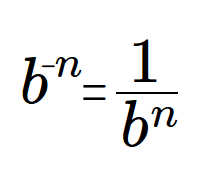

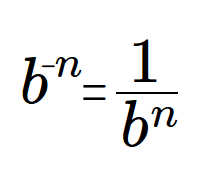

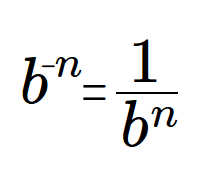

The last Sideband discussed two algorithms for producing digit strings in any number base (or radix) for integer and fractional numeric values. There are some minor points I didn’t have room to explore in that post, hence this follow-up post. I’ll warn you now: I am going to get down in the mathematical weeds a bit.

If you had any interest in expressing numbers in different bases, or wondered how other bases do fractions, the first post covered that. This post discusses some details I want to document.

The big one concerns numeric precision and accuracy.

Continue reading

3 Comments | tags: base 10, base 2, binary, binary digits, decimal, fractions, number bases | posted in Math, Sideband

Fractional base basis.

I suspect very few people care about expressing fractional digits in any base other than good old base ten. Truthfully, it’s likely not that many people care about expressing fractional digits in good old base ten. But if you’re in the tiny handful of those with an interest in such things (and don’t already know all about it), read on.

Recently I needed to figure out how to express binary fractions of decimal numbers. For example, 3.14159 in binary. And I needed the real thing — true binary fractions — not a fake that uses integers and a virtual decimal point.
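For the curious, here is a minimal sketch of one common way to get true fractional digits: multiply the fractional part by the base, peel off the integer part as the next digit, and repeat. This excerpt doesn't show the post's own algorithm, so the function name `fraction_digits` and its parameters are assumptions for illustration only. It uses `Fraction` so the decimal input is held exactly rather than as a float.

```python
from fractions import Fraction

DIGITS = "0123456789ABCDEFGHIJKLMNOPQRSTUVWXYZ"

def fraction_digits(value, base=2, places=16):
    """Return the first `places` fractional digits of `value` in `base`.

    Hypothetical helper: assumes `value` is a non-negative decimal string
    (or anything Fraction accepts) and 2 <= base <= 36.
    """
    frac = Fraction(value) % 1      # keep only the fractional part, exactly
    digits = []
    for _ in range(places):
        frac *= base                # shift one digit left of the radix point
        digit = int(frac)           # the integer part is the next digit
        digits.append(DIGITS[digit])
        frac -= digit               # keep the remainder for the next round
    return "".join(digits)

# Integer part via format(), fractional part via the loop above.
print(format(3, "b") + "." + fraction_digits("3.14159", 2, 8))  # 11.00100100
```

The digits are truncated, not rounded, so the last place can be off by one compared with a rounded result.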

The funny thing is: I think I’ve done this before.

Continue reading

4 Comments | tags: base 10, base 2, binary, binary digits, decimal, fractions, number bases | posted in Math, Sideband

If it hasn’t been apparent, I’ve been giving a bit of a fall semester in some computer science basics. If it seems complicated, well, the truth is all we’ve done is peek in some windows. From a safe distance. And most of the blinds were down.

I thought we’d finish (yes, finish!) with a bang and take a deep dive down into the lowest levels of a computer, both on the engineering side and on the abstract logic side. When they say, “It’s all ones and zeros,” these are the bits (in both senses!) they mean.
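As a taste of the abstract logic side, here is a small sketch of adding numbers with nothing but gate-level operations. The full post isn't shown in this excerpt, so this is only the textbook construction, not necessarily how the post builds it; the function names are made up for illustration. A half-adder is an XOR (sum) plus an AND (carry), and a full-adder chains two half-adders with an OR.

```python
def half_adder(a, b):
    """Add two bits; return (sum_bit, carry_bit)."""
    return a ^ b, a & b             # XOR gives the sum, AND gives the carry

def full_adder(a, b, carry_in):
    """Add two bits plus an incoming carry using two half-adders."""
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, c1 | c2              # either stage may produce the carry

def add_bits(x, y, width=8):
    """Ripple-carry addition of two non-negative ints, one bit at a time.

    `width` is an assumed register size; the final carry is the overflow.
    """
    result, carry = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result, carry

print(add_bits(5, 3))     # (8, 0)
print(add_bits(255, 1))   # (0, 1): an 8-bit register overflows
```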

Attention: You need to be at least this ━▇━ geeky for this ride!

Continue reading

6 Comments | tags: adder, AND gate, binary, binary logic, calculation, calculator, computer chip, flip-flop, full-adder, half-adder, logic, NAND gate, NOR gate, NOT gate, OR gate, transistor, XOR gate | posted in Computers

Today I’d like to introduce you to a concept I picked up from mathematician Rudy Rucker in his 1987 book, Mind Tools (The Five Levels of Mathematical Reality). I’ll warn you now that there is some math ahead (but no math homework—unless you want to). It won’t get any more complicated than multiplication and addition, but we will be dealing with some extremely large numbers (so large they are more ideas than numbers).

The end result is that we’re going to tie together the written word with numbers. I’m going to show you how every word, every sentence, every book, magazine and blog article can be reduced to a single (very large) number. That we can do this provides a foundation we can use to discover some amazing things about mathematical reality.
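To make the idea concrete, here is a rough sketch of one way to turn text into a single number and back. Rucker's own encoding isn't shown in this excerpt, so this swaps in a different scheme as an assumption: treat the UTF-8 bytes of the text as the digits of one big base-256 number. The function names are hypothetical.

```python
def text_to_number(text):
    """Read the UTF-8 bytes of `text` as one big base-256 integer."""
    return int.from_bytes(text.encode("utf-8"), "big")

def number_to_text(number):
    """Invert text_to_number (assumes the text did not start with NUL)."""
    length = (number.bit_length() + 7) // 8   # bytes needed to hold the number
    return number.to_bytes(length, "big").decode("utf-8")

n = text_to_number("Hi")
print(n)                   # 18537, i.e. 72 * 256 + 105
print(number_to_text(n))   # Hi
```

Longer texts just give longer numbers: a whole book becomes an integer with millions of digits, but it is still one integer.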

It may sound dry or intimidating but stick with it! You just might find it worthwhile.

Continue reading

10 Comments | tags: base 10, base 2, binary, decimal, number systems, positional notation, Rudy Rucker | posted in Basics, Math

I suppose a “golden” date could refer to a really good time out with the perfect someone. Or it could refer to a couple of hot oldsters, past their silver years, tearing up the town.

I suppose the oldsters could double the value of their gold by being with that perfect someone. It doesn’t matter; I mean neither perfect occasions nor advanced years. I speak, literally, of the date.

It’s 11-11-11, and that’s slightly fun and slightly rare. It’s a bit like your Golden Birthday, when your age matches the date (for example, when you turn 19 on the 19th of whatever month). Today we match on the date, month and year; trifecta gold! And of course, double bonus points just before lunch at 11:11:11!!

Continue reading

4 Comments | tags: 11, binary, date, golden | posted in Life, Math

I close the first round of CS101 articles with some of my very favorite CS jokes. Sure, they’re esoteric, but they’re also really funny. Thing is, you may have to trust me on that.

Binary

I have a sign in my cube:

There are 10 types of people…

Those who can count in binary,

and those who can’t!

It garners two reactions. Some people just walk away puzzled. Some people look puzzled for just a moment and then they crack up.

Continue reading

3 Comments | tags: binary, COBOL, computer programming, humor, jokes, Unix | posted in Computers, Sideband

The ship sailed when I was moved to rant about cable news, but I originally had some idea that Sideband #32 should be another rumination on bits and binary (like Sidebands #25 and #28). After all, 32-bit systems are the common currency these days, and 32 bits jumps you from the toy computer world to the real computer world. Unicode, for example, although it is not technically a “32-bit standard,” fits most naturally in a 32-bit architecture.

When you go from 16-bit systems to 32-bit systems, your counting ability leaps from 64 K (65,536 to be precise) to 4 gig (full precision version: 4,294,967,296). This is what makes 16-bit systems “toys” (although some are plenty sophisticated). Numbers no bigger than 65 thousand (half that if you want plus and minus numbers) just don’t cut very far.
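The numbers above are easy to check. This is a quick sketch; the helper names are made up, and the signed range assumes two's complement, which is the usual convention but isn't stated in the text.

```python
def count_of(bits):
    """How many distinct values fit in `bits` bits."""
    return 2 ** bits

def unsigned_range(bits):
    """Smallest and largest unsigned value in `bits` bits."""
    return 0, 2 ** bits - 1

def signed_range(bits):
    """Smallest and largest signed value, assuming two's complement."""
    return -(2 ** (bits - 1)), 2 ** (bits - 1) - 1

for bits in (16, 32):
    print(bits, f"{count_of(bits):,}", unsigned_range(bits), signed_range(bits))
```

For 16 bits that gives 65,536 values, or -32,768 through 32,767 if you want plus and minus numbers; for 32 bits, 4,294,967,296 values.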

Continue reading

2 Comments | tags: 42, binary, bits, counting, indexing, zero | posted in Computers, Sideband

I’d planned to do this later, probably for Sideband #64, but in honor of my parents’ 64th wedding anniversary (2 parents, 64 years, okay!) this numerical rumination gets queue-bumped to now.

Just recently I wrote about 64-bit numbers and how 64 bits allows you to count to the (small, compared to where we’re going) number:

$2^{64}$ = 18,446,744,073,709,551,616

That’s 18 exabytes (or 18 giga-gigabytes). Just to put it into perspective, if we were counting seconds, it amounts to 584,942,417,355 years; more than 500 billion years! (That’s the American, short-scale billion.)
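Here is the arithmetic behind that year count, as a small sketch. It assumes a plain 365-day year (no leap days), which is what reproduces the figure above; the variable names are just for illustration.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60   # assumption: 365-day year, no leap days

count = 2 ** 64
years = count // SECONDS_PER_YEAR        # whole years only

print(f"{count:,}")    # 18,446,744,073,709,551,616
print(f"{years:,}")    # 584,942,417,355
```

Using a 365.25-day year instead would give a slightly smaller number, but still well over 500 billion.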

Continue reading

Leave a comment | tags: 64-bit, 64K, 8-bit, big numbers, binary, bits, exabyte, powers of two | posted in Computers, Math, Sideband

In my second post I raised the topic of mind versus brain. There is (or, perhaps more accurately, may be) a duality. I mentioned that there are two basic schools of thought: one holding that mind emerges from brain and the other holding that they are distinct, that mind is – somehow – not physical. For now, the duality of the brain/mind question is open.

But there is definitely a duality in the two schools: the two opposing points of view. In this post I want to focus on the idea of duality and the idea of ideas in opposition. This post is about Yin and Yang.

Continue reading

13 Comments | tags: binary, Dualism, trinary, Yin and Yang | posted in Basics, Philosophy
