I suspect very few people care about expressing fractional digits in any base other than good old base ten. Truthfully, it’s likely not that many people care about expressing factional digits in good old base ten. But if you’re in the tiny handful of those with an interest in such things — and don’t already know all about it — read on.

Recently I needed to figure out how to express binary fractions of decimal numbers. For example, 3.14159 in binary. And I needed the real thing — true binary fractions — not a fake that uses integers and a virtual decimal point.

The funny thing is: I think I’ve done this before.

There are things (most things) that I’ll research but there are some things I see as more fun to try to figure out on my own.

For one thing, it keeps my mind exercised, which is a Very Good Thing at my age. A number of studies have shown that highly active minds can remain sharp into old age. (Various diseases can play a significant role, of course, but it’s a simple truth that, body or mind, exercise is an excellent thing.)

For another, solving a puzzle is fun, but I need the puzzle to be relevant and that solving it provides something useful. (So books or websites with puzzles other people made up don’t do much for me.)

This one I apparently figured out many years ago for an arbitrary precision calculator tool I wrote (and still use today).

That calculator does math with actual “digits” — very much like we would do with pen and paper. Effectively, it’s a symbol processor. This time I needed binary numbers, so the underlying implementation is different, but the process is the same.

§ §

First, some background: Given a numeric value, how do we express that value as a number? How do we “spell” a numeric value?

You may not realize it, but there is a background protocol behind every number we use. It’s a bit like the protocol we use to turn alphabetic characters into written language. We’re so used to decoding that protocol we don’t sense it as the process it is.

Consider a number such as 2019. For convenience, we might give it the name “twenty-nineteen” but we also know it as “two-thousand and nineteen.”

Its full name is: two one-thousands, plus zero one-hundreds, plus one ten, plus nine ones. It’s very close to how money works.

In fact, it’s exactly like money works if money only has ones, tens, hundreds, thousands, etc. (It’s the fives, twenties, fifties, etc, that make it different.)

Our numbers use positional notation, which means each digit position represents a “denomination” of a certain value. From right to left: ones, tens, hundreds, thousands, ten-thousands, and so on as high as necessary. Each position to the left is ten times the amount.

We think of this as completely natural, but it’s only because humans have ten fingers. If we had eight fingers, or twelve fingers, each position would be eight times, or twelve times, the amount.

What we’re talking about here is the numeric base (or radix). Nearly all the numbers we deal with on a daily basis are in base ten, or decimal notation.

But there is absolutely nothing special about base ten. Number bases are just a way to “spell” numeric values. All bases are functionally equivalent.

[Also: All your base are belong to us!]

§

Formally, positional notation works like this:

| Base | … | b3 | b2 | b1 | b0 | b-1 | b-2 | b-3 | … |

|---|---|---|---|---|---|---|---|---|---|

| 2 | … | 8 | 4 | 2 | 1 | 0.5 | 0.25 | 0.125 | … |

| 8 | … | 512 | 64 | 8 | 1 | 0.125 | 0.015625 | 0.001953125 | … |

| 10 | … | 1000 | 100 | 10 | 1 | 0.1 | 0.01 | 0.001 | … |

| 16 | … | 4096 | 256 | 16 | 1 | 0.0625 | 0.00390625 | 0.000244140625 | … |

All numeric values are shown in base ten. That’s why only base 10 looks “normal” — i.e. has a “1” in each position. Expressed in their own base, each row would look the same as the row for base 10.

I listed only a handful of common bases, but the logic applies to any base from two on up. Note that bases greater than 10 require new digit symbols. For example, base 16 needs six new symbols: “A”, “B”, “C”, “D”, “E” & “F” (upper or lower case allowed).

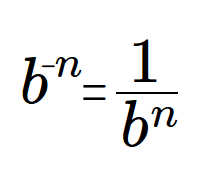

The protocol is that the value of a digit position is the base raised to the power of the index of that position (as shown in the table above). The fraction digits have negative exponents, which generates the inverse value. For example, the inverse of 10 is 0.1 (and vice versa).

§ §

So my question was: How do I convert a fractional numeric value from one base to another?

As a specific example: If the numeric value is 3.14159 (expressed in good old base 10, of course), how do I convert that to binary? Or how about an easy one: What is 0.1 (that is, one-tenth) in binary? Or any base?

That seemed a problem challenging enough to be interesting but simple enough for me to tackle. I already knew how to convert integers between bases, that’s pretty easy. But that process doesn’t work with fractions.

But the one that does is similar, so I’ll start with the integer version.

§

Let’s go back to the number 2019 and convert it to base 10.

The result we want is already there in the previous sentence, but let’s assume we have that value internally stored and we need to generate the string for that previous sentence.

The process — the algorithm — is pretty simple:

- Divide the numeric value by the intended base.

- Add the (integer) remainder to the output list of digits.

- If the (integer) quotient is zero, we’re done.

- Otherwise the quotient is the new numeric value.

- Go to step #1.

Since the division gives us integer quotient and remainder, the quotient always reaches zero, and the algorithm halts. When it does, the string of remainder digits is the output.

So the base 10 spelling of the numeric value is:

- 2019 ÷ 10 = 201 remainder 9

- 201 ÷ 10 = 20 remainder 1

- 20 ÷ 10 = 2 remainder 0

- 2 ÷ 10 = 0 remainder 2

Which, from first-to-last, right-to-left, is 2019.

If we wanted to spell that value in binary (base 2):

- 2019 ÷ 2 = 1009 remainder 1

- 109 ÷ 2 = 504 remainder 1

- 504 ÷ 2 = 252 remainder 0

- 252 ÷ 2 = 126 remainder 0

- 126 ÷ 2 = 63 remainder 0

- 63 ÷ 2 = 31 remainder 1

- 31 ÷ 2 = 15 remainder 1

- 15 ÷ 2 = 7 remainder 1

- 7 ÷ 2 = 3 remainder 1

- 3 ÷ 2 = 1 remainder 1

- 1 ÷ 2 = 0 remainder 1

Which gives us the binary spelling: 11111100011

How about the base 16 spelling?

- 2019 ÷ 16 = 126 remainder 3

- 126 ÷ 16 = 7 remainder 14

- 7 ÷ 16 = 0 remainder 7

As mentioned above, bases larger than 10 require extra symbols. We see that in the second line where the remainder is 14 — which is not a digit. The result is certainly not “7143” — it’s “7?3” where the “?” is some symbol that means 14.

As also mentioned, we usually do it like this: 10=a, 11=b, 12=c, 13=d, 14=e, 15=f (for what it’s worth, I favor the lowercase versions as more readable).

Therefore, the base 16 spelling of the numeric value 2019 is 7e3.

§ §

That algorithm depends on integers and can’t work with fractions.

For my project I created one algorithm that worked okay, but was super slow. It replicated a “by hand” method using digits and required a large database of fractions. (I might write about these on my programming blog.)

Then I worked out a faster version that implemented factions and didn’t need the database, and that was much better. Still kinda slow, and the fraction integer components got huge (like many hundreds of digits). Python can handle huge integers fine, but I wanted a more general solution.

I could have saved myself a lot of effort by looking this one up. On the other hand, I had fun, and the two algorithms are kind of interesting.

But the best method turns out to be embarrassingly simple and essentially a mirror image of the technique for integers.

§

This time the algorithm multiplies:

- Multiply the numeric value by the intended base.

- Remove the whole part to the output list of digits.

- If the fractional part is zero, we’re done.

- Otherwise the fractional part is the new numeric value.

- Go to step #1 (unless we’ve looped enough).

Note that this algorithm doesn’t necessarily halt on its own! We’ll see why in a moment. Let’s first consider some simple examples:

If we have the numeric value one-eighth, which is expressed in base 10 as 0.125, we can verify the process in base 10 as we did with 2019 above:

- 0.125 × 10 = 1.25 (remove and use the 1)

- 0.25 × 10 = 2.5 (remove and use the 2)

- 0.5 × 10 = 5.0 (remove and use the 5)

- 0.0 ⇒ done

And sure enough, the output is 0.125, so the algorithm seems to work.

Let’s try it out on base 2:

- 0.125 × 2 = 0.25 (use the 0)

- 0.25 × 2 = 0.5 (use the 0)

- 0.5 × 2 = 1.0 (remove and use the 1)

- 0.0 ⇒ done

Which give the binary fraction 0.001, which is, as required, one-eighth in base 2.

How about base 16:

- 0.125 × 16 = 2.0 (remove and use the 2)

- 0.0 ⇒ done

Which gives us 0.2, which is how one-eighth is spelled in base 16. (Because 0.1 in base 16 would be one-sixteenth, and two of those is one-eighth.)

Note that the algorithm only accepts inputs with values less than one. To convert a real number, such as 3.14159, the whole and fractional parts must be separated and used with the respective algorithms. The outputs are pasted together to form the final result.

§

I’ll get back to that. First we need to see why the fraction algorithm can loop forever. The short answer is: Because some fractions have digits that go forever.

Let’s apply the fraction algorithm to get a base 10 spelling for the numeric value one-third. The answer we expect is 0.333… where the 3s go on forever.

- 0.333… × 10 = 3.333… (remove and use the 3)

- 0.333… × 10 = 3.333… (remove and use the 3)

- 0.333… × 10 = 3.333… (remove and use the 3)

- … (continue forever!)

Clearly the algorithm will spit out 3s forever. But look at what happens in base 3:

- 0.333… × 3 = 0.999… = 1.0 (remove and use the 1)

- 0.0 ⇒ done

(Because 0.999… is just an alternate spelling for 1.0.)

The answer is 0.1 exactly, which is one-third in base 3. (Easily proved since 3 × 0.1 = 1.0 in base 3. Three thirds is one.)

The thing about fractions is that they may have infinite digit strings in some bases while being exact finite digit strings in others. It sometimes surprises programmers the first time they see the humble 0.1 as a binary fraction:

- 0.1 × 2 = 0.2 (use the 0)

- 0.2 × 2 = 0.4 (use the 0)

- 0.4 × 2 = 0.8 (use the 0)

- 0.8 × 2 = 1.6 (remove and use the 1)

- 0.6 × 2 = 1.2 (remove and use the 1)

- 0.2 × 2 = 0.4 (use the 0)

- 0.4 × 2 = 0.8 (use the 0)

- 0.8 × 2 = 1.6 (remove and use the 1)

You can see that the last four lines repeat indefinitely. The binary fraction for one-tenth is: 0.00011001(1001…)

As base 10 beings, we’re used to seeing one-tenth as 0.1, but its “digits” (bits) go on forever in base 2. (This is why we sometimes get weird results with calculators. Edge cases caught between base 10 and base 2.)

§ §

So what is 3.14159 in binary?

Well, we split the whole and fractional parts. The 3 is quick and easy:

- 3 ÷ 2 = 1 remainder 1

- 1 ÷ 2 = 0 remainder 1

So the whole binary part is 11. The fractional part is more involved:

- 0.14159 × 2 = 0.28318 (use the 0)

- 0.28318 × 2 = 0.56636 (use the 0)

- 0.56636 × 2 = 1.13272 (remove and use the 1)

- 0.13272 × 2 = 0.26544 (use the 0)

- 0.26544 × 2 = 0.53088 (use the 0)

- 0.53088 × 2 = 1.06176 (remove and use the 1)

- 0.06176 × 2 = 0.12352 (use the 0)

- 0.12352 × 2 = 0.24704 (use the 0)

Which gives us the first eight bits. We can generate as many as we need.

Pasted together, the binary value so far is: 11•00100100

You can continue this on your own if you like, but as a hint, here are the first eight hex (base 16) digits: 3•243f3e03

Stay fractional, my friends!

∇

February 28th, 2020 at 5:33 pm

There! Now it’s documented so I don’t have to ever figure it out again.

February 28th, 2020 at 5:39 pm

I warned y’all math posts were coming. Math is a great escape from politics and social malaise. It’s pure. No bullshit allowed.

February 28th, 2020 at 5:43 pm

The reader is invited to figure out why these two algorithms work the way they do. Start with the integer one; it’s fairly easy to think about, especially if you think visually.

Understanding that one illuminates the other, since it’s a mirror version. In fact, how I finally came to the fraction algorithm was thinking about the integer version and looking for something similar.

February 29th, 2020 at 9:16 am

Hint: In the first case we’re dividing whole numbers and removing the remainders (fractional parts) as output. In the second case we’re multiplying fractional numbers and removing the whole parts as output.