“Ouch!”

Over the past few weeks we’ve explored background topics regarding calculation, code, and computers. That led to an exploration of software models — in particular a software model of the human brain.

The underlying question all along is whether a software model of a brain — in contrast to a physical model — can be conscious. A related, but separate, question is whether some algorithm (aka Turing Machine) functionally reproduces human consciousness without regard to the brain’s physical structure.

Now we focus on why a software model isn’t what it models!

Here’s a Venn diagram of the four Levels (or Doors) discussed in the last two posts:

Models of mind. The three doors on the left all lead to physical models of the brain. Door X leads to alternate models outside our scope.

Walking through Door #2 (mind is just a physical network) is a requirement to walk through Door #3 (mind is a biological physical network). Door #4 (dualist theories) assumes the biology, which assumes the network.

The point here is that Door #1 stands alone from those physical theories. A software model does not have a physical correlation to the thing it models. That is the crux of these posts!

(The diagram also shows that Door #1 includes several approaches. We’ve touched only on the first two: modeling the brain’s physical network; creating consciousness functionally.)

§

The real thing.

We’ll start by considering a software model of a simple physical process: current flowing through a resistor.

To make the model easy, we’ll assume the battery has a constant voltage and zero output impedance. That means it can supply any amount of current (within reason). We model it just as a voltage source.

The resistor has a resistance and power dissipation rating. The latter is important because it reflects the part’s ability to shed heat. If it gets too hot, it fries.

The current through the resistor depends on its resistance in a straightforward way: I = E ÷ R. The current (I) is the voltage (E) divided by the resistance (R).

If our battery supplies 10 volts, and our resistor is 200 ohms, the current is 0.05 amps (10 ÷ 200 = 0.05).

The model.

Power (watts) is also straightforward (and tasty): P = I × E. Just multiply the current (I) and the voltage (E).

With a current of 0.05 amps and a voltage of 10 volts, our resistor dissipates 0.5 watts.

Our software model takes battery voltage and resistance as input parameters. We can vary them to see how they affect the current flow.

The two formulas we saw are the guts of the model. Change the input parameters and the model uses the formulas to adjust current — and therefore the power — accordingly.
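To make that concrete, here's a minimal sketch of such a model in Python. The function name and signature are my own illustration, not anything from a real library. It takes the two input parameters and applies the two formulas:

```python
def resistor_model(voltage: float, resistance: float) -> tuple[float, float]:
    """Return (current in amps, power in watts) for an ideal voltage source
    driving a single resistor."""
    if resistance <= 0:
        raise ValueError("resistance must be positive")
    current = voltage / resistance  # I = E / R
    power = current * voltage  # P = I x E
    return current, power


if __name__ == "__main__":
    amps, watts = resistor_model(10.0, 200.0)
    print(f"current: {amps} A, power: {watts} W")
```

Calling `resistor_model(10.0, 200.0)` hands back 0.05 amps and 0.5 watts: two floating-point values, and none of the heat they describe.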

Here’s the punchline: Our model can tell us the resistor is dissipating half a watt, but that’s just a number that came out of doing math with other numbers.

Fried!

Our model can even take another input parameter — the resistor’s power rating — and tell us, numerically speaking, how hot the resistor would be.

But, unlike the real circuit, nothing in the software model generates any heat (let alone burns your fingers).

All we get is a number that we interpret as telling us, whoa, that baby is hot!
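Here's one hedged way the heat calculation might look. It assumes a hypothetical thermal-resistance parameter (degrees Celsius of temperature rise per watt) and a default ambient temperature of 25 °C. Both are illustrative assumptions, not part of the model described above, and real resistors are characterized in more detail than this:

```python
from dataclasses import dataclass


@dataclass
class ResistorReport:
    power_w: float
    rating_w: float
    temperature_c: float

    @property
    def overloaded(self) -> bool:
        # Dissipating more than the rating means the part can't shed the heat.
        return self.power_w > self.rating_w


def resistor_heat(
    voltage: float,
    resistance: float,
    rating_w: float,
    thermal_resistance_c_per_w: float,  # hypothetical: temperature rise per watt
    ambient_c: float = 25.0,  # assumed room temperature
) -> ResistorReport:
    current = voltage / resistance  # I = E / R
    power = current * voltage  # P = I x E
    # Simplified steady-state estimate: ambient plus a rise proportional to power.
    temperature = ambient_c + power * thermal_resistance_c_per_w
    return ResistorReport(power, rating_w, temperature)


if __name__ == "__main__":
    report = resistor_heat(10.0, 200.0, rating_w=0.25, thermal_resistance_c_per_w=200.0)
    print(report, "overloaded:", report.overloaded)
```

With 10 volts across 200 ohms, a quarter-watt rating, and an assumed 200 °C/W, the model reports 125 °C and flags an overload. Whoa, that baby is hot, yet no number in it can burn a finger.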

§

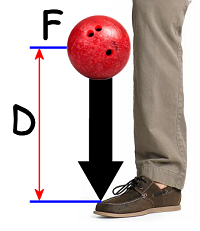

Another simple model we might consider involves a dropped bowling ball and the foot it lands on. Our model will answer the question: How much force does the foot receive?

There’s a simple trick that makes this model easy. Because the ball begins and ends at a dead stop, there’s a simple formula we can use:

$F_{fall} \times D_{fall} = E = D_{stop} \times F_{stop}$

The force of gravity on the ball (its weight) times the distance it falls gives us a number representing the ball’s energy (in foot-pounds, or inch-pounds if we measure distance in inches) after falling that far.

Since the ball comes to a stop on the foot, that energy number is the same as the (very short) distance times the force of stopping. It’s that last value, the force of stopping, that interests us, so we can rearrange the formula like this:

$F_{foot} = F_{stop} = \dfrac{D_{fall}}{D_{stop}} \times F_{fall}$

Our model takes the weight of the bowling ball, the distance dropped, and the stopping distance, as input parameters. The operation of the model tells us how much our toes hurt.

For example, a 13-pound bowling ball dropped 4 feet (48 inches) and hitting, say, a ¼” layer of combined shoe padding and top layer of foot gives us:

(48 ÷ 0.25) × 13 = 2,496 pounds

Which you would expect to hurt!
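The whole model fits in a few lines. This sketch (the names are illustrative) measures distances in inches, so the energy comes out in inch-pounds; the inches cancel when we divide by the stopping distance, leaving pounds of force:

```python
def stopping_force(weight_lb: float, fall_in: float, stop_in: float) -> float:
    """Average force (pounds) needed to stop a dropped object.

    Energy gained while falling (F_fall * D_fall) equals the work done
    while stopping (D_stop * F_stop), so F_stop = (D_fall / D_stop) * F_fall.
    """
    if stop_in <= 0:
        raise ValueError("stopping distance must be positive")
    energy_in_lb = weight_lb * fall_in  # inch-pounds
    return energy_in_lb / stop_in  # inches cancel, leaving pounds


if __name__ == "__main__":
    print(stopping_force(13, 48, 0.25), "pounds")
```

`stopping_force(13, 48, 0.25)` returns 2496.0, matching the arithmetic above.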

But, again, in our model, the result is just a number that we interpret in a real-world context to make sense of. Running the model does not make us say, “Ouch!”

And this really is the key point. A software model begins with numbers and creates new numbers with math. That’s all it does. That’s all it can do.

Remember: Computers are calculators. They crank out numbers.

§

…and a popcorn button!

Let’s consider a much more involved model. This time we’ll model a microwave oven — a really nice one with lots of user features.

Our model will include whatever knowledge is necessary about creating and using microwaves to cook food.

The user features are simple — programmers have been writing user interfaces for many decades now (and, sadly, many of them still aren’t getting it right). Modeling the behavior of microwaves is complex, but engineers understand that behavior quite well.

We’ll break our model into two parts: the control system and the microwave system. To start off easily, we’ll imagine that the microwave system is literally a very simple microwave oven.

Then all we have to do is model the user interface (easy!) and let the magic happen inside the “black box” of the actual microwave oven. We end up with a model like this:

The UTM (Universal Turing Machine) is the algorithm that is our software model. The Oven is the black box we can think of as the I/O of the algorithm. The model creates numbers it can send as output. It can also read numbers as input.

Because the Oven is simple, the outputs of the UTM amount to commands that replicate what a person might do standing at the oven. Set the time; set the power; press Start.

Most advanced features can be done by a human, so what we’re really doing here is modeling a human standing in front of a simple microwave.

If we think of that person as a virtual chef, we’re now modeling a microwave with advanced features.

But the heavy lifting is done by a black box.
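A toy version of this setup might look like the sketch below. `BlackBoxOven` is a hypothetical stand-in for the real oven (here it only records what it receives), and the preset times and power levels are made-up values. The point is that the UTM side only ever emits commands and numbers:

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Command = Tuple[str, Optional[int]]


@dataclass
class BlackBoxOven:
    # Hypothetical stand-in for the physical oven. In the real setup the
    # heating happens in hardware; here we only record what was sent.
    received: List[Command] = field(default_factory=list)

    def send(self, command: str, value: Optional[int] = None) -> None:
        self.received.append((command, value))


# Hypothetical preset table: feature name -> (seconds, power level 1-10).
PRESETS = {"popcorn": (150, 10), "defrost": (300, 3)}


def run_feature(oven: BlackBoxOven, feature: str) -> None:
    """Translate an 'advanced feature' into the button presses a person would make."""
    seconds, power = PRESETS[feature]
    oven.send("SET_TIME", seconds)
    oven.send("SET_POWER", power)
    oven.send("START")


if __name__ == "__main__":
    oven = BlackBoxOven()
    run_feature(oven, "popcorn")
    print(oven.received)
```

Running the popcorn preset just produces a short list of commands. Any popping has to happen inside the box.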

Let’s dig deeper.

Let’s gut the microwave oven and weave our control lines into everything.

Any circuitry we can model with software, we rip out and discard. For instance, if there are timer circuits, we can toss them, because we can model a timer effectively in software.

In fact, we’ll throw away everything except the magnetron that actually generates the microwaves and any power circuitry associated with it.

Now our algorithm must account for the advanced user features and the operation of the parts of the oven. The software is now controlling the oven. The only black box now is the one that actually generates the microwaves.
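As one illustration of the software taking over, here's a sketch of how it might schedule the magnetron. It assumes, as in many conventional ovens, that lower power levels come from switching the magnetron on and off over a fixed cycle; the 10-second cycle and the function name are hypothetical choices:

```python
def magnetron_schedule(seconds: int, power_level: int, cycle_s: int = 10) -> list[bool]:
    """One on/off flag per simulated second of cooking.

    Hypothetical duty-cycle scheme: power level N (0-10) keeps the magnetron
    on for the first N/10 of every cycle_s-second cycle.
    """
    if not 0 <= power_level <= 10:
        raise ValueError("power level must be between 0 and 10")
    on_seconds = power_level * cycle_s // 10
    return [(t % cycle_s) < on_seconds for t in range(seconds)]


if __name__ == "__main__":
    schedule = magnetron_schedule(20, power_level=3)
    print("".join("#" if on else "." for on in schedule))
```

At power level 3 for 20 seconds, the schedule switches the magnetron on for 6 of those seconds. The software decides when; only the magnetron makes microwaves.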

For a final step, let’s open that box as well. No more special hardware!

All that remains now is the UTM, the algorithm that describes the behavior of our microwave oven. The model accounts for how microwaves are generated and how they behave.

No special hardware!

The model now is strictly software — the entire system is modeled by the algorithm. And since an algorithm is a mathematical object, so is our model.

We’ve found a mathematical object that describes the behavior of our microwave oven.
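A tiny piece of that mathematical object might be the wavelength of the oven’s microwaves. This sketch assumes the 2.45 GHz frequency commonly used in household ovens; the function name is illustrative:

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact by definition


def wavelength_m(frequency_hz: float) -> float:
    """Free-space wavelength: lambda = c / f."""
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz


if __name__ == "__main__":
    print(f"{wavelength_m(2.45e9):.4f} m")  # assumed 2.45 GHz oven frequency
```

The result is about 0.122 meters: a perfectly good description of the microwaves, and not a single one of them.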

The punchline should be obvious: The model cannot generate microwaves unless we connect it to physical hardware capable of generating microwaves.

The microwaves come from intrinsic properties of the hardware, not the software. They cannot, in fact, ever come from the software. The software is just numbers.

§

A similar example exists with laser light.

The physics of what makes materials lase is quite well-understood, and software models can very precisely describe what happens under those circumstances.

But software models do not, themselves, ever lase. They can’t. A flow of numbers cannot lase.

Laser light supervenes on specific physical properties. So does the generation of microwaves. Likewise, the force of falling bowling balls or the heat of overloaded resistors.

All these are physical phenomena.

We can model them with numbers, but those models are not the thing they model. They are distinctly different — precisely as different as analog sound and its digital model.
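For a concrete sense of that difference, here's a sketch of a digital model of an analog tone: sample a sine wave at regular intervals and round each sample to an integer. The 1 kHz tone, 8 kHz sample rate, and 8-bit depth are arbitrary illustrative choices:

```python
import math


def digitize(freq_hz: float, sample_rate_hz: float, n_samples: int, bits: int = 8) -> list[int]:
    """Sample a full-scale sine tone and quantize each sample to a signed integer."""
    full_scale = 2 ** (bits - 1) - 1  # e.g. 127 for 8 bits
    return [
        round(full_scale * math.sin(2 * math.pi * freq_hz * n / sample_rate_hz))
        for n in range(n_samples)
    ]


if __name__ == "__main__":
    print(digitize(1000, 8000, 8))
```

A 1 kHz tone sampled at 8 kHz becomes eight integers per cycle, peaking at ±127. Feed them through a DAC and a speaker and you get sound; leave them in memory and they’re just integers.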

§

So the bottom line is this: What if the self-aware part of our mind supervenes on physical properties of the brain?

What if consciousness is like microwaves or laser light and can only arise under the correct physical conditions — physical conditions our brains happen to meet?

If our brains meet those physical conditions, it seems likely other physical objects with the same properties would also meet those conditions. That means we can step through Door #2.

But it would also mean Door #1 is locked forever. It cannot be opened, even in principle. We’ve encountered a physical limit of reality.

That doesn’t mean we might not someday model a brain, even model consciousness on some level. But those will be just flows of numbers that describe real world objects. They will not be consciousness.

Drop a (virtual) bowling ball on the model’s (virtual) toe, and the resulting numbers, plus some clever programming, can make it send the OUCH.WAV digital sound file to the output speaker.

But the pain is virtual.

§

An obvious question is: What exactly is it that makes the physical brain necessary for consciousness? Here are some possible answers:

¶ The first one is big and obvious: the fact of the unimaginably huge network we all have between our ears. That network has roughly 500 trillion connections, which is awesome and unique.

Maybe it just boils down to needing that actual physical network.

¶ Computer transistors have an on state and an off state. Neurons are either firing or not firing. Their “on” state (firing) is a pulsed signal; the frequency encodes the intensity of the firing state.

That’s two physical properties: Firstly, the time component inherent in a pulse train. Secondly, a firing neuron has an analog strength! The latter can probably be modeled, but the time aspect could be significant.
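A simplified sketch of that rate coding: a neuron firing more intensely emits more pulses in the same window. It assumes a perfectly regular pulse train (real spike trains are irregular), and the function is an illustration, not a neuron model:

```python
def spike_times(rate_hz: float, duration_s: float) -> list[float]:
    """Times (seconds) of pulses from a perfectly regular neuron.

    Simplification: real spike trains are irregular; here intensity is
    encoded purely as pulse frequency.
    """
    if rate_hz <= 0:
        return []
    interval = 1.0 / rate_hz
    count = int(duration_s * rate_hz)  # whole pulses that fit in the window
    return [i * interval for i in range(count)]


if __name__ == "__main__":
    for rate in (20, 50):
        print(rate, "Hz:", len(spike_times(rate, 0.5)), "pulses in 0.5 s")
```

Over half a second, a 20 Hz neuron fires 10 pulses and a 50 Hz neuron fires 25. In the model, time is just another list of numbers, and whether that captures what physical pulses actually do is the open question.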

Time is an important element in the physics of generating laser light and microwaves — specifically these things depend on resonances. Perhaps consciousness also supervenes on a resonance.

¶ Brains are dense and compact, plus they’re encased inside hard skulls.

Perhaps the pattern of neural activity in that small space generates emergent patterns, standing waves, we feel as consciousness.

Douglas Hofstadter posits consciousness is a complex feedback loop. If that were so, the physical self-containment might be part of that.

Cavities have the property of resonance, so maybe it takes a compact network inside a round container for consciousness to emerge.

§

We need a laser to lase; perhaps we need a brain to think. (When put that way, it seems kind of obvious.)

There is also this: Church-Turing tells us that, if mind can be implemented as an algorithm, then mind is an algorithm. (Again, it’s obvious when stated that way.)

Church touring!

But that means there is a natural physical object that is an algorithm — which is an abstract mathematical object.

But as far as we know, physical objects are not — generally speaking — abstract mathematical objects (although they can reify them).

So if mind is a physical object, it seems not very likely to be an algorithm.

Next time we’ll explore one more possible physical limit that might hamper efforts to create software consciousness. That will complete the arc of this series where we started: mathematics!

November 9th, 2015 at 11:22 am

“But that means there is a natural physical object that is an algorithm — which is an abstract mathematical object.”

Actually, I can’t see that that’s what it means. As far as I can see, it only means that a natural physical object can *implement* processes that we can recognize as algorithms. Since we can engineer physical objects that do that, why is the idea of an evolved physical object doing it so unlikely? Indeed, it seems likely to me that the engineered versions are simply aping the evolved ones.

November 9th, 2015 at 12:39 pm

“Since we can engineer physical objects that do that, why is the idea of an evolved physical object doing it so unlikely?”

The question is what we mean by: “…engineer physical objects that do that…”

We agree something that physically models the brain is likely to create mind. So if “that” refers to that, we’re totally on the same page.

If “that” refers to machines running algorithms that model the brain, or algorithms that model its functionality, I totally agree we do that. We do create models — simulations — of natural processes. Maybe someday we’ll be able to model the mind. (cf. Steve’s summation of my view; it pretty much nails it)

One key point is that those models function very differently than the thing they model.

But the premise of Classical CToM is that the model is the thing.

It’s possible it is, but it would be a first in nature.

The brain simply does not function like any Turing Machine (or algorithm) we know. That might just be a limitation of our knowledge, but it might mean brains work differently from TMs. Specifically, it might mean they’re not algorithmic. Things in nature just don’t tend to be.

” Indeed, it seems likely to me that the engineered versions are simply aping the evolved ones.”

As far as building a physical system, absolutely. Just consider how airplanes use the same laws of flight as birds.

We can also make a software model that uses flight laws (good old Flight Simulator!). But it doesn’t actually achieve any lift. And I’ve crashed my plane more times than I can count, but oddly,… not a scratch on me. 🙂

November 9th, 2015 at 2:12 pm

Just to clarify, my response to that quote was narrowly focused on the context in which you used it:

“There is also this: Church-Turing tells us that, if mind can be implemented as an algorithm, then mind is an algorithm. (Again, it’s obvious when stated that way.)

But that means there is a natural physical object that is an algorithm — which is an abstract mathematical object.”

My point was that given the premise in the first paragraph, I didn’t think the second paragraph followed, that it only implied a natural object that can run algorithms. By “that” I only meant implement algorithms. Of course, if “mind can be implemented as an algorithm” is false, then it’s moot.

Steve’s summation of your position matches my understanding, particularly with your follow up clarification of what you mean by “computer”.

On the result vs process thing, I actually think you might have it reversed. I see consciousness as part of the process, part of the information processing. I think Hofstadter is right that it’s a feedback loop, but I think that loop is an information loop, a subsystem collecting information from the other subsystems about what they’re doing and then sharing a summary of it all to all the subsystems.

Any software needs hardware to output its result (or to receive inputs), to have some effect on the environment. It seems like what you’re positing is that consciousness is one of those effects, one of those outputs. To me, that makes it a result.

I’m open to that possibility, but I don’t see anything that compels me to regard it as likely. That could change tomorrow of course as study proceeds.

November 9th, 2015 at 2:37 pm

“My point was that given the premise in the first paragraph, I didn’t think the second paragraph followed, that it only implied a natural object that can run algorithms.”

Where do those algorithms come from that the natural object (brain) runs?

November 9th, 2015 at 2:58 pm

The implementation? It seems like evolution is the obvious answer.

If you mean something deeper, I’d imagine they come from the same fundamental mathematical realities you’ve discussed.

Or am I totally missing what you’re asking?

November 9th, 2015 at 3:06 pm

I don’t think so. “Evolution” is an acceptable answer. The one I was going for is: “Nature.”

There is a natural source for those algorithms (assuming they are, in fact, algorithms, which I don’t think they are, but that’s another discussion 🙂 ). They are, therefore, natural objects.

November 9th, 2015 at 2:54 pm

Two separate points, so two separate threads.

“On the result vs process thing, I actually think you might have it reversed. I see consciousness as part of the process,…”

So do I. What do you think I have reversed?

“Any software needs hardware to output its result (or to receive inputs), to have some effect on the environment. It seems like what you’re positing is that consciousness is one of those effects, one of those outputs. To me, that makes it a result.”

Nope. Exactly the opposite. I think consciousness emerges as a consequence of the operation of the system. Call it a byproduct, perhaps (except “byproduct” suggests it’s not important).

Certain physical configurations of cavities can generate microwaves as a “byproduct” of how the EMF interacts with the cavity. Certain physical configurations of materials can emit laser light as a “byproduct” of how electrons and photons interact in the material.

Both of these can be modeled with software, but those models can’t emit microwaves or laser light.

Consciousness is, likewise (I believe), a byproduct or consequence of the physical operation of a physical system. It can be modeled in software, but the model won’t be self-aware. The model won’t experience the world.

If consciousness was an output of an algorithm, that’s effectively saying there is some numerical calculation (obviously very complex) that spits out numbers that… what? Are conscious? How do you get consciousness from numbers?

That seems really hard to believe to me, but that’s what a Classical CMoT demands.

The alternative is that running of some algorithm causes consciousness to emerge, not as an output calculation, but as a byproduct or consequence of running the algorithm. In this case, the algorithm itself somehow is conscious.

If you subscribe to a CCMoT, those seem the choices. Consciousness is the result of numerical calculation, or it somehow emerges from running that calculation.

I find that a hard view to credit.

November 9th, 2015 at 3:10 pm

Isn’t “consequence of the operation of the system” and “byproducts” just other names for output? It might not be what a designer intended, but it’s still a result of the process.

“If consciousness was an output of an algorithm, that’s effectively saying there is some numerical calculation (obviously very complex) that spits out numbers that… what? Are conscious? How do you get consciousness from numbers?”

How do you get sound or pictures from numbers? By having the appropriate output devices. All I’m saying is that your view seems to be that consciousness is an output, like the output of sound from a speaker.

Maybe a way fairer to your thesis would be to compare it to the sound of a hard drive. That sound isn’t a designed output, but the patterns of sounds that come out ultimately derive from software’s interaction with the data on it. Move things over to an SSD, and the sound disappears.

Of course, once we figure out that the sound is important, we might be able to engineer a speaker that can recreate it.

November 9th, 2015 at 3:44 pm

“Isn’t ‘consequence of the operation of the system’ and ‘byproducts’ just other names for output? It might not be what a designer intended, but it’s still a result of the process.”

Sure, it can be viewed that way (“output” more often means intended output, but from a whole system point of view all outputs are outputs).

What’s important here is that algorithms have a specific kind of intended output: numbers.

As for byproduct outputs, so far algorithms just generate low-level heat.

“How do you get sound or pictures from numbers?”

By, as you say, applying them correctly to physical output devices and leveraging real-world properties that actually give rise to light and sound.

The analogy being made here is that the mind “algorithm” process spits out numbers that some “I/O” device in the human body turns into consciousness like a speaker turns electrical signals into sound.

It’s worth noting that it’s not just a speaker you need. You need a physical DAC to turn the numbers into a physical analog voltage. You probably need drivers to get enough physical analog current to drive the speaker. And then you need that specific magnet-coil-cone configuration to produce physical analog sound waves.
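For what it’s worth, the digital side of that chain is easy to sketch. Assuming an ideal unipolar DAC (no real converter is this clean), each integer code maps to a fraction of a reference voltage; the function name and defaults are illustrative:

```python
def dac_output_volts(code: int, bits: int = 16, v_ref: float = 1.0) -> float:
    """Ideal unipolar DAC: map an integer code onto 0..v_ref volts."""
    max_code = 2 ** bits - 1
    if not 0 <= code <= max_code:
        raise ValueError("code out of range for this bit depth")
    return v_ref * code / max_code


if __name__ == "__main__":
    print(dac_output_volts(32768))  # roughly half the reference voltage
```

Even this function only returns another number. Getting an actual voltage still takes the physical converter, the driver, and the speaker.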

“All I’m saying is that your view seems to be that consciousness is an output, like the output of sound from a speaker.”

If you’re going to define “output” as “anything the system does” then yeah, sure. I’m defining “output” to mean the intentional output of an algorithm (which is just numbers). The alternative, as I mentioned, was that consciousness is a byproduct output, a side-effect of running the algorithm.

Let me be specific about what I’m arguing:

“Maybe a way fairer to your thesis would be to compare it to the sound of a hard drive.”

Yes, now you’re in the ballpark! (What, you’ve been being unfair up to now? 🙂 )

“Of course, once we figure out that the sound is important, we might be able to engineer a speaker that can recreate it.”

In other words, my exact point: If you want to re-create a specific physical phenomenon, you probably need a specific physical device.

Sound, lasers, microwaves, are all pretty easy. Consciousness may require a “speaker” with 500 trillion intelligent “wires.”

November 9th, 2015 at 4:17 pm

Okay, good. But now my question is, what data do we have to compel us to conclude that consciousness is like this? I agree we can’t eliminate the possibility, but is there anything that makes it a necessary part of our understanding?

November 9th, 2015 at 4:35 pm

I’ve written a dozen posts over the last few weeks exploring all the reasons we have for thinking consciousness is a physical process and not an algorithm. This whole series has been about the huge gap between physical models and software models.

Frankly, I think the burden of proof is on the other side. What shred of evidence is there arguing consciousness is algorithmic, that it can be fully implemented in software? All we know for sure is that physical (biological) brains produce consciousness.

Guessing that mechanical brain analogues will work like brains isn’t a great stretch. We just need to assume a very similar physical process invokes similar results. It would almost be more surprising if it didn’t work.

Guessing that software brains will work like brains is unprecedented. We have no examples of any such thing, and a lot of the computer science seems to argue against it. It is, in any event, a huge jump from a physical model, so I see no reason to expect it would work.

Unless the universe is significantly Tegmarkian, and then this is all moot.

November 9th, 2015 at 5:52 pm

I think there are actually three scenarios here:

1. Consciousness is algorithmic.

2. Consciousness is non-algorithmic information processing.

3. Consciousness is a physical output / result / byproduct of brain processing.

Going by your definitions, 1 would require that the brain be a digital computer. I think we agree that isn’t the case.

But 2 seems like the most compelling scenario to me, the simplest one that is compelled by the evidence. If it is true, I can’t see any reason why digital computing couldn’t approximate its functionality, although it could never match it perfectly, and performance constraints might require a hardware architecture much closer to the brain’s neural network.

I’m open to the possibility of 3, but to regard it as something other than remote speculation, I’d have to see something that compels a physical manifestation of consciousness as an explanation.

November 9th, 2015 at 6:26 pm

“1. Consciousness is algorithmic.”

That is my Door #1. And we agree this seems unlikely.

We can stop right there, since that’s the basic point of this series of posts. We agree a CCMoT seems unlikely, so cool! 😎

I am confused by the second list item:

“2. Consciousness is non-algorithmic information processing.”

I’m not sure what that means, but…

“3. Consciousness is a […] result […] of brain processing.”

…that’s my Door #3, which suggests your item #2 is my Door #2? By “non-algorithmic information processing” do you mean a very large, highly connected network of active nodes and links? (i.e. my Door #2) I’m thinking… yes?

“If [Door #2] is true, I can’t see any reason why digital computing couldn’t approximate its functionality”

Which puts me back to being confused about what you mean by the second item.

Look, if we agree mind isn’t algorithmic, then we agree no algorithmic approximation can actually work (that is, give rise to self-awareness). You can’t have it both ways! 🙂

November 9th, 2015 at 8:59 pm

Wyrd, just as you’ve made requests of me, I’m going to request that when you quote me, you avoid editing my words. I get confused on whether you’re reacting to the original or modified version. If pasting the actual text is too cumbersome, please consider just referencing it.

If Door 2 includes consciousness as a physical byproduct, then I think there is space between Doors 1 and 2, and that’s where I think scenario 2 lives. So:

scenario 1 matches Door 1,

scenario 2 is between Doors 1 and 2 (but much closer to 1),

scenario 3 matches Door 2.

You’ve written a great deal against scenario 1 / Door 1, but I don’t think you’ve addressed scenario 2. I suspect you’ll reject its existence. Fair enough, but I think it’s most likely where reality is.

November 9th, 2015 at 9:33 pm

I’m guessing, but are you objecting to my inserting “[Door #2]” into your reply?

If so, I’m sorry. No harm was meant. I didn’t know how to answer the question otherwise. Your first item seemed to match my Door #1, and your third item seemed a match to Door #3, so I had to wing it and assume you meant Door #2. The edit (and my line preceding it) was meant to make that clear.

(Other than that, for the record, I do cut-n-paste, and I’m always referencing what you wrote. If I remove words it’s because I believe it doesn’t change the sense of the phrase, and the cut-n-paste is, as you say, just meant as a reference to orient on exactly which part I’m responding to.)

Okay, anyway, so your item #2 is not Door #2…

“I don’t think you’ve addressed scenario 2. I suspect you’ll reject its existence.”

True that I haven’t addressed it. I have no idea what it is. (I did include a Door-X for possible other models of mind.) Other than some general talk about “non-algorithmic information processing” I have no idea what it’s supposed to be.

What exactly is a non-algorithmic information processing system? I don’t know.

And so I’m clear: Are you suggesting this scenario 2 can be accomplished by an algorithm that either generates or gives rise to consciousness?

November 9th, 2015 at 9:51 pm

“What exactly is a non-algorithmic information processing system? I don’t know.”

By your definitions, it would include any analog computing, the processing of neural nets, or any computing that isn’t done by a Turing machine.

“Are you suggesting this scenario 2 can be accomplished by an algorithm that either generates or gives rise to consciousness?”

I think the functionality can be approximated by an algorithm. It won’t be a perfect match, but it could be an effective one.

Can it give rise to consciousness? Well, I think consciousness is most likely a data processing architecture, so I think it could. I’d revise my opinion if there were evidence that consciousness was some physical output of the brain, as opposed to just the way information flows between subsystems.

November 9th, 2015 at 10:35 pm

“By your definitions, it would include any analog computing, the processing of neural nets, or any computing that isn’t done by a Turing machine.”

Okay, cool, that’s a start, but so far it’s a PowerPoint diagram with labeled boxes. To make this more than hand-waving, the boxes need to be broken into, at least a little. (I realize no one knows how to do this on a detailed level.)

So can you define, just a little, exactly what you mean by “analog computing”, “neural nets”, and computing not “done by a Turing machine.”

I’ll try to get this started…

Software neural nets are behind Door #1, and physical neural nets are behind Door #2 (they kind of are Door #2), so if they mean something else here, that needs to be defined. Or at least explained as to how they might be different.

The analog “computing” I’m familiar with all involves physical networks or devices that leverage physical properties as analogues to some other physical system. An example is electron flow through a resistor network “computing” the water flow through a system of pipes.

All those fall behind Door #2, so if analog computing means something different here, that needs to be explained.

That last one is in contrast to how Turing Machines define what we mean by “computing” so that definitely needs to be opened up.

Are there real world processes that seem analogues of some part of this? What sort of mechanisms are you imagining?

“I think consciousness is most likely a data processing architecture…”

Okay, but you realize that’s just another labeled box, right?

Other than that you imagine the human brain to be one, can you point to any other example of data processing that shows any hint of consciousness?

There is also the fact that every example of actual data processing we know about (that is, every computer that exists) is a Turing Machine!

So a belief in mind as data processing as we understand it is tantamount to a belief in an algorithmic mind.

November 10th, 2015 at 9:21 am

Okay, maybe I’m misunderstanding the definition of Door 2. Above, I took it to mean a non-biological system that produces consciousness as a physical byproduct, an output, of its operations. But if it includes any computing other than digital, then it sounds like it’s equivalent to my scenario 2.

But if so, if the physical product of consciousness is off the table, then what separates Door 1 from Door 2 from a functional effectiveness viewpoint? Even if we put the physical product back on the table, what prevents the physical implementation of Door 1 from generating it?

Arguing about what is or isn’t a Turing machine or algorithmic or whatever, it seems to me, is ultimately beside the point. All of this comes down to consciousness being this physical byproduct. If it exists, then any physical implementation would have to produce it. (Although that implementation might involve Door 1 components.)

If it doesn’t exist, then the distinction between Door 1 and 2 comes down to the architectural distinction between digital and other types of computing, and there are no barriers to Door 1 approximating the functionality of Door 2 or 3 (albeit perhaps with a serious performance penalty).

November 10th, 2015 at 11:05 am

“I took it to mean a non-biological system that produces consciousness as a physical byproduct…”

Your description is okay, but lacks the key feature distinguishing Door #2, so I’m not sure whether you understand or not.

Door #2 is a physical (non-biological) replica of the brain’s network (including the weighting of the synapses). The significant difference between Door #3 (us) and Door #2 is biology. Both doors presume that consciousness emerges from the physical complexity of that network and as a consequence of its operation.

(We also touched on that complexity in your current blog post.)

“But if it includes any computing other than digital, then it sounds like it’s equivalent to my scenario 2.”

I can’t answer. I don’t know what you mean by “computing other than digital” (or any of the other things I pointed out) so I don’t understand scenario 2.

“But if so…”

I can’t answer. I don’t really understand the question.

‘But if Door #2 includes computing other than digital, if physical product of consciousness is off the table, what separates Door #1 from Door #2 from a functional effectiveness viewpoint?’

Am I even getting the sense right? A problem is that undefined phrase “computing other than digital” and I’m not sure what you mean by consciousness being on or off the table. I’m also not really sure what you’re asking in “functional effectiveness viewpoint.”

It seems to be about the difference between Door #2 and Door #1. That’s an important difference insofar as this whole series of posts is about it. Rather than try to answer a question I don’t understand, can I ask you to re-read the Model Minds and Four Doors posts?

I tried to define the Doors (Layers) in great detail there. Maybe a re-read will answer your question?

“All of this comes down to consciousness being this physical byproduct.”

Yes, absolutely. That’s exactly what this is about. (And the CS aspects of this, the algorithms and whatever, are actually fundamental to that question.)

“Although that implementation might involve Door 1 components.”

If you mean “contains software components” then yes. So does my microwave. Nothing disallows software from helping the system.

The point I wanted to emphasize is that Door #1 is like being pregnant. There’s no partly. What lies behind Door #1 (a software mind) is either true or not. (I’m arguing not. I could be wrong.)

“If it exists, then any physical implementation would have to produce it.”

Right. It’s not 100% a given, but it’s hard to see why it wouldn’t.

“If it doesn’t exist, then the distinction between Door 1 and 2 comes down to the architectural distinction between digital and other types of computing”

Right, again. (Just so we’re clear, “it” in both quotes refers to “consciousness being this physical byproduct.” That is, it emerges as a consequence of the physical operation of a physical system.)

This is the question these posts are considering! In particular, my skepticism that consciousness can be the result of a numerical calculation, and my belief that it arises as a physical consequence of a physical system.

Now what?

Is there still a scenario 2 to define, or are we all on the same page, or… Remember Ferris Bueller? Are there any questions? Anyone? Anyone? 🙂

November 10th, 2015 at 12:21 pm

I think we’re on the same page as to the issue here. But I do think we disagree on the likelihood of consciousness being a physical product.

I’d have to see some kind of evidence, something that would make it necessary in our understanding of the mind and brain. Simply not being able to prove it impossible doesn’t get through my skeptic filter. Of course, that evidence could come tomorrow.

As we’ve done so often, I fear further discussion is unlikely to resolve this disagreement. We’ll just have to chalk it up to another thing that SAP and Wyrd disagree on 🙂

November 10th, 2015 at 12:36 pm

“I do think we disagree on the likelihood of consciousness being a physical product.”

We do? I thought that was one point we definitely agreed on. Can we agree on exactly what we’re disagreeing on?

I’m predicting a No, Yes, Yes…

In which case we do disagree, since I’m No, No, No. 😀

November 10th, 2015 at 1:34 pm

1. Not yes.

2. Not no.

3. Not no.

🙂

November 10th, 2015 at 2:26 pm

ROFL! Okay, fair enough, although now I have to ask: Do you accept the axiom of the excluded middle?

November 10th, 2015 at 2:01 pm

Well, like Mike, I don’t know why you have arrived at your conclusion. I agree that a software model of a microwave will never cook anything (other than a software ready meal), but I don’t see the relevance to mind.

For me, mind is to brain as data processing is to computer. When a computer is running, all it is doing is moving electrons around, and yet seemingly magical things happen as a result of the algorithms it runs and the data it processes.

You are comparing the mind to a physical effect, like microwaves or a falling ball. I don’t consider the mind to be a physical object or effect at all. If so, what are its properties? Does it have mass, size, physical location, momentum, temperature, or anything that a physical thing has? I don’t think so.

What makes you think the mind is like this?

November 10th, 2015 at 2:41 pm

“[M]ind is to brain as data processing is to computer.”

I’m sorry, but that’s an empty, undefined slogan that means nothing.

“When a computer is running, all it is doing is moving electrons around,…”

Agreed.

“…and yet seemingly magical things happen as a result of the algorithms it runs and the data it processes.”

Seemingly magical? Utter nonsense. Nothing more “magical” than 2+2=4.

And a whole world away from “experiencing” anything.

I’ve worked extensively with computer hardware and software for nearly 40 years. Nothing they do isn’t trivially easy to understand. You know the phrase: “Calm down! It’s just ones and zeros!”

“You are comparing the mind to a physical effect,…”

Comparing, yes. Don’t get too wrapped up in analogies. I’m saying mind — whatever it is — supervenes on physical effects.

Effects that cannot be accomplished with 2+2=4.

“If so, what are its properties?”

The mind, obviously, has many, many properties.

“What makes you think the mind is like this?”

I think the more urgent question is what makes you think 2+2=4 can experience consciousness?

You’re arguing that some form of algorithm, or some form of ill-defined “data processing,” gives rise to consciousness, despite the fact that no such thing happens in any form of algorithm or data processing we currently know about.

Sounds like the burden of proof is on explaining that leap of faith.

What, exactly, happens? It doesn’t sound like the numbers the algorithm spits out are conscious, so you must believe that running the algorithm gives rise to consciousness?

Other than the empty slogan “Data Processing!” how exactly does that work?

November 11th, 2015 at 3:12 am

“[M]ind is to brain as data processing is to computer.”

I’m sorry, but that’s an empty, undefined slogan that means nothing.

Well I think it’s a meaningful statement. It’s an analogy, not a precise definition.

“Seemingly magical? Utter nonsense.”

I don’t mean literally magical. I mean exactly what I said – seemingly magical. I remember as a child seeing my very first home computer game. It played pong, and “badminton”. I watched it with jaw-dropped amazement. This box actually knew what I was doing with the controller and was using my actions to control the action on the screen. The ball bounced off the walls like it was a real ball.

I asked my father how it worked, and he was at a loss to explain it. Later, I found out for myself how it worked, and it was a trivial algorithm running a few hundred lines of code. But that code had inspired awe in a young boy who saw what it did.

Perhaps you are too close to computers to appreciate them, or perhaps you have put on an analytical “hat” that is giving you this particular perspective.

After all, what is a brain? A squishy lump of ugh. Neuroscientists study it and say it’s made of neurons, etc., reducing it to a large network of interactions. Yet out of it comes the miracle of consciousness.

Shoot me for using the words magical and miracle in the same comment. 🙂

“I’m saying mind — whatever it is — supervenes on physical effects.”

I’m going to have to ask you for clarification of what you mean, if this is an important step in your argument.

“The mind, obviously, has many, many properties.”

You have written about some of them yourself in this series – the connectome, number of operations per second, data storage capacity, etc. These are all properties that computers have too, as you explicitly pointed out!

“I think the more urgent question is what makes you think 2+2=4 can experience consciousness?”

Of course it can’t. Neither can 2+2=4 send an email.

“What, exactly, happens?”

I’ll let you know just as soon as I finish filing my patent application for a working mind.

“You must believe that running the algorithm gives rise to consciousness?”

Yes, of course!

November 11th, 2015 at 10:04 am

“Well I think it’s a meaningful statement. It’s an analogy, not a precise definition.”

But it’s not anything we can draw a precise definition with. It’s just hand-waving. That might be a limitation of our understanding, but it might also be something more fundamental.

“I don’t mean literally magical. I mean exactly what I said – seemingly magical.”

Of course! I understood you. I’m saying they’re no more magical-seeming than car engines (which some might find equally magical). I appreciate what you’re getting at, the jaw-dropping amazement. We all got that to some extent.

I was a serious science and electronics geek all my life (and older), so it was cool what we could do with electronics, and delightful and awesome, but it never seemed magical to me. It was more, “Yeah! See what we can do!”

It is possible the early impressions we got of computers shaped our sense of what they might accomplish in the future. I see them as just grown-up electronic calculators. You’re saying you essentially see them on par with humans.

[shrug] I’ll believe that when I see proof. It strikes me too much as a belief or hope. At best a wishful extrapolation.

The irony is that all three people I’m currently debating about this are, I believe, atheists who’ve expressed disdain over the idea of believing in something with no proof. And yet they believe calculators can be minds without one shred of proof.

“Shoot me for using the words magical and miracle in the same comment.”

Not at all! Welcome to the Dark Side! 🐱

About that “miracle of consciousness” though, from our perspective the statement cannot be refuted. That doesn’t mean it necessarily is a miracle, but it does mean it’s really mysterious. And probably incredibly complicated and difficult.

Maybe when computers get to be that complicated we’ll see similarity, but right now there just isn’t any.

“I’m going to have to ask you for clarification of what you mean, if this is an important step in your argument.”

This post is explicitly about that. The ten or so posts leading up to it support it.

This post points out a number of physical phenomena that arise from physical situations but not from software models of those. Microwaves, laser light. If mind is like that, emerges from physical properties, then no algorithm will ever generate it any more than any algorithm can generate laser light.

“Neither can 2+2=4 send an email.”

Of course it can. It does all the time. (Perhaps what I meant wasn’t clear. “2+2=4” is a metaphor for what computers do: math. Binary math, at that, and they don’t even actually do math, they use binary logic to accomplish math. [Do you know what a full-adder is? Tomorrow’s post explores binary logic!] So I’m talking about computer logic here.)
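To make “binary logic to accomplish math” concrete, here’s a minimal Python sketch of a one-bit full adder built only from XOR, AND, and OR, chained into a ripple-carry adder. The function names and the 8-bit width are illustrative choices, not taken from any particular machine. Note that no arithmetic operator appears in the adder itself; it’s bitwise logic that happens to produce sums.

```python
def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """One-bit full adder built only from logic gates (XOR, AND, OR)."""
    partial = a ^ b                              # XOR of the two input bits
    sum_bit = partial ^ carry_in                 # XOR with the incoming carry
    carry_out = (a & b) | (partial & carry_in)   # carry if both set, or one set plus carry
    return sum_bit, carry_out


def ripple_add(x: int, y: int, width: int = 8) -> int:
    """Add two unsigned integers by chaining full adders, one bit at a time."""
    result, carry = 0, 0
    for i in range(width):
        bit_x = (x >> i) & 1
        bit_y = (y >> i) & 1
        s, carry = full_adder(bit_x, bit_y, carry)
        result |= s << i
    return result  # a final carry beyond `width` bits is discarded (overflow)


print(ripple_add(2, 2))  # 4
```

This is “2+2=4” as the hardware actually sees it: gates switching on ones and zeros.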

You’re telling me that computer logic can accomplish consciousness (without being able to at all say how that might happen), and I agree that may turn out to be correct, but at this point it’s little more than a hope.

Is it useful to point out that I’m not trying to persuade anyone about their belief in AI so much as to make the point that what I called Door #1 (hard AI) is nearly as much a leap of faith as what I called Door #4? That’s really my only point. The facts simply aren’t known.

I’m discovering that people with a strong belief in hard AI are like people who are certain that God definitely does, or does not, exist. They seem not fully aware of how much belief their position actually involves.

It’s hard being agnostic! You end up fighting gnostics on both sides! 😮

November 11th, 2015 at 11:44 am

I wouldn’t characterise myself as a strong believer in hard AI, merely that I don’t see any reason why the brain isn’t like a computer. And yes, I’ve read all your posts. I still don’t understand why you believe that the brain is explicitly not like a computer.

As an atheist, I don’t reject the idea of God because it has no proof. I reject the idea because the only evidence in support of God is stuff people wrote in old books, and because there is plenty of evidence that God is invented by humans (those same books demonstrate how good we are at it, and how fixated we are on the idea). The evidence weighs strongly against there being a God.

On the brain being like a computer, I haven’t seen a single piece of evidence that suggests the brain is not like a computer.

November 11th, 2015 at 12:10 pm

“I still don’t understand why you believe that the brain is explicitly not like a computer.”

Where is this computer you see in the brain? It’s definitely not a von Neumann machine. It doesn’t even seem to be a stored-program machine. What computer-like aspects do you see?
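For contrast, a stored-program (von Neumann) machine keeps its instructions and its data in the same memory and steps through the instructions one at a time with a program counter. The sketch below is a toy in Python under that assumption; its four-instruction set (`LOAD`, `ADD`, `STORE`, `HALT`) is hypothetical and doesn’t correspond to any real processor.

```python
def run(memory: list) -> list:
    """Fetch-decode-execute loop over one shared memory of instructions and data."""
    pc, acc = 0, 0  # program counter and a single accumulator register
    while True:
        op, arg = memory[pc]  # fetch the instruction stored at address pc
        pc += 1
        if op == "LOAD":      # decode and execute
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode {op!r}")


program = [
    ("LOAD", 5),   # address 0: acc = memory[5]
    ("ADD", 6),    # address 1: acc += memory[6]
    ("STORE", 7),  # address 2: memory[7] = acc
    ("HALT", 0),   # address 3: stop
    None,          # address 4: unused
    2,             # address 5: data
    2,             # address 6: data
    0,             # address 7: the result is written here
]
print(run(program)[7])  # 4
```

One central loop, one instruction at a time, code and data in the same store: that’s the architecture the comment says the brain doesn’t resemble.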

You recently used an IF-THEN-ELSE construct to illustrate how we use logic, but our ability to use logic doesn’t make us like a computer. (It just means logic is a fundamental tool used in many situations.)

“I reject the idea because the only evidence in support of God is stuff people wrote in old books,”

That’s not a fair analysis, but it’s so far off the topic (and such a minefield), let’s not go there. It does highlight the selectiveness with which believers of any stripe view evidence.

“On the brain being like a computer, I haven’t seen a single piece of evidence that suggests the brain is not like a computer.”

Saying it doesn’t make it true. Show me your cards, dude! XD

It doesn’t look architecturally like any computer we know. It doesn’t behave like any computer we know (complete distributed processing, no CPU). Neurons are sending analog signals in the timing of their firing pulses.

There’s evidence it’s not a computer — not like any we’ve ever seen. You’re saying for you it all weighs on the “is a computer” side, so… how so?
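The point about firing times can be illustrated with the leaky integrate-and-fire neuron, a standard textbook simplification (real neurons are far more complicated). In this Python sketch, the input drives a membrane voltage toward a threshold, and a stronger input crosses it sooner, so the spike time varies continuously with input strength. The parameter values (`tau`, `threshold`, `dt`, `t_max`) are arbitrary assumptions chosen for illustration.

```python
def first_spike_time(input_current, tau=10.0, threshold=1.0, dt=0.01, t_max=100.0):
    """Leaky integrate-and-fire neuron starting at rest (v = 0).

    Returns the time of the first threshold crossing, or None if the input is
    too weak to ever reach threshold within t_max. Units are arbitrary.
    """
    v, t = 0.0, 0.0
    while t < t_max:
        v += dt * (-v + input_current) / tau  # Euler step of dv/dt = (I - v) / tau
        t += dt
        if v >= threshold:
            return t
    return None


for current in (0.8, 1.2, 1.5, 3.0, 6.0):
    print(current, first_spike_time(current))
```

The printed times shrink as the input grows, and the weakest input never fires at all: the information is carried in *when* the pulse happens.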

November 10th, 2015 at 2:17 pm

On Mike’s blog, did you really say “DNA is pure data!”?

November 10th, 2015 at 2:42 pm

No! I deny all knowledge of the parties and events involved!

November 11th, 2015 at 3:48 am

Let’s try a different approach. I assume that you would agree that if we had a very powerful computer, we could use it to model individual atoms, and hence molecules, simulating their behaviour in detail. If they emitted photons, they would not be real photons, but the simulated photons would be exact models of photons.

With a working knowledge of biology, we could model proteins, DNA, individual cells. The modelled cells would behave just like real cells, except that they would of course just be computer models.

With a powerful computer and a detailed knowledge of biology, we could scale this up further and model an entire human. This simulated human would behave like a real one – eating, sleeping, thinking, and so on. If this human dropped a simulated ball on his or her simulated foot, they would not actually feel pain (it’s just a computer, how can it feel pain?), but the simulated brain would respond in exactly the same kind of way as a real physical brain.

Would this simulated human be conscious? If not, why not?

November 11th, 2015 at 10:36 am

“I assume that you would agree that if we had a very powerful computer, we could use it to model individual atoms, and hence molecules, simulating their behaviour in detail.”

I’m not sure I would agree without a discussion about how that computer handles the quantum aspects of things and how it handles chaos.

You go on to assume this computer is powerful enough to model an entire human and their environment. Essentially, you’re describing The Matrix minus the actual humans.

I have a problem believing a computer model of an atom can be accurate enough because of quantum behavior and chaos theory. We know that rounding off real-world quantities in order to calculate with them invalidates the precision of the calculation.
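The rounding point is easy to demonstrate. Even a simple quantity like 0.1 can’t be stored exactly in the binary floating-point numbers computers calculate with, so it’s rounded to the nearest representable value. A minimal Python sketch using only the standard library:

```python
from fractions import Fraction

# Fraction(float) gives the exact value of the binary double actually stored.
stored = Fraction(0.1)
intended = Fraction(1, 10)

print(stored == intended)        # False: 0.1 was rounded to fit the format
print(float(stored - intended))  # the (tiny, nonzero) rounding error

# The same kind of rounding shows up in everyday arithmetic.
print(0.1 + 0.2 == 0.3)          # False
```

The error here is minuscule, but it’s there before the first calculation even starts.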

Modeling a complete human, let alone their environment, as accurately as you’re describing? I find that nearly impossible to believe.

So, no, I don’t agree a powerful enough computer can model atoms exactly let alone molecules let alone a human let alone their environment. I think chaos would get you for sure.

“…but the simulated brain would respond in exactly the same kind of way as a real physical brain.”

Well, the conclusion is based on the premise, and the premise assumes what you’re trying to prove, so it’s circular, but I’ll grant it all and answer your final question:

“Would this simulated human be conscious? If not, why not?”

No. My last dozen posts explain why I think not.

And, again, there are two points to all those posts.

The main point is trying to explain why an algorithmic mind is such a leap. Not that it’s wrong, but that it grounds on facts not in evidence. This is a factual point, and the resistance to it, frankly, has astonished me.

The secondary point is trying to explain my skepticism regarding that leap. To me the gap seems too big to jump with machines that process discrete symbol strings.

I’ve come to believe that, as with most gnostics, acknowledging the leap of faith is seen as such a threat to certainty that there’s a blindness to it. It’s become apparent to me that, despite weeks of posts and tons of comments, my point still isn’t being understood.

I don’t care (that much) if it’s agreed with, but I’d like it to be understood. The failing is likely mine. I know I throw too much detail in and it seems to muddy the waters for people. Those details all seem hugely important to me, so it’s hard to know what to leave out. (Leads to long comments, too. [sigh])

Ah, well. The series is basically over now and we can all return to our normal lives. 🙂

November 11th, 2015 at 11:49 am

So is your objection based on rounding errors? A digital computer would have tiny rounding errors that scale up to prevent consciousness from occurring? But an analogue system can work?

I have friends who work in molecular modelling. Their entire careers are based on the reality that computers can model complex molecules. So I put that forward as evidence that such systems can be modelled on digital computers.

November 11th, 2015 at 12:16 pm

“So is your objection based on rounding errors?”

In part, absolutely. Chaos theory has shown it does matter. We can’t predict the weather accurately, in part, because of rounding errors.

“But an analogue system can work?”

Yes. The difference is as stark as between digital and analog music.

“I have friends who work in molecular modelling.”

I don’t at all disagree systems can be modeled sufficiently to be useful for study. Ask your friends if the models fully account for every aspect of the system. My guess is their answer will be something along the lines of, “What? Oh, no, of course not,…” followed by an explanation of how they model the parts of the system of interest and value.

November 11th, 2015 at 12:27 pm

OK. I thought your objection was based on theoretical grounds, rather than on the practicalities of implementation.

I can certainly agree that building a computer model of a brain is a tremendously hard task and may never succeed.

But researchers have already built computer simulations of the C. elegans nematode and both mouse and rat neocortical columns, with varying degrees of success. Would the success of one of these projects sway your opinion?

November 11th, 2015 at 1:35 pm

“I thought your objection was based on theoretical grounds, rather than on the practicalities of implementation.”

The problems chaos theory presents are both theoretical and practical. My primary objections are all theoretical.

It boils down to this: to calculate with numbers you need numbers you can calculate with. That means finite numbers. Chaos theory addresses the fact that some mathematical systems (iterative non-linear ones) are so sensitive to input conditions that any rounding off of a number (in order to use it in calculations) degrades the calculation.

That degradation increases as the calculation iterates until the model is extremely different from the physical system. That is largely why we can roughly guess the weather tomorrow, but on this date a year from now? We can predict what season it will be, but…
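The sensitivity described here can be seen in the logistic map, x → r·x·(1 − x), a standard textbook example of an iterative non-linear system that’s chaotic at r = 4. It’s not a weather model; it’s a Python sketch, with an arbitrary starting value, of how rounding an input to seven decimal places eventually produces a completely different trajectory.

```python
def logistic_orbit(x0: float, r: float = 4.0, steps: int = 60) -> list[float]:
    """Iterate the logistic map x -> r * x * (1 - x) and return every value."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1 - xs[-1]))
    return xs


exact = logistic_orbit(0.123456789)
rounded = logistic_orbit(round(0.123456789, 7))  # same start, rounded to 7 places

for n in (0, 10, 20, 30, 40):
    print(n, abs(exact[n] - rounded[n]))
```

The gap starts around one part in a hundred million and roughly doubles with each iteration until it’s as large as the values themselves, at which point the two runs no longer resemble each other.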

“But researchers have already built computer simulations of the C. elegans nematode and both mouse and rat neocortical columns, with varying degrees of success. Would the success of one of these projects sway your opinion?”

Fairly crude models as I understand it, but absolutely evidence is what matters. I’m looking forward to advances along these lines! I just hope I live long enough to see them create the first physical or virtual model of a human brain. I wanna be there when they switch it on! 🙂

That is one thing about this area (unlike discussions about God). We will figure out the truth in the matter eventually. Sadly I fear it’ll be after I’m long gone. Bums me out. (On the other hand, I’ll have found out the answer about God by then, so there’s that! 😀 )

November 11th, 2015 at 11:30 am

Here’s a question back: Given this magical computer simulating a virtual human and environs, what if it ran at a rate of one clock cycle per day? Still conscious?

What if the algorithm is performed, not by a computer, but by a very large army of monks using abacuses to accomplish the calculations? Is that system still conscious?

November 11th, 2015 at 11:49 am

Totally. Yes, to both questions!

November 11th, 2015 at 12:00 pm

Well, good! You’re consistent and you fully appreciate the implications! (Not everyone does.) Seems we can at least converge on the exact points we see differently. That’s cool.

January 5th, 2024 at 2:13 pm

[…] on specific characteristics of the brain. I listed some possible sources of such dependence in the No Ouch! post, which focuses on this first […]