Last month I wrote three posts about a proposition by philosopher and cognitive scientist David Chalmers: the idea of a Combinatorial-State Automaton (CSA). I had a long debate with a reader about it, and I’ve been pondering it ever since. I’m not going to return to the Chalmers paper so much as focus on the CSA idea itself.

I think I’ve found a way to express why I see a problem with the idea. I’m going to have another go at explaining it. The short version turns on how mental states transition from state to state versus how a computational system must handle it (even in the idealized Turing Machine sense — this is not about what is practical but about what is possible).

“Once more unto the breach, dear friends, once more…”

There are some side dishes, but here’s the main meal:

In the brain, one mental state follows another without intermediate mental states. Putatively, the brain’s function is fully described by these states. But in the computer, “mental states” are nothing like this.

Therefore a claim of organizational invariance is on very shaky ground (perhaps falsified).

Per Chalmers’ definition, the mental states in question must be at the granularity necessary to describe cognition. This applies to both the physical resolution and the time resolution.

Physical resolution has a range from quantum up to neurons and perhaps even higher. We currently don’t really know what parts of the brain are necessary factors in a simulation — some believe quantum effects may play a role.

Chalmers picks the neuron level, so that is what I’ll discuss here (I suspect a truly accurate simulation requires finer granularity — Chalmers seems to feel a coarser granularity might work — we meet in the middle).

The time resolution is a little tricky since neurons operate asynchronously. One approach is to require a new state when any neuron changes. This ensures all neuron changes are accounted for.

But, since neurons don’t march in lockstep, that means states won’t always have the same time span between them. They’d need time codes to account for variable timing.

Alternatively, we can take states on clock ticks, making those ticks quick enough that we’re guaranteed to never miss a state change. The downside is that in many ticks nothing changes, which produces duplicate states.
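To make the two options concrete, here is a minimal Python sketch. It is a toy under loud assumptions: three "neurons" with integer values, a made-up list of change events, and hypothetical function names (`event_driven_states`, `clocked_states`). Nothing here comes from Chalmers; it only illustrates time-coded states versus clock-tick states.

```python
# Hypothetical toy data: each event is (time_ms, neuron_index, new_value).
EVENTS = [(10, 0, 1), (25, 2, 1), (60, 0, 0)]
INITIAL = (0, 0, 0)


def event_driven_states(initial, events):
    """Emit one state per neuron change; each state carries a time code."""
    state = list(initial)
    out = [(0, tuple(state))]
    for t, idx, val in sorted(events):
        state[idx] = val
        out.append((t, tuple(state)))
    return out


def clocked_states(initial, events, tick_ms, end_ms):
    """Emit one state per clock tick; a tick with no change repeats the prior state."""
    state = list(initial)
    pending = sorted(events)
    out = []
    i = 0
    for k in range(end_ms // tick_ms + 1):
        t = k * tick_ms
        # Apply every change that has happened up to this tick.
        while i < len(pending) and pending[i][0] <= t:
            _, idx, val = pending[i]
            state[idx] = val
            i += 1
        out.append((t, tuple(state)))
    return out


event_states = event_driven_states(INITIAL, EVENTS)
tick_states = clocked_states(INITIAL, EVENTS, tick_ms=5, end_ms=60)

# Count ticks whose state is identical to the tick before it.
duplicates = sum(
    1 for prev, cur in zip(tick_states, tick_states[1:]) if prev[1] == cur[1]
)
```

In this toy run the event-driven version needs only four time-coded states, while the clocked version emits thirteen, nine of which merely repeat the previous tick. The clocked version also assumes the tick is short enough that no two changes fall inside the same tick.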

For now, let’s just assume the system handles this appropriately, that states are calculated and made available at the right time.

There is also the point that, in many views, the timing doesn’t matter. States could be made available at any speed, with any delay between states, and it shouldn’t matter to the cognition (any more than it matters in most software).

The point here is that a state-based system replicating a real-time physical system is decidedly a non-trivial proposition. As a computational approach to solving problems, state-based systems have many advantages, but they also have the biggest footprint and are, in some ways, the hardest to pull off.

§

That’s because the function that calculates the next state makes the timing issues just mentioned look trivial. Depending on how the state system is designed, that function may be a serious sticking point.

The alternative is a trivial function that just rolls out existing states from a table of states. I discussed this extensively in previous posts.

But let us set aside all such details and assume we have the following:

- FSA:Brain — essentially, the dynamic living brain itself.

- CSA:Brain — a program; a state-driven brain simulation.

- FSA:Computer — a machine that can run CSA programs.

A little unpacking is necessary to explain the terms.

By FSA (Finite-State Automaton) I mean (the abstraction of) any physical system operating according to physical laws. The idea is the materialist one that any process has some Turing Machine that describes it completely.

Yes: For the moment, I’m assuming computationalism is true.

I’m assuming FSA:Brain is such a complete description of the brain as to essentially be the brain. Likewise, FSA:Computer is the fully detailed abstraction of how the computer works.

CSA:Brain is a real program that implements FSA:Brain. (It is what Chalmers describes as a CSA.)

Note that we currently have no idea what FSA:Brain is, let alone what the CSA:Brain implementing it would be. We assume FSA:Brain exists, because under computationalism there is some TM that describes the brain.

And if FSA:Brain exists, we ought to be able to derive CSA:Brain easily enough.

The presumption then is that:

FSA:Brain = FSA:Computer(CSA:Brain)

That is, the brain is identical to a computer running CSA:Brain. If Chalmers is right, both should experience identical mental states and cognition.

(Note that Chalmers only claims cognition here, not phenomenal experience.)

§ §

However.

§ §

This turns on what Chalmers calls organizational invariance preserving the causal topology of the system.

I’m not sure it does.

It also turns on the idea that two systems that can be said to share a common abstraction have a meaningful identity — one strong enough to say both experience the same thing.

I’m not sure that’s true.

§

In the brain, a state change involves all neurons at once. (The CSA is defined in terms of vectors comprised of all neurons.) Note also that a neuron state is a physical, chemical, complex condition.

In the computer, the hardware-software combination changes the numeric values of an array of numbers one-by-one. Note here that a “neuron state” is just a bit pattern that stands for the physical, chemical, complex actual neuron state.

The crucial point is that a single-thread algorithm (which is what we presume in an idealized case such as this) cannot change all neuron states at once. (I’ll consider the alternative to this below.)

The upshot is that a given state changes to a new state slowly, one “neuron” (memory location) at a time.

Which means there are as many intermediate states as there are neurons.

Imagine the system is in state N. After changing one vector value to the new value for state N+1, the vector is in an illegal state with one component in the next state while all the rest remain in the current state.

This change ripples down the vector until, after all have been changed, now the vector is in the new legal state, N+1.
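The ripple is easy to see in a toy. Below is a minimal Python sketch, assuming a five-component vector of made-up values and a hypothetical helper named `sequential_update`; a real state vector would have billions of components, and this is only an illustration of the single-thread case.

```python
def sequential_update(current, target):
    """Overwrite `current` with `target` one component at a time.

    Yields a snapshot of the whole vector after each single write,
    which is what a single-thread update actually passes through.
    """
    vec = list(current)
    for i, val in enumerate(target):
        vec[i] = val
        yield tuple(vec)


# Hypothetical legal states N and N+1; every component differs.
state_n = (0, 1, 0, 1, 1)
state_n1 = (1, 0, 1, 0, 0)

snapshots = list(sequential_update(state_n, state_n1))

# Snapshots that match neither legal state are the "illegal" transitory ones.
illegal = [s for s in snapshots if s not in (state_n, state_n1)]
```

Here five writes produce five snapshots: four mixed vectors that are neither state N nor state N+1, and only the last one the legal state N+1. If some components happened not to change between N and N+1, those writes would leave the vector as it was, so the count of distinct transitory states would be smaller, but the one-at-a-time ripple is the same.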

§

So, firstly, the state vector goes through billions of illegal transitory states between each legal state.

This raises the question of whether those states can matter. If only one state is a legal CSA state for every 86 billion those memory locations go through, how can the values of the state vector possibly mean anything?

What makes that one legal state “the one” while billions of others aren’t?

Secondly, the computer goes through dozens or hundreds of computer states accomplishing even each transitory vector state, let alone the many billions of computer states involved in accomplishing one legal vector state change.

This is the physical causality computationalists often point to as substantiating the idea that physicality is necessary. But it turns out to be entirely at the wrong level to have value. (I’ll get to that in a later post.)

Thirdly, the “neuron state” is a number that stands for a physical state. That simply isn’t the same thing as a physical neuron in a chemical state.

Neurons have a spatial orientation to each other as well as a topological wiring (the network). Memory locations have no connection with each other. Even the individual bits of a single location have no relation to each other.

Bottom line, it’s really hard to see where any causal topology is preserved given that the organization of these two systems is vastly different.

§ §

Perhaps there are adjustments we can make to improve things.

Can we eliminate the first, worst, problem of all those illegal transitory states? Is there a way to change all 86-billion vector components at once?

Yes, of course, but it requires that each component be separate. They can’t even be housed in the same RAM chips. They also require separate logic circuits for each to accomplish the change en masse.

Which pretty much puts us back to a Positronic Brain, a physical emulation.

I can see no way out of it computationally, and it suggests that the state vector doesn’t do much towards preserving causal topology.

§

Even a split-buffer technique has problems.

The idea is to use two memory arrays as state vectors, which allows modifying one while the other is considered “active” (whatever that means in this context). Once the changes are complete, the system switches to treating the other buffer as the active one. (The technique, usually called double buffering, is common in video buffering.)

The problem is: what does this say about the state vector? It seems to make it even more meaningless.

What can it even mean to say that one buffer, or the other, is the legal mental state? Programmatically, it’s just an address of one or the other.

As a geeky sidenote, there could be good reason to use a split-buffer for the same reason one does when coding the Conway “game” of Life.

While calculating one change, input from other cells matters, but it has to be the input from the current condition. If it happens the system has already updated those cells, those inputs are no longer valid.

Split-buffer allows taking inputs from the active buffer while calculating changes into the alternate buffer. This way the inputs stay stable.
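For the geeks, here is a minimal Python sketch of that Life example. The assumptions are mine: a small 5×5 grid with dead cells beyond the edges, and hypothetical function names (`step_split_buffer`, `step_in_place`). It contrasts reading from the active buffer while writing to the alternate one against updating a single buffer in place.

```python
def live_neighbors(grid, r, c):
    """Count live cells among the eight neighbors (cells off the grid are dead)."""
    total = 0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if (dr, dc) == (0, 0):
                continue
            rr, cc = r + dr, c + dc
            if 0 <= rr < len(grid) and 0 <= cc < len(grid[0]):
                total += grid[rr][cc]
    return total


def rule(alive, n):
    """Conway's rule: birth on 3 neighbors, survival on 2 or 3."""
    return 1 if n == 3 or (alive and n == 2) else 0


def step_split_buffer(active):
    """Read only from `active`; write every result into a separate buffer."""
    alternate = [[0] * len(active[0]) for _ in active]
    for r in range(len(active)):
        for c in range(len(active[0])):
            alternate[r][c] = rule(active[r][c], live_neighbors(active, r, c))
    return alternate


def step_in_place(grid):
    """Update a single buffer cell by cell, so later cells see already-updated inputs."""
    grid = [row[:] for row in grid]  # copy so the caller's grid is untouched
    for r in range(len(grid)):
        for c in range(len(grid[0])):
            grid[r][c] = rule(grid[r][c], live_neighbors(grid, r, c))
    return grid


# A "blinker": three live cells in a row, which should flip to a column.
horizontal = [
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
]
vertical = [
    [0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0],
]

good = step_split_buffer(horizontal)
bad = step_in_place(horizontal)
```

With the split buffer the blinker flips to vertical as it should. The in-place version gets it wrong because, by the time it reaches a cell, some of that cell's neighbors already hold next-generation values.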

§ §

If the state vectors don’t seem to mean much, and don’t seem to be the source of causal topology, then that topology, if it exists, must be in the function used to calculate states.

I think this is a good stopping point. Next time I’ll take a closer look at what kind of function could accomplish our goal.

You won’t be surprised to learn there are some serious issues there, too.

Stay stately, my friends!

∇

July 15th, 2019 at 12:53 pm

Wonder if you are aware of this:

https://gizmodo.com/new-time-slice-theory-suggests-youre-not-as-conscious-a-1770950927

The idea is that consciousness works in time slices.

July 15th, 2019 at 1:26 pm

I’m a little confused by the article. It talks about a delay of about 400 ms from low-level perception to conscious awareness, but I don’t see where it talked about time slices. It says, “And it all happens in blips, or ‘time slices,’ lasting for as long as 400 millisecond intervals.” But that turns out to be the delay.

But it’s not clear that those delayed perceptions aren’t presented in a smooth stream once they do reach awareness. It says the low-level scanning “is done semi-continuously” so why wouldn’t the stream of presentations, albeit delayed, be at least semi-continuous?

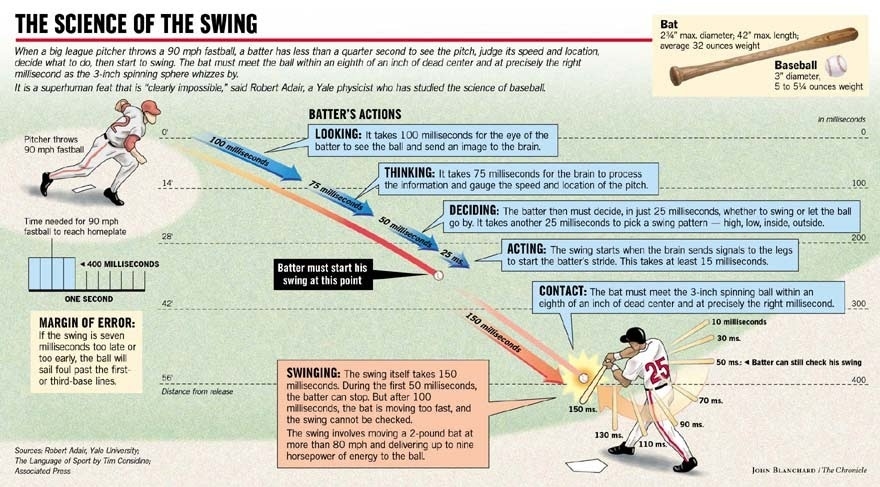

As a side factoid, a 90 MPH fastball in baseball reaches the plate in about 400 ms. The batter has to recognize the pitch by about 100 ms to decide whether to swing or take because it requires time to swing the bat. Certain aspects of what is going on are in much smaller time slices:

July 15th, 2019 at 1:38 pm

From other reading it seems like the frames can be longer or shorter. The main research I’ve read about relates to flashing lights.

If I remember correctly, they were somehow monitoring brain waves to actually detect a frame. If two lights flashed one after another in the same frame, they were reported as one light. If the second light was in the next frame it was seen as two lights. Apparently the length of frame can vary by individual also. It might be great baseball players can operate in a shorter frame. The author Evan Thompson in a book was even arguing that meditation training might actually improve or reduce the time frame.

July 15th, 2019 at 2:24 pm

There is the phi effect which could also account for seeing two lights as one, so I’m not sure what this says about our perceptional “frame rate.”

Thing is, 400 ms is really, really slow. One frame of film (at 24 FPS) is only 41.667 ms, and we can see individual frames if they stand out. And that 400 ms flight of the baseball looks pretty smooth to me (and I’m certainly not a pro baseball player — no way could I physically react in time). FWIW, 400 ms is almost ten frames of film — it’s close to half a second!

Given that our high-level perception at least seems smooth, and that our low-level inputs are smooth, what’s the proposition? That our brains go from smooth signals to very coarse time “blips” and then get smoothed out again as we experience? This seems to need further exploring.

I do definitely believe both muscle and perception speed can be increased through training. It’s especially important during development. That’s why I make it a point to include lots of fast-moving things when I raise a puppy. Trains their brain!

July 15th, 2019 at 2:12 pm

The actual article is interesting.

https://journals.plos.org/plosbiology/article?id=10.1371%2Fjournal.pbio.1002433

It is possible also baseball players are making the determination to swing before the trajectory of the baseball is actually conscious to them. In other words, there is some perception that maps the trajectory that triggers the reaction to swing but it occurs unconsciously. It is interesting, however, that the 400 ms in baseball for the ball to reach the plate actually aligns with 400 ms in the study.

July 15th, 2019 at 2:37 pm

This is all interesting to the max, and as someone who thinks the physicality of the brain matters, timing and synchronization do play a big role in my thoughts about brain and mind.

But unless the proposition is that such a coarse granularity is sufficient to emulate brain processes, I don’t want to wander too far down a rabbit hole that, as interesting as it is, isn’t relevant to the topic of CSAs or, in particular in this post, the state vector (idea or implementation).

I think you would agree the processes in the brain that support any putative time slices have a finer grain that would necessarily be part of any emulation?

That means the 400 ms aspects emerge from the operation of the system (as it does in the brain)?

July 15th, 2019 at 2:57 pm

“It is possible also baseball players are making the determination to swing before the trajectory of the baseball is actually conscious to them.”

Maybe a little, kinda, but not really. (Although I can only report what I’ve heard players talk about. I am not in the MLB, just a big fan of the sport.)

If you looked at that graphic, they’re deciding in the first 100 ms, and that decision is based on a fairly large set of variables (which is part of what makes baseball amazing). Many of those variables are known as the pitcher begins the pitch: the current count, how many are on base, the current score, how well the pitcher is doing today, and a few others.

But in that 100 ms the batter uses the flight of the ball as the last piece of data to tip the scale either way. Part of the skill of pitching is making all your pitches look the same in that first 100 ms (it’s more subtle than that, but basically).

So, for example, a pitcher will throw a fastball that goes straight over the heart of the plate. They’ll also have a “breaking ball” of some kind — a pitch that starts straight, but breaks left, right, or down, as it nears the plate. But if both those pitches look the same in the first 100 ms of flight, the batter has to guess which one it is.

When you watch the pitch and then how the batters swing or take, it’s very clear they are reacting to the flight of the ball. If you watch closely, you’ll notice some batters stand as far back in the batter’s box as possible to give themselves just a split second more time.

It’s actually kinda scary standing there while someone throws hard objects at you in the 70 to 100 MPH range. Batters do get injured. One was killed (long ago). Pitchers get hurt, too, on direct line hits, which are even harder and faster than pitches.

“It is interesting, however, that the 400 ms in baseball for the ball to reach the plate actually aligns with 400 ms in the study.”

That really caught my eye. 🙂

I’ve come to believe baseball, over its 150+ year history, has evolved into a finely balanced game between offense and defense. I believe the outcome of any given baseball game is close to 50/50. It’s well known that the worst teams still win about 1/3 of their games and the best teams lose about 1/3 of their games. The 90 feet between bases seems perfectly balanced.

I think batting and pitching approach what the human body is capable of. I had a chance once, when I was younger, to throw a baseball and have it timed. I couldn’t break 40 MPH. Some pitchers break 100 MPH, but most can get into the 90s. And as we’re talking about, batting involves some pretty tight timing.

That 400 ms may very well be no coincidence. Merely changing the height of the pitcher’s mound by a few inches affects the dynamic.
