Tag Archives: entropy

I don’t usually write two Friday Notes posts in one month, but I was dog-sitting my funny little “nephew” Bentley for a week, and every time I don’t post for a while it’s hard to get back into blogging mode. In fact, it’s harder each time. I find social media less and less interesting or rewarding.

Some of that is on me, but more of it is disappointment and disgust with social media and technology companies in general. A bit more on that below.

Mostly, though, I wanted to post, at long last, the last two notes that have been lingering in my Apple Notes app for years (in one case, since 2018).

Continue reading

17 Comments | tags: Bentley, Donnie Yen, entropy, gravity, Ip Man, Major League baseball, MLB | posted in Friday Notes, Movies

Last time I began explaining my “CD collection” analogy for entropy; here I’ll pick up where I left off (and hopefully finish; I seem to be writing a lot of trilogies these days). There’s more to say about macro-states, and I also want to get into the idea of indexing.

I make no claims for creativity with this analogy. It’s just a concrete instance of the mathematical abstraction of a totally sorted list (with no duplicates). A box of numbered index cards would work just as well. There are myriad parallel examples.

One goal here is to link the abstraction with reality.

Continue reading

13 Comments | tags: entropy | posted in Physics

Entropy is a big topic, both in science and in the popular gestalt. I wrote about it in one of my very first blog posts here, back in 2011. I’ve written about it a number of other times since. Yet, despite mentioning it in posts and comments, I’ve never laid out the CD collection analogy from that 2011 post in full detail.

Recent discussions suggest I should. When I mentioned entropy in a post most recently, I made the observation that its definition has itself suffered entropy. It has become more diffuse. There are many views of what entropy means, and some, I feel, get away from the fundamentals.

The CD collection analogy, though, focuses on only the fundamentals.

Continue reading

30 Comments | tags: entropy | posted in Physics

It’s time for another edition of Friday Notes, a dump of miscellaneous bits and ends. (Or do I mean odds and pieces? Odd bits and end pieces? Whatever. Stuff that doesn’t rate a blog post on its own, so it gets roped into a package deal along with other stray thoughts that wandered by.)

There’s no theme this time; the notes are pretty random. Past editions have picked the low-hanging fruit, and the pace has slowed. I’ve managed to whittle away a good bit of the pile.

Maybe someday there will be no more notes and my blog will be totally in real time!

Continue reading

56 Comments | tags: calculator, deer, El Coyote, entropy, graphs, margarita | posted in Friday Notes

There is a key rule of thumb (or heuristic) in science known as the Copernican Principle. It essentially says: “We’re not special.” (The “we” in question being the human race.) It’s named after Nicolaus Copernicus, who, in 1543, forever banished the Earth and its thin film of humanity from the center of the universe.

Ever since, the scientific view of humanity is that it’s just part of the landscape, nothing particularly special, a mere consequence of energy+time creating increasing organization in systems. We may be complex, perhaps even a little surprisingly so, but we’re still nothing special.

Yet it seems to me that, at least in some ways, we really are.

Continue reading

20 Comments | tags: dark energy, dark matter, Drake Equation, entropy, Fermi Paradox | posted in Science

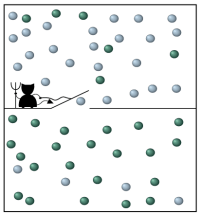

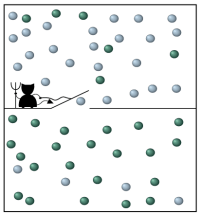

particles & their momenta

Over the decades I’ve seen various thinkers assert that entropy causes something. Usually it’s said that entropy causes time, or alternatively that entropy causes time to run in only one direction. I think this is flat-out wrong and puts the trailer before the tractor. (Perhaps due to a jack-knife in logic.)

The problem I have is that I don’t understand how entropy can be viewed as anything but a consequence of the dynamical properties of a system evolving over time according to the laws of physics. Entropy is the result of physical law plus time.

It’s a “law” only in virtue of the laws of physics.

Continue reading

21 Comments | tags: arrow of time, entropy, time | posted in Physics

Last time I started talking about entropy and a puzzle it presents in cosmology. To understand the puzzle we have to understand entropy, which is a crucial part of our view of physics. In fact, we consider entropy to be a (statistical) law about the behavior of reality. That law says: Entropy always increases.

There are some nuances to this, though. For example, we can decrease entropy in a system by expending energy. But expending that energy increases the entropy in some other system. Overall, entropy does always increase.

This time we’ll see how Roger Penrose, in his 2010 book Cycles of Time, addresses the puzzle entropy creates in cosmology.

Continue reading

27 Comments | tags: black hole, cosmology, entropy, galaxy, Immanuel Kant, laws of thermodynamics, Roger Penrose, thermodynamics, universe | posted in Physics

I’ve been chiseling away at Cycles of Time (2010), by Roger Penrose. I say “chiseling away,” because Penrose’s books are dense and not for the fainthearted. It took me three years to fully absorb his The Emperor’s New Mind (1986). Penrose isn’t afraid to throw tensors or Weyl curvatures at readers.

This is a library book, so I’m a little time constrained. I won’t get into Penrose’s main thesis, something he calls conformal cyclic cosmology (CCC). As the name suggests, it’s a theory about a repeating universe.

What caught my attention was his exploration of entropy and the perception that our universe must have started with extremely low entropy.

Continue reading

12 Comments | tags: arrow of time, big bang, cosmology, entropy, laws of thermodynamics, Roger Penrose, thermodynamics, time, universe | posted in Physics

The Thanksgiving holiday we celebrate here in the USA has some unfortunate overtones regarding its colonial origin. Still, the idea of a festival of thanks is an ancient one — thanks for a good harvest or a good hunt. Or, in our case, thanks for helping us not die last winter.

As with Christmas or the Copenhagen interpretation, we tend to take a “shut up and calculate!” approach to the holidays. “Shut up and shop!” in the case of the Winter Solstice, and “Shut up and give thanks!” today.

One thing we can be very thankful about is patterns…

Continue reading

4 Comments | tags: atoms, DNA, electrons, entropy, Mandelbrot, Mandelbrot fractal, photons, quarks, Thanksgiving | posted in Life

There is something about the articles that Ethan Siegel writes for Forbes that doesn’t grab me. It might be that I’m not in the target demographic; he often writes about stuff I explored long ago. I keep an eye on him, though, because sometimes he comes up with a taste treat for me.

Such as his article today, No, Thermodynamics Does Not Explain Our Perceived Arrow Of Time. I jumped on it because the title declares something I think many have backwards: the idea that time arises from entropy or change. Quite to the contrary, I think entropy and change are consequences of time (plus physics).

Siegel makes an interesting argument I hadn’t considered before.

Continue reading

62 Comments | tags: arrow of time, entropy, Ethan Siegel, got time, is time fundamental, laws of thermodynamics, thermodynamics, time | posted in Opinion, Physics
