It’s time for another edition of Friday Notes, a dump of miscellaneous bits and ends. (Or do I mean odds and pieces? Odd bits and end pieces? Whatever. Stuff that doesn’t rate a blog post on its own, so it gets roped into a package deal along with other stray thoughts that wandered by.)

There’s no theme this time; the notes are pretty random. Past editions have picked the low-hanging fruit, and the pace has slowed. I’ve managed to whittle away a good bit of the pile.

Maybe someday there will be no more notes, and my blog will be totally in real time!

This happened back in August: I was sitting in my living room when motion outside my porch doors caught my eye. Three deer walking past. I do live in a city with lots of wooded sections that deer inhabit, and I’ve seen them wandering around the edges of my neighborhood.

But since I moved here in 2003 I’ve never seen them wander through the block-long grassy lane between condo structures here. Those porch doors of mine look out across maybe 15-20 yards of grass to the next structure.

Fairly close neighbors in my “backyard”

To the left, the lane extends past another set of structures and ends at the suburban road that runs past the complex. To the right, the lane ends in an open area where people play with their dogs (often using this grassy lane to get there). Beyond that is a small road that runs through the complex and then another line of condo structures.

My point is that deer wandering along that lane is weird. First time I’ve seen that in 18 years here. (Whoa! Eighteen years. Time does fly.)

The story gets funnier. A short while later I saw a small dog running, as though chasing the deer, but from very far behind. I had just enough time to wonder about the dog when a woman running after him followed. It was a funny little parade outside my porch door.

Little things like that keep us fascinated by life. You never know what you’ll see next (if you keep your eyes open).

§

An old college friend who still lives out in California and I were reminiscing about a Mexican restaurant we used to frequent, El Coyote. Back in the day, at least among a certain crowd, it was the second trendiest Mexican joint in Hollywood. (The trendiest was Lucy’s El Adobe on Melrose.)

I pulled up Google Maps, and it’s still there:

El Coyote in Los Angeles. (Image from Google Maps.)

It was a great place. The waiters were either middle-aged Mexican mother types in Spanish dresses or the more common young gay Hollywood male waiter types. Both were a lot of fun, although the former often didn’t have much English.

The place showed signs of having rooms added on over time. Often the host led you and your party on a long winding path to some remote room. The interior was dark and best described as Tijuana Tacky, right down to (I kid you not) lots of those pictures painted on black velvet. (None of Elvis, though, if I recall correctly.) The pictures were in heavy ornate wooden frames with lots of little seashells glued to them. Then they were spray-painted. Gold. It was glorious!

The food was mostly Tex-Mex but the menu leaned into some more authentic Mexican dishes. It was good and inexpensive, but most Mexican places in Los Angeles are. They’re as common as gas stations, and nearly all of them are quite good (or at least passable).

What made El Coyote stand out (other than the décor and waitrons) was the equally inexpensive, but very potent, double margaritas. It’s where I learned to drink them on the rocks. It took me years to duplicate their recipe.

§

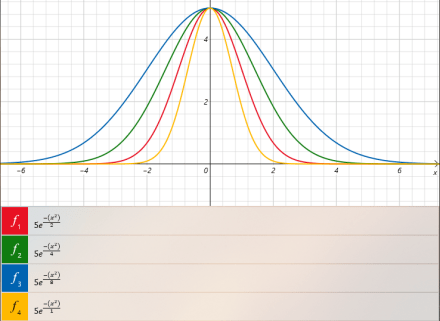

Did you know that the Windows Calculator — the one that comes with Windows — is a graphing calculator? I didn’t either until I read about it as a Windows Tip in some Windows Secrets article (not as visual as Victoria’s but still interesting).

Windows Calculator – Graph mode

Select the menu in the upper left, then select Graphing on the menu. You’ll get an empty graph. Click the button in the upper right to go back and forth between the graph and the functions it displays.

You can enter your formulas in plain text. Use the ^ symbol for exponents. Use parentheses to group things as required. (A little experimentation will make it pretty clear.)

It’s a handy thing to have if you just want to see what the curve of some equation looks like. That’s a need I’m sure we’ve all experienced from time to time. (Back in the day this would have made my calculus homework a joy!)

§

Speaking of math, the topic of entropy keeps popping up in posts and videos. It got me thinking more deeply about my CD collection analogy for it, and I realized it’s harder to quantify the entropy than I’d thought. It’s even possible that putting a concrete number to a given microstate might not just be hard, but NP-hard (which currently means “effectively impossible”).

I’m still researching this, so I won’t get into the specifics or the math here, but the difficulty arises because the microstates of a sorted collection are permutations of that collection. Quantifying the entropy of a permutation is much harder than for, say, a bit string or unordered collection (such as gas molecules or atoms).

[The popular notion of entropy as “disorder” isn’t a bad one, but it requires some explaining and examples. One can also view it as a “gap in knowledge” between different descriptions of a system. For example, the temperature and pressure of a gas (two numbers) versus information about position and momentum of each gas molecule (lots of numbers).]

The requirement is: given some permutation of an ordered collection, its entropy is the minimum number of moves necessary to restore the collection to order. Coming up with a number isn’t hard — bubble sort the permutation and count the moves. That provides a number, but it won’t be anywhere near the minimum number required. That’s what’s apparently NP-hard.
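To make the counting concrete, here is a minimal Python sketch. It assumes a “move” means pulling one CD off the shelf and re-inserting it anywhere, which is only one possible reading and is my assumption, not a settled definition. Under that reading, the minimum number of moves is the collection size minus the longest run of CDs already in correct relative order (the longest increasing subsequence). Other readings of “move” may well be much harder to minimize. The function names and the sample shelf are hypothetical.

```python
from bisect import bisect_left


def bubble_sort_swaps(perm):
    """Count the adjacent swaps bubble sort makes while sorting a copy of perm."""
    items = list(perm)
    swaps = 0
    for end in range(len(items) - 1, 0, -1):
        for i in range(end):
            if items[i] > items[i + 1]:
                items[i], items[i + 1] = items[i + 1], items[i]
                swaps += 1
    return swaps


def min_reinsert_moves(perm):
    """Minimum pull-and-reinsert moves (assumed move model): n minus the
    length of the longest increasing subsequence. Assumes distinct items."""
    tails = []  # tails[k] = smallest tail of an increasing run of length k + 1
    for x in perm:
        pos = bisect_left(tails, x)
        if pos == len(tails):
            tails.append(x)
        else:
            tails[pos] = x
    return len(perm) - len(tails)


# Hypothetical shelf: the last CD has been shoved to the front.
shelf = [5, 1, 2, 3, 4]
print(bubble_sort_swaps(shelf))   # 4 adjacent swaps
print(min_reinsert_moves(shelf))  # 1 move: put the 5 back at the end
```

The gap between the two numbers grows with how far a CD has strayed, which is the sense in which the bubble sort count overshoots.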

Grist for another mill. Thinking about entropy generated a side thought: The definition of entropy has itself suffered entropy. It has become more chaotic and diffuse as more and more accounts and metaphors of it exist in the popular consciousness. That’s not ironic; that’s the second law of thermodynamics in action. (It also illustrates quantum decoherence.)

§

I saw Dune (2021). Meh. I found it slow and full of itself. About halfway through I realized how much film time was devoted to people walking. (My subtitle for the film is, Dune: People Walking.)

My guess is that, in time, this film won’t be any more of a treasured classic compared to its source text than The Hobbit is compared to its text. An even better comparison: Blade Runner (1982) is a film classic. Blade Runner 2049 (2017), which was Villeneuve’s previous film, is, I’d guess, also destined to be forgotten.

I’ll get into this in more detail another time, but as stories modern films usually suck. They’re little more than amusement park rides with a shallow narrative playing second fiddle. They’re about sensation, not narrative. (This is why those seeing the film in IMAX find it so enthralling.) Villeneuve’s films are a lot more substantial than most; I’ll give him that easily. I really liked Arrival (2016).

Grist for yet another mill; my note is about the book and the story, which I like enough to have read several times over the decades. Both my hardcover and softcover copies are pretty beat up. None of the sequels ever really grabbed me, though, and neither did Herbert’s other work. The contrast between Dune and his other work led some to think he hadn’t actually written the book. (Shakespeare has a similar problem regarding all his work.)

Seeing the posts and comments, I think Dune (1965) is one of those books, like Lord of the Rings (1954) and many others, that people discover at a younger age and, because they are such substantial surprising works, take to in a big life-long way. For me that was Star Trek, starting in 1966 when it first aired.

But I’ve been reading science fiction almost as long as I’ve been reading, and it seems that life-long diet provides some buffering against any one text grabbing me big time. I’ve taken bits and pieces (and odds and ends) from all the worthy texts, but hitched my wagon to none. (Except Star Trek, kinda, but I got over it after 50 years.)

§

Lastly, I’ve been watching video talks by theoretical physicist Chiara Marletto, who has been making the rounds promoting Constructor Theory. Until now I’d heard it mentioned, looked at its Wiki entry,… and thought, ya, whatever.

How many well-known theorists do you recognize here?

Then I stumbled on a video talk by Scott Aaronson that sounded interesting, On the Hardness of Detecting Macroscopic Superpositions. That video was part of a recent conference, On the Shoulders of Everett, which turned out to have a number of other interesting videos. One of those was by Marletto (being a colleague of David Deutsch, who was also there, she takes the MWI as the correct theory). Her talk wasn’t about MWI at all, though; it was about Constructor Theory.

Which led to watching a number of other video talks she gave. (I confess to being totally smitten.) She gives essentially the same talk in each, so the material is becoming somewhat familiar. The idea is interesting, and I’m sure I’ll post about it once I’ve given it some thought. At this point, I wonder about the generality and universality implied by the program. At such high levels of abstraction, is it possible to make anything other than trivially true statements? I wonder about the value.

I also question the justification for the line from universal computer to universal constructor. I have a strong “so what” sense about that, too. Firstly, it’s already kind of there if we want to make one; secondly, it’s not what computing is for.

I’ll note, too, that this theory goes back to 2012, and I’ve found some old videos of Marletto giving essentially the same talk. It seems the program hasn’t moved forward much in nine years. Marletto’s media blitz seems to be, at least in part, a bid for funds — she’s made off-the-cuff remarks about the general difficulty of funding in several videos.

Still, it’s an interesting approach, and if fruitful might play a role in moving fundamental physics beyond its current logjam.

§ §

Stay constructing, my friends! Go forth and spread beauty and light.

∇

November 19th, 2021 at 9:16 am

I expect you know the “expected surprise” idea as a way to think about entropy? Surprise = -log(p); Expected Surprise = ∑ p × (-log(p)). It seems reasonable to think that how surprised you are to find a particular CD just where you find it doesn’t necessarily have to be a formal number (so it doesn’t necessarily have to be associated with a probability), that surprise might be defined and evolve in a complicated way, and that how surprised one is at any given complicated circumstance might be an even more complicated matter even to the point of it being uncomputable. Then I suppose expected surprise is not less complicated than that.
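As a rough illustration of those two formulas, here is a small Python sketch. In the standard Shannon definition, the sum runs over the possible outcomes, each with its own probability p. The choice of log base 2 (so the answer comes out in bits) and the function names are assumptions made for the example.

```python
import math


def surprise(p):
    """Surprise of an outcome with probability p, in bits (base 2 is an assumption)."""
    return -math.log2(p)


def expected_surprise(probs):
    """Sum of p * surprise(p) over all outcomes; zero-probability outcomes contribute nothing."""
    return sum(p * surprise(p) for p in probs if p > 0)


print(expected_surprise([0.5, 0.5]))   # fair coin: 1.0 bit
print(expected_surprise([0.125] * 8))  # eight equally likely outcomes: 3.0 bits
print(expected_surprise([1.0]))        # a certain outcome: 0.0 bits
```

Note that this only works once every outcome has a probability attached, which is exactly the part that is hard to pin down for a misplaced CD.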

November 19th, 2021 at 10:40 am

Ha! 😀 This is exactly what I meant about the definition of entropy itself suffering entropy. The notion of “surprise” — let alone “expected surprise” — diffuses the definition. (Question: What are you summing over? Multiple p?) As you suggest, trying to quantify “surprise” might not be computable.

If an Adam Ant CD is misplaced among the Dave Matthews CDs, am I less surprised than if it’s found among the Yes CDs? Does its distance from “home” matter? Or does it count as a single CD out of place, end of story (because one move restores the sort). There is a measure called the Kendall tau distance that seems interesting in quantifying distance.
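For reference, a minimal Python sketch of the Kendall tau distance: it counts the pairs of items whose relative order differs between two arrangements. This is the simple quadratic version, fine for a toy shelf; the three-artist shelf and the function name are just for illustration.

```python
from itertools import combinations


def kendall_tau_distance(order_a, order_b):
    """Number of item pairs that appear in opposite relative order in the two lists.
    Assumes both lists hold the same distinct items."""
    position_in_b = {item: i for i, item in enumerate(order_b)}
    ranks = [position_in_b[item] for item in order_a]
    return sum(
        1
        for i, j in combinations(range(len(ranks)), 2)
        if ranks[i] > ranks[j]
    )


sorted_shelf = ["Adam Ant", "Dave Matthews", "Yes"]
near_miss = ["Dave Matthews", "Adam Ant", "Yes"]  # Adam Ant one slot from home
far_miss = ["Dave Matthews", "Yes", "Adam Ant"]   # Adam Ant at the far end

print(kendall_tau_distance(near_miss, sorted_shelf))  # 1
print(kendall_tau_distance(far_miss, sorted_shelf))   # 2
```

Both shelves are a single move away from sorted, but the distance grows with how far the CD has wandered, so this measure does treat distance from “home” as mattering.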

My understanding is that the notion of “surprise” is defined under Shannon entropy (rather than Boltzmann entropy), and I’ve always been vaguely askance at Shannon entropy. The formulas are similar, sure, but I wish he’d picked a different term. To me, even the “lack of knowledge” view comes more from the information side, and I’m interested in how Boltzmann would characterize my CD collection.

But I’m coming to understand what I need is essentially the minimum sort required to re-order a permutation, and that’s apparently NP-hard. Related to the Traveling Salesman problem of finding the shortest path, I expect.

November 19th, 2021 at 11:14 am

Entropy can be useful when we’re working with effectively closed and limited systems, but I’ve stopped thinking about entropy at all for open or unlimited systems, TBH. You can’t move for papers in the physics literature about entropy, but unless we choose finite scales for various cutoffs I think we can’t define something that is finite. With finite cutoffs in place, we’re completely dependent on there being absolutely no exchange between inside and outside and smaller, so that I think it’s not possible to have an in principle discussion with entropy as more than a bit part.

I think we have to work with algorithms that compute summary statistics associated with the actually recorded results of whatever measurements we have performed (or with theoretical analogs that are approximately as well-controlled mathematically). Any algorithm whatsoever might turn out to give some insight into the data we have (assuming it terminates for the data it’s given!), but I suppose there will inevitably be an evolving wider assessment of whether any given insight is useful for some specific purpose or is just pareidolia.

November 19th, 2021 at 1:31 pm

Yeah, I think entropy is often best taken a bit metaphorically. The notions behind the Shannon version are useful, especially in their intended domain (communication), but I do think it’s unfortunate it resulted in the increase of entropy in the definition of entropy.

FWIW: I view it more from an educator’s point of view than from a physicist’s. I’m mostly interested in the clearest way to explain (Boltzmann) entropy to lay people in a way that reduces the entropy of their understanding of entropy. 😉

The CD collection can be viewed as a closed system when just sitting there. Outside forces cause a permutation, and outside forces restore it to order. The latter is what I’d like to quantify. The former almost has to be taken as a kind of noise — a metaphor for people borrowing a CD and replacing it incorrectly (or worse, randomly).

In the metaphor, the highest entropy state is after the earthquake knocks all the CDs off the shelf into a big random pile on the floor. Changing the order of the pile is indistinguishable, so it makes a good analogue for the highest entropy states. (In contrast, a single CD out of order is immediately distinguishable.)

((Which, I suppose, is where “surprise” can conflict with entropy? I’d say a CD only slightly out of place is less surprising than one at the other end of the collection. (Surprise!) But I’m also tempted to call all permutations with just one CD out of order the first macro state after perfect order.))

November 19th, 2021 at 9:57 am

BTW: In that Zoom image from the conference, Deutsch is top row center, Aaronson is middle row second from right, and Marletto is bottom row left. The two top row left are the moderators; everyone else is a participant, although they didn’t all give talks.

November 19th, 2021 at 3:26 pm

Now I’m going to have to eat something Mexican for dinner.

I didn’t know that about the Windows Calculator. Actually, I didn’t even remember the Windows Calculator existed. Interesting.

It makes sense to me that a high entropy system might be effectively indescribable, because any description would itself have to be an even higher entropy system. Quantum decoherence strikes me as perhaps the best example of the second law in nature.

I enjoyed Blade Runner 2049. I actually liked it better than the original. (Honestly, the original, while thought provoking, has only ever been middling for me as entertainment.) I probably would have blogged about 2049, but I saw it during my blogging winter. But then I liked the latest Dune movie, so our tastes seem to vary on Villeneuve movies. I’ve pretty much liked every one since Sicario.

I actually struggled with the original Dune novel when I read it, although the ending ended up making it work. I never found the Dune universe very appealing. It always struck me as an appalling dystopia. The next three novels didn’t help, which is when I bailed. I sometimes wonder if I’d react differently if I read them today. Probably not. Herbert is too technology averse and too paranormal for my tastes.

Cool looking batch of videos! I had forgotten about that conference. Now to find the time to watch them.

November 19th, 2021 at 4:10 pm

You could watch one of those videos while you eat Mexican. 🙂

I don’t mean to throw any shade on Villeneuve. Arrival is one of my favorite modern SF films, and I enjoyed Blade Runner 2049 just fine. I’m just saying it won’t go down in history as the classic Blade Runner did. That’s not all on Villeneuve, either. Modern culture is so filled that it’s hard for anything to stand out anymore. Bands don’t. Movies don’t. We’re all about what’s next and what else is on?

I agree about (the book) Dune. I enjoy it as much as I enjoy Lord of the Rings, but it’s fantasy, and as you say, it’s grim AF. Some of it’s even a little childish (lookin’ at you, Fremen). To me its honor comes from being a Significant Classic. Blade Runner, likewise, I agree, isn’t such a great movie in isolation (especially these days), but it informed a lot of other work (as did Dune), and that’s where I see value.

I’m not sure what you mean about needing a higher entropy system to describe a high entropy system. I would have said a description (of anything) is a highly organized low entropy system. Can you elaborate on your thinking there?

November 19th, 2021 at 7:49 pm

Well, I ate Mexican while watching TV.

I think I could have enjoyed the Dune books as much as the LOTR ones, if the stories had been better. But the Dune series, at least the original ones by Frank Herbert, strike me as more about the ideas. There are characters and story events, but they seem secondary to the philosophical themes Herbert wants to explore. Most of the characters were too alien for me to have much of a connection with. Maybe if Herbert had had some equivalent to hobbits, although lots of otherworldly stories do an adequate job at having sympathetic characters.

On entropy, similar to the conversation we had the other day, I think a description can only be lower entropy if it’s part of a system that includes the viewer(s) of that description along with their capabilities. The whole combined system is then organized for possible work. But it seems to me that the description by itself has to have as much differentiation in it as what it describes. Consider if you have no understanding of the description whatsoever. As a physical system, it seems to have as much uncertainty in it for you as the original system.

November 20th, 2021 at 11:06 am

Ha, yeah, there’s no question LotR is more fun! It’s kind of the difference between Star Wars and Star Trek. LotR was explicitly a child’s fairy tale, but Dune was definitely for the adults. That alienness and the philosophical, political, and religious themes are indeed the book’s core. (In Star Trek the rule was that stories always had to be about something. Themes were that show’s core, too.)

Hobbits on Arrakis. Now there’s an image! 😀 😀

Out of curiosity, have you read, and did you like, C.J. Cherryh or Stephen R. Donaldson? Those are both dense writers who are very much on the theme side, although they also have one foot firmly planted on the rippin’ good yarn side. We discussed Gibson. He’s maybe more like Herbert, mostly an idea guy with some action scenes. Cherryh and Donaldson are closer to Tolkien.

I think a key difference in our views of entropy is that I’ve noticed yours seems based somewhat on what a specific individual knows about a system. For example, if I have a high entropy system (e.g. CDs in a random pile), and I have an ordered description that lists their locations in that pile, if I understand the description it’s low entropy, but if I don’t the whole thing still has high entropy. The sector index of a disk drive makes a good analogy, perhaps. Without knowledge of the format, it’s just apparently random bits.

I see it more from a god’s-eye or idealized view not dependent on specific knowledge. The description demonstrably has an ordered structure even if I can’t fathom its meaning. That structure has lower entropy than data with no structure. For instance, if I were to ZIP the description, its structure would result in a much smaller file than if I were to ZIP a truly random dataset the same size. Exactly as you said, the description has to have as much differentiation (structure) as what it describes. Even the sector index would turn out to have regularities and structure.
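The ZIP comparison is easy to try. Here is a minimal Python sketch using the standard zlib module (the same DEFLATE family ZIP uses). The description format, one `position,cd_id` line per CD, is a made-up stand-in for an index of a shuffled 2000-CD pile, and the fixed seed is only there to make the shuffle repeatable.

```python
import os
import random
import zlib


def compressed_size(data: bytes) -> int:
    """Size in bytes after zlib compression at the highest level."""
    return len(zlib.compress(data, 9))


# Hypothetical description: where each of 2000 CDs sits in a shuffled pile.
rng = random.Random(0)
cd_ids = list(range(2000))
rng.shuffle(cd_ids)
description = "\n".join(
    f"{pos:04d},{cd:04d}" for pos, cd in enumerate(cd_ids)
).encode("ascii")

# Random bytes of exactly the same length, standing in for unstructured data.
noise = os.urandom(len(description))

print(len(description), compressed_size(description))
print(len(noise), compressed_size(noise))
```

The description shrinks noticeably because its layout is regular (digits, commas, line breaks, a counter that runs in order), while the random bytes do not shrink at all. That regularity is in the format of the description, not necessarily in the pile it describes.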

Entropy is a measurement and it is viewer dependent in how the microstates are identified and quantified. (My problem is wanting to quantify the microstates of permutations, but that turns out to be NP-hard.) I’m not sure I’m on board with having it depend on an individual’s knowledge, though. I think maybe it should be based on a view that does understand the system. (Or at least understands it as well as it can be. Turing Halting, Gödel Incompleteness, Heisenberg Uncertainty — there are limits to even ideal knowledge.)

November 20th, 2021 at 2:40 pm

Hobbits on Arrakis. Yeah, I think they’d all quickly be Gollums. But no one in the Dune universe feels relatable in the way they are, or even in the way the characters on Star Trek are. Every time I thought there might be a group of relatable people they turned out to be deranged in some manner. As you said, grim AF.

I have read a little Cherryh (Downbelow Station) but no Stephenson. I was warned away from Stephenson by my cousin when we were teenagers and never got back around to trying him. I found Cherryh’s very tight viewpoint technique frustrating. That said, it’s been decades. Either one might work better for me now. Maybe at some point.

Actually, my view of entropy is of an objective phenomenon. (Otherwise how can we talk about total entropy in the universe?) It’s why I gravitate to the idea that it’s the amount of information necessary to describe the system, which seems to fit with the convergence of the equation for statistical and information entropy.

It only becomes viewer dependent when the viewer is part of the system in question, which I think they have to be for the viewer+description+original-system combo to have lower entropy than just the viewer+original-system combo. But the entropy of the whole viewer+description+original-system is, in my understanding, an objective measure. (Albeit one probably not measurable in any practical sense.)

If compression algorithms work on the description, and that description is a thorough description of the original system (no summarizing in a way that loses details), then it has redundancies, and it seems the original system itself had to have them as well, and so less entropy.

Unless of course I’m still confused about entropy, which is very possible. But this understanding seems to work well so far.

November 20th, 2021 at 4:45 pm

Maybe it was because Halloween just passed, but the thought struck me that one obvious difference is the number of people who dressed up as a character from LotR versus from Dune. 🙂

Based on our discussion of Gibson I’d be hesitant to recommend Cherryh or Donaldson, although who can say. They’re both descriptive, especially Donaldson, often luridly so, and very contained. They ask more than most of the reader. You might like Donaldson’s Gap series (five slim books). He’s usually fantasy, but this is hard SF. Strange and dark, though. Cherryh has a hard SF castle opera series, Foreigner, that I thought quite good. Up until the fourth trilogy, anyway. (I think there are seven trilogies in that series now.)

Regarding entropy, when you talk about describing a system, do you mean the description is of the system’s microstates? That’s how I’m using it, and it might be why I’m a little confused here. There is a “depends what you mean” thing here about the description, though. They can be tricky. I’ll use the CD collection analogy as an example.

One type of description only details what’s out of order. If the collection is sorted, the description is effectively empty (or just refers to “the collection; sorted”). Think of it as a list of the misplaced CDs. It grows from zero (perfectly sorted) to a maximum of N-1 (reverse order). The description is a list of moves that restore the sort order.

The other type of description has a fixed size, N. Its entries are an index of the CDs. If the CDs are sorted, the indexes run from 1 to N in order. When CDs are out of order, all that changes in the description is their index number. The sort order is maintained in the consecutive order of the index.

So in one case the description grows with entropy, but in the other its size is fixed. Hence my confusion. It might help to unpack some things about how that description exists. The description itself is a system with its own entropy. That entropy is essentially zero when the list correctly describes how to sort the collection regardless of the entropy of the collection.

The description can come to exist in two ways: by starting from the initially sorted condition and maintaining it every time a CD is moved, or by scanning the CDs and sorting that scan. The former spreads effort over time; the latter is the same as sorting the CDs in the first place. Note that with the type one description, the initial list is easy; just an empty list. Each CD move adds an entry to the list.

The point is, if we put energy into the index so it tracks the disorder of the CDs, we’re effectively maintaining the low entropy of the system. In some sense, it was never out of order. In this case, the zero entropy of the CDs has transferred to the zero entropy of the index. But the CD collection itself now has higher entropy. The index would reduce the work involved because we don’t have to sort, just use the index.

I think, for me, in your formulations of viewer+description+original-system, it’s possible in all cases to leave out the viewer, because I include the interpretation of the system in the system. Perhaps the only difference is that you’re ascribing it separately to the viewer? In the CD collection, the (objective) entropy is based on a set of sort criteria (viewer?) defining a perfectly sorted state (which will have zero entropy). A permutation of that sort has some entropy based on the amount of work necessary to restore the sort. (I think what it is, in my mind it’s like God defined the sort order or it’s natural or something. So there’s no viewer necessary in the equation.)

The index is certainly based on a design, but in my mind it becomes part of the index. The idealism is that no extra knowledge is required to make sense of the index, so again I don’t think of it in terms of a viewer. If you just mean the defined sort order or the index protocol, yeah, totally, that’s definitely part of the picture.

BTW, the index would be compressible, as you say, because it has redundancies. I’m assuming the index has a regular structure to it, a list or table, that would lend to compression. These redundancies need not parallel the system they describe, although they could.

November 20th, 2021 at 7:04 pm

I don’t recall being bothered by the level of detail in Cherryh’s book. What bothered me more was the lack of information. She tightly focuses on the viewpoint character’s stream of consciousness, which means that if they don’t think about things in the environment, she doesn’t convey them. Many authors do something like that, but they still find ways to drop clues so we know what’s going on. I found Cherryh to be very stingy with those, so I often felt like I was watching a movie through a keyhole. Again, it might not bother me as much today as it bothered young me.

I do mean a thorough and complete description of the system’s microstates. Sorry, the CD analogy just doesn’t click for me. I’m not saying it’s wrong. It might be completely and utterly right. But every time I ponder it, I get entangled in all the dependencies (language, numbering system, how any index would be used, etc) which seems to indicate we’re not talking about a system in isolation and its capacity to spontaneously transform (do work), which is why I feel the need to include other systems in any deliberation on what might lower the entropy. As you noted before, I’m probably just taking it too literally, but then the analogy isn’t clear for me. It might be I just need to give this more thought.

November 20th, 2021 at 8:13 pm

There are certainly authors the more experienced me has gotten a lot more from. Downbelow Station is part of a universe she wrote in, so it’s possible she was making some assumptions about what her readers knew. That said, Cherryh definitely requires the reader connect a lot of dots. The description, as you say, comes from the character’s POV. I’ve found myself re-reading paragraphs to squeeze out all she packs in.

I think you might be reading too much into the CD analogy. It’s an abstraction, not a concrete system. It’s my analogy, and I think it’s a good one, so it would be in my interest to see if I can figure out why it isn’t coming across clearly. I’m not sure how much you want to participate, though. (Alternately we could talk about an example of a system that does make sense to you.)

FWIW, I’ll have a go here at CD Analogy 101, as if you were new to this:

Context: There is a popular notion that entropy is “disorder.” It’s an English approximation, but it’s a reasonably useful one for understanding the idea behind (Boltzmann) entropy. A CD collection (or any ordered set) is a useful analogy in illustrating the connection.

Premise: We have a CD collection of size N (to be concrete, set N=2000). We take as axiomatic that there exists a defined sort order on the collection such that there is only one “perfectly sorted” state. What defines that sort isn’t relevant. What matters is that each CD in the collection has some common property that allows us to sort it. (In the full abstract, the analogy could just use numbers. I made it a CD collection to make the analogy more concrete and familiar.)

Goal: Per Boltzmann’s formula, entropy is a number — a measure of how many microstates are indistinguishable under some macrostate of the system. (The canonical example is the microstates of gas molecules versus their temperature or pressure.) This requires defining the microstates and macrostates for a given system.

Caveat: Determining the microstates of a complex system is hard-to-impossible, so in real-world systems, putting a number on entropy is a challenge. Identifying microstates can be really tough. Regardless, the idea behind entropy is valid even if we can’t put concrete numbers to it.

So: The CD collection offers insight into how microstates are defined. The macro state is a single number saying how sorted the collection is. It’s the inverse of the entropy, so here the macro state is the (inverse) entropy of the system. The microstates are the positions of the CDs within the collection.

The premise is there is only one perfectly sorted state — only one set of microstates resulting in a perfect sort. Entropy is the log of the number of microstates, and log(1)=0, so a perfectly sorted collection has zero entropy.

The first macrostate after that is one CD out of order. There are many such microstates; the total depends on the number of CDs and whether we quantify how out of place a CD is. But depending on what we decide, this is a fixed number of states, and the log of that is the first jump in entropy after zero. Then two CDs, three, etc. Maximum entropy is the collection in random order. It has the same dependencies as for one CD out of order (N and whether we care about distance).
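For a tiny collection, the counting can be done by brute force. The Python sketch below makes one specific choice that is an assumption on my part: “k CDs out of order” means k is the fewest CDs you would have to pull and re-insert to restore the sort, ignoring how far each one strayed. It then counts the arrangements in each group and takes the natural log as the entropy. The function names are hypothetical.

```python
import math
from bisect import bisect_left
from collections import Counter
from itertools import permutations


def cds_out_of_place(perm):
    """Fewest CDs to pull and re-insert to sort perm (assumed macrostate label):
    n minus the length of the longest increasing subsequence."""
    tails = []
    for x in perm:
        pos = bisect_left(tails, x)
        if pos == len(tails):
            tails.append(x)
        else:
            tails[pos] = x
    return len(perm) - len(tails)


def macrostate_table(n):
    """Map k -> (number of arrangements with k CDs out of place, log of that number)."""
    counts = Counter(cds_out_of_place(p) for p in permutations(range(n)))
    return {k: (w, math.log(w)) for k, w in sorted(counts.items())}


for k, (w, s) in macrostate_table(5).items():
    print(f"{k} out of place: {w} arrangements, entropy {s:.2f}")
```

With five CDs there is one perfectly sorted arrangement (entropy zero) and sixteen with a single CD out of place. One quirk of this particular choice is that the fully reversed shelf is also a group of one, so the biggest groups sit in the middle. Brute force only works for very small N, since the number of arrangements grows as N factorial.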

Don’t get hung up on the details of the collection. As I say, it could just be a list of numbers and permutations on the numbers. CDs made it more real. And offered a nice analogy of maximum entropy — after the earthquake knocks them all into a random pile on the floor.

As far as isolation, the CD collection represents some system at a single instant in time. Each permutation of the collection is a step in time. The analog of a natural system experiencing normal entropy would be people borrowing various CDs and replacing them badly, sometimes very badly. Over time, the collection becomes more and more disordered. The curator who comes by and straightens out the collection would be some system that uses energy to reduce the entropy of the collection (at the cost of raising their own).
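The borrowing picture can be sketched as a small simulation. This is only an illustration under stated assumptions: a "move" is taken to mean pulling out one CD and reinserting it somewhere, disorder is measured as the minimum number of such moves needed to restore order (which, under that assumption, equals the collection size minus the length of the longest increasing subsequence), and the names `sort_degree`, `borrow_and_misfile`, and `simulate` are hypothetical.

```python
import bisect
import random


def sort_degree(order):
    """Minimum number of single-CD moves needed to restore sorted order.

    Assumes a move = remove one CD and reinsert it anywhere. The CDs on a
    longest increasing subsequence can stay put; every other CD moves once.
    """
    tails = []  # tails[k] = smallest possible last value of an increasing run of length k+1
    for cd in order:
        i = bisect.bisect_left(tails, cd)
        if i == len(tails):
            tails.append(cd)
        else:
            tails[i] = cd
    return len(order) - len(tails)


def borrow_and_misfile(shelf, rng):
    """A friend takes a random CD and puts it back at a random position."""
    cd = shelf.pop(rng.randrange(len(shelf)))
    shelf.insert(rng.randrange(len(shelf) + 1), cd)


def simulate(n_cds=200, n_borrows=300, seed=0):
    """Return the sort degree after each borrowing, starting from perfect order."""
    rng = random.Random(seed)
    shelf = list(range(n_cds))  # CD number == its correct position
    history = [sort_degree(shelf)]
    for _ in range(n_borrows):
        borrow_and_misfile(shelf, rng)
        history.append(sort_degree(shelf))
    return history


if __name__ == "__main__":
    history = simulate()
    print(history[0], history[100], history[200], history[300])
```

Each borrowing changes the sort degree by at most one, and nothing in the rule prefers disorder; the drift upward comes only from there being far more disordered arrangements than ordered ones.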

Any of that help? Clear as mud? beer? water?

November 21st, 2021 at 6:46 am

Don’t feel bad about your analogy. It’s similar to one used by Brian Greene (a master science communicator) involving the pages of War and Peace being in order or not. As with yours, I felt like it actually pushed me away from understanding the nature of entropy.

Re-reading this again this morning, it occurs to me that maybe I’m missing a possible implied assumption in both analogies. Would the correct way to think about it be that the action of humans using the system is like the laws of nature playing out in any isolated physical system? If so, then that might give me the handle I need to understand them.

In that sense, maybe what are gradients of energy in a physical system might be gradients of usage in these analogous systems. So an ordered CD collection or ordered pages of a novel are organized for usage. Its gradients of usage are placed such that they are useful for someone accessing the CD collection (or reading War and Peace). But either being out of order fragments the gradients of usage, just as a physical system being disordered fragments the energy gradients, in both cases isolating them and suppressing usage / transformation.

If that’s not right, then I think I’ll give up for now. 🙂

November 21st, 2021 at 9:30 am

Oh, I kind of remember Greene’s War and Peace version! Yeah, I do think permutations of an ordered set make a good analogy — a very pure analogy — for entropy. In both cases the macrostate is the entropy (rather than some other property like pressure).

Yes! Humans (or dogs or whatever) changing the order is like the effects of thermodynamics on the system. It’s been a long time since I read Greene, so I don’t recall how he accounts for the pages of a book getting disordered. It’s an explicit part of the CD analogy, though. Friends borrowing a CD and putting it back in the wrong place. (One thing I like about the CD analogy is that CDs can easily be out of order due to sloppy replacing. It takes extra effort to keep the collection in order just as it takes extra effort to keep any system in a low-entropy state.)

These analogies get to the heart of what entropy really is. I think maybe you’re trying to make it more than it is. What I said before about entropy being the measure of the number of microstates indistinguishable under a macrostate should be taken to heart as the fundamental and only meaning of entropy. It’s just a restatement of Boltzmann’s formula in English, and that formula is very simple. It’s basically about Omega, the number of microstates.

Energy gradients, and using the system, are in some sense “extra” here. They don’t play any role in defining and quantifying the microstates. They are deeply connected in real systems, but I think you need to let go of those concerns here and focus on what the analogy is saying about order, because that’s all these analogies are about.

November 21st, 2021 at 6:22 pm

I think your entropy analogy lost me as well, Wyrd. But I come at it primarily from the thermodynamic perspective, I think, based on first encountering the concept in Mech Eng classes.

To me, and maybe Mike hit on this already, we need in your analogy some parameter that equates to the physics form of entropy, in which the ability of the system to do work is a key element. I think eventually Boltzmann maybe realized or derived that there is a relationship between the “value” of an energetic system (high pressure and temperature superheated steam can do work that low pressure and temperature steam cannot) and the possible number of microstates it can have. And then he was able to give a way to quantify it.

The other thing is that there’s no “ideal” state really in the thermodynamic form of entropy as there is in your collection. That part sort of loses me.

So for me the analogy could work like this. Let’s say that you only have CD cases that store multiple discs. All your Dave Matthews Band are in one jewel case with multiple sleeves, all your Blues Traveler in another, etc. And let’s say none of the individual discs are marked. They all look the same.

When all the discs are in their jewel cases, the collection is valuable to a listener. And there’s only a limited number of states. You could have all the Dave Matthews Band in the right jewel case, but not in chronological order. So even though you knew the album you were looking for was in there, you still may have to try a few before you found it. The lowest entropy state might be to have all the discs in jewel cases by band, in chronological order of their release dates.

Now if you start throwing discs on the floor, you can’t distinguish between them. They’re just silver discs. And if you mix them all up it’s sort of the same. So you could still have low entropy conditions that are “mostly” right, where most discs are at least in the right jewel cases. For the case where they’re all scrambled, the condition is virtually identical to you, even though there are a huge number of possible scrambled states.

Does that make sense to you?

Michael

November 21st, 2021 at 6:24 pm

And to further this analogy, the “temperature” of the system would be analogous to how many discs were in the jewel case of the correct band… The more that are not, the lower the temperature.

November 21st, 2021 at 6:29 pm

Sorry… Haha. One more addition. The lowest entropy state equates to the one with the highest probability of finding a particular disc in the least number of guesses.

November 22nd, 2021 at 1:26 am

I don’t understand the bundling by artist or the CDs being unmarked. What is the purpose there?

November 21st, 2021 at 7:40 pm

Man, I have to say I find this all very discouraging. 😦

I think you and Mike are taking this beyond its intention. I don’t know if you read the whole long conversation I had with Mike about it; the short form is that this analogy is intended for those new to the concept of entropy. It’s strictly an attempt to illustrate Boltzmann’s formula. A list of numbers would work as well. Mike reminded me that Brian Greene used the pages of War and Peace. These are all abstractions meant to demonstrate why people equate entropy with disorder.

I agree it’s a terrible analogy for a real thermodynamic system, but it’s not meant as one. You and Mike might be struggling with it because it’s too far beneath you.

November 21st, 2021 at 7:56 pm

I’m just not sure the analogy works. I’m focusing on the step-by-step approach you described as CD Analogy 101.

I’ll try to follow each step and let you know where I get lost a bit…

So we have the premise: there is a particular order that is “perfectly ordered.” You sort of lose me right away because I have a hard time seeing how one particular order relates to entropy, but I can follow so far. We could imagine the “perfectly ordered” state is all the albums in alphabetical order by album name.

Now in the caveat, you introduce the idea of macro and micro states. And here you lose me, so maybe you just need to give an example of two micro states that are indistinguishable. I think in some sense the CD thing works against you, because I can’t personally imagine a scenario where you showed me two stacks of CD cases and I couldn’t discern relatively easily a difference between them. To me that’s an issue. I think what you may need is a clear example of two micro states that are literally indistinguishable. They have to be different, but appear identical for all practical purposes. In point of fact, they need to be functionally identical as well.

So that may be it for me Wyrd, I can’t understand how two specific states of the CD set can either appear identical or be functionally indistinguishable for practical purposes without some help…

November 22nd, 2021 at 12:42 am

Hopefully I can clarify some things…

“I have a hard time seeing how one particular order relates to entropy,”

This abstract system is defined in terms of an ordering. What it is doesn’t matter. It could be date purchased, order added to collection, ISBN number, or a more normal order based on (e.g.) Artist, Date Released, Title, etc. We could use numbered index cards (or just numbers). I mentioned Brian Greene used the pages of a single book. The whole point is we’re defining an abstract system capable of zero entropy. A physical equivalent might be a crystal at zero Kelvin — complete regularity, no noise. An impossible ideal condition physically, but possible in our abstraction.

“…the idea of macro and micro states. And here you lose me, so maybe you just need to give an example of two micro states that are indistinguishable.”

Yes, easily! First, we define the macrostate here as the degree to which the collection is sorted. As a macrostate, it’s a single quantity (like temperature or pressure) — a value that sums over the microstates. We define the microstates here as the positions of each CD.

Indistinguishable refers to that number quantifying sort degree. As you know, entropy is numerically defined as the number of microstates indistinguishable under a given macrostate. Here that means: How many permutations of the collection have the same sort degree?

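We define the sort degree as the minimum number of moves required to restore the collection to perfect order. (How hard this number is to determine depends on what counts as a move. If a move means shifting a whole block of adjacent CDs at once, finding the minimum is apparently NP-hard; if a move means pulling out one CD and reinserting it elsewhere, the minimum can be computed efficiently.)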

Some examples. There is only one perfect sort order (sort degree 0), so its entropy is K log(1), which is zero (K is the Boltzmann constant). If one CD (any CD) is out of place (sort degree 1), it requires one move to restore the collection to order, so “one CD out of order” is the first macrostate after perfect order. There are many permutations of the collection with just one CD out of place; call that count Ω (not the collection size N from the premise), so the entropy of the first macrostate is K log(Ω). (So three numbers here: sort degree (1); Ω (biggish); K log(Ω) (the entropy).) The next macrostate is two CDs out of order (sort degree 2) and so on. Ω grows (hugely) as more and more CDs are out of order, and entropy grows as its log. Max entropy (and max Ω) is when the order is random.
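For a collection small enough to enumerate, these counts can be checked by brute force. The sketch below rests on one assumption that the thread does not pin down: a "move" is pulling out a single CD and reinserting it elsewhere, so the sort degree is the collection size minus the longest increasing subsequence. The function names are hypothetical, and the Boltzmann constant is dropped so the last column is just the log of the count.

```python
import bisect
import math
from collections import Counter
from itertools import permutations


def sort_degree(order):
    """Minimum single-CD moves (remove and reinsert) to restore sorted order."""
    tails = []  # tails[k] = smallest possible last value of an increasing run of length k+1
    for cd in order:
        i = bisect.bisect_left(tails, cd)
        if i == len(tails):
            tails.append(cd)
        else:
            tails[i] = cd
    return len(order) - len(tails)


def count_by_degree(n):
    """Map each sort degree (macrostate) to how many of the n! orderings share it."""
    return Counter(sort_degree(p) for p in permutations(range(n)))


if __name__ == "__main__":
    # One row per macrostate: sort degree, number of microstates, log of that number.
    for degree, count in sorted(count_by_degree(5).items()):
        print(degree, count, round(math.log(count), 3))
```

With five CDs the 120 orderings split into 1, 16, 61, 41 and 1 arrangements for sort degrees 0 through 4. The perfectly sorted state stands alone, which is the zero-entropy case, and a randomly shuffled collection will most likely land in one of the crowded middle macrostates.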

“I can’t personally imagine a scenario where you showed me two stacks of CD cases and I couldn’t discern relatively easily a difference between them.”

Imagine the stacks are very, very large (library sized)! More importantly, we’re defining the macrostate — a single value remember — as the sort degree. As with temperature or pressure, all we get is that one number. Of course we can examine the CDs and find the disordered ones, but that’s a statement about the microstates of the system.

November 22nd, 2021 at 7:42 am

Wyrd, thanks for taking the time to walk through this. I “get it” now but there are some elements of this that still throw me off and I want to explain them, as it may be interesting.

For starters, I think there are a couple types of macro states that come up when we talk about entropy. With fluids, for example, macro states are readily discerned by making simple measurements. We stick a thermometer in the sky and get an accurate reading of temperature for just about every practical purpose, even though we learned absolutely nothing about any single particle. We could do the same with a pitot tube and get some bulk fluid properties while, again, learning absolutely nothing about individual particles. So when I was trying to follow your analogy in part I was reacting to the fact that your macro state is not really like that. I can’t know “the degree to which the collection is sorted” until I inventory the collection. So for me, perhaps knowing too much, that wasn’t a good macro state. You mentioned gases being the canonical example and this oriented my mind to thinking about the entropy of fluids.

Now there’s another kind of macro state which could be like a crystalline material. The fact that the material has a particular repeating pattern constrains the location of atoms, electrons, etc. This is more like your example. The CD collection is sort of like a crystal where everything wants to be in the right place. So I was thinking about fluids and thermodynamics, and your example was more about a “geometric” sort of entropy. That’s probably not a real category. But unlike a fluid, where we can readily take a temperature, it strikes me that it is the observation of a pattern, which we may also be able to detect quickly by visual pattern recognition, that is the macro state. The problem with your CD collection is there’s no repeating patterns that could serve as the macro state, to give a “quick read” on the state of the system. You have a crystal with only one grain pattern.

Imagine instead if your CD cases had colored spines, and you had three sets of them. And each set formed a rainbow pattern of color from start to finish, with each disc gradually changing color from the two adjacent. Now if they were all in perfect order you’d see three rainbows. If you scramble them up, you could tell at a glance they’re scrambled. But you would have no idea where any particular disc was.

So, in part I think the analogy would be stronger with a macro state that was readily discernable and relevant to basic uses of the system, while keeping the micro states basically irrelevant.

In my modification, bundling the CDs by artist was an attempt to give a utility to the owner of the collection that the “degree of sorting” doesn’t. And by making all the discs unmarked I’ve made microstates indistinguishable. But it still doesn’t work great. What it does is produce a system where you could randomly pull just a handful of CDs and get a high level sense of how well it’s ordered. Like taking its temperature. You can’t do that if it’s only individual discs with maximum freedom to wander in the collection. In that model, if you try five, and none are right, it doesn’t tell you much statistically. With the jewel cases and the indistinguishable discs, though, if I try five discs, and four are at least the right artist, I might know if it’s hot or cold… Are the statistics different though? I don’t know…

The thing about both fluids and crystals or geometric patterns is that the macro state is readily and easily distinguishable. The micro states are literally impossible to discern. I think in your example the macro state isn’t distinguished easily enough from the micro state. It’s like I need to know the entire micro state to know the macro state. There’s no real way to come up with “the degree of sorting” without going through disc by disc.

Michael

November 22nd, 2021 at 11:07 am

Michael, first let me express my appreciation for your feedback and willingness to bounce this back and forth. The internet’s a strange place for conversations; they often end so abruptly. It may not always be apparent, but I do place a lot of value on feedback and communication. (So much so I can be guilty of over-providing it, I think, which might explain the abrupt endings.)

Anyway, so it’s the microstates and macrostates that seem at issue. I think I see what you’re saying. Keep in mind this is a highly simplified abstraction intended to illustrate certain specific features of entropy. Everything else has been deliberately stripped away to focus only on those features. It’s meant as the beginning of an explanation of (Boltzmann) entropy for those who’ve pretty much only heard the word and that it means “disorder” somehow. The things you’ve brought up would definitely be necessary later in the explanation.

Totally agree there are different kinds of macrostates. Gas has temperature and pressure plus the geometry of its particles as the first two put it into liquid and solid phases. Entropy is a measurement on the system, and we always have to define the yardstick. (Note that all three macro views involve the same microstates, the positions and momentums of the particles.)

I submit there’s no such thing as a “real” category of entropy, or that “geometric” entropy is anything strange. That Shannon adapted Boltzmann demonstrates the underlying abstraction. At heart, the concept of entropy is strictly about those micro and macro states, nothing more. Anything else is an application.

It appears the macrostates are the issue here. Fair question; “how sorted” is a bit of an abstraction. I agree it has much in common with the entropy of arrangements, such as crystals. Since the example itself is an abstraction, the first answer is: Trust me and take it on faith this collection has a single numeric property describing “how sorted” it is. I defined that property as the minimum number of moves required to restore the sort. Depending on what counts as a move, this number can be NP-hard (i.e. impractical for large collections) to calculate in the general case, so it is definitely an abstraction.

The “how sorted” property does impose one bit of magic if taken on faith. But given the full range of temperatures and pressures, we need instruments (technology magic) to access those macrostates, and the microstates are even more inaccessible to us personally. So a second answer is that in comparison we must treat the micro and macro state of the CD collection as equally opaque. We can imagine a special instrument that measures the “how sorted” property.

That said, I can get into third answers with concrete scenarios to account for the macroview. 🙂 Perhaps you’re familiar with robot-managed storage? Systems humans don’t (normally) enter that store anything from small samples to warehouses full of goods (and “vending machines” of cars). I think some of the first ones stored high-volume computer data tapes. Requests for information would cause the robot to seek the tape containing it, retrieve it, and load it into a tape reader. Or imagine a huge jukebox — one with tens of thousands of albums. From the outside, we can’t see the macrostate, let alone microstates, of such systems.

Now imagine the mechanism has malfunctioned such that the robot can’t always replace an item in its correct location. Sometimes it physically misses. But it’s able to record the error. Over time that list grows — the entropy of the collection itself rises. But the robot always has a measure of how disordered the system is (the size of the error list). Alternately, imagine the robot doesn’t keep track but can be asked to scan the system and report how disordered it is. It can be asked to take a measurement.

Or imagine each CD has an RFID tag that can tell the distance the CD is from its correct position. A measurement involves quickly querying each CD for its distance and reducing those distances to a sum, average, or whatever. Or the owner, after various friends have borrowed and misfiled CDs, does a quick scan from start to end to count the CDs out of place (so he has a number to complain to his friends about). Or he sorts the collection and keeps track of how many moves it took.
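Two of those quick readings are simple enough to sketch. The code assumes each CD is represented by the number of its correct slot, so a CD is in place when its number matches its position; `misplaced_count` and `total_displacement` are hypothetical names for the owner's count and the RFID-style distance sum.

```python
def misplaced_count(shelf):
    """Owner's quick scan: how many CDs are not sitting in their own slot."""
    return sum(1 for position, cd in enumerate(shelf) if cd != position)


def total_displacement(shelf):
    """RFID-style reading: sum of each CD's distance from its correct slot."""
    return sum(abs(cd - position) for position, cd in enumerate(shelf))


if __name__ == "__main__":
    # CD 4 was pulled and refiled two slots too early, pushing CDs 2 and 3 along.
    shelf = [0, 1, 4, 2, 3, 5]
    print(misplaced_count(shelf))     # 3
    print(total_displacement(shelf))  # 4
```

In this example a single misfiled CD shows up as three CDs out of position, so these readings are not the same number as "minimum moves to restore order". Each is a different yardstick for the same underlying arrangement, and which one serves as the macrostate is a choice.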

Bottom line, perhaps the analogy needs to include something like one of these to clarify the macroview. (I take it there is no complaint about the microstates? That’s clear enough?)

An aside about such extensions. They can be “unnatural” in terms of how people see CD collections, and I try to avoid stretching analogies beyond their natural images. When trying to explain something complicated, I feel the tools used for that should be as familiar and natural as possible. My goal is to stretch the student’s imagination in only one direction at a time. As you and Mike are pointing out, the CD collection analogy requires explanation as it is. (Students sometimes struggle with the “why” of a system or analogy.)

November 22nd, 2021 at 12:36 pm

Thanks, Wyrd! I appreciate we kept going, too. It leads to interesting things.

One thing apparent to me from this back and forth is we may, may, have slightly different views on entropy. Or to say it another way, we may place emphasis on different attributes of this notion of entropy, and these differing emphases lead us towards valuing the appearance of those different attributes in the pedagogical tools we might deploy.

You wrote, “At heart, the concept of entropy is strictly about those micro and macro states, nothing more.” And I find this intriguing. I mean, I’m in agreement of course! But leaving the analogies aside, it makes me wonder about some things. I touched on them earlier very briefly but want to dive into something more explicitly and see where it leads.

The key relationship at the heart of entropy is between a macrostate and the number of possible microstates a system can plausibly occupy that are indistinguishable under that macrostate. A macrostate that can be instantiated in the greatest number of microstates is said to be more disordered. Well, it’s said to have higher entropy. But my question is which is more essential to the notion of entropy: the disorder? Or the loss of transformative capacity?

I tend to struggle a little, not conceptually perhaps, but from the sense that it rings a little hollow, to say (as is often done in popular descriptions of entropy) that a disheveled room has higher entropy than an ordered one simply because it is messy. Definitions removed from a linkage to the capacity of the system to genuinely transform seem like they’re only half right. Which really begs the question I’m trying to get to here: as physics has evolved, has the notion of a system’s ability to transform been left by the wayside and replaced purely by the notion that entropy is about knowledge or ignorance of a system state?

And what is transformation? I tend to think transformation means a change in the macrostate. And I think that way because it seems like once the system can no longer undergo a change in macrostate, even though it may perpetually change microstates from moment to moment, it’s basically “dead.”

I’m a little less in touch with the information approaches to entropy, but wonder what the macro and microstates are defined as in “information terms.” Do you have a way to explain that? Meaning, what is an example of a macrostate of “information” that subsists over multiple microstates?

November 22nd, 2021 at 2:26 pm

You raise some very interesting points here, Michael! I was just about to go have breakfast and start Laundry Day when your reply popped up, and I had to force myself to go do those things.

I don’t think there’s much daylight between us here. I think perhaps the analogy does need more connective tissue, at least for advanced users. In abstracting application away from the notion of entropy I may have stranded those who already understand its application. My hope for students is that the abstraction leads to the application(s). I think/hope (upon full explanation) you’ll find the attributes you mention all do appear (albeit perhaps later in the explanation; first things first).

“But my question is which is more essential to the notion of entropy: the disorder? Or the loss of transformative capacity?”

I would say I see them as equivalent — different ways of saying the same thing. The former is the more general; the latter speaks more to applications. Consider that both a glass of ice cubes and a glass of boiling water have transformative capacity in a room temperature environment, but we objectively see the boiling water as having high entropy and the ice as having low. Obviously the transformative capacity comes from the difference in entropy. If the ice is in the freezer, it loses the (local) transformative capacity.

So I think transformative capacity is relative to the system and its environment. In the CD collection context, you’ve suggested one notion all along: the ability to find a CD. The lower the entropy, the easier that is. (To get practical, in a perfectly sorted finite collection, one can use a binary search, which has log(N) average performance. Indexes can improve search performance even more.)
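The search comparison can be made concrete with a short sketch. It assumes the shelf is a plain list of titles and uses Python's standard `bisect` module for the binary search; `find_sorted` and `find_unsorted` are hypothetical helper names.

```python
import bisect


def find_sorted(shelf, title):
    """Binary search: only valid when the shelf is perfectly sorted."""
    i = bisect.bisect_left(shelf, title)
    if i < len(shelf) and shelf[i] == title:
        return i
    return None


def find_unsorted(shelf, title):
    """Linear scan: the fallback when nothing is known about the order."""
    for i, candidate in enumerate(shelf):
        if candidate == title:
            return i
    return None


if __name__ == "__main__":
    sorted_shelf = ["Abbey Road", "Blue", "Kind of Blue", "Rumours", "Thriller"]
    messy_shelf = ["Rumours", "Blue", "Thriller", "Abbey Road", "Kind of Blue"]
    print(find_sorted(sorted_shelf, "Rumours"))   # 3
    print(find_unsorted(messy_shelf, "Rumours"))  # 0
```

The binary search halves the remaining range at every step, so a 2000-CD shelf needs about eleven comparisons, while the linear scan may need to look at every disc. Running `find_sorted` on the messy shelf can miss a title that is actually there, which is the practical cost of the lost order.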

Another notion is of an ordered listing of CDs. The more sorted the collection is, the less work that listing requires. Best case, no work at all, just read off the collection. Worst case you need to sort the whole thing. (Off the top of my head, a possible physical analogy with thermodynamics might be how a steep temperature gradient allows easy determination of distance from any point to the heat source, but as temp equalizes, that task becomes harder. New energy must be supplied to determine distance.)

But, my bottom line, all cases of high entropy are also cases of high disorder, whereas I’m not sure they’re necessarily also always cases of (at least not obvious) transformation capacity. So I think the former is more general.

The disheveled room is an abstraction very similar to my CD collection or Greene’s book pages. I think it makes more sense when understood as a metaphor and seen in the context of why the room gets messy and what’s implied by cleaning it up. Big Thermo Rule: Entropy always increases unless energy is expended to maintain it. Metaphorically: Your room gets messier if you don’t expend energy to keep it neat. Another Big Rule: Reducing entropy requires spending energy and always increases entropy somewhere else. Metaphorically: Cleaning up a messy room takes energy and always results in a mess somewhere else (garbage, your CO2, your waste heat, etc).

“Which really begs the question I’m trying to get to here: as physics has evolved, has the notion of a system’s ability to transform been left by the wayside and replaced purely by the notion that entropy is about knowledge or ignorance of a system state?”

😀 😀 😀 I… quite agree! But I don’t think “a system’s ability to transform” is a fundamental definition, either. We do differ in that. I don’t think of it that way at all, but that’s probably due to having a view that includes the information theory aspects. Let me come back to this on your last question.

“And what is transformation? I tend to think transformation means a change in the macrostate.”

By definition! As you point out, the microstates can be changing (transforming) under the same macrostate, so when you say “transform” you basically mean “change in macro state” and I’d agree those are the transformations of interest. And often the only ones available.

When you refer to the inability of the system to change macrostate as “dead” I think you’re referring back to what you said about the greatest number of microstates being the macrostate with highest entropy — maximum temperature equilibrium. (Of course, applying energy can return those microstates to less likely states and reduce entropy.)

“Meaning, what is an example of a macrostate of ‘information’ that subsists over multiple microstates?”

It depends on what view one takes. The macrostate could be the accuracy of an entire message or just one byte, depending on the granularity one cares about. In a packet switch, it’d be packets. In a text it might be sentences or paragraphs.

To connect back to transformation, Shannon entropy is very much about what happens to the bits when a message (a macrostate) is transformed (e.g. copied, transmitted, or stored and retrieved). The transformation is imposed on the message — it’s not something the message does in itself (such as does a melting ice cube or cooling water).

Going back to your earlier question, the irony for me here is that, while I can be a bit askance at Shannon entropy, my own CD collection analogy demonstrates the similarity between Shannon and Boltzmann entropy. Exactly because it is an abstraction — the very one Shannon noted. So it goes. 🙂
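The similarity can be written out in one line. This is the standard textbook relationship rather than anything specific to the CD analogy; $p_i$ is the probability of microstate $i$, $\Omega$ is the number of microstates under the macrostate, and $k_B$ is the Boltzmann constant. If every microstate is equally likely, Shannon's formula reduces to the log of the count, which is Boltzmann's formula up to the constant and the choice of log base:

```latex
H = -\sum_{i=1}^{\Omega} p_i \log p_i, \qquad
p_i = \frac{1}{\Omega} \;\Rightarrow\;
H = -\sum_{i=1}^{\Omega} \frac{1}{\Omega} \log \frac{1}{\Omega} = \log \Omega, \qquad
S = k_B \ln \Omega
```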

The thing that concerns me about that “entropy is ignorance of system state” view is that it risks instilling the notion the ignorance is a problem or due to inability. The “ignorance” is in many cases a choice between highly detailed levels of description and a simple one. Even if we could track all the gas molecules, pressure and temperature make more sense to deal with.

November 23rd, 2021 at 9:12 am

Good stuff here, Wyrd. Some thoughts, replies, ideas that came up reading your latest… These will reveal my ignorance on certain topics, which is good because I’ll learn something!

You wrote, “Consider that both a glass of ice cubes and a glass of boiling water have transformative capacity in a room temperature environment, but we objectively see the boiling water as having high entropy and the ice as having low. Obviously the transformative capacity comes from the difference in entropy.”

What you’re actually describing is a non-equilibrium state, in essence. If the room is adiabatic with respect to the rest of the world, with no energy transfer to the environment beyond, and we have either boiling water hotter than the rest of the room, or a glass of ice that is colder than the rest of the room, then the room and its contents are simply not at equilibrium (yet). Without any contact with a broader environment, the state of the room will naturally proceed in time to higher entropy states, which correspond to equilibrium. At this point the ability of the system to transform would stop. So just as you are not sure if high entropy always equates to low transformation capacity, I’m pretty sure it does…! But there’s a nuance, which I think my comments below will address.

By putting the ice back in the freezer, and obviously ignoring the work being done to sustain the freezer’s conditions, you’ve established a new system boundary (the freezer) in which equilibrium conditions now obtain. I believe thermodynamically the maximum entropy for a closed system and the achievement of equilibrium are related, and this also results in a system without any residual potential for macroscopic transformation. The freezer example is just another form of a closed system at equilibrium. If you open the door that’s another story… you’ve changed the system under study. If you unplug the freezer, and open the door in a room, and the room is a closed system, it will simply proceed to equilibrium, maximum entropy, and zero transformative potential.

So far, equilibrium and loss of transformative capacity of the system are obviously related. For closed systems, I think they very clearly are. Open systems are a little trickier. I guess it’s like this: I could say that falling is fundamental, and say that for closed systems (anything without wings and thrust, like a rock dropped from a mile up in the sky) falling is obviously all there is. And then you could put wings and a form of thrust on it, and make it an open system, but you’ve not taken falling out of the equation, you’ve simply created a system that counteracts falling until the fuel runs out. Falling is still an essential dynamic. In fact, it is the perpetual tendency to fall that makes stable flight possible in a sense. It’s a bad analogy at that point, but without the directionality of falling, in real world systems energy would not flow through the systems in an ordered way.

So thermodynamically, I don’t see how we avoid entropy being fundamentally related to an approach to conditions of minimum transformative capacity. It may not ever get there for open systems if the system’s stocks of transformative capacity are constantly replenished by energy flows (as you noted), but it’s in essence what is happening.

Now, that said, I’m still lost on whether there are genuine analogies between thermodynamic entropy and information entropy in terms of how systems evolve over time. I’m going to go back to basics and try to build back up, and maybe you can jump in where I get something incorrect or you see it differently.

Entropy for a thermodynamic system is the probability that one particular microstate arises from among all the possible microstates the system could occupy, which are effectively the same macrostate. (This is my slightly modified abridgment of the Wikipedia wording, but it follows the mathematics in English form and makes sense.) (I can see as I write this why you could reasonably argue the ability to transform isn’t fundamental, as it’s not really part of this definition. But I believe it’s fundamental to how systems evolve in time, and this definition of entropy is just about the snapshot of a system at any given instant. So maybe the ability to transform is related to the second law and the notion that the evolution of systems is towards maximum entropy, which is equilibrium, which is no transformative potential…)

Okay, entropy for an information system is related to the probability a particular message is found in a given message space. Now right off the bat, these are not equivalent to me because the microstate of a thermodynamic system involves all the elements, and a microstate (a message) in information theory is generally only a subset of the message space (the macrostate). So while they may have equivalent mathematical formulations—they both are using probability theory—the actual meaning of the mathematics is a little different. One (thermodynamics) is related to finding a particular configuration of a continuously evolving system, and the other (information) is related to finding a particular subset of elements within a fixed space.

So now what. Well, this is where I get lost if we try and equate the evolution of a particular system in time with both the thermodynamic and the information theory definitions of entropy. Because it’s not clear to me they function in the same way. Example: based on laws of thermodynamics on which we both agree, and using a simplified analogy like a dirty bedroom or a collection of CDs, we know that after a while the CD collection will end in disarray. And we can say that this is a good way to convey the fact that a closed system will tend to equilibrium over time, which is maximum disarray/disorder. That’s good.

What is the corollary in information theory? Is it something like, a message that is coded, decoded, and retransmitted will lose quality over time? Something like the children’s game of Telephone?

What I’m realizing is entropy is just a parameter, and that in thermodynamics it relates to a set of laws, and it probably does in information theory as well, though I don’t know those laws. But as they’re different types of systems I guess until I learn more, I’m uncertain about equating the two. In the thermodynamic world, in the context of the known laws, systems evolve (unless otherwise acted upon) towards maximum entropy, equilibrium, low-transformative-capacity states. Since messages and signals are not like thermodynamic systems in the sense they don’t evolve on their own, it’s unclear how information entropy fits into a system of ideas that remains analogous to thermodynamic transformations. It could be something about how messages get decoded, recompressed, retransmitted I guess. I don’t know. But these processes are not like thermodynamic processes in the sense that they don’t happen on their own per se.

And in closing, if there are not analogous laws then we should definitely be careful about mixing thermodynamic and information theory forms of entropy in a common model…?

November 23rd, 2021 at 12:57 pm

I hope you won’t be put off if I do a lot of quoting. I know some find it confrontational. I don’t mean it that way at all. My intention is clarity and a conversational mode. This is a great conversation, and I have nothing to be confrontational about! 😉

“What you’re actually describing is a non-equilibrium state, in essence.”

Yes, exactly. What I was trying to get at is that the room, being much larger, “wins” — the ice and boiling water will equalize with the room. But in one case a low-entropy state is warming up and in the other a high-entropy state is cooling down. They approach equilibrium, but from opposite sides. Both can do work, and in both cases we’re recovering the energy we spent freezing the ice and heating the water. It’s the difference that matters.

Because I don’t equate entropy with work, I tend to see these in the context of energy levels, not entropy levels. In thermodynamic systems, as you say and I agree, they tend to be the same thing.

What I think happened with entropy is that it arose from the study of thermodynamics, so that’s its most natural fit. But as often happens in mathematics, the fundamental notion underlying what’s going on with entropy turns out to have much broader application. That’s why it caught Shannon’s eye. The basic notion of microstates vs macrostates also works in information theory. It even works in messy rooms. 🙂

“Without any contact with a broader environment, the state of the room will naturally proceed in time to higher entropy states,…”

No disagreement, just adding that the room is already very close to equilibrium. There are two small regions (the two glasses) that are wildly out of equilibrium, and of course they’ll equalize with the room, but the room being much larger won’t change its energy or entropy that much. In fact, the ice cubes and boiled water might balance each other out leaving the room unchanged energy-wise but with ever so slightly higher entropy with those regions gone.

Totally agree that once that happens, there’s no work available within that system.

“So far, equilibrium and loss of transformative capacity of the system are obviously related.”

I’m sorry if I implied they weren’t. I agree they are. (I just think of it in terms of energy rather than entropy.)

Agree the situation becomes very complicated with open systems. I like your falling analogy. The second law is a bit like an eternal fall, isn’t it? You could even include (no thrust) gliders as systems that attempt to isolate themselves from the fall but don’t expend energy doing it.

“So thermodynamically, I don’t see how we avoid entropy being fundamentally related to an approach to conditions of minimum transformative capacity.”

Thermodynamically, totally agree it’s directly related! (Again, sorry if I implied otherwise.)

“I’m still lost on whether there are genuine analogies between thermodynamic entropy and information entropy in terms of how systems evolve over time.”

I think the commonality comes from the fundamental abstraction of entropy, but it requires decoupling from notions of thermodynamic systems and focusing on the notion of micro and macro states. That said, I quite agree with the sense that, practically speaking, they are two very different things.

“Entropy for a thermodynamic system is the probability that one particular microstate arises from among all the possible microstates the system could occupy, which are effectively the same macrostate.”

I was nodding up to the last clause, which confused me. If the microstates are in the same macrostate, the system’s entropy doesn’t change. Maybe I’m just hung up on the wording.

There is, I think, possible confusion in that we have entropy-the-number and entropy-the-process. I have a sense people see it mainly as the latter. I tend to see it more as the former. To me, the process is thermodynamics.

There is the entropy (the number) at any given moment. As you say, a snapshot. That number from Boltzmann’s formula has nothing to do with probability. That comes into play with the system over time — the higher probability that it evolves towards “larger” macrostates (ones with more microstates).

With information theory the game of Telephone isn’t a bad analogy. The equivalent of the environment pulling a system towards equilibrium (the fall) is noise or errors. As with the CD collection, a message can have zero entropy (think of it as zero Kelvin), but any bit errors would be like heat leaking into the system. When a message is just sitting there, it’s akin to a snapshot of a physical system, but messages don’t do much good just sitting somewhere. Transmitting, displaying, saving and retrieving, these are the dynamics of information systems, the time ticks, so to speak.
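To make the Telephone picture concrete, here is a minimal sketch, assuming each retelling flips every bit independently with some small probability (a binary symmetric channel). The function names `relay` and `telephone`, the flip probability, and the seed are illustrative choices, not anything from the discussion above.

```python
import random

def relay(bits, flip_prob, rng):
    """Pass a message through one noisy hop: each bit flips with probability flip_prob."""
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]

def telephone(bits, hops, flip_prob, seed=0):
    """Relay a message through several noisy hops.

    Returns a list with, after each hop, the number of bits that differ
    from the original message.
    """
    rng = random.Random(seed)  # fixed seed so a run is repeatable
    current = list(bits)
    errors = []
    for _ in range(hops):
        current = relay(current, flip_prob, rng)
        errors.append(sum(a != b for a, b in zip(bits, current)))
    return errors

if __name__ == "__main__":
    message = [0, 1] * 50  # a perfectly regular 100-bit message
    print(telephone(message, hops=10, flip_prob=0.02))
```

With a small flip probability the error count tends to creep upward hop by hop and, over many hops, drifts toward about half the bits being wrong, which is the point where the received message says essentially nothing about the original. With no noise at all the message just sits there unchanged, like the snapshot.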

“What I’m realizing is entropy is just a parameter,…”

Exactly so. It’s just a measure we can make on a system once we define what we mean by its microstates and macrostates.

And perhaps you see why my comment in this post was to the effect that the definition of entropy has itself suffered entropy. 😀

November 23rd, 2021 at 9:19 pm

The quotes don’t bother me at all, Wyrd. As you noted, it helps with clarifying the points of discussion efficiently. Also I’m glad you’re enjoying the exchange as I am, too. I’d say we’re on the same page at this point generally.

You wrote, “Because I don’t equate entropy with work, I tend to see these in the context of energy levels, not entropy levels. In thermodynamic systems, as you say and I agree, they tend to be the same thing.”

One nit-picky point here is that an “energy level” is entropy! Entropy is the means of quantifying the quality of energy in a system. In the boiling-water-in-a-room and cup-of-ice-in-the-room scenarios, there is no change in the total energy of the system whatsoever. (Keep in mind the system is a room isolated from the world, with a cup of hot or cold water in it.) But there is a change in its quality. The measure of this is entropy. I think you understand that, but wanted to note that when you write that you’re thinking in energy levels, not entropy levels, it seems like a non sequitur sorta, for the reason noted. Entropy is unrelated to how much or how little energy is in a system (to say it another way, which I know you also know).
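A small numerical sketch of that point, under loudly simplified assumptions: two equal masses of liquid water (one hot, one cold) exchange heat only with each other, there is no phase change (so no actual ice), and the specific heat is a constant 4186 J/(kg·K). The function name `mix_equal_masses` is made up for illustration.

```python
import math

def mix_equal_masses(mass_kg, t_hot_k, t_cold_k, c=4186.0):
    """Two equal masses of liquid water reach a common temperature.

    Assumes constant specific heat c (J/(kg*K)) and no phase change.
    Returns (final_temp_K, total_energy_change_J, total_entropy_change_J_per_K).
    """
    # Equal masses and equal specific heats: the final temperature is the mean.
    t_final = (t_hot_k + t_cold_k) / 2.0
    # Heat lost by the hot water equals heat gained by the cold water.
    dq_hot = mass_kg * c * (t_final - t_hot_k)
    dq_cold = mass_kg * c * (t_final - t_cold_k)
    energy_change = dq_hot + dq_cold
    # For each mass, dS = m * c * ln(T_final / T_initial).
    entropy_change = mass_kg * c * (
        math.log(t_final / t_hot_k) + math.log(t_final / t_cold_k)
    )
    return t_final, energy_change, entropy_change

if __name__ == "__main__":
    t, de, ds = mix_equal_masses(0.25, t_hot_k=360.0, t_cold_k=280.0)
    print(f"final T = {t} K, energy change = {de} J, entropy change = {ds:.2f} J/K")
```

The total energy change comes out to zero while the entropy change is positive whenever the two starting temperatures differ, which is the "same energy, lower quality" idea in numbers.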

You wrote, “I was nodding up to the last clause, which confused me. If the microstates are in the same macrostate, the system’s entropy doesn’t change. Maybe I’m just hung up on the wording.”

I said this all wrong because I omitted a step, actually. Thermodynamic entropy (the number) for a macrostate is minus a constant multiplied by the sum of the (probability that each particular microstate it “contains” could arise, multiplied by the natural log of that probability). Anyway, a macrostate that contains 10 microstates has a lower entropy than one that contains 100. We agree on that. Haha.

Same screw-up on the information version. It’s (minus) the sum of the probability of finding each possible message (the various microstates) that could be contained in the message space itself (the macrostate), each multiplied by the logarithm of that probability.

You wrote, “There is, I think, possible confusion in that we have entropy-the-number and entropy-the-process. I have a sense people see it mainly as the latter. I tend to see it more as the former.”

I think you’re exactly right and we agree on this. Entropy is a parameter, like distance or temperature or frequency—a number, basically—that can be assigned to the system being studied at any point in a process. (Granted, not exactly like temperature. . . haha.) It is not the process itself. As we’ve both essentially noted, the laws of thermodynamics describe the processes and how they evolve, and how entropy does or doesn’t change for different types of processes.

You wrote, “There is the entropy (the number) at any given moment. As you say, a snapshot. That number from Boltzmann’s formula has nothing to do with probability. That comes into play with the system over time—the higher probability that it evolves towards ‘larger’ macrostates (ones with more microstates).”

But this I don’t think is quite right. It’s tricky I think. Entropy (the number) is related to the sum of the probabilities that the microstates “included” in a macrostate have of arising (each multiplied by the natural logarithm of that probability). It turns out that if all microstates have equal probability of arising, this reduces to the natural logarithm of the number of microstates. But in systems for which not all microstates are equally probable, probabilities do play into entropy (the number). And it’s the same in Shannon entropy (I recently learned).
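Here is a minimal sketch of that reduction, using the standard Gibbs form S = -k Σ p ln p. The function names are illustrative, and the constant k is set to 1 rather than Boltzmann's constant to keep the numbers readable; that choice is an assumption made for the example.

```python
import math

def gibbs_entropy(probs, k=1.0):
    """Gibbs entropy S = -k * sum(p * ln p) over microstate probabilities.

    Terms with p == 0 are skipped, since p * ln p tends to 0 as p goes to 0.
    """
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return -k * sum(p * math.log(p) for p in probs if p > 0)

def shannon_entropy_bits(probs):
    """Same sum, rescaled so the logarithm is base 2 (the answer is in bits)."""
    return gibbs_entropy(probs, k=1.0 / math.log(2))

if __name__ == "__main__":
    uniform_10 = [1 / 10] * 10
    uniform_100 = [1 / 100] * 100
    # Equal probabilities: the sum collapses to ln(W), the Boltzmann form.
    print(gibbs_entropy(uniform_10), math.log(10))
    print(gibbs_entropy(uniform_100), math.log(100))
    # Unequal probabilities: the entropy is lower than ln(W) for the same W.
    print(gibbs_entropy([0.9, 0.1]), math.log(2))
    # A fair coin carries one bit.
    print(shannon_entropy_bits([0.5, 0.5]))
```

With equal probabilities the result matches ln(W), so the 100-microstate macrostate scores higher than the 10-microstate one. With unequal probabilities it falls below ln(W), which is where the probabilities genuinely enter the number. The Shannon version is the same sum with a different constant out front.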

The probabilities you’re thinking about in the statement above, as you noted, are about evolution of the system at a macro level.

We might have exhausted this sport for now, I don’t know, but I think there’s a lot for me to learn about the more abstract form and how information and thermodynamic entropy merge in practice. This sentence from Wikipedia, for instance, is pretty fascinating, “If the probabilities in question are the thermodynamic probabilities p_i: the (reduced) Gibbs entropy σ can then be seen as simply the amount of Shannon information needed to define the detailed microscopic state of the system, given its macroscopic description.”

Say what!?

November 24th, 2021 at 11:59 am

Interesting! We seem, at the end here, to have picked out some definite differences in how we see entropy. They might just be definitional or point of view, though.

“One nit-picky point here is that an ‘energy level’ is entropy! Entropy is the means of quantifying the quality of energy in a system.”

I’m sorry, but I just can’t go along with the first sentence there. I agree with the second, emphasis on “means of quantifying the quality of energy in a system.” But to me an energy level is something like the number of joules or calories or volts. I agree they’re correlated in thermodynamics, and I agree that entropy can be a measure of the quality of energy.

Remember I’m oriented at a simple explanation for beginners, one that explains Boltzmann’s equation and why people equate “disorder” with entropy. Many of the refinements and applications we’ve discussed are entirely valid but outside the scope of that project.

(At one point you quipped that entropy was like temperature but not exactly, and I quite agree. Temperature (to me) is an energy level.)