Oh, look! Dancing Pixies!

In the last two posts I’ve explored some ideas about what a computer is. More properly, what a computation is, since a computer is just something that does a computation. I’ve differentiated computation from calculation and, more importantly, evaluation. (This post assumes you’ve read Part I and Part II.)

I’ve also looked at pancomputationalism (the idea that everything computes). The post hoc approach of mapping random physical states to a computation seems especially empty. The idea of treating the physical dynamics of a system as a computation has more interesting and viable features.

That’s where I’ll pick things up.

Before that, about the post hoc mapping: The emptiness is due to the reverse mapping. Recall from last time that a listing is not a computation.

The design of a computer includes a definition of physical system states representing computational vehicle states. For instance, +5 volts is a “logical one” and 0 volts is a “logical zero” (the choice is arbitrary). Think of that as the atom that builds molecules, which build compounds, which build substances, which build things. In computer design it’s bits, bytes, logic gates, CPUs, computers.
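As a rough sketch of that first design step, here is how a mapping from physical voltages to logical vehicles might look in Python. The 2.5 V threshold and the function names are assumptions made up for this illustration; real logic families define their own levels.

```python
# Illustrative only: the threshold and all names are assumptions for this sketch.
THRESHOLD_VOLTS = 2.5  # hypothetical midpoint between 0 V and +5 V


def voltage_to_bit(volts: float) -> int:
    """Map a physical voltage to a logical vehicle (a bit)."""
    return 1 if volts >= THRESHOLD_VOLTS else 0


def bits_to_byte(bits: list[int]) -> int:
    """Combine eight bits (most significant first) into one byte value."""
    if len(bits) != 8:
        raise ValueError("a byte needs exactly eight bits")
    value = 0
    for bit in bits:
        value = (value << 1) | bit
    return value


# Eight wire voltages read as one byte.
wires = [0.1, 4.9, 0.0, 0.2, 5.0, 0.1, 4.8, 0.0]
bits = [voltage_to_bit(v) for v in wires]
byte = bits_to_byte(bits)
print(bits, byte)
```

The choice of which voltage means "one" is arbitrary, as noted above; flipping the comparison would give an equally valid (if different) machine definition.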

The design also includes a set of operations the computer can perform on its vehicles in response to codes (a “program”). The operations, architecture, and vehicles define an abstract machine implemented by voltages, wires, and chips.

A programmer maps this well-defined abstract machine to a program — a sequence of codes that will cause a series of operations on data. The code is explicitly designed to run on the machine. The notion of a Universal Turing Machine is that the machine definition comes first; the machine is universal. Machines are mapped to algorithms, not vice versa.

This is (in part) why different machines can run the same algorithm.

But post hoc mapping goes the other way. It finds a listing of desired states and retroactively calls them computation by an imagined machine that’s a subset of the rock, pail of water, or whatever.

A listing isn’t a computation; it’s a recording of one. A post hoc mapping is only valid if the states are computationally generated (and arguably generated explicitly for that result). What’s more, if the abstract states are already known (so a set of appropriate physical states can be found), what’s the point of the physical states? The computation has already been performed.
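A small Python sketch may make the emptiness concrete. The "rock states" here are just random integers standing in for arbitrary physical states, and every name is hypothetical. The thing to notice is where the actual work happens.

```python
import random


def compute_states(n: int) -> list[int]:
    """A real computation: the running sum 1 + 2 + ... + n, state by state."""
    states, total = [], 0
    for i in range(1, n + 1):
        total += i
        states.append(total)
    return states


def post_hoc_mapping(physical: list[int], abstract: list[int]) -> dict[int, int]:
    """Pair arbitrary physical states with abstract states we already know."""
    return dict(zip(physical, abstract))


# The computation has to be performed first...
abstract_states = compute_states(5)

# ...before any set of distinct "rock" states (assumed, random) can be labeled.
rng = random.Random(0)
rock_states = rng.sample(range(1_000_000), k=len(abstract_states))

mapping = post_hoc_mapping(rock_states, abstract_states)
recovered = [mapping[s] for s in rock_states]
print(recovered == abstract_states)
```

The lookup "recovers" the computation from the rock only because the answer was baked into the mapping. Any other set of distinct states would have served equally well, which is the sense in which the mapping is a listing and not a computation.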

So the dancing pixies are a fantasy.

(They fail on this account, and also because the computational effort needed to extract the supposed computation from the background is large compared to the supposed computation itself. In a valid computation, that overhead is a tiny fraction: the work of driving the screen, for instance.)

§ §

The idea that the universe is a computer, or that physics is a computer, is interesting (and worth exploring). On one level, the concept seems trivially true. It may depend on how one feels about the distinction I made between computing and evaluation.

The abacus and the slide rule are obvious computational devices (if we allow that computational peripherals are computational devices). Rock-clocks aren’t (and to the extent they are, they’re really bad at it). The abacus is digital; the slide rule is analog. The notion of a physics computer suggests a deeper look at analog computing.

Yet if we look deeply enough, we may find ourselves with a digital computer, as some theories see reality as a quantum-level cellular automaton. Certainly the interactions of quantum or classical particles do resemble such a system.

§

As an aside, executing a computer model of a physical system — a tornado, for instance — is entirely different from any computation that physical system supposedly performs. However, if the model is accurate enough, then the model computes what the system computes.

Or at least appears to. A tricky thing about virtual models is that their causality is equally virtual. It’s enforced only by fiat, not by any reality. Therefore what a model appears to do causally is an illusion. As a simple example, only the algorithm prevents Tetris blocks from overlapping. No actual physics prevents it (as any number of rendering errors demonstrate).
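To illustrate causality by fiat, here is a toy Python sketch (not how any actual Tetris implementation works; the grid representation and names are assumptions). The only thing keeping blocks apart is a rule the program chooses to consult.

```python
Cell = tuple[int, int]


def can_place(occupied: set[Cell], cells: list[Cell]) -> bool:
    """The only 'physics' in this world: a rule that refuses overlapping cells."""
    return all(cell not in occupied for cell in cells)


occupied = {(0, 0), (1, 0)}   # cells already filled
block = [(1, 0), (2, 0)]      # a new block that collides at (1, 0)

# With the rule consulted, the move is rejected.
allowed = can_place(occupied, block)

# Skip the rule and nothing stops two blocks from sharing a cell.
unchecked = list(occupied) + block
overlap_count = len(unchecked) - len(set(unchecked))
print(allowed, overlap_count)
```

Delete the call to `can_place` and the model happily stores two blocks in the same cell. A physical block has no such option.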

There is also a question of emulation versus simulation. The former replicates the function of a system without regard to its mechanism. The latter is a model of the physical system to some degree of precision.

This works differently depending on what’s modeled. A model of a tree or heart is most accurate to life as a physical rather than functional model. But a rendering program filling a hillside with trees is better served by a much simpler function that generates random similar-appearing trees. So it depends on the need.

To get all the same results physical objects provide, functional models tend to require fine granularity, which makes them converge on physics models. When it’s down to tree-cell functions, that’s pretty much a physics simulation.

§

On the other hand, a model of an electronic calculator can model function precisely and ignore the physics. The functional model delivers exactly the same results as the physical one.

In fact, assuming the goal is replicating what a calculator does, then modeling anything but function is overhead. On the other hand, a photorealistic rendering requires at least the optical surface physics. (On the other other hand, a photorealistic animation needs both surface physics and function.)

So it depends on what’s needed. (Which is a canonical joke in computer science. The answer to just about every CS question begins, “It depends on what you mean…”)

The real dividing line is that a calculator is already a dual-layer mechanism, already a computer. Trees and hearts are physical objects (like rocks) and are not executing an unrelated virtual layer. Functional models are easy, even trivial, when that virtual second layer exists.

§ §

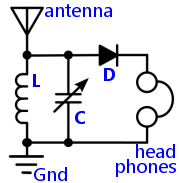

A crystal radio circuit.

Getting back to analog computers, let’s consider a radio. For simplicity, not just an AM-only radio, but a “crystal” radio. They’re so simple Cub Scouts used to build them.

Is a radio an analog computer?

Under pancomputation views, absolutely. There are two levels. Firstly, the radio is comprised of particles that compute reality according to quantum laws. Secondly, the materials and structure of the radio combine to create radio properties that compute the audio signal of a radio channel.

Under the computer science view, absolutely not. There is no Turing machine that can tune and rectify a radio signal. The reason is that radio “computation” depends on physical properties of the radio.

However, a computer can model those properties and accomplish the same thing, which is what “digital” radios do. The level of complexity of digital radio, compared to the five-component version, says a lot about the challenges of digital models of reality.

§

A computer uses voltages for logic levels. A radio uses voltages to represent the radio signal and the audio signal of the radio channel.

Very briefly, the antenna picks up all the radio signals in the area. These are low-frequency light signals broadcast from local radio towers. Note that “low-frequency” is actually pretty high in human terms. The AM band is roughly from 0.5–1.7 MHz (million cycles per second). In comparison, actual visible light is 400–790 THz (million-million cycles per second).

The coil (L) and the capacitor (C) comprise a tuned circuit that resonates at a particular frequency — one that happens to match the selected radio station. The arrow through the capacitor means it’s variable — changing the capacitance changes the tuning, which changes the station.
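For an ideal tuned circuit, the resonant frequency follows the standard formula f = 1 / (2π√(LC)). The Python sketch below just evaluates that formula; the component values (a 240 µH coil and a capacitor swinging between 100 pF and 365 pF) are hypothetical, picked only because they land in the AM band.

```python
import math


def resonant_frequency_hz(inductance_h: float, capacitance_f: float) -> float:
    """Resonant frequency of an ideal LC circuit: f = 1 / (2*pi*sqrt(L*C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(inductance_h * capacitance_f))


COIL_H = 240e-6  # hypothetical coil: 240 microhenries

# Turning the variable capacitor changes C, which moves the tuned frequency.
low_c = resonant_frequency_hz(COIL_H, 100e-12)   # smaller capacitance
high_c = resonant_frequency_hz(COIL_H, 365e-12)  # larger capacitance

for label, freq in (("100 pF", low_c), ("365 pF", high_c)):
    print(f"{label}: {freq / 1e6:.3f} MHz")
```

With these assumed values the circuit tunes from roughly 1.03 MHz down to roughly 0.54 MHz as the capacitance increases. Note that this is a digital model of the circuit; the circuit itself evaluates nothing symbolically, it simply resonates.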

The resonance picks out the selected station making its signal the strongest. The rectifier (D) strips out the (to humans) high-frequency radio signal leaving the audio envelope. That’s fed to the headphones.
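The rectify-and-smooth step can be sketched numerically. This is a simplified model under stated assumptions, not a description of any particular receiver: the frequencies are scaled far down so the simulation stays small, the diode is treated as ideal, and a one-pole low-pass filter stands in for whatever smoothing happens downstream of the diode.

```python
import math

SAMPLE_RATE = 200_000   # samples per second (assumed)
CARRIER_HZ = 10_000     # scaled-down stand-in for a ~1 MHz AM carrier
AUDIO_HZ = 100          # the "program" riding on the carrier
CUTOFF_HZ = 1_000       # low-pass corner between audio and carrier
N = 4_000               # 20 ms of signal

dt = 1.0 / SAMPLE_RATE
times = [n * dt for n in range(N)]
audio = [math.sin(2 * math.pi * AUDIO_HZ * t) for t in times]

# Amplitude modulation: the audio shapes the carrier's envelope.
radio = [(1.0 + 0.5 * a) * math.sin(2 * math.pi * CARRIER_HZ * t)
         for a, t in zip(audio, times)]

# An ideal diode passes only the positive half of each carrier cycle.
rectified = [max(0.0, v) for v in radio]

# A one-pole RC low-pass smooths away the carrier, leaving the envelope.
rc = 1.0 / (2 * math.pi * CUTOFF_HZ)
alpha = dt / (rc + dt)
recovered, y = [], 0.0
for v in rectified:
    y += alpha * (v - y)
    recovered.append(y)


def correlation(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation, used to compare recovered and original audio."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


similarity = correlation(audio, recovered)
print(f"correlation with original audio: {similarity:.2f}")
```

A few dozen lines and thousands of arithmetic operations stand in for what a diode does simply by being a diode, which hints at why digital models of analog behavior get complicated quickly.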

The Gnd (ground) provides a return path for the radio signal. Now you know how a radio works. A simple AM one, anyway.

§

Here’s the point: In the antenna and tuned circuit, the voltages directly represent the radio signal. After the diode (rectifier), the voltages directly represent the audio program.

Crucially, if we looked at those voltages, we would see the signals. There is a direct proportional mapping. The signal echoes reality.

As to what a radio “computes”, under a pancomputation view the tuned circuit, diode, and headphones all compute transformations of input data to output data.

As I’ve said, I call this evaluation (rather than computation), and it’s a topic I may explore in more detail in a later post. (For one thing, it’s just semantics.) Now I’ll just say I think things like planetary orbits, natural watersheds, flying baseballs, and most audio gear, are evaluating, not computing. The former is akin to water seeking a level or pressure equalizing.

Along the same lines, I wouldn’t consider chemical reactions or quantum interactions as computing, but as evaluating. That said, at the particle level, the cellular automata view is compelling. (I just don’t see that it helps us analyze computing in the human sphere.)

The lack of a dual layer in natural systems is significant to the difference.

§ §

Let’s consider a stubbed toe. That’s a biological analog system. In the nervous system, we see signals that are also direct and proportional. Those signals echo reality.

It’s not a digital system. The toe doesn’t encode and send an arbitrary vehicle; it metaphorically “yanks on a wire.” It’s the difference between a chat message saying “Help!” and a telephone call yelling it.

Both work … if the message is correctly interpreted. On the other hand, there’s no mistaking the audible yell. That analog signal carries more information, more bits.

The biological stubbed toe naturally segues to the biological CPU, the brain, and the same assumptions apply.

§ §

In the first two posts I avoided computationalism, which identifies brains and computers in both directions. It equates brains to computers, and computers to brains (minds can run on them). These posts are not concerned with the latter notion.

The focus is computational systems, so the question of whether the brain computes is certainly in scope.

On the pancomputation view, as with radios and toes, definitely yes. On the computer science view, most would argue no, but some find the neuron=gate identity compelling. (One objection: logic gates alone don’t comprise a computer.)

The question becomes more difficult if we include analog computers as computers. Many would then argue CS says yes.

§

But I don’t see the virtual layer. I see only a physical layer that gives rise to the mind. Under both physicalism and neuroscience, they’re supposed to be the same thing. Mind emerges from the operation of the brain.

To me that’s not computing any more than it is in a radio. There is no duality, the mind isn’t a separate or separable thing. Neither is the signal in the radio. It can’t not have that signal; the signal is part of the radio. The signal can’t exist on its own; it supervenes on the radio.

To extend the comparison, brain damage is a broken radio, and mind-altering drugs are radio interference.

§

In any event, it’s just a label. In the assertion Brain=Computer, the latter is a metaphor. The equality is vague at best. (If brains actually were like computers, people would be much better at math and logic.)

It doesn’t matter. The brain is what the brain is, and I think brains are rather unique and amazing natural objects. They just aren’t like anything else. All comparisons fail.

That said: If anything, the brain is more akin to a network of computers, each neuron (if not each synapse), a node in the network. Signals sent between nodes are viewed as analog despite there being firing/not firing modes. The firing mode contains much more information than just On!

§ §

That’s about all for this trilogy (funny how I keep writing them). Given my training and background, I should post about computing science more. (Although I have done so a bit.) There’s another SEP page that has me thinking about a sequel.

Remember that, in the end, when it comes to computer science (and often in life), “It depends on what you mean…”

Stay mapped, my friends! Go forth and spread beauty and light.

∇

September 3rd, 2021 at 8:49 am

It won’t be right away, but I do have notes for a part IV looking more into analog systems and analog computing. A closer look at the dual layer view (or lack thereof) in physical systems.

September 3rd, 2021 at 9:34 am

Very good summation of the subject matter Wyrd, especially this statement:

“I see only a physical layer that gives rise to the mind. Under both physicalism and neuroscience, they’re supposed to be the same thing. Mind emerges from the operation of the brain.”

Also, your radio analogy fits very well when discussing the relationship of the brain with the emergent system of mind. What really fascinates me is that this emergent system we refer to as mind “is” the Cartesian Me and that system is “not” an illusion. Additionally, the Cartesian Me’s experience is a 100% unadulterated conceptual experience.

I think this uniquely distinct and unprecedented experience of mind, or call it a quantum leap from a non-conceptual experience of the brain to a conceptual experience of the mind is profound. This distinction between non-conceptual experiences of unconscious systems and the conceptual experience of the conscious Cartesian creates a natural dissociation if you will from the classical world which gives rise to an intuition of some type of avatar at the helm.

September 3rd, 2021 at 11:32 am

Thanks! I do think how a radio works offers a decent analogy to how the brain works. (That radios and brains are both clearly “information processors” just shows how vague and general the term is. Calling the brain an “information processing system” says almost nothing. So is a tree.)

I like “distinct and unprecedented” — good way to put it. I’ve long argued consciousness is a unique and special feature of the natural world. Once it sparks to life, it goes on to invent all manner of crazy shit: art, morality, justice, mathematics, computing… weird, wild, and wonderful. (As I mentioned to you recently, we may be alone in the galaxy, if not in the local cluster. At the very least, I think, very rare.)
