I’ve seen objections that simulating a virtual reality is a difficult proposition. Many computer games, and a number of animated movies, illustrate that we’re very far along — at least regarding the visual aspects. Modern audio technology demonstrates another bag of tricks we’ve gotten really good at.

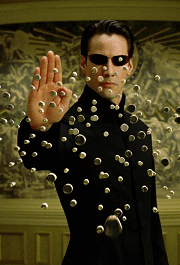

The context here is not a reality rendered on screen and in headphones, but one either for plugged-in biological humans (à la The Matrix) or for uploaded human minds (à la many Greg Egan stories). Both cases do present some challenges.

But generating the virtual reality for them to exist in really isn’t all that hard.

I don’t know if the objections were based primarily on the difficulty of the interfaces or on generating the virtual environment. I’m going to focus on the environment, but I’ll start with a few words about the interfaces.

There are two quite different scenarios:

- Direct neural connection with biological humans.

- Virtual connection with virtual minds.

Regarding item #2: If we know enough about minds to run a simulated one inside a computer, then we necessarily know all about how that mind receives inputs and generates outputs.

So the difficulty of the interface is the same as the difficulty of implementing those minds in the first place. Mind simulation is a different topic (taken for granted here), so item #2 presents no additional difficulties.

§

Directly connecting with the neural system of a biological human is a considerable challenge, but we currently have technology that reads or stimulates individual nerves.

The issue is the scale and size. There are a lot of nerves involved, and they are very small (and packed together). But those are engineering problems; we’re good at those.

The proposition is an old one, known as the Brain in a Vat.

It’s based on the idea that all your brain receives or sends are impulses in the connecting nerves (mainly through the brain stem). The brain has no way of knowing if those impulses link to a real body or a computer interface.

Ultimately, what we need are two maps, plus the micro-scale hardware engineering to directly read or stimulate each nerve coming from or going to the brain.

The first map (a software system) converts between virtual reality data and general nervous system data. It provides generic data streams that any user could plug into. More on this in a bit.

The second map (also software) converts that generic data into signals for the specific nervous system of a given user (it’s the adapter plug — it controls the hardware that links with the brain). This map requires identifying the connecting nerves of a given person, which is likely very challenging.

[Presumably it involves presenting stimuli and monitoring nerve activity going into the brain, as well as the motor nerves coming out. That process provides a map of what does what and what the signals need to look like.]
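To make the two maps concrete, here is a minimal Python sketch, assuming a made-up set of generic channel names and integer nerve IDs (all hypothetical; nothing here models real neurophysiology). The first map turns VR data into user-independent channels; the second is a per-user routing table built by a calibration step like the one described above.

```python
# Generic, user-independent channel -> signal strength (output of the first map).
GenericFrame = dict[str, float]


def vr_to_generic(brightness_at_gaze: float, sound_level: float) -> GenericFrame:
    """First map (sketch): convert VR data into generic channels any user could plug into.
    The channel names are hypothetical placeholders."""
    return {
        "vision.center": brightness_at_gaze,
        "hearing.left": sound_level,
        "hearing.right": sound_level,
    }


class UserAdapter:
    """Second map (sketch): route generic channels to one user's specific nerves."""

    def __init__(self, routing: dict[str, int]):
        self.routing = routing  # generic channel name -> this user's (hypothetical) nerve ID

    def to_nerves(self, frame: GenericFrame) -> dict[int, float]:
        # Channels with no calibrated route for this user are dropped.
        return {self.routing[ch]: v for ch, v in frame.items() if ch in self.routing}


def calibrate(channels, observe_response) -> UserAdapter:
    """Present a stimulus on each channel and record which nerve responds.
    `observe_response` stands in for the real measurement hardware."""
    return UserAdapter({ch: observe_response(ch) for ch in channels})


# Usage with a fake "wiring" standing in for one person's nervous system.
fake_wiring = {"vision.center": 1042, "hearing.left": 77, "hearing.right": 78}
adapter = calibrate(fake_wiring.keys(), fake_wiring.__getitem__)
signals = adapter.to_nerves(vr_to_generic(0.8, 0.3))
print(signals)  # {1042: 0.8, 77: 0.3, 78: 0.3}
```

The point of the split is that the first map is written once for everyone, while only the small routing table differs from person to person.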

§ §

This leaves the difficulty of generating the virtual environment.

Notice that the first map above, which converts between the environment and a generic nervous system, implies a point of view. Certainly, the data for that nervous system involves things it sees, hears, smells, touches, or tastes.

Which means the first map extracts these things from the environment given a location and gaze (point of view or POV). “I’m standing here, and I’m looking at that.”

In a game, it’s often the player’s POV. In animated movies, it’s the camera. In a virtual reality, it’s your POV.

§

The Descent 1 Level 7 Boss. Learning to vanquish it took forever!

The crucial thing about this map, this POV, is that it can freely rove around the virtual environment and look at what it wants.

(In some cases, it can be inside a wall or other object, which rather limits the view.)

But the key point is that the virtual environment exists as a defined model inside the simulation. That’s what allows the POV to roam.

Many games offer a virtual reality one can freely explore, so I’m surprised at the notion that there is something canned or preset about it.

The only video game I ever really got into, Descent (1995), had many levels one could freely explore (once you killed all the robots trying to kill you).

MS Flight Simulator, another virtual reality game, goes back to the 1980s!

A key point is that virtual reality simulations now are really good. Between helmets and haptic gloves and walkable surfaces, they’re pretty amazing.

[In fact, the high quality of virtual environments today is a supporting argument in the Reality Is a Virtual Simulation hypothesis.]

§

It may help to understand a little about what a virtual reality is.

It starts with a coordinate system. Usually, since the idea is to emulate our 3D reality, that coordinate system is also 3D — typically some version of x, y, and z. This comprises the space the virtual reality exists in.

Empty virtual reality coordinate space.

All objects in this reality (walls, rocks, books, trees, baseballs) have coordinates that describe their location and orientation in that space.

Each object also has a set of properties that describe its shape, weight, surface texture, color, and many other properties (whatever is needed).

An object “exists” in the reality if there is an instance of it somewhere in the environment. Its location and orientation are a matter of coordinates, so it can be rotated or moved just by changing those coordinates.

Generally, the virtual space is a database of some kind, and it contains instances of objects that exist in the space. That database defines the virtual world.

For example, the system has a template for baseball and box objects, so a virtual box of virtual baseballs involves adding to the database an instance of a box and many instances of baseballs. The coordinates of the various baseballs place them at various locations inside the box; the coordinates of the box place it somewhere in the virtual reality.
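Here is a minimal sketch of that arrangement in Python, assuming a plain in-memory list stands in for the database and using approximate real-world sizes. Templates hold the shared properties; instances add only coordinates. The class names are illustrative, not taken from any particular engine.

```python
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class Template:
    """Properties shared by every object of one kind."""
    name: str
    size_m: tuple[float, float, float]  # width, depth, height
    mass_kg: float
    color: str


@dataclass
class Instance:
    """One object that 'exists' in the world: a template plus coordinates."""
    template: Template
    position: tuple[float, float, float]  # x, y, z in world coordinates
    yaw_deg: float = 0.0  # orientation about the vertical axis (one angle, for brevity)


class World:
    """The virtual world as a tiny in-memory database of instances."""

    def __init__(self):
        self.instances: list[Instance] = []

    def add(self, template: Template, position, yaw_deg: float = 0.0) -> Instance:
        inst = Instance(template, position, yaw_deg)
        self.instances.append(inst)
        return inst

    def move(self, inst: Instance, dx: float, dy: float, dz: float) -> None:
        # Moving an object is nothing more than changing its coordinates.
        x, y, z = inst.position
        inst.position = (x + dx, y + dy, z + dz)


BOX = Template("box", (0.5, 0.5, 0.3), 1.0, "brown")
BASEBALL = Template("baseball", (0.074, 0.074, 0.074), 0.145, "white")  # roughly regulation size

world = World()
box = world.add(BOX, (2.0, 3.0, 0.0))
bx, by, bz = box.position
# A 3 x 3 layer of baseballs, positioned inside the box's footprint.
for i, j in itertools.product(range(3), range(3)):
    world.add(BASEBALL, (bx + 0.1 + 0.15 * i, by + 0.1 + 0.15 * j, bz + 0.037))

print(len(world.instances))  # 10: one box and nine baseballs
```

In this sketch, moving the box would not carry the baseballs along; engines usually handle that with parent-child transforms (a scene graph), which is omitted here.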

Placing a POV anywhere within that world — that is, giving the coordinates for a point of view — asks the system to render reality for that POV (so we can look at the box of baseballs from any angle).

The process of placing a POV in a virtual space filled with virtual objects and rendering how the space looks from that POV is well-explored, almost ancient, territory. Games and animated movies have been doing it for decades.
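The core of that rendering step is perspective projection: express each point relative to the viewer, then divide by its distance along the gaze direction. Below is a minimal pinhole-camera sketch, assuming z is "up" and the POV only turns left and right (yaw, no pitch or roll). Real renderers use matrices and a GPU, but the geometry is the same.

```python
import math


def project(point, pov_pos, yaw_rad: float, focal: float = 1.0):
    """Project a 3D world point to 2D image coordinates for one POV.
    Returns None if the point is behind the viewer."""
    dx, dy, dz = (p - c for p, c in zip(point, pov_pos))
    fx, fy = math.cos(yaw_rad), math.sin(yaw_rad)  # horizontal gaze direction
    forward = dx * fx + dy * fy  # distance along the gaze
    right = dx * fy - dy * fx    # sideways offset, positive = viewer's right
    if forward <= 0:
        return None
    # Farther things shrink toward the center: divide by the forward distance.
    return (focal * right / forward, focal * dz / forward)


# The same box, seen from three different POVs (eye height 1.6 m).
front = project((2.0, 3.0, 0.0), pov_pos=(0.0, 3.0, 1.6), yaw_rad=0.0)
side = project((2.0, 3.0, 0.0), pov_pos=(2.0, 0.0, 1.6), yaw_rad=math.pi / 2)
behind = project((2.0, 3.0, 0.0), pov_pos=(4.0, 3.0, 1.6), yaw_rad=0.0)
print(front, behind)  # (0.0, -0.8) None
```

Nothing about the box changes between the three calls; only the POV's coordinates do.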

The virtual room I designed for this blog’s Eighth Anniversary.

[One trick that makes virtual realities feel more realistic is procedural generation. Rather than having to specify each tree, or alternatively having all trees look alike, there is a procedure for creating a tree that uses randomized parameters to generate similar but distinct trees. Clouds, grass, and mountains are all good candidates for procedures.]
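A minimal sketch of the idea, assuming a tree can be reduced to a handful of numbers (the parameter names and ranges are illustrative, not botanical data). Seeding a per-tree random generator means the world only has to store a seed; the same tree comes back every time it is regenerated.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class TreeParams:
    height_m: float
    trunk_radius_m: float
    branch_count: int
    lean_deg: float


def make_tree(seed: int) -> TreeParams:
    """Procedurally generate one tree's parameters from a seed."""
    rng = random.Random(seed)  # per-tree generator: same seed -> same tree
    height = rng.uniform(6.0, 14.0)
    return TreeParams(
        height_m=height,
        trunk_radius_m=height * rng.uniform(0.015, 0.025),  # taller trees get thicker trunks
        branch_count=rng.randint(5, 12),
        lean_deg=rng.gauss(0.0, 3.0),  # most trees stand nearly upright
    )


# A thousand similar-but-different trees from a thousand integers.
forest = [make_tree(seed) for seed in range(1000)]
```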

Note that reality is only rendered for a specific POV. It might be done in real time as the POV moves around, but it is only ever done for that POV in that moment.

In virtual reality, things really only do appear when you look at them!
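Renderers make this literal with view culling: before drawing anything, they discard objects outside the POV's field of view. Here is a crude 2D sketch, assuming a horizontal field of view and a maximum draw distance (both parameters are illustrative); real engines test against a full 3D view frustum.

```python
import math


def visible(objects: dict, pov_pos, yaw_rad: float,
            fov_deg: float = 90.0, max_dist: float = 100.0) -> list:
    """Return the names of objects inside the POV's horizontal field of view.
    Only these would be handed to the renderer this frame."""
    half_fov = math.radians(fov_deg) / 2
    shown = []
    for name, (x, y, _z) in objects.items():
        dx, dy = x - pov_pos[0], y - pov_pos[1]
        dist = math.hypot(dx, dy)
        if dist == 0.0 or dist > max_dist:
            continue
        # Angle between the gaze and the direction to the object, wrapped to [-pi, pi).
        offset = math.atan2(dy, dx) - yaw_rad
        offset = (offset + math.pi) % (2 * math.pi) - math.pi
        if abs(offset) <= half_fov:
            shown.append(name)
    return shown


scene = {"box": (2.0, 3.0, 0.0), "tree": (-5.0, 3.0, 0.0), "rock": (3.0, 4.0, 0.0)}
print(visible(scene, (0.0, 3.0, 1.6), yaw_rad=0.0))  # ['box', 'rock']
```

Turn the POV around (`yaw_rad=math.pi`) and only the tree is rendered; the box and rock still exist in the database but are never drawn.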

§

So, as far as how the virtual reality looks, that’s the easiest part. It’s something we’ve been doing for a long time.

Currently we use a map that converts virtual reality data into pixels for a 2D screen. For a Matrix scenario, we need a map that converts VR data into nervous system data. For an upload scenario, presumably there is an obvious map from virtual object data to virtual mind.

There are other senses than visual, so our VR objects need properties describing how they feel, smell, sound, and taste. These just extend the set of visual and physical properties for objects. Part of rendering objects now includes those additional properties.

Again, the map converts them to nervous system data or to whatever the uploaded or simulated minds understand as sensory inputs.
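A sketch of what extending the properties might look like, assuming objects carry a sound level (in decibels at 1 m) and a smell with a fixed range. The inverse-square falloff for sound is standard physics; the all-or-nothing smell range is a deliberate simplification. As with vision, these values are computed only for a specific POV.

```python
import math

# A hypothetical object record: a visual property plus properties for other senses.
campfire = {
    "pos": (5.0, 0.0, 0.0),
    "color": "orange",
    "sound_db_at_1m": 60.0,
    "smell": "woodsmoke",
    "smell_range_m": 20.0,
}


def render_senses(obj: dict, pov_pos) -> dict:
    """Render one object's non-visual properties for one POV (sketch)."""
    # Clamp at 1 m so we never take log10(0) or exceed the 1 m reference level.
    dist = max(math.dist(obj["pos"], pov_pos), 1.0)
    return {
        # Inverse-square law: level falls by 20*log10(distance) dB from the 1 m reference.
        "hearing_db": obj["sound_db_at_1m"] - 20 * math.log10(dist),
        # Simplification: smell is either present within range or absent.
        "smell": obj["smell"] if dist <= obj["smell_range_m"] else None,
    }


print(render_senses(campfire, (-5.0, 0.0, 0.0)))  # {'hearing_db': 40.0, 'smell': 'woodsmoke'}
```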

§ §

Now there was a ringer in the list of object properties: weight.

Or more properly: mass.

All the other properties mentioned apply to how the object appears to us (including to all five senses).

Mass concerns how an object behaves.

Which brings up the idea of causality and physical law in the virtual reality… because there isn’t any unless we put it there explicitly.

For example, since objects aren’t physical and only exist as numbers, there’s no problem with them overlapping. Or with just floating in the air (exactly the way bricks do not).

Virtual objects don’t have weight or inertia, so a virtual book is as easy to lift as a virtual bulldozer… unless the virtual reality explicitly implements weight and inertia due to mass.

[What gave Neo and his friends “magic” powers in the Matrix was their ability to ignore the system’s programmed virtual rules of physics.]

§

Simple example of instances of objects rendered from a given POV. Note how objects can intersect and float.

Something as simple as enforcing the solidity of objects (like the walls and floors of buildings, not to mention objects) requires explicit programming that knows the size and shape properties of all objects.

[I remember how one of the advances in CAD-CAM software (circa early 1990s?) was the ability for it to detect when moving parts occupied the same space. We knew how to do it — that’s just math — we just needed fast enough computers to pull it off.]
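One simple, widely used form of that math is the axis-aligned bounding box (AABB) test: two boxes overlap only if their extents overlap on every axis. The sketch below assumes every object is approximated by such a box and simply rejects any move that would end inside an obstacle; real engines refine this with exact shapes.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class AABB:
    """Axis-aligned bounding box: lowest and highest corners in world coordinates."""
    lo: Vec3
    hi: Vec3

    def shifted(self, delta: Vec3) -> "AABB":
        return AABB(tuple(a + d for a, d in zip(self.lo, delta)),
                    tuple(a + d for a, d in zip(self.hi, delta)))


def overlaps(a: AABB, b: AABB) -> bool:
    # Intersect only if the extents overlap on x, y, AND z (touching doesn't count).
    return all(a.lo[i] < b.hi[i] and b.lo[i] < a.hi[i] for i in range(3))


def try_move(body: AABB, delta: Vec3, obstacles: list) -> AABB:
    """Apply a move unless it would put the body inside something solid."""
    moved = body.shifted(delta)
    return body if any(overlaps(moved, ob) for ob in obstacles) else moved


wall = AABB((2.0, -10.0, 0.0), (2.2, 10.0, 3.0))
player = AABB((0.0, 0.0, 0.0), (0.5, 0.5, 1.8))
player = try_move(player, (1.0, 0.0, 0.0), [wall])   # free space: the move succeeds
blocked = try_move(player, (1.0, 0.0, 0.0), [wall])  # would end inside the wall: rejected
```

A large enough step can still jump clean over a thin wall; engines guard against this "tunneling" with swept tests or smaller time steps.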

A decent VR requires object solidity at the least but should go as far beyond that in implementing basic physics as possible. Certainly, it should be easier to lift a book than a bulldozer. Gravity should make an appearance (objects shouldn’t be allowed to float).

One question is how real a virtual reality needs to be. The point of the Matrix was fooling biological humans, but uploaded or simulated minds might not need a fully fleshed-out reality.

They might not want one! Floating, for one, sounds like fun, and on some level, the whole appeal of being virtual is escaping physics and reality (like aging). One could hike up Everest if one wanted, but one could also just teleport to the top.

So it’s possible a VR doesn’t need to implement all of physics, but it certainly should implement much of it.

The easiest way to do it (the way it’s done now, to the extent it’s done now) is to allow objects to have physical properties that your VR engine uses to give them physical behaviors.
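A minimal sketch of properties driving behavior, assuming Earth-like gravity, a flat solid floor at height zero, and simple semi-implicit Euler integration. Mass is just a number stored on the object, but once the engine uses it, lifting a bulldozer (assumed here to be 20 tonnes) takes far more force than lifting a book.

```python
from dataclasses import dataclass

G = 9.81  # m/s^2, Earth-like gravity chosen for this sketch


@dataclass
class Body:
    mass_kg: float
    height_m: float   # height of the object's base above the floor
    vz: float = 0.0   # vertical velocity, m/s


def lift_force_needed(body: Body) -> float:
    """Force in newtons needed to hold the body up against gravity: F = m * g."""
    return body.mass_kg * G


def step(body: Body, dt: float) -> None:
    """Advance one time step: gravity pulls the body down until it rests on the floor."""
    body.vz -= G * dt
    body.height_m += body.vz * dt
    if body.height_m <= 0.0:  # the floor is solid: stop at it instead of passing through
        body.height_m = 0.0
        body.vz = 0.0


book = Body(mass_kg=1.0, height_m=2.0)
for _ in range(200):  # two seconds of virtual time at dt = 0.01 s
    step(book, 0.01)
print(book.height_m)  # 0.0: the book fell and now rests on the floor
```

Delete the `step` call and the book floats forever; that is the "no physics unless we put it there" point in code form.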

§ §

Speaking of behaviors, a virtual space is a coordinate space (usually 3D), but there is also a notion of time and of actions in the environment.

The simulation has a clock of some kind that ticks off moments of time. That enables dynamics within the system.
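A minimal sketch of such a clock, assuming a fixed tick length and a list of update functions (physics, weather, and so on) called once per tick. Virtual time here is just a counter, so it need not match wall-clock time; the simulation could pause, or run faster than real time, without anything inside it noticing.

```python
from typing import Callable


class Clock:
    """A simulation clock that ticks off fixed moments of virtual time (sketch)."""

    def __init__(self, tick_s: float = 1 / 60):
        self.tick_s = tick_s
        self.ticks = 0
        self.systems: list[Callable[[float], None]] = []  # each is called with dt once per tick

    @property
    def now(self) -> float:
        """Elapsed virtual time in seconds."""
        return self.ticks * self.tick_s

    def run(self, n_ticks: int) -> None:
        for _ in range(n_ticks):
            for update in self.systems:
                update(self.tick_s)  # every system advances by the same dt
            self.ticks += 1


calls = []
clock = Clock(tick_s=0.5)
clock.systems.append(calls.append)  # a stand-in "system" that just records each dt
clock.run(4)
print(clock.now)  # 2.0
```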

As with space, the physics of how time behaves depends on explicit programming. For example, the rules of Special Relativity would have to be explicitly coded into the dynamic behavior of the VR.

All the laws of physics have to be explicitly coded in, and some of it can be subtle. Friction, for example, or air turbulence, requires fine-grained properties and calculations.

(Temperature is an interesting one. What does it mean to be virtually too hot or too cold? What effect does that have on a virtual person?)

§

I haven’t gotten into how a person navigates around a virtual space or interacts with it, but this has gotten long and that should follow from what I’ve covered so far.

I can explore it further if anyone has an interest. It mainly involves converting motor neuron outputs into commands for the environment.
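A toy sketch of that conversion, assuming the second map has already decoded raw motor signals into named intents such as "turn_head" or "walk" (those intent names are hypothetical). Each intent becomes a command that changes the POV in the environment.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class POV:
    x: float
    y: float
    yaw_rad: float


def apply_motor(pov: POV, intent: str, amount: float) -> POV:
    """Turn one decoded motor output into a command on the environment (here, the POV)."""
    if intent == "turn_head":
        # Turning the head changes only the gaze direction.
        return POV(pov.x, pov.y, (pov.yaw_rad + amount) % (2 * math.pi))
    if intent == "walk":
        # Walking moves the POV `amount` meters along the current gaze.
        return POV(pov.x + amount * math.cos(pov.yaw_rad),
                   pov.y + amount * math.sin(pov.yaw_rad),
                   pov.yaw_rad)
    raise ValueError(f"unknown motor intent: {intent}")


pov = POV(0.0, 0.0, 0.0)
pov = apply_motor(pov, "turn_head", math.pi / 2)  # look to the left
pov = apply_motor(pov, "walk", 1.0)               # take a one-meter step that way
```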

Sci-Fi Saturday shout-out to E.E. “Doc” Smith and his 1947 novel Spacehounds of IPC. The main character is a “Computer” named Dr. Percival (“Steve”) Stevens. The book was written back when the term referred to a person who specialized in doing math.

Stay virtually real, my friends!

∇

November 2nd, 2019 at 6:36 pm

I agree that the virtual environment isn’t the big issue some make it out to be. To be sure, there are complexities, but those are the ones I feel most confident can be overcome.

I actually think wiring a biological human into such an environment would be harder than doing it with an uploaded mind (at least once we’re past figuring out how to do the upload in the first place). For the biological human, the equipment has to precisely reproduce the necessary signals for the biological human’s brain, and as you note, there are physical challenges.

For an uploaded mind, we can cheat by the expedient of simply having the mind believe what we want it to about the environment. So, if we want it to believe it’s looking at the Mona Lisa, and for some reason we can’t get an adequate representation of it in the virtual environment, we can just present a kludgy one but have the mind believe it’s looking at a perfect representation.

Of course, this could have some dark implications for minds in virtual environments.

This discussion reminds me of a sequence from Greg Egan’s novel Diaspora, where a character who grew up in virtual is dealing with the outside world:

November 3rd, 2019 at 9:47 am

“I actually think wiring a biological human into such an environment would be harder than doing it with an uploaded mind…”

Yep. As I said in the post, it “requires identifying the connecting nerves of a given person which is likely very challenging.” Definitely a harder task than the virtual reality itself!

I still think uploading minds presents the biggest challenges. It requires knowing everything we’d need to know to plug into a brain, plus a lot more about the details of how the brain works. (Plugging in should only require a thorough understanding of the I/O.) On top of that we need the scanning technology, so quite a formidable task.

“For an uploaded mind, we can cheat by the expedient of simply having the mind believe what we want it to about the environment.”

Very true. Like the hosts in Westworld, “That doesn’t look like anything to me.”

Our understanding of minds that well, as you say, has implications. Egan has some short stories that involve characters manipulating their minds in ways that change their fundamental opinions such that they’re glad to see things that way now. Dark indeed.

(In one of them, a hit man offers his victims a “pill” that makes them a-okay with being killed. (In fact, it’s a nasal spray containing nanites programmed to alter neural connections. Egan’s ideas are awesome! Nasal spray makes so much sense.))

“This discussion reminds me of a sequence from Greg Egan’s novel: Diaspora,…”

As I think you know, I’m a big fan of Egan’s (despite my skepticism of computationalism 🙂), and I especially enjoy his VR writing. (Diaspora and Permutation City really explore VR, but it shows up in so much of his writing.) He’s a hard SF writer, so he tries to get the science as correct as possible, and his VR stuff feels really legit to me. Very much, “Yeah, it would probably be something like that.”

And as your quote points out, the goal of VR need not be fidelity to the real world. The VR world might lack aspects of reality, but it has the potential to be a lot richer and more fun. (And uploaded minds can be edited to stop missing something that isn’t there!)

November 3rd, 2019 at 11:59 am

Those examples remind me of a character in Diaspora who modifies themselves not to care anymore, including having no ability to reason their way back out of it, and is subsequently a pale, listless husk of their former self.

But the implication I meant is darker than self-modification: that the mind can be modified or controlled by the environment. In essence, the environment could take a mind for its skill and knowledge, but alter it so that the personality is compliant with someone else’s wishes. Borg-like assimilation.

It’s the best argument I know not to run your mind in someone else’s system. Other authors, such as Nagata and Rajaniemi, explore these darker themes.

November 3rd, 2019 at 12:43 pm

I’m familiar with Rajaniemi, but haven’t explored Nagata yet.

Funny thing: After I finished the Quantum Thief series, I bought an ebook of his short stories. As I start reading them, each one seems really familiar. After four or so, I think, “I can’t have read all these in other collections… but I know I’ve read them.” I finally figured out a friend had loaned me a hard copy version a couple of years ago. (I read so much I often don’t remember the specifics, just the content. But it’s familiar when I re-read.)

I enjoyed reading those short stories again. Rajaniemi is an interesting author. Quantum Thief was poetic hard SF with lots of hand-waving, but those short stories venture into pure horror and fantasy. In all cases poetic — he goes out of his way to avoid explaining stuff (let alone info dumping).

“But the implication I meant is darker than self modification, but that the mind can be modified or controlled by the environment.”

Right, I’m with ya. Like the hosts in Westworld.

Although in that world they hadn’t solved mind uploading (an interesting choice, I thought). When that’s a given, there are all sorts of queasy implications!

How would we feel about a virtual copy of oneself being enslaved — and liking it? (That theme comes up in mind-control scenarios: happy mind slaves or pod people.) What about a copy of oneself suffering or dying?

So, yeah, totally dark!

I’m glad mind uploading is, at best, far off in the future. I suspect we’ll be better off if it turns out to be impossible, be it effectively so or literally.

November 3rd, 2019 at 4:02 pm

Mind uploading in a story opens up an enormous can of worms. If you posit it as being possible, it largely defines the story from there, unless the story is set long after uploading began. I think Westworld wanted to explore the possibility but keep the series from being about it, so they came up with the treatment they did.

I don’t share your aversion to uploading. I’d be happy if it showed up in my lifetime, although I don’t expect it to. Yes, like any new technology, it would lead to problems. But I think the possible benefits would be worth the risk. Even in Egan’s stories, though, there are humans who are repulsed by it.

November 3rd, 2019 at 4:06 pm

Yeah, I think Westworld was mostly focused on the hosts. As you say, mind uploading would have defined things rather differently.

I’m not opposed (morally or socially) to uploading if it proves possible. I’d love to live in a virtual reality! One reason I love Egan’s stories is the possibilities they suggest!

November 3rd, 2019 at 8:55 am

“The crucial thing about this map, this POV, is that it can freely rove around the virtual environment and look at what it wants.”

Crucially it is not simply a matter of looking at the virtual reality. It is interacting with it and having it act back like the real world. You need to be able to simulate completely the behavior of everything in the world. When the world can be perfectly predicted will be when we in effect know everything about it.

Of course various cartoonish realities are possible and may be satisfactory for some for a while.

November 3rd, 2019 at 10:37 am

Can I start by asking you about the exact nature of your objection(s)? Is it just that you see issues with the fidelity of the simulation, or do you also have doubts about the ability to simulate an apparent world that can be freely explored? From your comment I’m guessing the former?

“Crucially it is not simply a matter of looking at the virtual reality. It is interacting with it and having it act back like the real world.”

Mos def! As I said at the end of the post, “I haven’t gotten into how a person navigates around a virtual space or interacts with it, but this has gotten long…”

We can talk about that here. If it looks like it’s going to get long and detailed, maybe I’ll bang out another post picking up where this one left off.

For one thing, to be clear, the maps are two-way. We’re converting VR data into inputs for the mind and we’re converting outputs from the mind (motor actions, mainly) into data for the VR. So, for instance, if the mind is sending motor signals to make the body walk a certain way, the POV changes to reflect that.

This would also generate feedback signals into the brain — the feelings of walking. (A bit like how you hear yourself when you speak on the phone, what’s called “sidetone.”)

The motor signals just for turning the head would alter the POV. Other motor signals indicate talking, which would put the person’s voice into the VR. Motor signals to the arms and hands would manipulate objects in the VR.

“You need to be able to simulate completely the behavior of everything in the world.”

Do we really, though? (See the comment from Mike above about why maybe not. Absolute fidelity to the real world may not be desirable. Certain “cartoonish” aspects — popup VR info and control screens, for instance — might make a VR more useful.)

Given the interest in Augmented Reality I’m not sure fidelity is necessarily a requirement.

How real do clouds and trees need to be? Must we generate clouds by simulating the principles of atmospherics and fluid dynamics and gas pressures, or can we use a simpler algorithm that generates things that just look like clouds?

Same question for trees. Must we simulate their biology — recapitulate their growth from seeds in each case — or can we use a tree generation algorithm that makes look-alikes? (Do trees need to use photosynthesis?)

To some extent, it depends on what we plan (or need) to do with clouds and trees. If we’ll only ever look up at clouds, appearances are all we need. If we plan to fly through them, and we want natural effects, then we need the physics. (Which we do know a fair bit about — enough to generate natural effects probably — the contrails and vortexes.)

Trees likewise. It’d be nice to climb them, so they want some physical solidity, but that’s true of all objects in VR. (In reality, solidity is due to chemical laws. In VR, it’s due to algorithms.) But do we want to cut them down sometimes or throw logs on the fire? That requires more complicated algorithms, but there’s nothing utterly unknown about the dynamics required.

(As an aside, it’s not unusual in animated movies now that character simulations involve a skeleton that defines possible motion, a musculature that enables motion and defines shape, a skin that defines appearance, and clothing that emulates properties of cloth. These are four interacting distinct levels of simulation used to make characters appear lifelike.)

Something else: That we can’t predict the weather faithfully is due to both the inability to collect data at sufficient resolution — even something as crude as a reading for every cubic mile — and that chaos causes calculation to diverge from reality even if we could get the resolution.

But that just means we can’t predict the weather in the real world. That doesn’t mean we can’t simulate an imaginary weather system. In a VR, we control every particle of space, so resolution isn’t an issue. And we’re fairly familiar with the physics involved. The only big issue for a realistic simulation of an imaginary world is computing power.
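The logistic map, a standard textbook chaotic system, illustrates both halves of the weather point: a starting error of one part in ten billion grows until the two runs diverge, yet rerunning from exactly the same numbers reproduces the same result every time. Inside a VR, the starting numbers are exact by construction, so the remaining cost is computation. (The logistic map is a stand-in here, not a weather model.)

```python
def logistic_trajectory(x0: float, r: float = 4.0, steps: int = 50) -> list[float]:
    """Iterate the logistic map x -> r * x * (1 - x), which is chaotic at r = 4."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1 - xs[-1]))
    return xs


exact = logistic_trajectory(0.2)
measured = logistic_trajectory(0.2 + 1e-10)  # a "measurement error" of one part in ten billion

# Early on the runs agree closely; the tiny error roughly doubles each step.
early_gap = abs(exact[1] - measured[1])
worst_gap = max(abs(a - b) for a, b in zip(exact, measured))
```

The real world only gives us `measured`; a simulation always has `exact`.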

The result of decades of video games and animated movies is lots of experience in simulating roughly real worlds — including behaviors and interactions. As I mentioned in the post, the quality of those simulations is a bullet point in the “We live in a VR” hypothesis. The premise there is, if we’re this good now, just imagine what future tech might enable!

November 3rd, 2019 at 10:39 am

(Well, that was almost a second post right there! 😀 )

January 8th, 2020 at 1:41 am

I think that VR currently isn’t quite ready for gaming. I am a teacher, though, so I bring my headsets (a Gear VR and an Oculus Go) into the classroom: when we finish a project or read a book, if there is a reasonable tie-in, I will consider bringing out a headset and putting it on the students. As I wrote on my blog, you can’t see the students’ eyes, but you can see their jaws drop when they are sitting face to face with a 50 ft dinosaur. That is really awesome. Children have so much creativity that they see past the screen-door effect or the light bleed from the bottom and just lose themselves in the moment. So for games I will keep the controllers in my hand, but for experiences I think VR has some legs in it yet.

January 8th, 2020 at 9:45 am

Oh, I don’t doubt that about kids for a second. I’ve seen them turn sticks and chunks of ice into all sorts of things. As you say, they are filled with creativity. I love the way you’re using VR in the classroom. Right on!

I don’t doubt VR will continue to improve. The Virtual Reality Hypothesis is based on how good it is now and how likely it is that it will get even better.

Kudos for being a teacher, which I know can be a challenging career path (but such an important and noble one). My mom was a teacher, and my sister is one — lots of teachers and preachers in my family tree. 😉

March 13th, 2024 at 1:37 pm

[…] That realism is a bullet point in the Virtual Reality hypothesis. If we were in that water simulation, we would find it (simulated) wet. The idea in the VR hypothesis is that, given how good simulations are now, just imagine what they’ll be like down the line. A Matrix scenario isn’t unthinkable. […]