All my life I never noticed that, when February has 28 days (non-leap years), the dates in March fall on exactly the same days of the week as the dates in February. It took writing this TV Tuesday post for March 24, and realizing last month’s edition was also on the 24th, to notice it.
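
A 28-day February is exactly four weeks, so each March date comes a whole number of weeks after the same February date, and the weekdays repeat. In a leap year, the extra day pushes March one weekday later. Here is a quick sketch of a check using Python’s standard `datetime` module. The helper name `weekdays_align` is made up for illustration.

```python
from datetime import date


def weekdays_align(year):
    """True if Feb d and Mar d share a weekday for every d in 1..28."""
    return all(
        date(year, 2, day).weekday() == date(year, 3, day).weekday()
        for day in range(1, 29)  # days 1 through 28 exist in every February
    )


print(weekdays_align(2025))  # True: 2025 is not a leap year
print(weekdays_align(2024))  # False: the leap day pushes March one weekday later
```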

Seventy revolutions around the local star, yet I can still find new things lurking in what is by now a vast pile of ordinary. It’s a double-dip pleasure: firstly, the delight of the new thing; secondly, the delight of still being delighted by delightful things (and wordy whimsy).

More to the point, I’ve been delighted by some things I’ve been watching lately, including actually going to the movies this past Sunday.

Continue reading

11 Comments | tags: American Gods, Andy Weir, Bewitched, Japanese anime, Project Hail Mary, Rurouni Kenshin | posted in TV Tuesday

We live in a noisy world. It was never quiet, but what used to be a natural background has become an artificial assault constantly seeking to capture and at all costs hold our attention.

Rather than wind, waves, or animals, the modern blare generally comes from two sources: the sellers and each other. The internet bestowed upon us, for better or worse, the ability to be noisy on a global scale.

Modern technology grants us one and all the ability to easily contribute to the din.

Continue reading

2 Comments | tags: advertising, commercials, noise | posted in Society, Sunday Sermons, The Interweb

Winter, that is.

Except for some small piles in shaded areas, the snow was gone.

Continue reading

16 Comments | tags: Minnesota, snow, snow storm, weather | posted in Life

Pardon me for going momentarily meta, but these three-paragraph opens (hopefully with a pithy cliffhanger punchline for the third) are sometimes a real challenge. The intent is a recognizable style that acts like a watermark.

Some opens are more challenging than others, though. The right half-dozen or so sentences comprising three thoughts (with a hoped-for haiku-like third) can take forever to whip into shape.

Friday Notes are among the hardest because there isn’t much to say other than “here we go again…”

Continue reading

12 Comments | tags: adaptations, AI, Bentley, Dogma (movie), opinions, seconds are long, Substack, weather | posted in Friday Notes

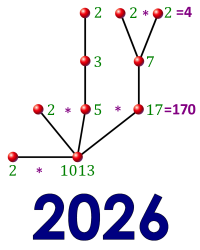

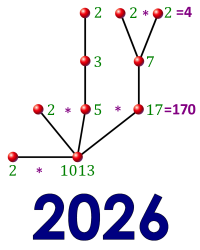

Something old and something new collided last week in a way that I found very engaging. The old was a science fiction series I read long ago, the Heechee saga by Frederik Pohl (1919-2013). What’s relevant here is that the alien Heechee used a number system based on prime numbers.

The new was this recent Substack post by Richard Green, a math writer and teacher. It, too, features a system based on primes, and I realized it solves a problem that has long bothered me about the putative Heechee number system.

Let me explain…

Continue reading

2 Comments | tags: Frederik Pohl, Heechee, prime numbers, science fiction books | posted in Math

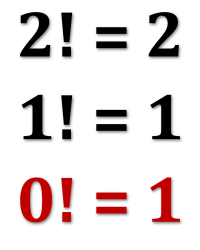

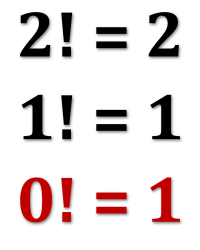

Recently, I learned an interesting new math trick involving what are known as dual numbers. These are compound numbers similar in form to complex numbers but with a different kind of “magic” element enabling their behavior.

What makes them interesting to people like me is the surprising way they provide a fast and easy technique for software to generate the derivative of a given function.
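
A dual number has the form a + bε, where the “magic” element ε is nonzero but ε² = 0. Because every ε² term vanishes, evaluating a polynomial f at x + ε gives exactly f(x) + f′(x)ε, so the ε coefficient of the result is the derivative. The minimal Python sketch below illustrates that idea (forward-mode automatic differentiation). It supports only addition, subtraction, and multiplication, and the names `Dual`, `lift`, and `derivative` are illustrative. They are not necessarily how the post itself implements it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dual:
    """A dual number a + b*eps, where eps is nonzero but eps**2 == 0."""
    real: float  # a: the ordinary value
    eps: float   # b: the coefficient of the "magic" element eps

    def __add__(self, other):
        other = lift(other)
        return Dual(self.real + other.real, self.eps + other.eps)

    __radd__ = __add__  # addition is commutative

    def __sub__(self, other):
        other = lift(other)
        return Dual(self.real - other.real, self.eps - other.eps)

    def __rsub__(self, other):
        return lift(other) - self  # handles e.g. 5 - x

    def __mul__(self, other):
        other = lift(other)
        # (a + b eps)(c + d eps) = ac + (ad + bc) eps + bd eps**2, and eps**2 = 0
        return Dual(self.real * other.real,
                    self.real * other.eps + self.eps * other.real)

    __rmul__ = __mul__  # multiplication is commutative


def lift(x):
    """Treat a plain number as a dual number with a zero eps part."""
    return x if isinstance(x, Dual) else Dual(float(x), 0.0)


def derivative(f, x):
    """Evaluate f at x + 1*eps; the eps coefficient of the result is f'(x)."""
    return f(Dual(float(x), 1.0)).eps


# f(x) = 3x^2 + 2x + 1, so f'(x) = 6x + 2
print(derivative(lambda x: 3 * x * x + 2 * x + 1, 2.0))  # 14.0
```

Supporting division or functions like sine means adding one rule per operation. For example, sin(a + bε) = sin a + b·cos(a)·ε. The derivative still falls out of ordinary evaluation, with no symbolic manipulation and no finite-difference approximation.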

As an unrelated bonus, there’s also a simple explanation of why zero-factorial equals one rather than zero (which might seem more intuitive).
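
One common way to see this, which is not necessarily the argument the post itself makes, is to run the factorial recurrence backwards:

$$
n! = n \cdot (n-1)! \quad\Longrightarrow\quad (n-1)! = \frac{n!}{n}
$$

$$
0! = \frac{1!}{1} = \frac{1}{1} = 1
$$

If 0! were 0, then 1! = 1 · 0! would also be 0, and so would every factorial after it. Equivalently, 0! is the empty product, which is 1 for the same reason an empty sum is 0.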

Continue reading

1 Comment | tags: computer programming, derivatives, dual numbers | posted in Computers, Math

The last edition of TV Tuesday ended with the line: “There’s more to report, but I’ve hit my word ceiling and will abruptly stop.” That was three weeks ago, so now there’s even more to report. (Baseball starts soon, and that will have a big impact on how many shows and movies I can watch.)

That last post focused on movies I’d seen. This time much of the focus is on a related pair of TV shows I finished watching. One is a French TV series about a female “Sherlock Holmes” who works as a police consultant. The other is its American adaptation.

I like the French original better. (No surprise there, right?)

Continue reading

9 Comments | tags: adaptations, Audrey Fleurot, Bill Murray, Christopher Nolan, Christopher Priest, Jim Jarmusch, Kaitlin Olson, Sean Connery, Ted Danson | posted in Movies, TV Tuesday

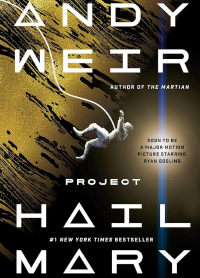

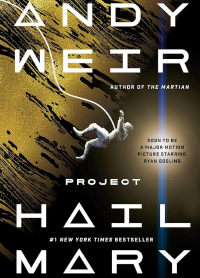

This past week I read and very much enjoyed Project Hail Mary (2021), by phenom Andy Weir. This is his third novel, his third time with an award-winning bestseller, and the third time Hollywood has acquired the rights for a film adaptation.

All three of his books are what I call “diamond-hard” science fiction — projections of future technology with a bare minimum of gimmes (such as warp drive). Bonus points if there are none.

This story has a somewhat magical material that plays a key role, not to mention an exotic alien lifeform that’s the raison d’être for the whole story.

Continue reading

14 Comments | tags: alien contact, aliens, Andy Weir, Mars, science fiction, science fiction books | posted in Books, Sci-Fi Saturday

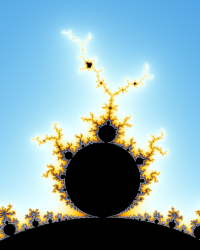

Time for another Mandelbrot Monday. I’ve mentioned before that aimlessly playing around with Mandelbrot zooms gets old fairly quickly. I find that I do it for a little while, lose interest for a long while, and then pick it up again for a little while.

I’m in the lost-interest phase right now and have been for a couple of months. I think I’ll cool my jets until May, when I’m planning a series of posts (“Mandelbrot May”) exploring the Mandelbrot set and how images of it are made.

But I still have images from previous phases to share, so off we go…

Continue reading

Leave a comment | tags: Mandelbrot fractal, Mandelbrot Monday, Ultra Fractal | posted in Math

Today’s Friday Notes post is a small first: I’ve never published one on the sixth of the month (until today). A bit more significantly, this one is early in the month. I’ve discovered a strong bias towards publishing these posts in the latter half of the month: only 14 posts before the 16th of the month versus 44 after (very close to a 25/75 split).
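
Taking the two counts above at face value, the split among the 14 + 44 = 58 posts works out as:

$$
\frac{14}{58} \approx 0.241, \qquad \frac{44}{58} \approx 0.759
$$

That is roughly 24/76, just shy of an even 25/75.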

As it turns out, I have plenty for a post (including some stuff left over from the previous post). And, of course, there’s always the weather and various other charts.

So, let’s get to it…

Continue reading

11 Comments | tags: charts, email spam, Grace Hopper, Martin Short, Richard Feynman, snow, Steve Martin, weather | posted in Friday Notes
