Indulging in another round of the old computationalism debate reminded me of a post I’ve been meaning to write since my Blog Anniversary this past July. The debate involves a central question: Can the human mind be numerically simulated? (A more subtle question asks: Is the human mind algorithmic?)

An argument against is the assertion, “Simulated water isn’t wet,” which makes the point that numeric simulations are abstractions with no physical effects. A common counter is that simulations run on physical systems, so the argument is invalid.

Which makes no sense to me; here’s why…

The human brain is a physical system, and we can model physical systems in various ways.

We can make a physical copy, often with a different size, usually with different materials. The copy may be functional to any degree from not at all to fully, depending on our ability to create such a physical model.

Alternatively, we can create a numerical model that describes the physical system.

Often such numerical models can be “run” over time to describe the dynamics of the simulated system. Computationalism is the idea that a numerical model of the brain (or possibly of the mind) will describe everything a brain does.

In particular, that the simulation would be conscious. (Which I’ll define here as being self-aware, cogently coherent, and capable of meta-thought.)

§

Effectively, that means we provide input numbers the running simulation recognizes as sense data in its virtual world. These numbers can reflect a real world or an imaginary one.

The simulation manipulates the numbers to produce new numbers, some of which the system interprets as outputs. Generally speaking, these are motor outputs that drive muscles.

If it works, the output numbers interpreted as speech and action should reflect an active conscious mind.
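To make the "numbers in, numbers out" picture concrete, here is a minimal sketch of such a loop. Everything in it is an assumption for illustration: the `run_simulation` and `leaky_accumulator` names are hypothetical, and the one-line "model" is a stand-in, not a claim about how a brain simulation would actually work.

```python
from typing import Callable, List

def run_simulation(step: Callable[[float, float], float],
                   sense_inputs: List[float],
                   initial_state: float = 0.0) -> List[float]:
    """Feed input numbers to a model one tick at a time and collect output numbers."""
    state = initial_state
    outputs = []
    for x in sense_inputs:
        state = step(state, x)   # the simulation manipulates numbers into new numbers
        outputs.append(state)    # some of those numbers get read as "outputs"
    return outputs

def leaky_accumulator(state: float, x: float) -> float:
    # Hypothetical stand-in model: keep half the old state, add the new input.
    return 0.5 * state + x

motor_outputs = run_simulation(leaky_accumulator, [1.0, 0.0, 2.0])
print(motor_outputs)  # [1.0, 0.5, 2.25]
```

Whether we call the input list "sense data" and the output list "motor commands" is an interpretation we apply from outside; the loop itself only ever touches numbers.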

§

The question is whether it will work. There are two opposing propositions:

① A numerical simulation can describe a physical system to a high degree of precision — in some cases greater precision than an analog copy can accomplish.

② Simulated X isn’t Y. (Simulated water isn’t wet. Simulated airplanes don’t fly. Simulated earthquakes don’t knock down buildings. Etc.)

Which proposition “wins” ultimately depends on what consciousness really is.

If it exists such that describing the system’s operation generates it, then a simulation should produce it. But if it supervenes on physical properties of the system, it might not.

An example that illustrates this nicely is lasers.

We can (and do) simulate laser behavior with a high degree of precision. We understand the physics of the physical system, so our numerical models are very good.

But no simulation of a laser, no matter how accurate, can produce photons.

That requires specific physical materials in a specific physical configuration (and has some specific energy requirements also).

[There is a sub-topic about exactly what the simulation is of, the brain or the mind. The former models the physical organ, similar to how we might model a heart or kidney. The latter involves the more algorithmic approach of replicating mind functionality. I treat them as the same here.]

§

Computationalists, very understandably, find the second proposition uncomfortable (because it might be true). As I mentioned above, a common counter is the assertion that the simulation is running on a physical system.

Which, as I also mentioned, makes no sense to me.

I can read it in two ways:

Firstly, that a numerical simulation can, through inputs and outputs, interact with the physical world.

Secondly, that the numerical simulation is, itself, running on physical hardware and all the information involved has physical instances in RAM or voltages or whatever.

My perception is that people usually mean the second sense.

The first sense dodges the issue in that the issue is whether those inputs and outputs engage a conscious mind within the simulation. The second sense seems to answer the argument that physical systems are physical but information systems are abstract.

§

But of course these numerical systems are physical. That’s not what the second proposition is getting at.

The second proposition is saying that, no matter how accurately the numbers describe water, those numbers can never be wet. No matter how those numbers are physically reified.

It’s completely irrelevant that a numerical system is, itself, physical. (Because of course it is.)

What’s relevant is the nature and content of the information in that system.

§ §

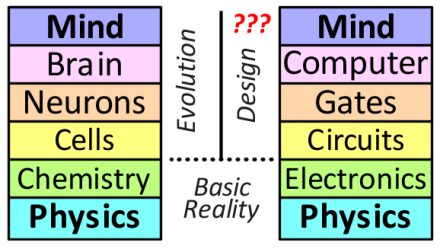

Which brings me to what I mentioned briefly in the Anniversary post and never got back to, the Mind Stacks:

The human brain/mind (left) and a simulated mind (right).

These are intended to represent the structure hierarchies involved in two physical systems that might implement conscious minds (although the one on the right is currently an open question).

What I tried to do was find equivalencies between major organizational levels, starting with shared basic physics at the bottom of each stack.

I equate the biochemistry of humans with the electronics of machines as being a basic next level. Above that are cells (or biology in general) equated with electronic circuits — these levels organize the level below them into generally useful units.

So, the bottom three levels apply to all living things (left) and all electron-using devices (right).

Neurons and logic gates (which are cells and circuits, respectively) are the basic building blocks of “thinking” systems — systems that make apparent choices or decisions based on inputs.

The next level up organizes neurons and gates into functioning machines, a brain and a computer, respectively. (And it’s really this very hierarchy that gives people the idea that computers can have minds in the first place.)

Finally, at the top, we know brains give rise to minds, but we’re not sure about computers just yet. Mainly because we don’t know exactly how brains give rise to minds.

The diagram also notes that the biological organization using chemistry is accomplished through evolution whereas the computational organization using electricity is designed.

§

What requires serious unpacking is the box labeled Computer. The whole computationalism debate centers on what’s going on inside that box.

What the diagram doesn’t show is that Computer is actually a combination of software (Program) and hardware (Engine).

What’s crucial towards the main point (that numeric systems being physical doesn’t matter) is that all the physicality in the left stack is directly linked with generating Mind whereas nearly all the physicality in the right stack is not.

To see this, recognize that the physical system that enables Computer is the same regardless of what software is run. The behavior of the physical system is identical — logic gates act the same no matter what software runs.

The Engine that enables Computer is not associated with Mind.

It is, in fact, a basic tenet of Computer Science that software is agnostic about the engine that runs it.

So, the entire physical part of the right stack is unrelated to the software, which means that the numeric system being physical is irrelevant.

In contrast, everything in the left stack directly participates in generating mind. There is no discontinuity as you work upwards in the stack.

But there is a major discontinuity in the Computer box in the right stack. What’s above it (Mind) isn’t directly associated with what’s below it.

§

In fact, there are a number of levels of discontinuous abstraction in that box.

The CPU itself is likely a self-contained system — an implementation of the abstraction of the CPU. On top of that is the physical architecture of the computer — another implementation of an abstraction.

Then there’s the operating system which can involve several levels of abstraction all on its own (if high-level services are wrapped around an O/S core, plus there is the BIOS level).

On top of that, software is usually written in a programming language, which is another level of abstraction.

In any event, the running application software is the top-level abstraction, and it is only at this top level that the causality of the numeric model exists.

Bottom line: Any causal topology implemented by the system is entirely disconnected from the physical causality of the system.

So, the physicality of a numerical simulation is irrelevant to what it simulates.

§

After all, a numerical simulation can only give us numbers describing what the simulated system does.

Another fundamental tenet of computer science is that which physical system generates the numbers is irrelevant. Numbers in, numbers out; all that matters is the calculation.
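As a small illustration, here is a sketch in which the same calculation runs on two different "engines": the host language's own arithmetic, and a tiny stack machine stepping through a program. Both engines and their names are hypothetical, made up for this example.

```python
def direct_engine(a: int, b: int, c: int) -> int:
    # Engine 1: the host language's built-in arithmetic.
    return (a + b) * c

def stack_engine(a: int, b: int, c: int) -> int:
    # Engine 2: a tiny hypothetical stack machine running a "program".
    program = [("push", a), ("push", b), ("add", None),
               ("push", c), ("mul", None)]
    stack = []
    for op, arg in program:
        if op == "push":
            stack.append(arg)
        elif op == "add":
            y, x = stack.pop(), stack.pop()
            stack.append(x + y)
        elif op == "mul":
            y, x = stack.pop(), stack.pop()
            stack.append(x * y)
    return stack.pop()

result_direct = direct_engine(2, 3, 4)
result_stack = stack_engine(2, 3, 4)
print(result_direct, result_stack)  # 20 20
```

The two engines do very different things internally, yet the calculation and its result are identical. Pen and paper would serve as a third engine just as well.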

So “The simulation is physical” isn’t a useful argument. It ignores the nature and content of the information the system processes. [For more about physical systems vs numeric simulations, see Magnitudes vs Numbers.]

It all boils down to those two propositions.

It boils down to what consciousness actually turns out to be.

Stay propositioned, my friends!

∇

October 26th, 2019 at 7:44 pm

One theory states that everything that exists is nothing more than data on the surface of a black hole.

October 27th, 2019 at 12:31 am

You’re referring to the holographic principle? What connection are you seeing with the blog post?

October 27th, 2019 at 2:42 am

Maybe (wet) water is a simulation.

October 27th, 2019 at 8:55 am

I’m not at all sure how you get to that conclusion. The holographic principle isn’t really about simulations. It’s something that sprang out of String Theory, and its main application so far seems to involve AdS space, so it may have nothing to do with reality as it actually is.

October 27th, 2019 at 11:05 am

Sorry, that seems brusque. Too early in the morning! What I mean is that I don’t understand how that applies to the post. Are you thinking deliberate simulation or just that reality might actually be on a two-dimensional surface? (What confused me is that the latter case wouldn’t be a simulation so much as a weird ontology.)

Either way (and there are various “reality is a simulation” hypotheses), water is wet in whatever we have that passes as reality. Numerical simulations of water that we accomplish in that reality are not wet. So the distinction still applies regardless of the underlying ontology.

October 27th, 2019 at 8:59 am

“Any causal topology implemented by the system is entirely disconnected from the physical causality of the system.”

Do you mean this literally? If so, how then does the “numerical” system work if it is “entirely disconnected” from the causality of the physical system?

It seems like you’re making a category mistake here, confusing different levels of description of the same system for ontologically different systems. The system can be described in purely physical terms, or in higher level abstract terms. Characterizing them as separate seems like a reification of different models we find convenient to describe the same overall reality.

Executing software can be described in terms of its subject matter, or in terms of numbers, or in terms of transistor voltage states and electron flows. That we often don’t find the last a convenient way of discussing things only comes down to the limited ability of human cognition, not anything ontological.

Terminology issues aside, it seems like what you’re arguing for here is something like Searle’s biological naturalism. My response to both his and your proposition is, again, to ask, what is missing in the information processing simulation?

You keep falling back to the water and laser analogies, where it’s easy to identify the missing elements (the way water interacts with its physical environment, and photons emitted). What’s the equivalent in the brain that is being omitted?

If you say “physicality”, what in particular about that physicality? As James of Seattle keeps asking, what specifically makes this something other than an information system simulating another information system?

October 27th, 2019 at 10:48 am

[Since this is a path we’ve explored before with, perhaps, some frustration on both sides, I’m going to be very specific and detailed, so I hope you’ll bear with me. Maybe at the very least we can clearly define the boundary between our views. Or at least fully grasp what the objections are (because I’m not sure I really do).]

“If so, how then does the ‘numerical’ system work if it is ‘entirely disconnected’ from the causality of the physical system?”

The numerical system can be executed with pen-and-paper, or with logic gates, or with some sort of mechanical system. It’s basic Church-Turing thesis that computation and substrate are separate.

If you recall Chalmers’ notion of a CSA, that notion represents the entirety of the numerical simulation as a single data object — so it has no causality at all (other than the virtual causality simulated by the numbers).

The whole point here is that the causality implemented by the simulation most definitely is ‘entirely disconnected’ from the causality of the physical system running it, be it pen-and-paper or a computer.

“It seems like you’re making a category mistake here, confusing different levels of description of the same system for ontologically different systems.”

I’m sorry, I don’t understand what that mistake is. Can you cite something specific?

“The system can be described in purely physical terms, or in higher level abstract terms.”

By “system” do you mean any system, the brain specifically, or the brain and computer stacks?

What do you mean by “described in purely physical terms”? Such as? Do you mean like a physical model of a real physical system (a model airplane, for instance)?

“Characterizing them as separate seems like a reification of different models we find convenient to describe the same overall reality.”

I don’t understand. How is a characterization a reification? How do “convenient” and “overall reality” figure into it? Can you cite something and show me what’s wrong with it?

“Executing software can be described in terms of its subject matter, or in terms of numbers, or in terms of transistor voltage states and electron flows.”

I agree those are three ways to describe executing software. All three could be complete enough to capture what’s important about the executing software.

Which isn’t surprising, since executing software is a numeric simulation and therefore has an abstract description and can be multi-realized.

“That we often don’t find the last a convenient way of discussing things only comes down to the limited ability of human cognition, not anything ontological.”

I agree with that, too.

But so? You seem to pack a lot into “can be described in terms of its subject matter” but how does that apply? Executing software is itself a description, so what does describing its subject matter accomplish?

“Terminology issues aside,…”

What terminology issues?

“…it seems like what you’re arguing for here is something like Searle’s biological naturalism.”

(Why would you say that when you know I support Positronic Brains as likely?) I tried to be clear that I was speaking here solely about computationalism. This is entirely about numerical simulations versus physical objects.

“My response to both his and your proposition is, again, to ask, what is missing in the information processing simulation?”

I know you keep asking, but you don’t seem to accept the answer: an explanation for phenomenal experience. And by “information processing” do you mean the broader sense that includes transistor radios, or do you mean the specific CS sense of digital algorithms?

“You keep falling back to the water and laser analogies, where it’s easy to identify the missing elements”

You’re downplaying the point of those analogies, which is that no numeric simulation has the physical effects of the physical system. That’s not something to ‘fall back to’ but the basic argument against computationalism.

“What’s the equivalent in the brain that is being omitted?”

I can only give you the same answer I’ve always given you: phenomenal experience.

Put the label “illusion” on it if that makes you feel better, but it’s still a real thing every human experiences, and we don’t know what it is or why or how it exists.

And there is no account in “information processing” (however described) that gives any real hint of an explanation, so isn’t that clearly something that’s “being omitted?”

“If you say ‘physicality’, what in particular about that physicality?”

I’ve explained that in this post (and in this post (and in many others)).

I have to ask: Why do you not accept these explanations? How do you conflate a real physical system with a numerical description of that system? Do you really think there is no difference? I don’t understand.

“As James of Seattle keeps asking, what specifically makes this something other than an information system simulating another information system?”

I’m not sure I follow, but I’ll take “this” to mean ‘executing simulation of a brain or mind’ and the first “information system” to mean ‘a computer running software’ and the second “information system” to mean the brain ‘running’ the mind. Yes?

Well, that’s the big question, isn’t it. That’s what we’d like to know.

But the question requires the brain/mind to be an “information system” more akin to the CS-style computer than a transistor radio, because that’s the only way an “information system” can be fully simulated by another “information system” (CS-style in both cases).

OTOH: If the simulation is of the brain-as-organ, a simulation of the physics, then the question is false, unless one contends physical reality is, itself, an “information system.”

Either way, the answer to James’ question is: It doesn’t look that way (despite some similarities). There is nothing in (CS-style) “information processing” that suggests phenomenal experience or consciousness. That IP is a trait of a system doesn’t make that system just IP.

[Okay, so a lot of detail, but I wanted to respond to every point hoping to at least pin down some specifics. We may never have the same view, but hopefully we can be clear and precise about those views.]

October 27th, 2019 at 12:06 pm

“The whole point here is that the causality implemented by the simulation most definitely is ‘entirely disconnected’ from the causality of the physical system running it, be it pen-and-paper or a computer.”

Okay, well I think this is one that we simply disagree about. Software is ultimately translated into machine code instructions, which map to either processor instruction codes, physical state transitions in the system, or micro-code, which eventually itself maps to those states.

“I’m sorry, I don’t understand what that mistake is. Can you cite something specific?”

A similar mistake would be to hold that traffic has no relation to the actions of the individual cars and road topology, traffic lights, etc. Or that thermodynamics has nothing to do with the particle kinetics. All of these cases are simply higher level descriptions of the same overall system.

“By “system” do you mean any system, the brain specifically, or the brain and computer stacks?”

Sorry, I meant computer systems, including the hardware and software.

“What do you mean by “described in purely physical terms”?”

I mean you can describe something like the execution of WordPress in purely physical terms, including voltage states, electron transfers, electrical conductance on network cabling, etc. Doing so is hopelessly tedious, which is why we instead speak of WordPress in terms of its subject matter: blogging, commenting, etc.

“How is a characterization a reification?”

Rather than treating these things as different descriptions of the same system at different levels of organization, you’re treating them as though they’re completely separate things “entirely disconnected” from each other. If I didn’t know you better, I’d think you were attributing substance dualism to computer systems.

“You seem to pack a lot into “can be described in terms of its subject matter” but how does that apply?”

I was using it as an example of a different level of organization.

“Executing software is itself a description, so what does describing its subject matter accomplish?”

A mind could itself be considered a description of the environment, the body, action plans, and of itself. In any case, I again only mentioned subject matter as an example.

“What terminology issues?”

I have to assume that the distinction you keep drawing between a “numerical” system and its underlying physicality, of them being “entirely disconnected” is somehow a difference in terminology. Taken literally, they imply the computer substance dualism I mentioned above, but I know that’s not your position.

“Why would you say that when you know I support Positronic Brains as likely?”

Searle reportedly is also open to technology being able to produce consciousness. (He’s not portrayed that way by some of the people who tout his philosophy, but that’s more about them than his ideas.) Similar to you, he just rules out the physicality of computers.

“And by “information processing” do you mean the broader sense that includes transistor radios, or do you mean the specific CS sense of digital algorithms?”

I mean both digital processing and analog processing that can be reproduced with digital processing.

“I can only give you the same answer I’ve always given you: phenomenal experience.”

What about phenomenal experience is not informative, or at least potentially informative? In other words, what about it leads you to conclude that it can’t be information processing?

“Why do you not accept these explanations?”

For all the reasons laid out above. I just can’t see that you’ve adequately addressed them.

[Just FYI, I’m generally uneasy with these point by point responses, as too often they devolve into acrimony, so I’ll likely be much more selective with any future responses.]

October 27th, 2019 at 12:56 pm

“Software is ultimately translated into machine code instructions,”

There is no disagreement software is reified. The point is that in a software/hardware system there are two entirely different causal systems in play.

Firstly, there is the physical causal system implemented by the machine. Secondly, there is the virtual causality implemented by the software.

A physical system implements just one level of causal behavior. There is no unrelated virtual layer.

“All of these cases are simply higher level descriptions of the same overall system.”

I asked you to cite what I said and why it’s wrong. Your reply doesn’t do that.

Both traffic systems and particle kinetics are physical causal systems. There is just one level of causal behavior. These systems aren’t implementing a second virtual level of causality.

“I mean you can describe something like the execution of WordPress in purely physical terms, including voltage states, electron transfers, electrical conductance on network cabling, etc.”

Okay, so you mean descriptive information in all cases there. I agree systems can be described and at various levels. And certainly lower levels are more detailed.

“Rather than treating these things as different descriptions of the same system at different levels of organization, you’re treating them as though they’re completely separate things ‘entirely disconnected’ from each other.”

Do you mean all the layers of both stacks? If so, then I agree they are “different descriptions of the same system at different levels of organization.” That’s kind of the point — to compare both systems at different levels of organization.

The only place I’m talking about ‘entirely disconnected’ is with regard to those two levels of causal behavior seen in a numeric simulation.

“What about phenomenal experience is not informative, or at least potentially informative? In other words, what about it leads you to conclude that it can’t be information processing?”

You don’t seem to accept the answer that: (1) No form of information processing we know other than the brain seems to experience consciousness. (2) No physics we know suggests how there could be “something it is like” to be information processing.

October 27th, 2019 at 3:44 pm

On the distinction between causality at the various layers, are you arguing that the “virtual causality” is in some manner strongly emergent from the physical causality? But that can’t be right, because we completely understand how each layer maps to the layers above and below it.

At this point, I have to admit that I’m out of words. I don’t know any more ways to keep saying the same thing. And I perceive you to be saying the same thing. There’s a disconnect here, and I don’t know how to bridge it.

“You don’t seem to accept the answer that: (1) No form of information processing we know other than the brain seems to experience consciousness. (2) No physics we know suggests how there could be “something it is like” to be information processing.”

On (1), I accept that currently nothing other than brains are able to trigger our intuition of a fellow consciousness. But then nothing other than brains used to be able to calculate ballistics tables, do accounting, play chess or Go, recognize faces or speech, or drive a car.

On (2), I do recognize that’s a very common sentiment, but it’s also an ambiguous one. When I ask people to be more specific, I get either more ambiguity, details that can in fact be addressed in terms of information processing, or hostility. (Often all of those in that order.) So you’re right. I don’t accept it, because no one who does can explain why I should.

Maybe I’m a zombie trying to understand something I don’t have. Naturally my own suspicion is that I’m just not buying into an “emperor’s new clothes” meme.

October 27th, 2019 at 4:27 pm

“On the distinction between causality at the various layers, are you arguing that the ‘virtual causality’ is in some manner strongly emergent from the physical causality?”

Oh, good lord, no! Quite the opposite!

“But that can’t be right,…”

Definitely not, because I’m arguing the virtual causality is separate and an illusion. That’s kind of my whole point.

You’ve mentioned Angry Birds before. That’s a numerical simulation with an apparent causal environment (I believe; I’ve never played it). But like all computer games, that environment is a lie — there is no actual causality occurring. It’s all simulated.

For example, in some games, the right cheat codes let you walk or see through walls in defiance of the simulated causality of the system.
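Here is a toy sketch of what I mean, assuming a made-up one-wall world and a hypothetical `noclip` flag (no real game's code is being quoted). The wall "stops" the player only because a rule in the program says so, and the cheat simply skips the rule.

```python
WALLS = {(1, 0)}  # hypothetical world: a single wall cell

def move(pos, delta, noclip=False):
    """Return the new position after trying to move by delta."""
    target = (pos[0] + delta[0], pos[1] + delta[1])
    if target in WALLS and not noclip:
        return pos      # simulated causality: the wall "blocks" the move
    return target       # cheat enabled: the blocking rule is never applied

blocked = move((0, 0), (1, 0))
cheated = move((0, 0), (1, 0), noclip=True)
print(blocked, cheated)  # (0, 0) (1, 0)
```

Nothing about the hardware changes between the two calls. The only difference is which numbers the program decides to produce.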

I’ll refer again to the CSA of a system. The virtual causality is entirely coded in the CSA (which might lead some to consider the CSA itself conscious), but the CSA is a static document or file, there is no physical causal system present.

Isn’t that a clear illustration that those two causal systems, physical and virtual, are distinct?

“On (1), I accept that currently nothing other than brains…”

Then you’ve been given an acceptable answer. What you think might happen in the future isn’t relevant to what’s true right now. You must concede that nothing else we know acts that way — certainly nothing in nature.

“On (2), I do recognize that’s a very common sentiment, but it’s also an ambiguous one.”

What is ambiguous about it? Do you deny that humans experience something that fits the description “phenomenal experience”? Forget trying to define it for now, do you deny it exists?

It’s the one thing Descartes claimed we could know for sure. How is it ambiguous?

At least on point (1) you had a counter-argument, albeit a speculative one. This seems like hand-waving. What is your counter-argument supporting the idea that: The physics we know suggests how there could be “something it is like” to be information processing.

How do you go from crunching numbers to phenomenal experience?

October 27th, 2019 at 6:06 pm

Let me try to state the core point as specifically and succinctly as possible.

In a physical system (e.g. a brain), at all levels of organization, the causality — the physics — is devoted solely to implementing the physical system. A physical system just is what it is.

In a numerical simulation (i.e. a computer running software), the levels of organization divide into two layers: one regards the physics of the hardware; the other regards the virtual physics implemented by the software.

So the core point is that a physical system has one layer of causality, but a numerical simulation necessarily has two, and those two are, as I’ve said, entirely separate.

Can we agree on that much?

October 27th, 2019 at 6:50 pm

I had a literal refrigerator moment:

“It seems like you’re making a category mistake here, confusing different levels of description of the same system for ontologically different systems.”

That combined with your later comment about “strongly emergent” along with your reference to traffic and particle kinetics suggests you see me saying each block in the diagram is ontologically different?

I’m definitely not saying that. As the examples you gave illustrate (and as I said in the post), these are organization levels (akin to zooming in or out).

The point was to compare the two systems, brain and sim-mind, in terms of those levels.

As I also mentioned in the post, the similarities are one thing that give people the idea that computationalism works. (How many times have you and others compared neurons to logic gates?)

What the post discusses is the causality disconnect lurking inside the Computer box. At that level of organization, there is a discontinuity between the physical causality of the system and the implemented virtual causality of the running software.

October 27th, 2019 at 8:07 pm

Thanks for the clarification.

I’m still not on board with the idea of a discontinuity between physical and virtual causality, but maybe another way to state your thesis is that the continuities between the layers in a brain are different than the continuities in a computer system.

For example, if we implement a mind as a software neural network, a particular software neuron may fire when receiving the same combination of inputs that cause its original physical version to fire. However, the underlying causes of the software neuron firing are different than the underlying causes of the physical neuron firing.

The physical one is going to involve the actions of billions of proteins, as well as the reactions of sodium and potassium ions, and a whole bunch of other stuff. The software neuron may not involve any of that. Its firing may be based on our study of the time sequenced events of the physical neuron when receiving certain inputs.

Likewise, the strengthening or weakening of the physical synapses may involve the actions of proteins that make changes under varying conditions. But the software version may, again, change based on our time sequenced observations of how the physical version changes under certain conditions.

The concern might be that doing this causes us to exclude some crucial causality at these lower levels. Maybe something important is happening at the protein level, or even the molecular level, which if not accounted for, prevents consciousness from happening.

Does that sound like a reasonable description of what you have in mind? If so, that’s a reasonable position, and only time will tell.

A more difficult issue is if people believe that, even if the simulation can produce, to some approximation, the same outputs as the original, it won’t actually be conscious unless it has all those same lower layers. The problem there is it puts us in zombie territory with all the issues involved with that.

On the ambiguity of “something it is like”, can you tell me what that phrase means? What, at a purely theoretically neutral level, in terms of phenomenology, is required? Is it enough to have sensory impressions? What about a feeling of embodiment? Are affective feelings necessary? Is it necessary to be aware of our awareness, or of our thoughts? If the last is not required, then what makes the other stuff “like” anything?

Myself, I think the phrase as Nagel originally used it is inherently anthropomorphic, attributing things to a bat that we can’t at all be sure of. In other words, what he implicitly, albeit unwittingly meant was, “some human thing it is like”.

October 27th, 2019 at 10:05 pm

We seem to be seeing some convergence…

“I’m still not on board with the idea of a discontinuity between physical and virtual causality,…”

Do you agree a numerical simulation necessarily has two distinctly different causal layers? (The physical one and the virtual one.)

“…maybe another way to state your thesis is that the continuities between the layers in a brain are different than the continuities in a computer system”

I’d like a definition of “continuities” please.

“However, the underlying causes of the software neuron firing are different than the underlying causes of the physical neuron firing.”

Indeed. And those causes can be characterized as virtual and physical, respectively.

Let’s be clear that in the first case, what we mean by “cause” is that certain arbitrary input numbers, due to algorithmic logic, generate new arbitrary output numbers. The cause implied by those numbers is entirely virtual. (If, for example, the numbers say a circuit is carrying too much current, no heat is actually generated.)
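To make that parenthetical concrete, here is a minimal Python sketch. It is an illustration only: the function name `simulate_resistor` and the component values are hypothetical, and the model assumes an ideal resistor obeying Ohm’s law. The simulation concludes the part is badly overloaded, yet that conclusion is just a number and a boolean. The only heat produced is whatever the host machine dissipates doing the arithmetic.

```python
def simulate_resistor(voltage_v, resistance_ohm, rated_power_w):
    """Return (current, power, overloaded) for an ideal resistor.

    Hypothetical helper for illustration; nothing here touches real hardware.
    """
    current_a = voltage_v / resistance_ohm        # Ohm's law: I = V / R
    power_w = current_a ** 2 * resistance_ohm     # Joule heating: P = I^2 * R
    overloaded = power_w > rated_power_w          # a verdict expressed in numbers only
    return current_a, power_w, overloaded


current, power, overloaded = simulate_resistor(12.0, 2.0, rated_power_w=0.25)
print(f"I = {current} A, P = {power} W, overloaded = {overloaded}")
```

In a physical circuit those same values would destroy a quarter-watt resistor; in the simulation the "cause" and the "effect" are both entries in memory.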

In the second case what we mean by “cause” is physics.

“Maybe something important is happening at the protein level, or even the molecular level, which if not accounted for, prevents consciousness from happening.”

That’s one possibility, and it depends on Proposition One (from the post) failing.

But the other possibility — the one I’ve been getting at — involves Proposition Two and implies that no numeric simulation, no matter how detailed, is likely to work. As you go on to say:

“A more difficult issue is if people believe that, even if the simulation can produce, to some approximation, the same outputs as the original, it won’t actually be conscious unless it has all those same lower layers.”

If by “lower layers” we mean the physical system, then that’s exactly what I’m saying.

“The problem there is it puts us in zombie territory with all the issues involved with that.”

How so?

“On the ambiguity of ‘something it is like’, can you tell me what that phrase means?”

I have. Multiple times. It was your “train of thought” as you wrote that question.

It’s likely an irreducible thing, so it can’t be defined in simpler terms, it can only be described, like love. It’s a fundamental aspect of our existence, the one fact Descartes thought we could know is true.

You go on to ask a lot of questions about aspects of the thing, and I’ll try to answer them if you ask, but the point is that the thing — phenomenal experience — exists. There is something it is like to be a human being.

“Myself, I think the phrase as Nagel originally used it is inherently anthropomorphic,”

I think you’ve misinterpreted him. He explicitly says we can’t possibly imagine what it’s like to be a bat. Here are some quotes:

He chose bats because they were so different.

One more:

Regardless of bats, there is certainly “something it is like” to be human. There is nothing ambiguous about its existence even if we don’t understand it yet.

October 28th, 2019 at 9:09 am

“Do you agree a numerical simulation necessarily has two distinctly different causal layers?”

My concern here is the language implies something ontological. I can agree there are different epistemic layers of abstraction here, but then we recognize different layers of abstraction in the brain and throughout nature. That’s also what’s behind my “continuities” remark, which is keyed off of your “discontinuity” term.

On zombies, if the copied system can take in the same inputs and produce the same outputs as the original system, even to the extent of discussing its own consciousness, but if someone insists that due to the missing layers, the copied system is not in fact conscious, then they’re saying it’s a zombie (a behavioral zombie to be precise).

The problem is that whether another system is a zombie is an utterly untestable proposition. Our only way to assess whether such a system is conscious is by its behavior. So if the causal framework for producing human behavior is missing part of the causal framework for producing consciousness, it’s not clear to me how we would ever know.

“It’s likely an irreducible thing, so it can’t be defined in simpler terms, it can only be described, like love.”

I accept that there’s a limit to how much it can be reduced subjectively, but in my experience, it can be reduced more than people often assert. You mentioned that I asked about aspects of it. I think I asked about various phenomena, not all of which is universally agreed upon to be part of the overall “what it’s like”.

In any case, we get back to my earlier question: what about it implies something other than information processing (of the type reproducible by a digital system, at least in principle)? I think qualia are information processing. Why am I wrong?

October 28th, 2019 at 10:03 am

“My concern here is the language implies something ontological.”

On the one hand, there is a computer system, hardware and O/S, which comprises a distinct causal system — one that can run any software appropriate for that system.

On the other hand, there is application software, which comprises a separate causal system.

These are, in fact, different ontologies, aren’t they?

The computer system can run other application software, and the application software can be compiled to run on completely different machines?

How are they not distinct, different objects?

“Our only way to assess whether such a system is conscious is by its behavior.”

This turns out to be a tangent from the topic, so I’m going to let it drop. It’s more a question for if and when we ever create a system that does give the appearance of consciousness without any of the traditional mechanisms for it (i.e. a brain or brain-like device).

I’d like here to stay focused on the mind stacks topic of the post. (It’ll be hard enough to see if we can’t find some common ground! 🙂 )

“I accept that there’s a limit to how much it can be reduced subjectively, but in my experience, it can be reduced more than people often assert.”

Okay, but the point is that it exists.

Fuzzy as the concept may be (like love), it’s still a basic part of human existence. There is “something it is like” to be human.

Do you deny that?

“I think I asked about various phenomena, not all of which is universally agreed upon to be part of the overall ‘what it’s like’.”

You could ask similar questions about love (or many other things) and you’d get slightly different answers because the real world is diverse.

But that doesn’t change that phenomenal experience and love (or many other things) are basic aspects of human experience.

“In any case, we get back to my earlier question: what about it implies something other than information processing (of the type reproducible by a digital system, at least in principle)?”

I’ve answered this multiple times. Recently I gave you a two-part answer, and you’ve already acknowledged that part one does, indeed, answer the question. Now we’re debating part two, where your objection seems to be that it’s too ambiguous for you.

But there’s nothing ambiguous that I can see about every normal human in history experiencing a basic aspect of human existence.

“I think qualia are information processing. Why am I wrong?”

It isn’t that that’s wrong so much as it’s not the whole picture. For reasons I’ve explained many times.

October 28th, 2019 at 1:19 pm

On causality and the layers, it feels like we’re beating a dead horse here, so I anticipate this being my last answer on it. To me, the distinction is like the distinction between the causality of physics vs the causality of chemistry, or of biology. The causality of biology is the causality of physics, even if we rarely find it convenient to think of it in those terms. Likewise, the causality of software is the causality of the hardware, but for the same reasons we usually hold different models for them.

On the existence of consciousness, I accept it in a deflated theory-neutral manner. We have subjective experience. I don’t accept the inflated super-physical or super-informational version many philosophers associate with the concept.

You’ll probably be exasperated by me saying this, but I can’t see any reason why love is irreducible. The notion that it is sounds like romantic sentiment. Like many things, the only thing that might prevent it from being reduced is the vagueness of the concept and resistance to clarification.

“Recently I gave you a two-part answer,”

I can’t agree that was an answer, or that it’s been answered before, except the one on my post about subjective experience, which I addressed. Unfortunately, this is feeling like another deceased equine. We seem to be accumulating a collection. 🙂

October 28th, 2019 at 2:30 pm

“…it feels like we’re beating a dead horse…”

I suppose. I’m frustrated because I feel the preponderance of the argument supports what I’m saying but your only counter argument amounts to: “the causality of software is the causality of the hardware,” and I think I’ve shown how that’s not so.

The causality of the software is enabled by the causality of the hardware, that is true. But it is not the same as being the causality!

A clear example is Angry Birds. The causality of that world is encoded in the software — demonstrated by that software running on different platforms, including emulators.

That Angry Birds causality absolutely is enabled by whatever it runs on, no question. And what it runs on is a distinct causal system of its own, as demonstrated by the fact that it doesn’t need to run Angry Birds and can run other apps.

So this is not like the causality of biology. As you’ve said, reduction shows it’s the same causes at any scale. Also true of computers. The operation of a computer is reductively explained at any level.

Computers are unique in that they can execute a virtual causal system. On the one hand, they are physically a computer being a computer. But on the other hand, they are also simulating a completely different causal system.
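A minimal sketch of that two-layer picture, assuming a hypothetical toy stack machine (the names `run`, `falling`, and `pricing` are invented for illustration and do not come from any real system). The engine only knows how to push, load, add, and multiply. What those steps are about, a falling object or a price with tax, lives entirely in the program it is handed.

```python
def run(program, inputs):
    """A tiny 'engine': executes instructions with no idea what they model."""
    stack = []
    for op, arg in program:
        if op == "push":
            stack.append(arg)
        elif op == "load":
            stack.append(inputs[arg])      # fetch a named input value
        elif op == "add":
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == "mul":
            b, a = stack.pop(), stack.pop()
            stack.append(a * b)
        else:
            raise ValueError(f"unknown op: {op}")
    return stack.pop()


# Virtual system 1: one time step of a falling object, v_new = v + g * dt
falling = [("load", "v"), ("load", "g"), ("load", "dt"), ("mul", None), ("add", None)]

# Virtual system 2: an unrelated rule, total = price + price * rate
pricing = [("load", "price"), ("load", "price"), ("load", "rate"), ("mul", None), ("add", None)]

print(run(falling, {"v": 0.0, "g": -9.8, "dt": 0.5}))
print(run(pricing, {"price": 100, "rate": 0.25}))
```

The `run` function is unchanged between the two calls, and either program could be handed to a different engine that understands the same instructions.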

“We have subjective experience.”

That was all that was being asked. 🙂

“I can’t agree that was an answer,…”

If you insist, but you did in this thread agree that, “currently nothing other than brains are able to trigger our intuition of a fellow consciousness,” which is to agree with my first answer, “No form of information processing we know other than the brain seems to experience consciousness.”

As to the second, “No physics we know suggests…” part, if you agree we have subjective experience and can’t point to the physics-based explanation, then don’t you have to accept the second answer?

(In any event, not liking an answer, or even thinking it’s wrong, isn’t the same as denying you were given an answer. I’ve given you these answers, in one form or another, many times over the years. I’ve written whole posts about them in which we’ve had long debates. I have definitely given you these answers before, my friend!)

October 28th, 2019 at 9:44 pm

[Is it still beating a dead horse if someone else does the beating? Or if they find an as yet un-beaten part of the horse? Don’t answer that]

Let’s start over. 🙂

Wyrd has mentioned how computers are different because they can simulate more than one thing. Let’s take that off the table by burning the simulation program into ROM. This computer can only run one simulation. I assume it’s agreed that it doesn’t change the rest of the arguments.

Wyrd has identified two kinds of causality: algorithmic causality and physical causality. While they bear a family resemblance they are different things. So, a point I, and I think Mike, would make is that the burned ROM computer exhibits both kinds of causality. The algorithmic causality emerges from the physical causality. More importantly, I would say that all of the consciousness derives from the algorithmic causality.

Finally, Wyrd asks how an informational system can explain phenomenal experience. My answer is representation, correctly defined and implemented.

*

October 28th, 2019 at 10:38 pm

“Wyrd has mentioned how computers are different because they can simulate more than one thing.”

You’re arguing the wrong thing here!

Being able to run different applications has nothing to do with my argument, so burning the software in ROM doesn’t change anything. The point I’m making about dual causalities applies to, for example, the embedded computer that runs my microwave.

“Wyrd has identified two kinds of causality: algorithmic causality and physical causality. While they bear a family resemblance they are different things.”

Now that I agree to without reservation! 🙂

“So, a point I, and I think Mike, would make is that the burned ROM computer exhibits both kinds of causality.”

Definitely. That’s exactly what I’m saying, too.

“The algorithmic causality emerges from the physical causality.”

I’ll agree with that. The term I used was enabled, but emerges from works.

The crucial point is that, as you just said, the two causalities are different.

In my microwave, for example, there is a physical causal system — the embedded micro-controller — that works according to digital logic that is implemented using transistors and wires. This is a distinct physical causal system. Its behavior is the same regardless of what software it runs.

Then there is the virtual causality, the software, that consists of instructions and if-then branches. This describes what the microwave does and that behavior emerges from (or is enabled by) the underlying physical system.

But if we consider an abstract definition of the micro-controller hardware and an abstract definition of the software, it’s clear they are completely different things. The former defines a physical system, the latter defines a set of rules for cooking food using that system.

“More importantly, I would say that all of the consciousness derives from the algorithmic causality.”

I know that’s your opinion, but I don’t share it. There is no indication any algorithmic causality is being enabled by (or emerges from) the brain. (Any more than, say, an analog radio does.)

“My answer is representation, correctly defined and implemented.”

Since no such implementation exists, nor is there any physics indicating how there could be one, isn’t that just a guess? Where is the supporting physics?

October 28th, 2019 at 10:55 pm

Algorithmic causality:

Loop
    inputtransmitter = 0
    For each transmitter in incomingtransmitters[]
        If transmitter == 1 then inputtransmitter++
    If inputtransmitter > 50 then fire()
End loop

Something like this algorithmic causality emerges all over the brain. Supported by the physics of neurons.
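For readers who want to run it, here is one pass of that loop as a Python sketch. It is a hedged transcription, not a claim about how neurons work: the function name `step` is hypothetical, the threshold of 50 is taken from the pseudocode, and the pseudocode’s fire() is replaced by a returned boolean so the result can be inspected.

```python
def step(incoming_transmitters, threshold=50):
    """One pass of the loop: count active inputs, then decide whether to fire."""
    input_transmitter = 0                  # reset the running count
    for transmitter in incoming_transmitters:
        if transmitter == 1:               # count only active inputs
            input_transmitter += 1
    return input_transmitter > threshold   # True stands in for calling fire()


quiet = [1] * 50 + [0] * 50   # exactly 50 active inputs: not above the threshold
busy = [1] * 51 + [0] * 49    # 51 active inputs: above the threshold
print(step(quiet), step(busy))
```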

*

October 29th, 2019 at 12:20 am

“Something like this algorithmic causality emerges all over the brain.”

You’re saying there is an algorithm that (roughly) models the behavior of a single neuron. That such a model is possible has never been in dispute.

But it’s a description; there is no defining algorithm. Actual neurons don’t use an algorithm to cycle through their inputs one-by-one, nor is there any variable ‘inputtransmitter’ to increment. There is no underlying CPU executing instructions.

What emerges is a physical causal system enabled by neurons. That physical causal system directly, in and of itself, generates consciousness.

Whatever engine runs the loop you described is also a physical causal system (probably enabled by logic gates). But that system is concerned with the causality of, for example, setting ‘inputtransmitter’ to zero and incrementing that variable during the loop (not to mention the housekeeping associated with the variable itself). That system understands and implements the loop and if-then structures. It knows how to iterate over the input array. It knows how to call the fire() function. This system involves only the semantics of the engine itself.

Your loop implements a virtual causal system: a rough model of a single neuron. That causal system is concerned with a set of inputs and what they mean. Depending on what values those inputs have at run time, this causal system decides whether to invoke the fire() function. This system involves the semantics of the neuron’s behavior.

(As I’ve pointed out, the definitions for those two systems are entirely different. I’ve also pointed out that they stand alone on their own.)

October 29th, 2019 at 12:55 am

Let me try again.

Loop
    If totalInput() > threshold() then
        Fire()
        Reset()
End Loop

This is a description of an algorithmic causality. This algorithmic causality can emerge from a physical neuron. It could also emerge from a different physical neuron. It could also emerge from a computer running a program. In each case the algorithmic causality is the same.

Yes?

*

October 29th, 2019 at 2:15 am

No. 🙂

Firstly, hiding a bunch of instruction steps behind functions doesn’t change anything. (Maybe you just wanted a short main loop?)

Secondly, you’re ignoring the main point, which is that your loop is necessarily executed by some engine. As I described last time, that engine is a distinct causal system. The loop itself is another causal system. (Perhaps you agree with that and we’re moving to a new topic?)

Thirdly, I think your terminology is off when you say:

“This is a description of an algorithmic causality. This algorithmic causality can emerge from a physical neuron. It could also emerge from a different physical neuron. It could also emerge from a computer running a program.”

The loop is an algorithm. That algorithm numerically models a rough abstraction of a generic physical neuron. I agree that algorithm(ic model) emerges from an analysis of physical neurons. That is, we can write an algorithm to imitate neuron behavior.

Of course that algorithm easily emerges from an analysis of a computer running that model, because that algorithm is directly embodied in the software. (Or did you mean a computer running some unrelated model?)

The point is that the algorithm only describes the physical neuron but it fully defines the numeric simulation.

“In each case the algorithmic causality is the same.”

I agree the causality described by the algorithm is the same in all cases (although the description may be imperfect for the physical neuron). The physical causality is obviously different.

October 29th, 2019 at 3:27 pm

[hate doing it this way, but there are too many points, so …]

“Firstly, hiding a bunch of instruction steps behind functions doesn’t change anything.”

Agreed, but that’s the point. Functions are multiply realizable. There doesn’t need to be a loop. Inputs matched to outputs is what matters.

“Secondly, you’re ignoring the main point, which is that your loop is necessarily executed by some engine.”

That’s why I took the loop out of the description. A loop iterating over inputs does not match the functionality of the neuron. In the new version, how those inputs are integrated is irrelevant.

“Of course that algorithm easily emerges from an analysis of a computer running that model, because that algorithm is directly embodied in the software.”

Um, you kidding me? I give you a random computer running a program and you can easily analyze the algorithm? Here, try AlphaZero.

“The point is that the algorithm only describes the physical neuron but it fully defines the numeric simulation.”

The algorithm fully defines the algorithmic causality of both the neuron and the computer. (Remember, burned ROM computer). The neuron and the computer stand in the same relation to the algorithmic description.

*

October 29th, 2019 at 4:23 pm

“There doesn’t need to be a loop. Inputs matched to outputs is what matters.”

But if you’re not specifying how that’s done, you’re not providing a full definition. How are you going to integrate multiple inputs without iterating over them?

“In the new version, how those inputs are integrated is irrelevant.”

How those inputs are integrated is a major part of the system. You can’t just hand-wave it away.

“Here, try AlphaZero.”

Why isn’t a program listing a definition of AlphaZero?

OTOH, if your point is that reverse-engineering it from its behavior seems difficult, what does that suggest about deriving a mind algorithm from reverse-engineering the brain?

“The algorithm fully defines the algorithmic causality of both the neuron and the computer.”

But the “algorithmic causality” of the neuron is an abstract description represented as an algorithm. What defines a physical neuron is its physical components, the biology and chemistry.

All an algorithm defines is a set of steps (presumably executed by some engine).

“(Remember, burned ROM computer).”

I’m not sure what ROM has to do with anything. That the software is fixed is irrelevant here. This has nothing to do with whether a given CPU can run other software or not. (Seriously, man, it’s a complete non sequitur. It has no connection I can see with the arguments we’re making here.)

“The neuron and the computer stand in the same relation to the algorithmic description.”

By “the computer” I assume you mean “the computer running neuron software”?

If that’s correct, I’m afraid I disagree. I guess you don’t believe me since I’ve said it several times now, but the algorithm only describes the behavior of a physical neuron. It defines a series of steps that implement that behavior.

As such, while it only describes the behavior of the neuron, it fully defines the operation of the computer.

October 30th, 2019 at 11:34 pm

This is where we differ. You say the algorithm “only describes” the behavior of the neuron. I say it also “only describes” the behavior of the computer. The fact that the algorithm came before the construction of the computer makes no difference to its behavior. Theoretically, given advanced tech, we could build a physical neuron from scratch to meet the requirements of the algorithm. We could then say the algorithm defines the operation of the neuron.

The point is, it’s the behavior of the system that matters, not its physical makeup.

*

October 31st, 2019 at 12:19 am

[Disclaimer: The Nationals just won the World Series and I’m very drunk, so…]

“This is where we differ. You say the algorithm ‘only describes’ the behavior of the neuron. I say it also ‘only describes’ the behavior of the computer.”

Okay, hang on just a moment. (1) Are you okay then with the idea that it only describes a neuron? On that point we agree? (2) I said it ‘defines’ the behavior of the computer, you say it only ‘describes’ it. This is what you mean by “we differ”?

Does this turn on what we mean by ‘describe’ versus ‘define’? What I mean by ‘describe’ is something with a rough correlation. Something that ‘defines’ is precise and detailed. A definition allows constructing a copy. A description does not — a description is more what we mean by “thumbnail” or “sketch.”

“The fact that the algorithm came before the construction of the computer makes no difference to its behavior.”

Which behavior do you mean? The behavior of the computer as a computer (i.e. its logic gates irrespective of the software it runs), or the behavior of a computer running some software?

In any event, the construction of the computer has nothing to do with anything. The algorithm — by definition — defines the processing steps the machine takes.

“Theoretically, given advanced tech, we could build a physical neuron from scratch to meet the requirements of the algorithm. We could then say the algorithm defines the operation of the neuron.”

Let’s be clear exactly what you mean. Do you mean a physical neuron that is literally defined by the algorithm? In other words, it has some sense of iterating over inputs and of looping? That it treats physical values as numbers?

If so, yes, I agree such a construction is possible. It wouldn’t be anything like a brain neuron, but such a construction is possible. I have no idea if it would work. As a physical construction, maybe.

“The point is, it’s the behavior of the system that matters, not its physical makeup.”

And, as I think you’ve acknowledged in a different post thread, that’s where we differ.

You believe a numerical simulation that recapitulates the causality of a physical system (in arbitrary numbers) is sufficient to account for all the effects of that system.

I … don’t agree. (Simulated X isn’t Y. 😀 )

The jury is out; either of us could be right.

And let me remind: My only point has been that skepticism is warranted with computationalism. For reasons I’ve enumerated many times. Very little supports the idea; a great deal argues against it.

October 31st, 2019 at 12:36 am

Not all the effects. Just the ones we care about.

I had a thought. Maybe I’m using the wrong terminology. The description that both the neuron and the computer share is not an algorithm so much as a specification. The specification describes what functions need to be there and how they should behave with respect to inputs and outputs. The specification does not determine how functions are coded. Likewise, the specification does not determine what hardware the operations run on. If consciousness can be explained in terms of a specification, both the neuron and the computer would meet spec, or not.

Make a difference?
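One way to picture the specification idea in code, as a sketch under stated assumptions: the class names `LoopNeuron` and `TableNeuron` and the checker `meets_spec` are hypothetical, inputs are restricted to 0 or 1, and the "spec" is reduced to a single input/output rule. The two classes are coded very differently, yet both pass the same black-box check. Whether passing such a check is enough for the effects we care about is exactly what is under debate here.

```python
from itertools import product


class LoopNeuron:
    """Integrates inputs by stepping through them one at a time."""

    def __init__(self, threshold):
        self.threshold = threshold

    def fires(self, inputs):
        total = 0
        for value in inputs:
            total += value
        return total > self.threshold


class TableNeuron:
    """Same contract, different internals: a precomputed lookup table."""

    def __init__(self, threshold, n_inputs):
        self.table = {
            bits: sum(bits) > threshold
            for bits in product((0, 1), repeat=n_inputs)
        }

    def fires(self, inputs):
        return self.table[tuple(inputs)]


def meets_spec(neuron, threshold, n_inputs):
    """The 'specification': fire exactly when more than `threshold` inputs are 1."""
    return all(
        neuron.fires(bits) == (bits.count(1) > threshold)
        for bits in product((0, 1), repeat=n_inputs)
    )


print(meets_spec(LoopNeuron(2), 2, 4), meets_spec(TableNeuron(2, 4), 2, 4))
```

The check says nothing about how `fires` is implemented or what it runs on; it only compares inputs with outputs.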

*

October 31st, 2019 at 1:04 am

“Not all the effects. Just the ones we care about.”

Sure.

“The description that both the neuron and the computer share is not an algorithm so much as a specification. … Make a difference?”

Sorry, no. I’ve said all along an algorithm is a description, which is a simplistic specification. To me, a specification is much more precise than a description.

A specification, as you imply, is more akin to an algorithm in that both allow a construction of a physical copy from abstract information. A blueprint allows construction of a building or bridge, for example, and an algorithm allows “construction” of a computer executing a given software — both are precise.

The difference between the two, I’d say, is that a specification involves an unordered set of properties that define something. Unicode is an example of a specification. So are the electrical standards for house wiring. Or the rules of baseball. (Go Nats!!)

An algorithm is an ordered set of instructions understood by some engine. An algorithm is a very specific sort of specification with certain properties (order, variables, if-then constructs, callable code).

So you’re not wrong to see an algorithm as a specification.

But the fundamental difference between physical and numeric systems remains, amigo.

A specification of a physical neuron — one that allows construction of an analogue — won’t be anything like the specification of how a computer should simulate that system (i.e. an algorithm).

[This is my blog (and I’m drunk), so can I be honest? To the extent you or Mike argue computationalism must work, I really think that’s a losing (if not lost) battle. My whole stance is skepticism, not denial, and I think I’ve made the case for that pretty damn well. All I’ve ever said is that computationalism is a Big Ask. But it’s always been the case that the answer might be Yes!]

November 2nd, 2019 at 9:54 am

So,… Convergence? Divergence? The next post gets more into this topic.

November 2nd, 2019 at 11:59 am

Delay due to fatigue. Will pick up in the post you linked, probably.

November 2nd, 2019 at 12:13 pm

Okay, no prob. Didn’t mean to wear you out! I really believe, once we understand each other, it should be possible to be on the same page.

We may forever disagree about the probability of computationalism, but I don’t see why we can’t agree on what’s objectively involved in trying to accomplish it. I think that’s a fascinating conversation, but it’s hard to get to if we can’t agree on fundamentals.

(A post I plan to publish today is yet another attempt to start a conversation about what’s involved in virtual reality.)

October 28th, 2019 at 10:32 am

Wyrd stated: “There is no disagreement software is reified. The point is that in a software/hardware system there are two entirely different causal systems in play. Firstly, there is the physical causal system implemented by the machine. Secondly, there is the virtual causality implemented by the software.”

Your assessment of the causal relationship between hardware and software is superb. Now, all you have to do is take it to the next level by making the same correlation between consciousness and the physical universe. Consciousness is the hardware that our virtual reality called a physical universe runs on. In other words, materialism is a virtual reality which runs on the hardware of consciousness. Panpsychism is a model which supersedes the need for the magic, mystic and mystery of the immortal laws of nature.

Take a walk on the wild side…

October 28th, 2019 at 12:21 pm

Thanks for the compliment, Lee; glad you thought so.

“Now, all you have to do is take it to the next level…”

That all sounds more like poetry to me than a physical theory. I’m all about specifics and what can be demonstrated. Philosophically I’m firmly in the realist camp. I need something I can sink my teeth into.

WRT panpsychism: Firstly, the idea doesn’t accord with my sense that consciousness emerges from complex systems and interactions (not from the components of those systems). When it comes to consciousness, I look upwards, not downwards.

Secondly, I think panpsychism (incorrectly) conflates two senses of the term experience. There is the phenomenal experience sense, the something it is like sense — that sense has memories, semantics, and a narrative, associated with it.

There is also the sense meant in “an electron experiences a magnetic field.” That sense refers strictly to the effect of one thing on another — it has none of the associations of the other sense.

Thirdly, extending the first point, the structure argument. I believe consciousness requires complex structure, which is why I look “upwards.” Simple systems, let alone particles, don’t have the structural complexity necessary to support a notion of consciousness.

Fourthly, the related information argument. Consciousness requires more information than I see can be contained in simple systems (let alone particles, which have very few properties at all).

Which is all to say, I’m sorry, but panpsychism is an idea I’ve filed under “Almost Certainly Not True.” I would need to see some very clear physical evidence to refile it.

“Take a walk on the wild side…”

Heh! There’s wild, and there’s crazy. 😉

October 28th, 2019 at 1:21 pm

I’m not a fan of the sentient electrons either but I have somewhat reconsidered panpsychism recently.

My logic goes like this.

Consciousness, experience, something mental exists.

No matter how it came to exist, it exists and it is actually all we know first hand. Everything else is hypothesis based on what is presented to us. This I think would be true no matter how accurate you think our representations of the world are.

So what are your choices about reality:

1- Dualism – the mental actually is non-physical and there are at least two different major components of reality. Raises all sorts of questions about how one came from the other and how they influence each other.

2- Idealism – everything is mental

3- Physicalism – everything is physical

But if you go with option 3 then consciousness too must be physical. Hence, you are a panpsychist. Mind is matter even if it is hard to understand how they relate to each other.

Galen Strawson, “Realistic Monism: Why Physicalism Entails Panpsychism” (PDF)

October 28th, 2019 at 2:45 pm

“But if you go with option 3 then consciousness too must be physical. Hence, you are a panpsychist.”

How does that follow? Doesn’t panpsychism mean ‘everything is conscious’?

Thinking that consciousness is physical doesn’t imply all physical things are conscious.

October 28th, 2019 at 6:47 pm

In its simplest form, it is just that the mental is a fundamental feature of reality. If you don’t think it is, then how do you know anything or have any opinion on the matter?

It must be somehow intimately connected with matter unless you want to go down the idealism path.

October 28th, 2019 at 7:45 pm

“In its simplest form, it is just that the mental is a fundamental feature of reality.”

As I said, I don’t see what’s “pan” about that. Nor would I agree the mental is a “fundamental feature of reality” — I would call it more an aspect of reality. (It’s certainly fundamental to us, though!)

“It must be somehow intimately connected with matter unless you want to go down the idealism path.”

Indeed. As a realist, I don’t go down the idealism path. 🙂

October 29th, 2019 at 5:31 am

You may need to do some basic reading on the varieties of panpsychism. This may help.

“The word “panpsychism” literally means that everything has a mind. However, in contemporary debates it is generally understood as the view that mentality is fundamental and ubiquitous in the natural world.”

https://plato.stanford.edu/entries/panpsychism/

Take a look at Russellian Monism.

Or Strawson’s arguments, linked previously, that physicalism entails panpsychism.

October 29th, 2019 at 5:44 am

“Nor would I agree the mental is a “fundamental feature of reality” — I would call it more an aspect of reality. (It’s certainly fundamental to us, though!)”

You have the emergence problem that Strawson talks about. How does it come about if it is not fundamental or at least implicit in matter?

October 29th, 2019 at 10:37 am

“You may need to do some basic reading on the varieties of panpsychism.”

I’m genuinely sorry, but to me that’s like saying I need to do some basic reading on UFOs or ghosts.

Firstly, I don’t buy the basic premise, that “mentality is fundamental and ubiquitous in the natural world.” Given that we may very well be the only planet in the galaxy with conscious life (which appears only in brains), there seems nothing fundamental or ubiquitous about it. If anything, it seems very special.

Secondly, I’m just not a fan of hand-waving philosophical approaches to the mind. I’m not really even a fan of more structured approaches like GWT or HOT. I’m not saying any of it is right or wrong, just that the approach doesn’t interest me. As I said to Lee, I’m a realist and need theories I can sink my teeth into.

“How does it come about if it is not fundamental or at least implicit in matter?”

Is the image on a TV screen fundamental or implicit in matter? In the sense that basic laws of physics account for it, yes. But those laws don’t particularly apply to 2D images. (Note that a series of video images looks like motion at all due to properties of the human visual system.)

Likewise, as a realist, I assume there are basic laws of physics that ultimately account for consciousness. I suspect it arises due to properties of the brain.

If you want to call those basic physical laws “panpsychism” you can, but it seems as strange to me as calling consciousness an “illusion.”

October 29th, 2019 at 11:44 am

So the one thing you know for sure, the one thing through which you perceive and know everything else, is NOT fundamental? How much more fundamental can you get? Would something you know second-hand through your mind be more fundamental?

There is an interesting quote at the end of Strawson’s paper from Sir Arthur Eddington that sums up the view:

“the mental activity of the part of the world constituting ourselves occasions no surprise; it is known to us by direct self-knowledge, and we do not explain it away as something other than we know it to be — or, rather, it knows itself to be. It is the physical aspects (i.e. non-mental aspects) of the world that we have to explain”

October 29th, 2019 at 12:14 pm

“So the one thing you know for sure, the one thing through which you perceive and know everything else, is NOT fundamental?”

Ah, we’re apparently using different senses of fundamental.

For me, fundamental refers to basic building blocks, for instance the fundamental particles (as opposed to composite ones) and the fundamental rules of physics. In that sense, no, my own consciousness is not “fundamental” but is enabled by fundamental (basic) physics.

You mean the sense of basic in our experience, and in that sense, yes, absolutely consciousness is fundamental.

But, as far as we know, it’s fundamental only to one type of complex life on “an utterly insignificant little blue green planet” orbiting “a small unregarded yellow sun.” It seems very egocentric to translate that to universal law.

As I said to Lee below, I’m comfortable that complex systems arise from basic rules (plus energy/matter), although it seems a fascinating aspect of reality to me.

October 30th, 2019 at 6:16 am

Okay, some agreement. A little bit of talking past each other.

To me it gets to the question of what do we know and how do we know it and how do we know that we know it. Fundamental particles are really hypotheses in mind. Even though the notion of atoms has been around for a few thousand years, it was only a little over a hundred years ago that the debate over their existence died out in physics. Ernst Mach continued to disbelieve in atoms. Since then, we have come up with new hypotheses about ever more fundamental particles and added the notion that particles are waves. How substantial are the fundamental particles? How real are they actually? What will the next century tell us is fundamental?

We want to think that our understandings are getting better as we refine our measurements, that we are approaching with increasing precision what is fundamental. I’m not so sure.

“But, as far as we know, it’s fundamental only to one type of complex life on “an utterly insignificant little blue green planet” orbiting “a small unregarded yellow sun.””

Yes, as far as we know…

October 30th, 2019 at 11:34 am

“To me it gets to the question of what do we know and how do we know it and how do we know that we know it.”

Epistemology, yeah sure, you betcha!

“Fundamental particles are really hypotheses in mind.”

I don’t disagree with anything you say here. What we think of as fundamental particles may turn out to have structure or be made of more fundamental components (but so far no sign of any such).

That doesn’t change the distinction between the senses of fundamental, one concerning the most basic components used to build complex systems, the other concerning the most basic aspects of our experience.

I think we agree consciousness is not the former but is the latter?

On the side topic of fundamental “particles”…

“How substantial are the fundamental particles? How real are they actually? What will the next century tell us is fundamental?”

A few swings of the bat: (1) The fermions are what we consider (visible, normal) matter, so entirely substantial in at least one sense. (2) Assuming one accepts realism, there seems to be something real there — it certainly has measurable effects. (3) Who knows!

(On that last one, the discovery process in any given field isn’t linear — there’s “low hanging fruit” at first and a steep growth curve that becomes more asymptotic as less and less fruit remains (or seems to remain) on the tree. So I think a topic can be very well explored, but sometimes we do find an unexpected or unnoticed branch with new fruit.)

“I’m not so sure.”

I suppose it depends a bit on one’s stance towards realism and our ability to study it. I think the world is really there and roughly appears to us as it is. I think we can learn a great deal about it, but some things may forever be beyond us. Certainly many of our intuitions fail when it comes to the extremes of nature (the vast, the tiny, the fast, the ancient — stuff beyond our experience).

It’s certainly true that we lack any real understanding of key aspects of reality. (Time, the Big Bang, matter, consciousness, etc.) What does it really mean to have a mathematics that seems to precisely describe what we see?

As Lee and I touched on below, some aspects of physics seem stalled for now, and progress just might require some sort of Copernican Revolution — an insight that “changes everything” — a whole new view of reality.

October 29th, 2019 at 10:09 am

“Heh! There’s wild, and there’s crazy.”

I actually used to think that my models were crazy, but upon further reflection, I modified my own interpretation of those models as wild and un-tamed. Simply because the models work, but only within a venue realists would consider indeterminate and random. Wild and un-tamed corresponds succinctly with chaos theory, except for one minor distinction. There is an order of simplicity behind the chaos that my models can account for which is responsible for causation.

You are in good company being a realist Wyrd, and I can think of nobody within the scientific and/or academic community who is not a realist: Hossenfelder, Strawson, Chalmers, and Goff just to name a few. The realist position creates its own paradoxes which cannot be resolved unless one is willing to acknowledge some form of dualism. I’m in agreement with James that the notion of emergence is problematic in two ways. First, one has to account for how living organisms emerge from dead matter and second, how consciousness emerges from non-conscious living organisms.

But at the end of the day, I’m not a realist Wyrd, I’m a heretic. So, neither myself nor my models are held captive by that paradigm. Upon further reflection, maybe being a heretic is crazy, or it could be that crazy is just a circle of mutual definition and agreement for a paradigm of wild and un-tamed. Shit, even that sounds like poetry…

Peace

October 29th, 2019 at 11:26 am

Maybe you missed your calling, Lee, and you should have been a poet. 🙂

“The realist position creates its own paradoxes which cannot be resolved unless one is willing to acknowledge some form of dualism.”

Possibly, but we don’t understand reality nearly well enough to be able to say. If anything, we’ve only scratched the surface. I don’t subscribe to any particular model or theory other than to take the real physical world on faith as actually there.

I know I exist; I accept that the world does, too. 😉

(That said, I do have some personal dualist leanings, so it wouldn’t surprise me greatly if it turned out we are all “God’s children” or characters in a virtual simulation or something else completely unexpected. As Aquinas said, faith is a deliberate irrational act, so I usually suppress my dualist leanings in discussions about what we think can be proved real. Essentially, in most discussions, I restrict myself to the domain of physicalism (or materialism or monism, take your pick).)

((OTOH, a life-long diet of science fiction has me well acquainted with the wild and untamed. 😀 ))

“First, one has to account for how living organisms emerge from dead matter…”

Per the panpsychism approach, does that imply life is fundamental and ubiquitous?

I do agree that abiogenesis is a huge question we haven’t answered. (Among three biggies: How did the universe start? How did life start? How did consciousness start?)

My sticking point is RNA (which led to DNA). How the hell did things go from organic chemistry to self-replicating information molecules? (Plus, it turns out one of the four nucleotides — guanine, I think — doesn’t seem to occur naturally.)

There seems no real trick getting to organic chemistry, and once you have RNA, the rest seems to follow, at least as far as single-cell life. It requires the mitochondrion accident to enable multi-cell life, and it may require a large tide-producing moon to get life out of the sea so fire and manufacturing are possible. (And it may require large gas giants in big orbits to shield the inner life world from constant bombardment by comets.) Not to mention the whole thing depends on a rocky-watery world of a decent size at the right distance from its star.

A lot of accidents or coincidences led to our having this conversation!

Given that entropy seems a basic rule of reality, isn’t it interesting that just combining energy (and matter) with some simple rules leads to really interesting complex systems? To me that’s where Spinoza’s God lurks — in the underlying rules that enable growing complexity in natural systems.