Over the last handful of years, fueled by many dozens of books, lectures, videos, and papers, I’ve been pondering one of the biggest conundrums in quantum physics: What is measurement? It’s the keystone of an even deeper quantum mystery: Why is quantum mechanics so strangely different from classical mechanics?

I’ll say up front that I don’t have an answer. No one does. The greatest minds in science have chewed on the problem for almost 100 years, and all they’ve come up with are guesses — some of them pretty wild.

This post begins an exploration of the conundrum of measurement and the deeper mystery of quantum versus classical mechanics.

A simple starting point is the question: What is the difference between quantum physics and quantum mechanics? We could ask a similar question about classical physics versus classical mechanics. It’s a good starting point because it’s a question we can answer.

Physics is the more inclusive term. It’s the study of physical systems and the laws that apply to them. A given kind of physics, be it classical, quantum, nuclear, solid state, or whatever, delineates the kind of physical systems studied.

Mechanics is the subset of physics that studies the dynamics of a system: its motion and the forces acting on it. The canonical example in classical mechanics is Newton’s laws of motion. In quantum mechanics, it’s the Schrödinger equation (or its relativistic equivalent).

This, therefore, is what classical and quantum mechanics have in common. In both, we solve equations of motion that tell us how a physical system evolves over time. In classical mechanics we have an actual physical object — a ball, a car, a planet — and we predict its future (or sometimes past) trajectory given that we know where it is now and how it’s moving.

§

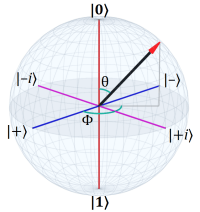

Which brings us to the first strange difference about quantum mechanics. The Schrödinger equation tells us how a quantum state evolves. Part of the deep mystery is that we don’t know what a quantum state actually is — it’s not something we can directly access, like a ball, car, or planet.

What we do know is that a quantum state is something we can measure, and that brings us to another strange difference between classical and quantum mechanics. When we measure a quantum state, we get a definite result, but nothing in quantum mechanics tells us with certainty exactly what that result will be. All we can know is the probability of getting each possible result.

Hydrogen atom with nucleus (a single proton) center and surrounding electron “cloud”.

For example, we can solve the Schrödinger equation for the motion of the single electron in a hydrogen atom. What we get is a “cloud” of probability surrounding the nucleus that tells us a probability of finding the electron in a particular spot.

Another oddity of quantum mechanics (called the “long tail”) is that the Schrödinger equation provides, for that electron, a position probability for every point in the universe. There is some (vanishingly small) probability the electron could be detected in the Andromeda galaxy — or even in a vastly more distant galaxy. The probability may be indistinguishable from zero, but it’s not exactly zero. Contrast this with classical mechanics which does tell us exactly where things are.
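To put a rough number on “vanishingly small”, here is a minimal sketch. It assumes an isolated hydrogen atom in its ground state (an assumption; the text above doesn’t name a state), for which the probability of finding the electron farther than R from the nucleus is exp(-2R/a0) · (1 + 2R/a0 + 2(R/a0)^2), where a0 is the Bohr radius. The function name and the approximate distance to Andromeda are illustrative choices. The code works with log10 of the probability because the probability itself underflows ordinary floating point.

```python
import math

BOHR_RADIUS_M = 5.29177e-11   # Bohr radius a0, in metres
ANDROMEDA_M = 2.4e22          # rough distance to Andromeda (about 2.5 million light years)

def log10_prob_beyond(radius_m: float) -> float:
    """log10 of the probability that a ground-state hydrogen electron
    is found farther than radius_m from the nucleus (non-relativistic,
    isolated atom; an idealized assumption)."""
    x = 2.0 * radius_m / BOHR_RADIUS_M
    # P(r > R) = exp(-x) * (1 + x + x^2 / 2), with x = 2R / a0
    return (-x + math.log(1.0 + x + 0.5 * x * x)) / math.log(10.0)

if __name__ == "__main__":
    # Beyond one Bohr radius: a sizeable probability (log10 close to zero).
    print(log10_prob_beyond(BOHR_RADIUS_M))
    # Beyond the distance to Andromeda: an exponent of roughly -4e32.
    print(log10_prob_beyond(ANDROMEDA_M))
```

Under these assumptions the exponent itself has 33 digits: not exactly zero, but far smaller than anything an experiment could ever register.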

Which is another difference between them. In classical mechanics we can, at least in theory, know all there is to know about a physical system. We can know its exact location as well as its exact momentum. (Momentum is a combination of where something is going and how forcefully it’s going there.) Further, knowing the position and momentum of a classical system is all we need to fully define that system.

But in quantum mechanics, because of the Heisenberg Uncertainty Principle (HUP), we can only know half the information, even in principle. We can know exactly what the momentum of a “particle” is, but then we know nothing about its position. Alternatively, if we know its exact position, we know nothing about its momentum. We can also choose to know something about both, but the more we know about one, the less we know about the other.
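For reference, the trade-off described above is usually written as an inequality between the spreads (standard deviations) of position and momentum. This is the standard textbook form, not something derived in this post; the symbols σ_x and σ_p are the usual notation for those spreads, and ħ is the reduced Planck constant.

```latex
\sigma_x \, \sigma_p \;\ge\; \frac{\hbar}{2}
```

Shrinking one spread toward zero forces the other to grow without bound, which is the “more we know about one, the less we know about the other” statement in symbols.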

§

I usually put the word “particle” in quotes because it seems likely (although not certain) that there is no such thing. The classical notion of tiny balls of something, such as photons, electrons, or quarks, is very probably not the reality. (Unless one subscribes to the de Broglie-Bohm interpretation of quantum physics or something along those lines.)

The reason we think in terms of “particles” is that, when we “measure” a “particle”, we see a point-like interaction. Ever since Louis de Broglie, we have understood matter as having a wave-particle duality. This duality resolves as a “particle” (a definite point-like location) when one matter wave interacts with another matter wave.

For example, a photon in flight acts like a wave until it interacts with the electron (wave) of an atom (which raises the energy level of that electron). The photon’s wave can be extremely spread out. Consider a photon emitted from a distant star but absorbed by a specific electron here on Earth. That photon could have been absorbed anywhere along its path.

A big part of the conundrum is the question of what happens to that wave once the photon is “measured” here on Earth. The wave apparently instantly vanishes everywhere else because no other electron, here or there, can ever absorb that photon.

§

The main point of this post involves why “measure(ment)” is also a quoted word. It’s not because we don’t know what measurement is, or because quantum measurements only have probabilities.

Firstly, many authors prefer to replace the word “measurement” with observation (although that seems a word game to me). The intent seems to be bringing quantum experiments, with their formal measurements, into the greater scope of experience where we merely observe things. It’s often said, for instance, that matter acts like a wave until we observe it, in which case it acts like a particle.

As an aside, what I consider one of the silliest interpretations of quantum mechanics is the notion (such as the von Neumann-Wigner interpretation) that consciousness is a necessary aspect of measurement/observation. Under this (very arrogant) notion, the universe was in some vague quantum state (or maybe didn’t even exist?) until we humans, or at least something conscious, showed up. I don’t believe anyone takes this seriously anymore.

Wait… radioactivity and poison? WTF?!

What inspired this post is what authors I’ve read recently (Roger Penrose, Lee Smolin, Peter Woit, Jim Baggott, and others) say about how the environment affects an actual or putative quantum system. I say “putative” because the deep mystery of quantum physics involves the question of whether large objects, such as cats, are, in fact, quantum systems.

On some level, they have to be because they’re made from quantum parts. The question is whether the classical reality that emerges from the parts still has a quantum nature. More pertinently, can they be treated as quantum systems? (For what it’s worth, my guess is no.)

Large systems are said to decohere and, thus, lose their quantum nature. It’s often said that the environment causes this decoherence. By “environment” we mean the surrounding air and the “heat bath” of infrared photons that comprise our day-to-day environment (not to mention all the RF photons that are also part of that environment).

In actual quantum systems — such as photons, isolated atoms, or quantum computing qubits — this is definitely the case. A huge aspect of engineering quantum computers is finding ways to isolate the qubits from the environment in order to retain the coherence of qubits long enough for processing. This is also necessary in many quantum experiments. In such cases, extreme cold, RF shielding, and a vacuum are required.

All-in-all, the idea is that, effectively, the environment measures or observes the system, and this causes the system to have a definite (classical) state.

§

When it comes to large objects, I think they decohere on their own without needing any interaction with the surrounding environment. Further, this decoherence destroys their quantum nature. Effectively, large systems observe themselves.

An aspect I haven’t yet touched on is that measurement disturbs — “collapses” or “reduces” — a quantum system. The measurement conundrum is also called the question of wavefunction collapse.

That photon from a distant star exists as a spread-out wave until it interacts with some electron and localizes as a point-like “particle”. Likewise, electrons exist as waves until they interact with something that localizes them. This localization of the wavefunction to a point-like interaction collapses (or reduces) the wave from the cloud of probability to the certainty of the measured location. Part of the mystery is that the collapse is instant everywhere in seeming defiance of special relativity (which limits causality to the speed of light).

The classical reality we experience, as far as we can tell, is localized. Objects have definite positions (at least until you’re trying to find your keys). It should be noted that localization is just one form of measurement or wavefunction collapse. Many other types of measurement of quantum properties also do this.

§

Getting back to large objects and their apparent loss of quantum behavior, if it was just interaction with the environment that caused it, then presumably we could find such objects demonstrating quantum behavior if we could isolate them from the environment. Large objects in deep intergalactic space should then have quantum behavior.

Imagine we make a statue of a cat and isolate it from all environmental influence. If quantum mechanics applies to systems large and small, then that statue should act like a quantum object. (Obviously the extreme cold and vacuum prohibits using a living cat.)

In 2019 experimenters demonstrated quantum effects in objects composed of 2000 atoms (about 25,000 atomic mass units). Until then, the record, from 2013, was 810 atoms (over 10,000 AMU).

The MAQRO Mission, a proposed ESA experiment, would use test objects about 100 nm in size and, if it successfully demonstrates quantum behavior, will increase the record to 10,000,000,000 AMU. I hope I live long enough to see this mission fly. It will be a game-changer, especially if it fails. Even if it succeeds, we’re still a long way from cats, so success won’t be quite as compelling as failure (but will certainly give pause for thought).

My contention is that the self-interactions of the vast number of atoms in a large object are sufficient for the object to “observe” itself and for quantum behavior to vanish. Even considered as a quantum system, the wavefunction of each “particle” contributes a vanishingly small and separate fraction.

Imagine each “particle” as a singer with a song. Now imagine a vast collection of singers, on the order of 1,000,000,000,000,000,000,000,000,000 of them, each singing their own song. (In contrast, the world population is under 8,000,000,000.) The result is cacophony or white noise. No coherent music arises from such a collective even if they sang the same song. Any aspect of a song is destroyed.
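The singer analogy can be played with numerically. The sketch below is only a toy model of the analogy, not a model of decoherence: each singer is a unit-amplitude wave of the same pitch (the same song), and the only thing that varies is when each one starts (the phase). The function name, the seed, and the choir sizes are all illustrative assumptions.

```python
import cmath
import math
import random

def mean_amplitude(n_singers: int, in_sync: bool, seed: int = 0) -> float:
    """Average amplitude per singer when n_singers unit waves are added.

    in_sync=True  -> every singer has the same phase (a coherent choir).
    in_sync=False -> every singer starts at a random moment (random phase).
    """
    rng = random.Random(seed)
    total = 0j
    for _ in range(n_singers):
        phase = 0.0 if in_sync else rng.uniform(0.0, 2.0 * math.pi)
        total += cmath.exp(1j * phase)
    return abs(total) / n_singers

if __name__ == "__main__":
    for n in (100, 10_000, 1_000_000):
        print(n, mean_amplitude(n, in_sync=True), mean_amplitude(n, in_sync=False))
```

In this toy picture the synchronized choir keeps an average amplitude of 1 no matter how large it gets, while the unsynchronized one typically falls off like 1/√N. At anything like the number of singers imagined above, whatever was distinctive about the song is long gone.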

§

Likewise, I contend that the quantum nature of large objects is destroyed merely in virtue of their size, even before counting contributions from the environment (which do also contribute). The world at large is classical because it’s the song of an unimaginable number of singers.

Again, even if we consider large systems to be quantum systems, they are massively and completely, in their very nature, incoherent systems that do not exhibit quantum behavior.

Simply put, reality measures itself. All the time. Constantly.

§ §

In future posts, I’ll dig deeper into some of the details, especially regarding decoherence and what are known as “objective collapse” theories. While it may be that large objects don’t show quantum behavior, mathematically explaining wavefunction collapse remains an issue.

As a teaser, I find myself leaning towards the Diósi-Penrose model, which suggests that gravity from the mass of large objects is responsible for wavefunction collapse. It may be that solving the quantum gravity problem will show this, or something similar, to be the case.

Stay measured, my friends! Go forth and spread beauty and light.

∇

April 1st, 2022 at 8:04 am

Note that, because of the background material, these posts are part of my QM-101 series, but not a formal part because the series is about QM math while these posts are non-mathematical and more on the musings and opinions side of things.

April 1st, 2022 at 8:09 am

As an aside, I noticed that the MAQRO-mission site has a couple of minor issues.

Firstly, it’s not a secure site. It has only an http address, not an https address.

Secondly, it doesn’t render exponentials correctly. It refers to 1010 and 104 atomic mass units (which really confused me the first time I read it). Those, of course, should be 10^10 and 10^4. I assume that’s a copy-n-paste error.

April 1st, 2022 at 8:15 am

Speaking of large numbers, the 1,000,000,000,000,000,000,000,000,000 number I used in the post (10^27) is a very rough estimate of a number I saw purporting to be the number of atoms in a dog. Cats are smaller, so I figured it might be in the general neighborhood, give or take a few orders of magnitude, of the number of particles in a cat. Or at least in a smallish object.

It’s one-billion^3 or one-billion-billion-billion, an unimaginably large number given that the Earth’s population is currently just under eight-billion.

(BTW, the short scale billion, 10^9, not the old long scale billion, 10^12. As I understand it, even the British now use the short scale meaning and, like everyone else, use trillion for 10^12.)

April 1st, 2022 at 8:26 am

You’re right, I think, to worry about the nature of measurement, but we can think of “recorded measurement results” as prima facie what physics is about. After an experiment is complete, there is a long list of numbers and information about how each list was gathered, all stored in computer memory and in lab notebooks, which is the whole grist for the physics mill.

The idea that objects like apples and pears are “systems” that have “states” is natural enough from a human-scale perspective, but for modern experiments I think it’s better to focus on analysis of the “recorded measurement results” and only assert that there is such a thing as a state if an analysis can unambiguously exhibit how an “object” and a “state” emerges from the recorded measurement results. The point of the Heisenberg “picture” of QM is that it works just as well if we discuss the evolution of the statistics of recorded measurement results over time instead of discussing the evolution of a state over time.

Your account above in terms of systems and states channels more-or-less conventional assumptions. I’ve found that thinking in terms of measurements gets me just enough out of that box. It’s a fairly simple change of perspective, but it has an elaborate range of consequences.

I look forward to seeing what else you have to say about the measurement problem, but I encourage you to consider the idea that “collapse” *can* be thought of as a way to construct joint probability measures. As far as I can tell, there is very little in the literature that is much similar to this idea, precisely because systems and states are so entrenched in how we talk about physics.

I wrote a lot about “collapse”, but I deleted it all 🙂

April 1st, 2022 at 9:12 am

I don’t know where you fall on the “collapse” debate Peter. Personally, I don’t have a problem with the term if it is used to express the outcome of a probability. In agreement with Rovelli’s RQM, I also reject the entire notion of wave function as a fundamental reality.

Unfortunately for science, it’s in our nature to be psychologically uncomfortable with things that we cannot see, let alone measure with instruments. All of these unknowns lead to uncertainty, and that uncertainty has a tendency to evoke the imagination of our species, which in turn results in wild and bizarre ideas; ideas which, over time, become embedded into the psyche of our culture. Rovelli’s RQM avoids that mind trap by keeping it simple.

Great essay Wyrd, looking forward to future installments.

April 1st, 2022 at 9:41 am

Hey Lee, thanks!

FWIW, I’ve never been a huge fan of Rovelli; some of his views seem a bit fantastical to me. (For instance, I don’t agree that time is emergent. I think it’s, rather clearly, fundamental.) At first, I was a bit askance at his RQM because I initially associated it with the Leibniz relationalist view, which I’ve never bought into. (In that case I think relations are emergent, not fundamental.) But in learning more about RQM, I’ve found that Rovelli doesn’t deny the fundamental aspects of “noumena” but sees relations between them as the only thing that matters. On that account, it’s something I want to look into more.

I agree very much about our human tendency to make up, often fantastical, stories about mysteries. We’ve been doing that ever since we thought the Sun, the trees, and the wind, had personalities (usually irascible ones). For several years now I’ve been posting against notions of “fairy tale physics” and what I like to call FBS (Fantasy Bullshit). I’ve wondered if, in turning our backs on spirituality — which previously occupied our fantastical natures — if we don’t try to fill that imaginative void with scientific FBS. (See, for instance: Our Fertile Imagination or Our BS Culture)

April 1st, 2022 at 9:15 am

Hey Peter, how’s it going? Nice to hear from you again. I certainly would have been interested to see what you had to say about “collapse” given the goals of this series of posts!

We’ve talked about your notion of measurement and statistics before, and I’d like to simplify things by asking some questions. Firstly, would you align with the realist or anti-realist views of QM? Secondly, do you lean towards, or embrace, any particular interpretation of the QM math? Thirdly, what would thinking about joint probability measures buy me? (Frankly, I’m not even sure I fully understand what that means.)

As an aside, it’s true that I’m hoping to stay within the bounds of convention as much as possible. In part my mission is exploring these topics to communicate and create an understanding for others. Another aspect is that writing about these topics, finding ways to explain them, helps me understand them. There is also that, as an armchair amateur, I doubt I can truly add anything new to the mix, so I try to stay within the bounds of convention as much as possible. That said, certain aspects of current and past thinking just don’t sit quite right with me, and I hope to explore exactly why.

April 1st, 2022 at 10:08 am

I hope you won’t mind me begging one or the other question by saying that I think we can be as realist about QM math as we can be about probabilistic formalisms for CM math. How do you feel about the intersection of probability and CM math?

I’ve been thinking about QM for long enough that I can see merits in every well-known interpretation. I’m more Copenhagen than I used to be: I think Bohr was absolutely right, for example, to emphasize that recorded measurement results have to be classical. Of many times he said such a thing, I quite like this one: “It is decisive to recognize that, however far the phenomena transcend the scope of classical physical explanation, the account of all evidence must be expressed in classical terms.” “Recorded measurement results” seems a fairly reasonable translation of “the account of all evidence”. Then I think it’s helpful to ask how the algorithms we apply to that record get us from can-be-modeled-classically to cannot-be-modeled-classically. That seems to say that classical computations cannot be described by classical mechanics, so what do we have to add to classical mechanics so that we have a “CM+” that can describe as much as QM? We hardly have to do anything to include operator noncommutativity and hence measurement incompatibility, which reduces the gap *a lot* and points towards one way to understand “collapse”. Measurement incompatibility in probability theory (not in QM) is when two probability measures do not admit a joint probability measure that has the first two as marginals. For the finite case, we might have P(a[i])=pa[i] and P(b[j])=pb[j], but there is no P(a[i],b[j])=pab[i,j] for which sum_j pab[i,j]=pa[i] and sum_i pab[i,j]=pb[j]. Kolmogorov probability just requires there to be a positive, normalized measure, but says nothing about whether a joint probability can be constructed for two such Kolmogorov probability measures.
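The marginal conditions written out above (sum_j pab[i,j]=pa[i] and sum_i pab[i,j]=pb[j]) can be made concrete with a small table. This sketch only shows what it means for a joint table to have given marginals; it does not demonstrate incompatibility, and the numbers in the table are made up for illustration. The names `marginals` and `pab` are hypothetical.

```python
from fractions import Fraction

def marginals(joint):
    """Return (row sums, column sums) of a joint probability table.

    joint[i][j] plays the role of pab[i,j]; the row sums are pa[i]
    and the column sums are pb[j].
    """
    pa = [sum(row) for row in joint]
    pb = [sum(col) for col in zip(*joint)]
    return pa, pb

# Hypothetical 2x2 joint table; exact fractions avoid rounding noise.
pab = [
    [Fraction(1, 10), Fraction(2, 10)],
    [Fraction(3, 10), Fraction(4, 10)],
]
pa, pb = marginals(pab)

if __name__ == "__main__":
    print("pa =", pa)   # marginal for the A results
    print("pb =", pb)   # marginal for the B results
```

Going the other way, from two given marginals back to a table that reproduces both, is the step the comment says is not always available.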

Thinking about joint probability, and what to do when a joint probability is not possible, gets us into a place where everything isn’t suddenly trivially simple, because CM+ is not the same as CM, (because it has to be as good at modeling what we do as is QM,) but CM+ is also subtly different from QM, despite also being an algebraic, Hilbert space, and probability formalism.

I contend above that there is a specific aspect of conventional accounts that can be thought part of the problem: there are systems and they have states. [My more-or-less instrumental approach to that contention is not the only way: Bohm’s holism in his later work is another.] The trick, perhaps, is to subvert convention in a way that a conventional physicist can’t stop themselves finding it compelling, although I have no great confidence I can carry off such a trick. I deleted what I had written about collapse because even I could see that what I had written was not compelling.

April 1st, 2022 at 12:15 pm

Heh, well the next post will be Wavefunction Collapse, so you’ll be tempted all over again. 🙂

I recall from previous conversations that you’d like to extend CM to include stochastics. As you know, of course, CM is deterministic and doesn’t admit to probability. I think it’s obvious CM is just an emergent approximation useful in “everyday” life and, on several levels, I don’t subscribe to determinism. I think we get to the same end place but perhaps by different roads. I’m pretty firmly in the realist camp, not with regard to the math, but with regard to the ontological reality. I want to know what reality is, and I’m not yet willing to give up the idea that we can figure it out. (As I think I’ve said before, I find instrumentalism unsatisfying.)

That said, I am a bit fascinated by Wheeler’s version of the Twenty Questions game where the group doesn’t pick an object and answers the yes/no questions randomly with the caveat that all answers must pick out at least one possible object (which makes the earlier questions more random than the later ones). Even so, as I opine in this post, I think reality does ask most of the early questions. We might then ponder to what extent our QM probing asks the later ones.

Probability is an area of math I’ve always avoided. (I had to look up Kolmogorov probability on Wikipedia. I know about Kolmogorov complexity from my CompSci days but not his probability axioms.) If you care to take the time, I’d be interested in a concrete example or thought experiment illustrating how to apply it in CM+.

As I recall, your overall project is to unify CM and QM. Does that include the holy grail of gravity? As I’ll elaborate on in future posts, I have a strong sense QM cannot be complete (and CM certainly isn’t). It’s even possible that, somehow, it’s just wrong. There is that QM is background dependent. And doesn’t include GR. I’ve even begun to question the faith in linearity and unitarity.

I think that what you said about seeing merits in all QM interpretations, along with the lack of truly compelling arguments for any of them, may speak to just how wrong QM might be. Philip Ball, in his book Beyond Weird, aligns with “quantum reconstruction” — the notion we need to wipe the slate clean and start over. I think he may have a point. (Lee Smolin floats a similar idea in Three Roads to Quantum Gravity, those roads being [1] start with GR and add QM; [2] start with QM and add GR; [3] just start over again.)

Ultimately, all I can do is express my discomfort with various formulations. Things that just don’t seem right to me. It’ll take much greater minds than mine to cut this knot. Perhaps minds such as yours!

April 1st, 2022 at 1:20 pm

“As you know, of course, CM is deterministic and doesn’t admit to probability.” I don’t know that at all! There’s the Gibbs state, Liouville theory, and stochastic formalisms such as Wiener processes and the Itô calculus. Saying that CM is deterministic is almost as limiting as saying that CM is Newton, with nothing from those French or Irish counting for anything. I think any modern measurement theory for CM would include a calculus for how small errors change over time, in part because we now understand that a dynamics being chaotic in any part of its range may make even the smallest measurement errors significant. As you say, this gets us to the same place in practice, but I don’t have a deterministic ontology insofar as I don’t know and don’t know how I could know whether there’s an ultimately smallest scale where there is absolutely no noise whatsoever. I think part of the point of a Gibbs state is to say that we won’t say, today, what causes a system we are studying to be in an equilibrium state, because we haven’t yet measured anything about the details of the heat bath it is embedded in and what its interactions with the heat bath are, but we’re open to saying something, tomorrow, after we have measured something about the details of the heat bath and its interactions, which I think of as a modern “Hypotheses non fingo”. As for Newton, this doesn’t mean we have no ambition.

Even after we understand the relationship between algebraic quantum and random fields, changing from Minkowski space to a different geometry makes for difficult mathematics. You could have a look at https://arxiv.org/abs/2201.04667, which won’t win a prize in the essay competition I entered it into but I mostly intend it only as a first statement of intent to begin thinking about QG. Starting with GR is only one possibility: we can also work with torsion in a teleparallelism approach or with non-metricity. There are issues with both of those, but they change the rules in ways that are worth considering. I’d say this as: start with CM+ and add some kind of dynamical geometry, which I guess is [3] for Lee Smolin.

QM and CM+ are both complete and not complete. If we have performed a specific measurement in the past, perfectly, and recorded the result perfectly, QM asserts that performing precisely the same measurement later will result in precisely the same result. That’s determinism! If we do not perform *precisely* the same measurement, there’s no such guarantee, and **there’s the rub**. Even if a measurement has only 0 or 1 as a result, say, the measurement settings are typically continuous, so performing *precisely* the same measurement later is infinitely hard. An idealist will perform precisely the same measurement, so they have determinism and completeness out of QM, but the slightly less idealist does not have determinism and completeness. I note that I think I can say all this because of the way I choose to interpret “collapse of the quantum state”, as making it possible to construct joint probabilities, but anyone can make the same choice if they like. Of course this will all have to be approved by your favorite Authority on QM, or more than one such, before you will believe a word of it, because details matter and I might be missing a whole mess of them and because, indeed, I am only just above being some crazy guy 🙂 You know, however, that it’s perspiration as much as it’s inspiration and there’s an impossible to quantify chance the curtain will be lifted in left field.

April 1st, 2022 at 7:57 pm

I tend to see a difference between seemingly random noise, which I agree obtains in CM, and a probabilistic theory (such as QM and the Born rule). The Gibbs state, from what I can gather from its Wiki article, is about statistical mechanics, which, as in thermodynamics and entropy, comes from our inability to know enough about systems with many, many components. What I’ve understood about statistical mechanics and thermodynamics is that, if we could know the positions and momentums of all the particles (and random noise sources aside), then these systems would be deterministic. (As far as I know, Lagrangian and Hamiltonian mechanics are as deterministic as Newton’s.)

The notion that free will is an illusion has always been based on the perception that CM is, at root, deterministic. It may be forever beyond our ability to know enough, but the system itself is fully determined. (Again, absent noise sources, which may at root be deterministic themselves. Brownian motion is, perhaps, a good example. It appears as a random noise, but if we knew enough about the system, wouldn’t it be deterministic?) Chaotic systems are another example. They are unpredictable to us, because, as you say, any tiny variation in the values we measure greatly affects the outcomes, but their dynamics are still deterministic.

This may be an aspect of the difference between an instrumentalist view and an ontological one. We both agree absolutely that CM can be unpredictable in practice and measurement, but I’m not sure I can agree that CM is, at root, undetermined. (You’re the first source I’ve encountered to suggest otherwise.) Other than noise (and our various instrumental inabilities), what would be the source of unpredictability?

In any event, I think we both agree CM isn’t a complete picture.

“QM and CM+ are both complete and not complete.”

Ha! No, dude, you don’t get to have mutually exclusive propositions both ways. If it’s one, it cannot be the other. I do, at least in science, believe in the excluded middle! 😀

As I understand it, the reason repeating a measurement returns the same result (e.g. passing a photon through two polarizing filters at the same angle) is that the first measurement reduces the wavefunction to an eigenvector per some probability depending on the photon’s initial state. The second filter measures the same eigenvector, so has a 100% probability of passing the photon. Exactly as you say, if the second filter has a different angle (even slightly), the probability is no longer 100%. (A filter at 90° would have a 0% probability of passing the photon; one at 45° would have a 50% chance.)
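The percentages quoted above follow from the cos² rule for an ideal polarizer (Malus’s law applied photon by photon). A minimal sketch, assuming ideal lossless filters and a photon already polarized along the first filter’s axis; the function name is illustrative.

```python
import math

def pass_probability(angle_deg: float) -> float:
    """Probability that a photon polarized along the first filter's axis
    passes a second ideal filter rotated by angle_deg."""
    return math.cos(math.radians(angle_deg)) ** 2

if __name__ == "__main__":
    for angle in (0, 1, 45, 90):
        # Rounded for display: 0 and 90 degrees give 1.0 and 0.0, 45 gives 0.5.
        print(angle, round(pass_probability(angle), 4))
```

Even a one-degree mismatch drops the probability slightly below 100%, which is the “even slightly” point made above.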

So, it’s still a probabilistic measurement per the Born rule, and that’s not really what we mean by a deterministic system. To be called deterministic, the system has to be determined in all cases.

April 2nd, 2022 at 7:47 am

I expect we’ll let this go soon, but thanks for keeping at it. “the reason repeating a measurement returns the same result (e.g. passing a photon through two polarizing filters at the same angle) is that the first measurement reduces the wavefunction to an eigenvector per some probability depending on the photon’s initial state.” Let me do this a different way, which accommodates doing two measurements that either are or are not identical.

The standard elementary QM math says we perform an A measurement, then we perform a B measurement. We use perform-an-A-measurement to transform a system+deviceA-in-its-ready-state to system+deviceA-in-result-state#i, then we do the same for a B measurement, so in total we have

system+deviceA-in-its-ready-state+deviceB-in-its-ready-state to system+deviceA-in-result-state#i+deviceB-in-its-ready-state to

system+deviceA-in-result-state#i+deviceB-in-its-result-state#j, with different probabilities for different values of i and j. These probabilities are “joint” probabilities (or relative frequencies) insofar as in different states any value of (i,j) has a nonnegative probability and we can compute marginal probabilities for both the deviceA result states and the deviceB result states.

If it happens that A and B are the same measurement, we get the same deviceA-in-result-state#i both times, which is to say that there is a 100% correlation between the results; from a statistical point of view, that’s determinism.
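To make the joint-probability bookkeeping concrete, here is a minimal sketch for the photon-polarizer case discussed earlier in the thread. It assumes ideal two-outcome polarization measurements (outcome 0 is "along the axis", outcome 1 is "perpendicular to it") and the usual cos² Born-rule probabilities. The function names and the 30° starting state are illustrative assumptions, not anything from the comment itself.

```python
import math

def outcome_prob(state_deg, axis_deg):
    """Born rule for linear polarization: cos^2 of the angle between state and axis."""
    return math.cos(math.radians(state_deg - axis_deg)) ** 2

def joint_probs(state_deg, a_deg, b_deg):
    """Joint probabilities P(i, j) for an A measurement followed by a B measurement.

    Outcome 0 means 'polarized along the axis'; outcome 1 means 'perpendicular to it'.
    After the A measurement the photon is taken to be polarized along the A outcome axis.
    """
    table = {}
    for i in (0, 1):
        a_axis = a_deg + 90 * i          # axis associated with A outcome i
        p_i = outcome_prob(state_deg, a_axis)
        for j in (0, 1):
            b_axis = b_deg + 90 * j      # axis associated with B outcome j
            table[(i, j)] = p_i * outcome_prob(a_axis, b_axis)
    return table

# Hypothetical example: photon initially polarized at 30 degrees.
same = joint_probs(state_deg=30, a_deg=0, b_deg=0)        # A and B identical
different = joint_probs(state_deg=30, a_deg=0, b_deg=45)  # B rotated by 45 degrees

for key in sorted(same):
    print(key, round(same[key], 3), round(different[key], 3))
```

When the two axes coincide, the off-diagonal entries (0, 1) and (1, 0) vanish, which is the 100% correlation described above. With B rotated, all four entries are nonzero and the marginals for A and B can be read off by summing rows or columns.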

When A and B are different, we can either say, with Heisenberg, that the state changes/collapses, or we can say, with Bohr, that the second measurement is changed by the presence of the first measurement. Heisenberg so won the battle for physicists’ hearts and minds that the second alternative is not often mentioned, particularly in the popular physics literature, but both ways are there in the math.

The duality between states and measurements in the mathematics lets us say that the change applies to the state or that it applies to the measurement. If we say that B is affected by the presence of A, then for completeness I suppose we should also consider an AB measurement, with A having any effect on B whatsoever. For a general AB measurement, we can get any of the results of an A measurement and any of the results of a B measurement: in particular, for a given A-collapse-B-state there is an AB-state that gives the same result. So which is the right state?!? Will you go to the wall for the A-collapse-B-state, or will you at least allow that sometimes the AB-state might be useful? Because the AB-state is a vector in a different Hilbert space, we use two operators A’ and B’, for which [A’,B’]=0, instead of the A and B we started with, for which [A,B]≠0, so the A’,B’ are kinda classical, except that we will certainly entertain the idea that there are other operators with which A’ and B’ do not commute (allowing the use of noncommutative operators when it’s useful is the difference between CM+ and CM). The AB-state and non-collapse-measurement approach has a name in QM, “Quantum Non-Demolition” measurement, because the state isn’t changed by the first measurement.

Now think about a signal analysis environment, in which we perform A,B,C,D,E,F,G,H,…, with each “,” signifying “-collapse-”. We could also think in terms of performing AB,CDE,FGH,…, but there’s a ridiculous number of possibilities if we perform billions of measurements, which we actually do in large experiments. There are two choices that are more natural than others: every measurement does not commute with those that follow it (QM), or every measurement commutes with every other (CM or CM+). To me, it’s not that I think one is right and another is wrong, but that we can use whichever is more useful; however, each choice corresponds to a different idea of what the Hilbert space of possible states is, so I think it’s best not to be *too* realist about Hilbert spaces and quantum states. In terms of something like the Heisenberg cut, every “-collapse-” can be removed, one at a time, but it’s not just one movable cut, because A,BCD,EFG,H… is not-so-simply different from A,B,C,D,E,F,G,H,… and AB,CDE,FGH,….

The ABCDEFGH… choice uses a commutative algebra, so it’s very close indeed to classical. We allow the use of CM+, so if it’s useful to use A,B,C,D,E,F,G,H,…, then we do, but now I think we have pretty good control of why we use one or another: it’s about using a smaller Hilbert space when we can. It’s about encoding the recorded experimental results in whatever way works. Because there is no collapse in this picture, this is also very close to the various Everettian-type interpretations, although I think I’m putting a much more empiricist metaphysics into this. In many cases there are very good mathematical reasons for using the QM formalism and not the CM+ formalism, but we have yet to find out whether for quantum gravity the CM+ formalism will be more helpful.

—————————–

I’m giving a Mathematical Physics Seminar at the University of Iowa on April 19th in which this will figure (it’s Zoom, so a recording may happen). Someone there is a Facebook friend. We’ll see how well they take it, though I expect it won’t move the needle much on the wider acceptance of these ideas. I’m giving the same talk in Bogotá on May 11th, also by Zoom and also because of a Facebook friend, but these are both events for which I won’t advertise the Zoom links unless it’s clear that the organizers are OK with that.

—————————–

QM and CM+ are complete in the sense that if we give them complete information about an ideal, complete, as-if-by-God-provided collection of measurement results in the past, then repeating those measurements will get the same results and nothing else. If we give QM and CM+ much less information, however, which is always the case in practice, then QM and CM+ can still do something with what they are given. Indeed, I think carefully allowing noncommutativity, CM+ instead of only CM, can give us more useful predictions from that limited information.

—————————–

About Brownian motion: if the smaller particles that affect larger particles have even smaller particles affecting them, and so ad infinitum, then it may be that we will have to introduce the axiom of choice. If so, my expectation is that much of the mathematics becomes undecidable, and I doubt we can do more than apply probability as best we can to recorded measurement results. If there is no “ad infinitum” then determinism might rule, but although I can think and imagine both that there is or is not an “Infinity in the palm of your hand And Eternity in an hour”, I can’t bring myself to say with an a priori, axiomatic certainty that one or the other is the absolute truth. This, for me, is where classical determinism hits its limits.

April 2nd, 2022 at 10:57 am

With you on the joint probabilities on A and B (because of the Born rule for the A and B measurements). I had thought there wasn’t much daylight between Heisenberg and his mentor Bohr, though (other than their famous mysterious meeting in 1941). You later say, “The duality between states and measurements in the mathematics…” Are you, perhaps, meaning the Heisenberg picture versus the Schrödinger picture? As I understand it, the former has fixed states and evolving observables, whereas the latter is the other way around. As you say, they are mathematically dual. Looking at the Wiki pages for each, it seems the choice of which is most useful depends on the situation.

I also noticed that the page for the Heisenberg picture mentions, “This approach also has a more direct similarity to classical physics: by simply replacing the commutator above by the Poisson bracket, the Heisenberg equation reduces to an equation in Hamiltonian mechanics.” I believe you’ve mentioned Poisson brackets in previous conversations, and, along with your program to unify CM and QM, I can see why the Heisenberg picture might be a very attractive approach there.
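For reference, the correspondence that quote describes can be written out side by side. This is the standard textbook form, with A an observable and H the Hamiltonian; the symbols are conventional notation rather than anything defined in this thread.

```latex
% Heisenberg picture: the operator A evolves, the state is fixed
\frac{dA}{dt} = \frac{i}{\hbar}\,[H, A] + \frac{\partial A}{\partial t}

% Hamiltonian mechanics: the same structure with the Poisson bracket
\frac{dA}{dt} = \{A, H\} + \frac{\partial A}{\partial t}

% The replacement that takes one into the other
\frac{1}{i\hbar}\,[A, H] \;\longleftrightarrow\; \{A, H\}
```

Since [H, A] = −[A, H], the first line is the same as dA/dt = (1/iħ)[A, H] + ∂A/∂t, which makes the parallel with the Poisson-bracket form explicit.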

“Will you go to the wall for the A-collapse-B-state,…”

Yes. 🙂 Because we can forego the B measurement and we’re left with the A-collapse state.

“…or will you at least allow that sometimes the AB-state might be useful?”

In what way? What does that buy me (that A⇒collapse_a…B⇒collapse_b doesn’t)?

One of my favorite quantum experiments, one that anyone can perform, involves two polarizing filters, call them A and B, set at 90° to each other. Assuming the photon(s) have an initial random spin state, they have a 50% chance of passing filter A. Those that do have a known eigenstate (0° with respect to filter A), so they have a cos(90°)^2=0% chance of passing filter B. Assuming ideal filters, no photons pass through AB. The combination has a 0% probability.

The fun comes from inserting a third filter C between A and B and aligning it 45° from filter A (and, thus, also 45° from filter B). The 50% of photons that pass through A, given their known state, have a cos(45°)^2=50% chance of passing filter C. Crucially, their eigenstate changes to match filter C (45°). The combination of AC passes 25% of the original photons. Because filter B is 45° to filter C and the photons that pass through it, there is again a cos(45°)^2=50% chance the photons pass through filter B.

So, the combination ACB passes 12.5% of the original photons whereas the combination AB passed none of them. I just love the seemingly counter-intuitive fact that adding a third filter allows photons to pass whereas the AB combo doesn’t. And that it’s QM at the human scale — some quantum magic that I’m not sure can be explained classically. (Also, perhaps, a case where the Schrödinger picture is more apt, because it seems clear it’s the photon state that evolves.)
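The arithmetic above is easy to check with a few lines of Python. This is only a sketch under the same assumptions as the description: ideal filters, an unpolarized source (so half the photons pass the first filter), and a cos² probability at each later filter based on the angle from the previous one. The function name is made up for illustration. The same numbers also come out of Malus's law applied to classical light intensities.

```python
import math

def transmitted_fraction(filter_angles_deg):
    """Fraction of initially unpolarized photons passing ideal polarizers in sequence."""
    if not filter_angles_deg:
        return 1.0
    fraction = 0.5                       # unpolarized light: half passes the first filter
    previous = filter_angles_deg[0]      # photons now polarized along this filter
    for angle in filter_angles_deg[1:]:
        # cos^2 of the angle between the photon's polarization and the next filter
        fraction *= math.cos(math.radians(angle - previous)) ** 2
        previous = angle
    return fraction

ab = transmitted_fraction([0, 90])        # filters A and B only
acb = transmitted_fraction([0, 45, 90])   # filter C inserted between them

print(f"AB:  {ab:.3f}")
print(f"ACB: {acb:.3f}")
```

Adding more intermediate filters at smaller angular steps pushes the transmitted fraction up toward the 50% that passes filter A alone, which can be tried by passing a longer list of angles.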

To reiterate my question, what does it add to view this as joint probabilities where AB=0% and ACB=12.5%? What does it tell me about what’s going on?

You lost me on how the axiom of choice applies, but I sense you’re getting weary of this, so I’ll just wish you good luck in your talks! If I may be so bold as to offer two suggestions: Firstly, that you’ve been thinking deeply about your ideas for a long time, whereas they may be new to others. It can be difficult to step back and look at our ideas from the outside, but it can be worthwhile to make the effort to simplify in order to bring others inside our thinking. Secondly, I’m a big fan of concrete examples (such as the photon-polarizer experiments or whatever), and I think it’s very important to tie mathematical ideas to physical reality. The rubber meeting the road as it were.

Again, good luck with your talks!

April 3rd, 2022 at 7:34 am

I let that reply sit overnight. “Collapse as a joint measurement construction”, taking “collapse” to be a non-dynamical consequence of a reasonable but not necessary conventional choice, is, I suggest, a mostly new way to sidestep the measurement problem. It is, so to speak, a new kind of solution —an Ender’s Game or Kobayashi Maru solution— of the measurement problem. The whole idea that there can be different kinds of solution, with different formal commitments, will always be anathema to some examiners but not to others. There have been other attempted sidesteps, such as Decoherence, Objective-Collapse, the Heisenberg-Cut, Observer-Induced-Collapse, No-Collapse, Transactional-Collapse (forcing the Transactional Interpretation into this “-Collapse” pattern!), …, all in multiple incarnations and all with their adherents; this is another. As I say, this is only mostly new: a few people, all smarter than I am, have presented ideas that are close to a joint measurement approach, in terms of Quantum Non-Demolition measurement, but the ground is better prepared now than it was 10 or 20 years ago and I am bringing in ideas from quantum field theory and signal analysis that I think help. We will see whether I sink without trace.

My presentation of the overall idea that collapse can be understood as a way to construct joint probabilities in terms of “Measurement merge”, modeling an apparatus either by (A-collapse-B)-collapse-… or by (AB)-collapse-…, weaponizing the fact that using -collapse- allows us to construct a joint probability, is less formal than “Collapse as a joint measurement construction”, but it connects with the Heisenberg Cut quite nicely insofar as that is also associated with a conventional choice of where we place the cut and moving the cut results in us using a different Hilbert space. Alliteration is always heavy-handed, so perhaps instead of “Measurement Merge” it would be better to say “Heisenberg Merge”, alluding to a historical precedent instead.

I hope you can see that “we can forego the B measurement and we’re left with the A-collapse state” is incomprehensible from an empiricist perspective! It makes sense if we insist on quantum state realism, the idea that the quantum state exists completely independently of whether it is *ever* measured, but what justification will we claim for that insistence? I am happy to make what I think is a conventional choice, but I think it’s as well to be as clear as we can about what conventional choices we have made.

My introduction of the axiom of choice suggests a new way in which the mathematics of classical mechanics falls apart under detailed scrutiny. It took centuries to realize that a deterministic classical dynamics can be chaotic, and the century and more since Poincaré has only scratched the surface of chaos theory. For field theories, I think the possibility of both noise in the initial conditions and chaos in the dynamics is serious enough to put the burden of proof on the idea that the universe is deterministic. The weight of the mathematics seems to me now to be on the other side in a way that it was not a century ago. That’s only my 2¢, of course.

That there is daylight of the kind I suggest between Heisenberg and Bohr was pointed out in the philosophy of physics literature by Don Howard, in a 2004 article in Philosophy of Science, “Who Invented the ‘Copenhagen Interpretation’? A Study in Mythology”. Exactly what Bohr was trying to say over the whole span of his career and how it was or was not different from what others had to say is something of an industry, so I think it’s useful to invoke such history only if we have some mathematics that can be used as a wedge.

Polarization has a reasonably good classical model until we introduce EM fields that are of low enough amplitude that Planck’s constant becomes significant. At such amplitudes, I think any experiment demonstrates the same thing: Planck’s constant is significant. In quantum optics, the effects of Planck’s constant are often called “shot noise”, distinguishing it from thermal noise. As a relatively abstract theory, the mathematics of that can be constructed in terms of Quantum Non-Demolition measurements (for which we could say, “algebraic random fields”, as a close-to-classical-but-with-noncommutativity-added starting point) instead of in the conventional way. I don’t know how this will filter down into the popular literature, but I think it will take a good science writer!

What is this good for? We’ll see, but I started from being dissatisfied with the mathematics of renormalization in QFT, over 20 years ago, which became partly about understanding “the connection between QFT and random fields” (which is the title of the talk I’m giving at U Iowa), which in turn became having something to say about the relationship between QM and CM about three years ago. A year ago, that crystallized into a paper with the unhappy title “The collapse of a quantum state as a signal analytic sleight of hand”, which someone helped me see might be better received with the title “The collapse of a quantum state as a joint probability construction”. Whether it will be well-received is still to be determined, though JPhysA has now had it for almost three months.

April 3rd, 2022 at 8:49 am

To add: “collapse” isn’t just about merging, there’s also a question about what order we collapse the state in. Do we choose

((((A-collapse-B)-collapse-C)-collapse-D)-collapse-E) or

(A-collapse-(B-collapse-C))-collapse-(D-collapse-E) or

(A-collapse-(B-collapse-(C-collapse-(D-collapse-E)))) or which of many others? These are all different on what I think is the natural way to construct a “collapse product”, which is nonassociative (hence I include the brackets) and nonlinear in one of the two measurements. *If* we think of “collapse” as a mathematical operation in an algebraic probability theory instead of as a necessarily temporal and dynamical operation, we can define both A-collapse-B and A-OTOcollapse-B (Opposite Time Order), which introduces even more choices. If we ever discuss the nature of time, that’s gonna be painful. There’s a trickiness about “collapse” that hasn’t been picked apart enough.

April 3rd, 2022 at 7:52 pm

“I hope you can see that ‘we can forego the B measurement and we’re left with the A-collapse state’ is incomprehensible from an empiricist perspective! It makes sense if we insist on quantum state realism, the idea that the quantum state exists completely independently of whether it is *ever* measured, but what justification will we claim for that insistence?”

Experiments in which we have made a second measurement and found consistent results. As I’ve mentioned, I am a realist and do believe the quantum state exists independently. Concretely, if a photon passes a polarizing filter, that photon is polarized in alignment with that filter. If we were to test it, we would invariably find that so.

“My introduction of the axiom of choice suggests a new way in which the mathematics of classical mechanics falls apart under detailed scrutiny.”

Okay, but how? My understanding is that the AoC comes from set theory and basically says, given a collection of sets, it’s always possible to select an item from each set. How does that suggest CM falls apart?

We do agree reality probably isn’t deterministic, but apparently we disagree that chaos is? I rather like the summary due to Lorenz: “Chaos: When the present determines the future, but the approximate present does not approximately determine the future.” Chaos enters our calculations and computations because we necessarily round off the numbers in order to use them. Chaos is our problem, not nature’s. (As I mentioned before, I think we may be running afoul of ontological versus epistemic points of view.)
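A small numerical illustration of that summary, using the logistic map as a stand-in chaotic system (my choice of example, not something from the thread): the rule is fully deterministic, so the same starting value always gives the same orbit, yet a starting value that differs in the tenth decimal place ends up on a completely different orbit within a few dozen steps. The parameter r = 4 and the starting values are arbitrary assumptions.

```python
def logistic_orbit(x0, steps, r=4.0):
    """Iterate the logistic map x -> r * x * (1 - x), a deterministic rule."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs

exact = logistic_orbit(0.2, 100)
repeat = logistic_orbit(0.2, 100)           # same present, same future
nudged = logistic_orbit(0.2 + 1e-10, 100)   # 'approximate present'

gaps = [abs(x - y) for x, y in zip(exact, nudged)]

print("identical reruns:", exact == repeat)
print("gap after 1 step:", gaps[1])
print("largest gap over 100 steps:", max(gaps))
```

The rerun is bit-for-bit identical, which is the "present determines the future" half. The nudged run is the other half: the gap starts near 1e-10 and grows until it is comparable to the size of the values themselves.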

The Don Howard paper is interesting. Yes, there is daylight between Bohr and Heisenberg. (I suppose there would be between any two theorists.) Even so, both seem under the broad umbrella of the anti-realist Copenhagen interpretation. There is a lot more daylight between them and the realists, such as Einstein and Schrödinger. That said, isn’t the mathematical duality between operators and states the difference between the Heisenberg picture and the Schrödinger picture? Or am I confused?

“…the effects of Planck’s constant are often called ‘shot noise’…”

If by “the effects of Planck’s constant” you mean the same thing as “the photon nature of light” then, yes, absolutely, but what does that have to do with what I said about polarization?

“What is this good for? We’ll see,…”

Okay, well, when you figure it out, by all means let me know! Currently, other than it being a very interesting idea, I don’t see how to apply it to what I’m trying to accomplish here. (Which, as I said before, is quite conventional and hopefully a bridge between expert-level thinking as I understand it and those, such as me, who are interested in knowing more. It’s kind of a one-eyed man in the domain of the blind sort of thing.)

“If we ever discuss the nature of time,…”

I’ve posted quite a bit about time. If you want to discuss it, either this post or this post might be the best setting. (Or any of the more recent ones.) Spoiler: I believe time to be fundamental and axiomatic. And it only runs in one direction. 🙂

April 4th, 2022 at 7:59 am

Time to let most of this sit for a while, I think.

FWIW, I think of myself as realist about the world, but my realism about our models of the world is much more qualified. The map is not the territory, the model is not the world, so to speak. If the world is infinite, there is room for very different models, emphasizing such completely different details that they may not be recognizable as models of the same world. I take it that any model we construct is finitely constructed, which we have chosen because of our perception that some finite subsets are more immediately useful to us than other finite subsets, so any given model presents only a finite set of information about what may be an infinite world. The world could have finite Kolmogorov complexity, however, in which case a perfect model is possible and realism about that model would be justified, but I don’t know how we could know that we have reached that blissful state. It’s not that thinking about the world in terms of photons isn’t useful, but there are other ways, some of which only barely acknowledge the idea of photons. On some maps one finds national boundaries, on others they are irrelevancies that are not there at all.

I like your quote from Lorenz and your line that “Chaos is our problem, not nature’s”, but I would take that as mostly support for not being *too* realist about any of our models.

April 4th, 2022 at 9:22 am

It might be worth noting that scientific anti-realism is not necessarily a denial of philosophical realism. The latter being the notion that the real world exists, or, as SF author Philip K. Dick put it, “Reality is that which, when you stop believing in it, doesn’t go away.” Scientific anti-realism, as you say, is the notion that our models don’t correspond directly to reality. Bohr, for instance, absolutely believed in the reality of atoms, but at the same time said, “We must be clear that when it comes to atoms, language can be used only as in poetry.”

I’ve compared scientific anti-realism with Kant’s division between phenomena and noumena (and Kant was also a philosophical realist). We can only ever know about the phenomena we experience, but never about the noumena (things-in-themselves) that produce them.

On the other hand, scientific realism is the belief that science ultimately converges on more and more accurate models along with the belief that an ultimate model, at least in principle, is possible. Even if it’s forever out of our grasp, scientific realism is the idea that we keep reaching for it.

That said, I quite agree that our current models are both incomplete and, yet, very useful in their domains.

Good luck with your talks!

April 4th, 2022 at 7:08 am

[…] The previous post began an exploration of a key conundrum in quantum physics, the question of measurement and the deeper mystery of the divide between quantum and classical mechanics. This post continues the journey, so if you missed that post, you should go read it now. […]

April 7th, 2022 at 7:07 am

[…] the last two posts (Quantum Measurement and Wavefunction Collapse), I’ve been exploring the notorious problem of measurement in […]

April 10th, 2022 at 7:28 am

[…] the last three posts (Quantum Measurement, Wavefunction Collapse, and Quantum Decoherence), I’ve explored one of the key conundrums of […]

April 13th, 2022 at 4:21 pm

[…] the last four posts (Quantum Measurement, Wavefunction Collapse, Quantum Decoherence, and Measurement Specifics), I’ve explored the […]

April 14th, 2022 at 8:03 pm

I wasn’t expecting this to be a five-part series, but this blog does also serve as a kind of lab notebook, and these posts explore my current thinking.

June 7th, 2022 at 1:01 pm

[…] this year I posted a five-part series about the measurement problem in quantum mechanics (see Quantum Measurement, Wavefunction Collapse, Quantum Decoherence, Measurement Specifics, and Objective […]