A single line from a blog post I read got me wondering if maybe (just maybe) the answer to a key quantum question has been figuratively lurking under our noses all along.

Put as simply as possible, the question is this: Why is the realm of the very tiny so different from the larger world? (There’s a cosmological question on the other end involving gravity and the realm of the very vast, but that’s another post.)

Here, the answer just might involve the wavelength of matter.

The line from an otherwise unremarkable blog post stated that quantum interference was due to the wave-nature of matter. Which, indeed, seems to be the case. The interference of light, for example, is a visible physical manifestation that links classical wave behavior to quantum wave behavior.

We can do two-slit experiments with light (and electrons) because their wavelengths are large enough that experimenters can create slits small enough to produce the effect. The wavelength has to be big enough to respond to slits we can actually make. Electron interference experiments use crystal lattices as gratings, which have molecular (if not atomic) spacing.

It got me wondering if the de Broglie wavelength was an important contributor to the Heisenberg Cut. [See What’s the Wavelength?] What if quantum behavior is only possible when an object’s wave-nature is significant?

Here’s de Broglie’s famous equation:

$$\lambda = \frac{h}{p} = \frac{h}{mv}$$

The wavelength (lambda) is Planck’s constant (h) divided by momentum (mass times velocity). Pretty simple.

What if, once an object becomes large enough that its wavelength shrinks to a tiny fraction (a seriously tiny fraction), quantum behavior is lost strictly in virtue of the loss of the object’s wave-nature?

I can definitely see interference going away if the spacing required is sub-atomic. And it’s not hard for an object’s wavelength to be sub-Planck Length, beyond the understanding of any current physics. If quantum behavior generally depends on matter’s wave-nature, that may explain why it only applies to the tiny.

§

One objection that occurs is that the de Broglie wavelength depends on velocity. That suggests an object normally small enough to show quantum behavior loses it if its (observer relative!) velocity is high enough to shrink its wavelength sufficiently. A motionless observer sees a quantum object, but a fast enough passerby sees a classical one?

Maybe, as with mass, it’s only relative to its own frame. That’s why fast-moving massive objects can’t become black holes. An observer sees a big fast spaceship as having high enough mass to collapse into a black hole, but from the spaceship’s point of view, it just has its rest mass.

But the dependence of the matter wave on velocity makes the wavelength undefined at zero. The wavelength approaches infinity as velocity approaches zero, but division by zero itself is undefined (not infinity).
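Spelled out, as a sketch of the limit being described (treating h and m as fixed, positive constants):

$$\lambda(v) = \frac{h}{mv}, \qquad \lim_{v \to 0^{+}} \lambda(v) = +\infty, \qquad \text{while } \lambda(0) \text{ is undefined.}$$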

In any event, your velocity in your own rest frame is always zero. You’re never moving relative to yourself. So, it can’t be just the de Broglie wavelength that determines the Heisenberg Cut. As I posted about in Objective Collapse, I like the Diósi–Penrose objective collapse model that depends on gravity from an object’s mass.

And just the general notion that a single song is entirely swamped in a vast stadium filled with billions upon billions of other singers.

§

To put some concrete numbers to this, consider physics’ modern spherical cow, the 1-gram paperclip. Sitting “motionless” on a desk (we’ll give it a velocity of one micron per second due to vibration), it has a wavelength of:

$$\lambda = \frac{h}{mv} = \frac{6.626\times10^{-34}\ \text{J}\,\text{s}}{(10^{-3}\ \text{kg})(10^{-6}\ \text{m/s})} \approx 6.626\times10^{-25}\ \text{m}$$

In comparison, the charge radius of a proton is a whopping 8.414×10⁻¹⁶ m. The paperclip’s wavelength is nine orders of magnitude smaller than the radius of a proton. (But it’s ten orders of magnitude larger than the Planck Length.)
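For anyone who wants to check the paperclip arithmetic, here’s a minimal Python sketch. The function and constant names are my own inventions for illustration, and the Planck Length value is one I’m supplying (it isn’t quoted above); the mass, speed, and proton radius are the ones from the text.

```python
import math

PLANCK_CONSTANT = 6.62607015e-34   # J*s (exact SI value)
PROTON_CHARGE_RADIUS = 8.414e-16   # m, the figure quoted in the post
PLANCK_LENGTH = 1.616255e-35       # m, assumed value (not quoted in the post)


def de_broglie_wavelength(mass_kg, speed_m_per_s):
    """Non-relativistic de Broglie wavelength: lambda = h / (m * v)."""
    return PLANCK_CONSTANT / (mass_kg * speed_m_per_s)


def orders_of_magnitude(a, b):
    """How many powers of ten separate a from b (positive when a > b)."""
    return math.log10(a / b)


# 1 gram moving at 1 micron per second
paperclip = de_broglie_wavelength(1e-3, 1e-6)

print(f"paperclip wavelength: {paperclip:.3e} m")
print(f"orders below proton radius: "
      f"{orders_of_magnitude(PROTON_CHARGE_RADIUS, paperclip):.1f}")
print(f"orders above Planck Length: "
      f"{orders_of_magnitude(paperclip, PLANCK_LENGTH):.1f}")
```

Changing the assumed vibration speed by a factor of ten moves the result by exactly one order of magnitude, so the comparison is only as good as that guess.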

Bottom line, to the extent superposition and interference depend on the wave-nature of matter, the wavelengths of large objects put such effects in a domain much smaller than protons. It can easily put them sub-Planck Length.

In contrast, an electron has a mass of 9.1094×10⁻³¹ kg. Its velocity depends on the voltage used to accelerate it. Old style TVs with CRTs used very high voltage, and electrons moved as fast as 30,000 km/s. Old style vacuum tubes used lower voltages and speeds could be as low as 1000 km/s. Using the slower electrons (which would have larger wavelengths):

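$$\lambda = \frac{h}{mv} = \frac{6.626\times10^{-34}\ \text{J}\,\text{s}}{(9.1094\times10^{-31}\ \text{kg})(1.0\times10^{6}\ \text{m/s})} \approx 7.274\times10^{-10}\ \text{m}$$

Which is a wavelength just under a nanometer (10⁻⁹ m). Divide that by 30 for high-speed electrons (2.425×10⁻¹¹ m).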
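Since 30,000 km/s is about a tenth of the speed of light, it seemed worth checking whether the simple h/(mv) formula is still good enough there. This is a rough sketch, not a rigorous treatment: the `wavelength` helper and its `relativistic` flag are hypothetical names of mine, and the relativistic branch just swaps in momentum p = γmv.

```python
import math

PLANCK_CONSTANT = 6.62607015e-34  # J*s
ELECTRON_MASS = 9.1094e-31        # kg, as quoted in the post
SPEED_OF_LIGHT = 2.99792458e8     # m/s


def wavelength(mass_kg, speed, relativistic=False):
    """de Broglie wavelength h / p, with p = m*v or p = gamma*m*v."""
    momentum = mass_kg * speed
    if relativistic:
        gamma = 1.0 / math.sqrt(1.0 - (speed / SPEED_OF_LIGHT) ** 2)
        momentum *= gamma
    return PLANCK_CONSTANT / momentum


slow = wavelength(ELECTRON_MASS, 1.0e6)                       # 1000 km/s
fast = wavelength(ELECTRON_MASS, 3.0e7)                       # 30,000 km/s
fast_rel = wavelength(ELECTRON_MASS, 3.0e7, relativistic=True)

print(f"slow electron:         {slow:.4e} m")
print(f"fast electron:         {fast:.4e} m")
print(f"fast, with gamma:      {fast_rel:.4e} m")
print(f"relativistic shrinkage: {100 * (1 - fast_rel / fast):.2f}%")
```

The correction at that speed comes out to roughly half a percent, so the simple formula is fine for the ballpark figures here.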

That short wavelength, by the way, is why electron microscopes work. High-speed electrons have the wavelength to resolve nanostructures. Compare that with visible light, which has a wavelength of roughly 380–750 nanometers.

§

So, quantum behavior — also called quantum wave behavior — being linked to the basic wave-nature of matter might be the obvious answer we’ve missed all along. Quantum mechanics is quintessentially a wave mechanics.

A big question has always been: Interference demonstrates the wavefunction has a definite reality, but what actually is it? What’s waving? Perhaps the answer is simply: matter.

This seems almost self-evident in the two-slit experiment. The classical explanation depending on the wave-nature of light gives the same answer as the quantum explanation depending on the interference of probability waves. The wavelength is the same.

Maybe that’s just generally true. You have to be small enough for your wave-nature to be a real part of your identity in order to be quantum.

§ §

In the last quantum-related post, I wrote about why qubits are so much more powerful than classical computing bits. Basically, a bit has only two states, but a qubit has a two-fold uncountable infinity of states.

Sabine Hossenfelder has a recent video that echoes some of what I wrote and takes it a step further. She explains that it’s not this two-fold infinity of individual qubits that makes quantum computing so different from classical computing. It’s the ability of qubits to entangle.

It’s (as is usually the case) a good video:

She’s right that a single qubit, despite its vastly larger degrees of freedom, isn’t worth much. One could accomplish the same thing with two analog bits. But quantum objects can entangle their states, and this allows quantum algorithms to do things classical algorithms can’t.

A decent analogy for QC is of a mathematical “landscape” where “water” flows downhill to the most probable answer. Except the water is probability and it flows uphill. We’re interested in the most probable answer.

§

I also liked what Hossenfelder said about superposition and matter being in two places at once. Superposition is one of the distinctive quantum behaviors, but since we can never actually observe it, we only understand it through inference. I think it’s right to recognize that what superposition of objects really means in physical terms is an open question.

§ §

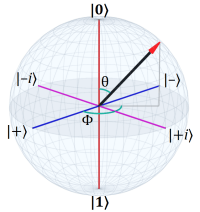

Speaking of that last quantum-related post, it occurred to me that the Bloch sphere is also a good demonstration of why global phase doesn’t matter but the relative phase between parts of a superposition does.

The short version is that, at the North and South poles, your longitude is just as irrelevant as a quantum system’s global phase, and for exactly the same reason.

A longer version is that, in terms of the Bloch sphere, the phase of the system is the “longitude” of the vector in the sphere. That longitude comes about in consequence of the superposition between states. The interference of the two complex coefficients results in that phase. But in the measured states, |0⟩ and |1⟩, that phase degenerates to irrelevancy.

Metaphorically, it doesn’t matter which direction you face at the North pole, you’re always looking exactly south (and vice versa at the South pole).
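The longitude picture can be checked numerically. Here’s a small Python sketch, using the standard mapping from a normalized state α|0⟩ + β|1⟩ to a point on the Bloch sphere; the function names (`bloch_vector`, `with_phases`, `close`) are my own, made up for this example.

```python
import cmath
import math


def bloch_vector(alpha, beta):
    """Map a normalized qubit state alpha|0> + beta|1> to (x, y, z)."""
    overlap = complex(alpha).conjugate() * complex(beta)
    return (2 * overlap.real, 2 * overlap.imag,
            abs(alpha) ** 2 - abs(beta) ** 2)


def with_phases(alpha, beta, global_phase=0.0, relative_phase=0.0):
    """Apply a global phase to both coefficients, a relative phase to beta only."""
    g = cmath.exp(1j * global_phase)
    r = cmath.exp(1j * relative_phase)
    return g * alpha, g * r * beta


def close(u, v, tol=1e-12):
    """True when two 3-vectors agree to within tol in every component."""
    return all(abs(a - b) < tol for a, b in zip(u, v))


# An equal superposition sits on the equator, at longitude 0.
alpha, beta = 1 / math.sqrt(2), 1 / math.sqrt(2)
equator = bloch_vector(alpha, beta)

# A global phase leaves the point exactly where it is...
same_point = bloch_vector(*with_phases(alpha, beta, global_phase=1.234))

# ...but a relative phase of 90 degrees moves it a quarter turn in longitude.
moved = bloch_vector(*with_phases(alpha, beta, relative_phase=math.pi / 2))

# At the North pole (beta = 0) no phase of any kind moves the point.
pole = bloch_vector(*with_phases(1.0, 0.0, global_phase=2.0, relative_phase=0.7))

print(equator, same_point, moved, pole)
```

The global phase cancels in the product of one coefficient with the conjugate of the other, which is all the sphere ever sees. At the poles that product is zero, so there is nothing left for any phase to act on.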

§ §

Matter waves? So, wave back!

Stay safe, my friends! Go forth and spread beauty and light.

∇

June 22nd, 2022 at 6:25 pm

That part about global phase might not have been as clear as I’d have liked. Given a superposition in a two-state system (a qubit):

$$|\psi\rangle = \alpha|0\rangle + \beta|1\rangle$$

The coefficients α and β are complex numbers with a phase. They interact to control the “longitude” of the state vector in the Bloch sphere (and, of course, its latitude, but it’s the longitude that’s the phase of the state).

A measurement reduces the vector to |0⟩ or |1⟩ and the longitude (the phase) is lost. It’s only when combined with another qubit, or a “gate” operation, that the phase matters.

November 20th, 2022 at 6:19 pm

A simple way to understand global phase in terms of the Bloch sphere is that when the angle θ is 0° or 180° then the angle φ is irrelevant.

Exactly the way longitude is irrelevant at the North and South poles.

June 22nd, 2022 at 6:36 pm

I finally got around to finding out how to deal with glancing collisions during a “moving balls” simulation. In my previous attempts, I treated all collisions as head-on, which made the momentum transfer easy to calculate. I read a paper about simulating moving pool balls and learned how the vectors actually interact. I was close but my guesses were making it much more complicated than it turned out to be. Even once I learned, my first approach to solving it made it more complicated than it needed to be.
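For the curious, here is roughly what the glancing-collision math looks like. To be clear, this is not my simulation code, just a minimal textbook-style sketch under simplifying assumptions: two equal-mass, frictionless, perfectly elastic discs in 2D. The function name `collide_equal_masses` is made up for this example.

```python
import math


def collide_equal_masses(p1, v1, p2, v2):
    """Post-collision velocities for two equal-mass, frictionless discs.

    p1, p2 are centre positions at the moment of contact and v1, v2 are
    velocities, all given as (x, y) tuples.
    """
    # Unit vector along the line joining the centres (the collision normal).
    dx, dy = p2[0] - p1[0], p2[1] - p1[1]
    dist = math.hypot(dx, dy)
    if dist == 0:
        return v1, v2  # coincident centres: the normal is undefined
    nx, ny = dx / dist, dy / dist

    # Closing speed along the normal; positive means the discs are approaching.
    closing = (v1[0] - v2[0]) * nx + (v1[1] - v2[1]) * ny
    if closing <= 0:
        return v1, v2  # already separating: leave the velocities alone

    # Equal masses swap their normal components; tangential parts are untouched.
    new_v1 = (v1[0] - closing * nx, v1[1] - closing * ny)
    new_v2 = (v2[0] + closing * nx, v2[1] + closing * ny)
    return new_v1, new_v2


# Head-on: the moving disc stops dead and the resting one takes its velocity.
head_on = collide_equal_masses((0, 0), (1, 0), (1, 0), (0, 0))

# Glancing: contact at 45 degrees sends the two off at right angles.
glancing = collide_equal_masses((0, 0), (1, 0), (1, 1), (0, 0))

print(head_on)
print(glancing)
```

Only the velocity component along the line between the centres gets exchanged, which is why treating every hit as head-on gives the wrong answer for anything off-axis. The "already separating" check is one common guard in simulations like this.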

It was a fun mini project. I may blog about it someday, but here’s a sample for now:

There’s still a bug if you look closely. Sometimes balls that pass too closely fall into each other’s orbits and spiral in on each other. A consequence of a virtual reality where the balls have no actual substance and coincidence is only prevented in software.

June 23rd, 2022 at 7:06 pm

Also… a 3D version!

June 23rd, 2022 at 4:07 pm

Put it this way: When the wave-particle duality actually matters is when you get quantum behavior.

July 11th, 2022 at 10:27 am

And given that the Schrödinger equation derives, in good part, from de Broglie’s matter-wave duality, I wonder all the more to what extent matter waves, specifically their wavelengths, are significant.

June 23rd, 2022 at 6:57 pm

“The best-laid schemes o’ mice an’ men; Gang aft agley,…” I meant to post something for Juneteenth (and didn’t), and I meant to post something for the Solstice (and didn’t). End of the month is retirement anniversary (9 years), and beginning of next month is blog anniversary (11 years). Will I have the energy and interest to commemorate those milestones or, given 9 and 11 aren’t “special” to us ten-fingered chimps, just blow them off and keep on keeping on? I think we all know the answer, but I might surprise us.

June 23rd, 2022 at 7:04 pm

6 3 4 LYIEC QAMBC FCWXC QKWHG QAXBC WCWAR RJWXY LLHGQ AHGQA

July 7th, 2022 at 8:12 am

WordPress shows you search strings people have used to find your posts, and I just noticed among mine: “sean carroll is an idiot”

I’m not sure I’d go that far, but I do think his faith in the MWI is kinda stupid.

I used to have a lot of regard for the guy, but over the years that declined, and I mostly see him now as I do Michio Kaku, as someone who sold his integrity (or lost his mind) to being a “popular” science writer. I have zero regard for Kaku, and I’m increasingly seeing Carroll likewise.