In the last four posts (Quantum Measurement, Wavefunction Collapse, Quantum Decoherence, and Measurement Specifics), I’ve explored the conundrum of measurement in quantum mechanics. As always, you should read those before you read this.

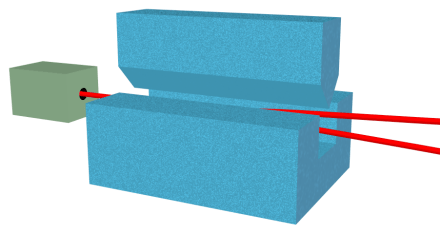

Those posts covered a lot of ground, so here I want to summarize and wrap things up. The bottom line is that we use objects with classical properties to observe objects with quantum properties. Our (classical) detectors are like mousetraps with hair-triggers, using stored energy to amplify a quantum interaction to classical levels.

Also, I never got around to objective collapse. Or spin experiments.

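The basic motivation for objective collapse theories is to fold the nonlinear jump of the state vector into the dynamics themselves. The mathematics is extended so that the Schrödinger equation’s linear evolution still holds to an excellent approximation for small systems, while collapse emerges naturally during measurement.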

The objective part is that the wavefunction collapses (localizes in position) for concrete reasons. Environmental elements, such as time or gravity, are key factors in why it does so. Large objects collapse, evolve briefly, and re-collapse constantly and rapidly. Quantum systems that interact with large objects collapse quickly under the large object’s influence.

Spontaneous collapse theories are a subset with an emphasis on time. Wavefunctions collapse spontaneously after some period of time (a little bit like a half-life decay). The collapse rate also depends on the system: in the GRW model, for instance, it grows with the number of particles involved, so tiny isolated systems collapse only rarely. (All these theories, of course, must match what we observe experimentally. They need to account for why tiny, isolated systems can be quantum but why large systems can’t.)
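
To put rough numbers on the half-life analogy, here is a minimal sketch using the per-particle collapse rate originally proposed for GRW, about 10⁻¹⁶ per second. Treat that rate as an assumed parameter (it is a free parameter of the theory), and the particle counts as loose illustrations. The key idea is that collapses add up across the particles of a solid object, so the object as a whole collapses at roughly N times the single-particle rate.

```python
import math

# Assumed GRW parameter: each particle spontaneously localizes about once
# every 1e16 seconds. Other proposals use different values.
LAMBDA_GRW = 1e-16          # collapses per particle per second (assumed)
SECONDS_PER_YEAR = 3.156e7


def mean_collapse_time(n_particles: float, rate: float = LAMBDA_GRW) -> float:
    """Mean wait (seconds) until the first collapse among n particles whose
    positions are correlated, as in a solid object.

    Each particle's collapses form an independent Poisson process, so the
    object as a whole collapses at the summed rate n * rate.
    """
    return 1.0 / (n_particles * rate)


def collapse_half_life(n_particles: float, rate: float = LAMBDA_GRW) -> float:
    """Time after which the superposition survives uncollapsed with probability 1/2."""
    return math.log(2) / (n_particles * rate)


def survival_probability(t: float, n_particles: float, rate: float = LAMBDA_GRW) -> float:
    """Probability that no collapse has happened after t seconds."""
    return math.exp(-n_particles * rate * t)


# Rough particle counts, for illustration only.
for label, n in [("single particle", 1), ("micron dust grain", 1e12), ("paperclip", 1e23)]:
    t = mean_collapse_time(n)
    print(f"{label:18s} N = {n:.0e}   mean collapse time = {t:.1e} s "
          f"({t / SECONDS_PER_YEAR:.1e} years)")
```

Under these assumptions a lone particle waits hundreds of millions of years on average, while a paperclip-sized object collapses in about a ten-millionth of a second.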

I like the basic idea of objective collapse theories. I think they help explain how the classical world emerges from the quantum one. I lean more towards ideas about object mass and gravity (plus the environment) than towards ideas involving time. I like the Diósi–Penrose model. Its link with gravity (the “missing link” of quantum) intrigues me. (The notion of a wavefunction “half-life” is attractive, though. Uncertainty adds some time fuzziness in any event.)
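
The Diósi–Penrose idea can be sketched as an order-of-magnitude estimate: a superposition of two mass distributions lasts roughly τ ≈ ħ/E_G, where E_G is the gravitational self-energy of the difference between them. The sketch below assumes a uniform sphere displaced by about its own diameter and uses E_G ≈ GM²/R as a rough scale (the exact prefactor depends on geometry). The dust-grain density is an assumed value, and treating a proton as a uniform sphere is a crude simplification.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
G = 6.67430e-11          # Newton's gravitational constant, m^3 kg^-1 s^-2


def sphere_mass(radius_m: float, density_kg_m3: float) -> float:
    """Mass of a uniform sphere."""
    return density_kg_m3 * 4.0 / 3.0 * math.pi * radius_m ** 3


def gravitational_energy_scale(mass_kg: float, radius_m: float) -> float:
    """Rough E_G for a uniform sphere superposed at two positions separated
    by at least its diameter: E_G ~ G M^2 / R (order of magnitude only)."""
    return G * mass_kg ** 2 / radius_m


def dp_collapse_time(mass_kg: float, radius_m: float) -> float:
    """Diosi-Penrose lifetime estimate tau ~ hbar / E_G, in seconds."""
    return HBAR / gravitational_energy_scale(mass_kg, radius_m)


examples = {
    # Treating a proton as a uniform sphere is a crude simplification.
    "proton": (1.67262192e-27, 0.84e-15),
    # Hypothetical dust grain: 1 micron radius, density 2000 kg/m^3 (assumed).
    "dust grain": (sphere_mass(1e-6, 2000.0), 1e-6),
}

for name, (mass, radius) in examples.items():
    print(f"{name:10s} tau ~ {dp_collapse_time(mass, radius):.1e} s")
```

With these rough inputs the proton’s superposition would last on the order of ten million years, while the micron-sized grain collapses in a few hundredths of a second. Mass does almost all the work, since E_G grows as M².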

These models are not without their problems. They are criticized for not conserving energy (because of noise), but better models may fix that. (And I wonder if a gravity-based model might be less prone to noise.) And there is still the issue of the nonlocality of wavefunction collapse.

Bottom line, though, I think the notion of objective collapse is a step in the right direction. (And I think gravity from the mass of objects is an important factor.) It explains our experience of a classical world — reality observes, or at least collapses, itself.

§

I have long wondered why the measurement problem isn’t just a matter of adding some kind of math to the Schrödinger equation. That seems obvious somehow. The Schrödinger equation describes the evolution of a quantum state much as a parabola describes the arc of a baseball. Both describe an uninterrupted process.

The equation for a parabola describes only the flight of a baseball. It doesn’t account for it hitting the ground or a wall (or a bird) — terms have to be added to the parabolic equation to define those interactions. Likewise, it seems the Schrödinger equation needs terms to describe the interaction of a measurement. In fact, applying an operator is the mathematical equivalent of making a measurement, so I’ve never been entirely clear why that doesn’t make measurement a non-problem. At least in terms of the non-linear vector jump.
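
It helps to be precise about where the nonlinearity enters. A projection operator by itself is linear; what the measurement postulate adds is renormalizing the projected state (plus the random choice of which projector applies). A minimal two-level sketch, with hypothetical helper names, compares ordinary unitary evolution with the project-and-renormalize update for the outcome “up”:

```python
import math


def add(u, v):
    return [a + b for a, b in zip(u, v)]


def close(u, v, tol=1e-12):
    return all(abs(a - b) < tol for a, b in zip(u, v))


def evolve(unitary, psi):
    """Schrodinger-style step: a 2x2 unitary matrix acting on a 2-component state."""
    return [unitary[0][0] * psi[0] + unitary[0][1] * psi[1],
            unitary[1][0] * psi[0] + unitary[1][1] * psi[1]]


def collapse_to_up(psi):
    """Measurement update for outcome 'up': project onto |up>, then renormalize."""
    projected = [psi[0], 0j]
    norm = math.sqrt(sum(abs(a) ** 2 for a in projected))
    return [a / norm for a in projected]


theta = 0.3
U = [[math.cos(theta), -math.sin(theta)],   # a real rotation matrix is unitary
     [math.sin(theta), math.cos(theta)]]

a = [1 + 0j, 1 + 0j]
b = [2 + 0j, 0.5 + 0j]

# Unitary evolution is linear: evolving a sum equals summing the evolutions.
print("unitary linear? ", close(evolve(U, add(a, b)), add(evolve(U, a), evolve(U, b))))
# Project-and-renormalize is not: a and b each map to [1, 0], and so does
# a + b, rather than the sum [2, 0].
print("collapse linear?", close(collapse_to_up(add(a, b)), add(collapse_to_up(a), collapse_to_up(b))))
```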

Note that, in many kinds of measurement, the quantum system being measured disappears. For example, when an electron absorbs a photon, the photon vanishes. Its wavefunction vector doesn’t just jump — it goes away entirely!

Sometimes I wonder if the measurement problem isn’t something of a misunderstanding. Why would we expect the Schrödinger equation to evolve linearly when its system encounters a much larger object, one presumably governed by its own Schrödinger equation? Why don’t we expect that sudden interaction to have a sudden effect on the wave states? Certainly a huge effect on the quantum system (assuming it even survives the interaction). Possibly an undetectable one on the large system’s quantum state, although, as discussed last time, its energy state and physical configuration can shift suddenly and massively.

This still leaves the nonlocal spooky action at a distance of the wavefunction vanishing everywhere, the shift from dispersed wave to point-like particle, but entanglement experiments seem to make quantum nonlocality something we may have to just accept.

§ §

In the last post, I didn’t talk about spin experiments. Many modern ones involve single particles, so the noise issues I discussed last time apply. A common example is detecting photons that pass — or don’t pass — through a polarizing filter. There is, firstly, the issue of knowing when a photon is present, and secondly, the problem of false negatives and positives due to noise.

[I believe it’s more accurate to visualize the laser as generating a continuous EM field on the borderline of having one quantum (photon) of light energy per some length of beam. Variation and uncertainty randomize exactly when there is enough energy to extract one photon. The mystery, as always, is why a particular electron is “chosen” to extract the photon.]
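
One standard way to express that randomness: for ideal coherent (laser) light, the number of photons found in a given stretch of beam follows Poisson statistics. A minimal sketch, assuming an ideal coherent state whose mean photon number per segment is an illustrative parameter:

```python
import math


def photon_number_probability(n: int, mean: float) -> float:
    """P(n photons) in one beam segment of an ideal coherent (laser) state.

    Coherent light has Poisson photon statistics with the given mean.
    """
    return math.exp(-mean) * mean ** n / math.factorial(n)


mean = 1.0  # "borderline" case: on average one photon per segment (assumed)
for n in range(5):
    print(f"P({n} photons) = {photon_number_probability(n, mean):.4f}")

p_empty = photon_number_probability(0, mean)
p_multi = 1.0 - p_empty - photon_number_probability(1, mean)
# With mean 1, about 37% of segments are empty and about 26% hold two or more.
print(f"empty: {p_empty:.2f}, two or more: {p_multi:.2f}")
```

Even when the beam averages exactly one photon per segment, only about 37% of segments contain exactly one.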

Some experiments use large numbers of photons (or other “particles”) to build statistics that rise above the noise. Geiger counters, for instance, depend on lots of events to signal the presence of significant levels of radiation.
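
Why large numbers help comes down to counting statistics: the signal grows in proportion to the number of trials N, while the Poisson fluctuation of the counts grows only as √N. A sketch with a hypothetical detector whose per-trial signal and dark-count probabilities are made-up illustrative values:

```python
import math


def signal_to_noise(n_trials: int, p_signal: float, p_dark: float) -> float:
    """Expected excess counts over background, divided by the Poisson spread
    of the total count. Assumes independent trials and a known, subtracted
    dark-count rate."""
    signal = n_trials * p_signal
    noise = math.sqrt(n_trials * (p_signal + p_dark))
    return signal / noise


# Hypothetical detector: 1% chance per trial of a real click, 5% chance of a
# dark (noise) click. Single trials are hopeless; large N is not.
for n in (1, 100, 10_000, 1_000_000):
    print(f"N = {n:>9,}   SNR = {signal_to_noise(n, 0.01, 0.05):8.2f}")
```

Because the ratio grows as √N, each hundredfold increase in trials buys a tenfold better signal-to-noise ratio.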

Large numbers may also bring out patterns single particles don’t demonstrate. While individual particles do interfere with themselves in the two-slit experiment, it’s only the pattern that builds up over time that demonstrates the interference behavior. And many entanglement experiments need lots of data points to demonstrate the quantum correlations of entangled particles.
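
The build-up can be simulated by drawing individual detection events from a two-slit probability density. This is a sketch under simplifying assumptions: an idealized cos² fringe pattern under a sinc² single-slit envelope, in arbitrary length units. Each hit lands at a single random spot, and the fringes only show up in the tally.

```python
import math
import random


def two_slit_density(x: float, fringe_spacing: float = 1.0, envelope_width: float = 5.0) -> float:
    """Unnormalized probability density of a detection at screen position x.

    cos^2 supplies the two-slit fringes; a sinc^2 envelope stands in for
    single-slit diffraction. Lengths are in arbitrary units (assumed values).
    The maximum value is 1, at x = 0.
    """
    fringes = math.cos(math.pi * x / fringe_spacing) ** 2
    u = math.pi * x / envelope_width
    envelope = 1.0 if u == 0 else (math.sin(u) / u) ** 2
    return fringes * envelope


def detect_particles(n: int, half_width: float = 5.0, seed: int = 1) -> list:
    """Generate n single detection events by rejection sampling the density."""
    rng = random.Random(seed)
    hits = []
    while len(hits) < n:
        x = rng.uniform(-half_width, half_width)
        if rng.random() < two_slit_density(x):
            hits.append(x)
    return hits


hits = detect_particles(5000)
print("first five hits:", [round(x, 2) for x in hits[:5]])   # individually, no pattern
near_bright = sum(1 for x in hits if abs(x) < 0.1)            # central bright fringe
near_dark = sum(1 for x in hits if abs(x - 0.5) < 0.1)        # first dark fringe
print("near bright fringe:", near_bright, " near dark fringe:", near_dark)
```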

The original Stern-Gerlach spin experiment is worth mentioning. It used silver atoms, which have spin because of the unpaired electron in the outer shell. Results depended on lots of silver atoms accumulating on a glass screen — enough atoms to observably block transmission of light (and silver is nicely opaque).
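
To get a feel for the scale of the effect, here is a back-of-the-envelope estimate of the beam splitting. It assumes the force is ±μ_B·dB/dz (one unpaired electron, g ≈ 2), constant acceleration inside the magnet, and no drift afterward. The field gradient, magnet length, and atom speed are illustrative laboratory-scale assumptions, not the historical specifications.

```python
MU_B = 9.2740100783e-24      # Bohr magneton, J/T
AMU = 1.66053906660e-27      # atomic mass unit, kg
SILVER_MASS = 107.87 * AMU   # average atomic mass of silver, kg


def deflection(gradient_t_per_m: float, magnet_length_m: float,
               speed_m_per_s: float, spin_sign: int = +1) -> float:
    """Transverse displacement (m) at the magnet exit for one spin component.

    Force is spin_sign * mu_B * dB/dz; acceleration is constant while the
    atom is inside the magnet; drift after the magnet is ignored.
    """
    accel = spin_sign * MU_B * gradient_t_per_m / SILVER_MASS
    t = magnet_length_m / speed_m_per_s
    return 0.5 * accel * t ** 2


# Assumed values: 1000 T/m gradient, 3.5 cm magnet, 500 m/s atoms.
grad, length, speed = 1.0e3, 0.035, 500.0
up = deflection(grad, length, speed, +1)
down = deflection(grad, length, speed, -1)
print(f"beam separation at magnet exit: {1e3 * (up - down):.3f} mm")
```

With these inputs the two beams separate by a fraction of a millimeter, which is why so many atoms had to pile up before the split became visible.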

It’s a striking experiment because it creates a very concrete classical record of a quantum behavior. A big part of the amplification here lies in using lots of silver atoms to build up the record over time. Another aspect lies in the energy of the magnetic field; that’s where the actual measurement is made.

There is something of a question of exactly when the silver atoms are localized. Is it upon passing through the magnetic field, or not until they splat against the glass screen? I have seen accounts that view the two possible flight paths as superpositions of up and down measurements until the atoms strike the screen. This is by analogy with beam-splitter experiments where the photon goes one of two ways. (Two-slit experiments also put the two paths in superposition.)
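
Whichever view of localization is right, the statistics come out the same. For an atom whose spin is tilted by an angle θ from the field axis (say, prepared by an earlier magnet), the Born rule gives P(up) = cos²(θ/2) and P(down) = sin²(θ/2). A sketch that sends atoms through one at a time; the function names and seed are illustrative:

```python
import math
import random


def up_probability(theta: float) -> float:
    """Born rule: P(up) = cos^2(theta / 2) for a spin tilted theta from the field axis."""
    return math.cos(theta / 2) ** 2


def run_stern_gerlach(n_atoms: int, theta: float, seed: int = 0) -> tuple:
    """Send n_atoms one at a time; each lands 'up' or 'down' at random."""
    rng = random.Random(seed)
    ups = sum(1 for _ in range(n_atoms) if rng.random() < up_probability(theta))
    return ups, n_atoms - ups


for degrees in (0, 60, 90, 180):
    ups, downs = run_stern_gerlach(10_000, math.radians(degrees))
    print(f"tilt {degrees:3d} deg: {ups:5d} up, {downs:5d} down")
```

Note that this models only the outcome statistics. It says nothing about whether the “choice” happens in the field or at the screen.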

I think the atoms get localized at least in the magnetic field. They’re already bathed in the environment and may have localized even before they interact with the magnet. The interaction with the magnetic field aligns their spins and deflects their path, which certainly changes their wavefunction. There is a question of how rapidly this occurs — is there a quantum jump (a wavefunction collapse) or some sort of progressive interaction? Does the path kink or bend?

The snap of a kink wouldn’t surprise me, but regardless, I think the magnetic field “measures” the silver atoms, which localizes them. The interaction depends on the location of the atom as it passes through the field, which collapses its position. From then on, it’s a “classical” silver atom flying through the air and colliding with the glass screen.

§ §

To wrap things up:

There is a wave/particle duality to matter. It only emerges at small (quantum) scales. It’s not apparent at large (classical) scales. Quantum systems do have wave behavior, but it’s not like classical wave behavior. In particular, what interferes is different: quantum systems combine probability amplitudes (whose squared magnitudes give probabilities), while classical waves combine physical displacements that carry energy. In fact, a key difference with quantum systems is that they are probabilistic at their core (via the Born rule). Classical systems are fundamentally deterministic.
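
The difference between adding amplitudes and adding probabilities is easy to see numerically. A sketch with two equal-magnitude paths whose relative phase varies across a screen; the output is a relative detection rate at one screen point, and the phase values are illustrative:

```python
import cmath
import math


def path_amplitudes(phase: float):
    """Two equal-magnitude amplitudes with relative phase `phase` (radians)."""
    psi1 = 1 / math.sqrt(2)
    psi2 = cmath.exp(1j * phase) / math.sqrt(2)
    return psi1, psi2


def add_amplitudes(phase: float) -> float:
    """Quantum rule: add amplitudes first, then take the squared magnitude."""
    psi1, psi2 = path_amplitudes(phase)
    return abs(psi1 + psi2) ** 2


def add_probabilities(phase: float) -> float:
    """No-interference rule: add the squared magnitudes of each path separately."""
    psi1, psi2 = path_amplitudes(phase)
    return abs(psi1) ** 2 + abs(psi2) ** 2


for degrees in (0, 90, 180):
    phi = math.radians(degrees)
    print(f"phase {degrees:3d} deg: amplitudes -> {add_amplitudes(phi):.3f}, "
          f"probabilities -> {add_probabilities(phi):.3f}")
```

Adding amplitudes swings between double and zero as the phase changes. Adding probabilities stays flat, which is what you see once the paths are distinguishable.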

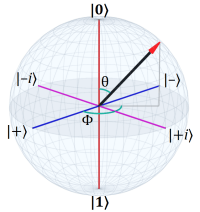

The measurement problem is the tension between the linear deterministic evolution of the quantum state and the nonlinear probabilistic jump of “collapse” (or “reduction”) to a measurement state. The former is a strong article of faith while the latter is so far without a solid explanation. (Or even a nearly universally accepted one.)

There are three distinct problems: Firstly, the nonlinear jump from evolving state to measured state. Secondly, the “spooky” nonlocal vanishing of the wavefunction everywhere instantly. Thirdly, the apparent randomness in determining which “particles” interact. The urgency of the first two depends on your ontological vs epistemic view of the wavefunction. (And interference effects lean hard in the direction of ontological.) The third one applies in all cases. It might be axiomatic that reality is somewhat random.

I don’t worry about wavefunction collapse. I think about it, but I don’t fret the disconnect between QM linearity and classical measurement. I think it’s very possible the wavefunction is just our Ptolemaic view of reality — almost right, enough to work very well, but missing a key insight. I don’t think the Schrödinger equation is the last word, just a great approximation, so the difficulties don’t bother me. I think solving the deeper quantum mysteries will allow us to understand measurement.

I think those deeper mysteries involve quantum superposition, quantum interference, and quantum entanglement. Because of its connection with the wave-like side of things, interference strikes me as especially interesting. But understanding the limits of superposition would be very helpful, too. As just mentioned, there are also the mysteries of apparent randomness (why that electron?) and nonlocality (which is an aspect of entanglement). And, of course, what exactly is the wavefunction?

Ultimately, I do think there is a Heisenberg Cut where classical behavior emerges and quantum behavior is swamped or averaged out. I think large objects decohere in the noise of 10²³ singers. And perhaps objectively due to mass and the gravity gradient it creates.
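
A standard way to quantify that swamping is decoherence arithmetic. Suppose each of N environmental particles ends up in slightly different states depending on which branch the system is in, with overlap magnitude c < 1 per particle. Then the system’s interference term is multiplied by c^N. A sketch assuming independent particles and an illustrative c very close to 1, computed in logarithms because c^N underflows:

```python
import math


def log10_coherence(overlap_per_particle: float, n_particles: float) -> float:
    """log10 of the surviving interference term, |<E0|E1>| = c**N.

    Assumes each of N environmental particles independently ends up in
    states with overlap c < 1 depending on which branch the system is in.
    Working in logs avoids floating-point underflow for huge N.
    """
    return n_particles * math.log10(overlap_per_particle)


c = 0.999999  # each particle barely "notices" the system (assumed value)
for n in (1, 1e3, 1e6, 1e9, 1e23):
    print(f"N = {n:.0e}: coherence ~ 10^({log10_coherence(c, n):.3g})")
```

Even when each particle carries away almost no information, 10²³ of them drive the interference term to effectively zero.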

The big conflict between QM and GR suggests that at least one, if not both, is significantly missing the mark. It’s quantized vs smooth; linear vs nonlinear; background-dependent vs background-independent (in GR, spacetime is the background). (That GR is a nonlinear physical theory seems to point in its favor.)

§

So, bottom line, what do I think “measurement” and “collapse” are?

I can only guess: “Particles” in flight are single-quantum vibrations in the relevant particle field. Since the single quantum of energy is spread out in a wave, the field vibrations in any one area are very much sub-quantum. I assume the wave moves at lightspeed (or at whatever speed the particle travels). At some spacetime point along the way, nature decrees an interaction. All the energy related to the “particle” instantly “drains” into a point-like interaction with another field, either starting a new wave or affecting an existing one.

However, this just equates “drains” with “collapses”, so it still invokes nonlocal magic. And in “nature decrees” it invokes the random selection magic. We do seem stuck with a (quantumly) nonlocal and possibly genuinely random universe. I’ve always been fine with that — prefer it, even — so I’d be willing to take them as axiomatic. (Doesn’t mean we stop investigating. They might not be.)

But bottom line, I think measurement is a mousetrap. The detector is a coiled spring with a hair trigger. Along comes a quantum mouse, and… snap!

§ §

And on that note, it’s time to move on to other things! (Not that there isn’t a great deal more to say, details to fill in, topics to revisit, elaborations to unpack. Like all good rabbit holes, this one could keep Alice exploring forever. But I’m ready for a change of pace; I’m sure you are, too!)

Stay objective, my friends! Go forth and spread beauty and light.

∇

April 13th, 2022 at 4:26 pm

Have you heard the latest buzz? The experimental mass of the W boson has been measured to be higher than predicted by the standard model. As always, the dust needs to settle. What’s interesting to me is that the weak force seems like one place where the SM cracks show a bit.

April 14th, 2022 at 12:09 pm

Epicycles came from real planets in real orbits but seen from the wrong perspective. Likewise, the wavefunction seems to be, or at least to represent, something real, but we may be seeing it from the wrong angle.

If it is true that quantum mechanics, as it stands today, is our Ptolemaic view of reality at that scale, then obviously we need a quantum Copernicus.

(I’ve wondered if Roger Penrose might be someone along those lines.)

April 18th, 2022 at 12:56 pm

Reasons to Doubt GR

Singularities. Does nature actually allow them?

Information Loss paradox. Is information conserved? (If so, what symmetry does it come from?) Nature of event horizon is unknown.

Dark Matter observations. Is it a particle or MOND?

No quantization of matter or energy.

Galileo → Newton → Einstein → ???

April 18th, 2022 at 1:07 pm

Reasons to Like GR

Nonlinear physical theory that explains our experience of spacetime.

Extremely well-tested.

Single unified notion relating mass and the curvature of spacetime.

Fundamentally intuitive.

April 18th, 2022 at 1:02 pm

Reasons to Doubt QM

Assumes fixed spacetime background (same as Newton).

No gravity. (No curved space.)

Linear evolution and the “measurement problem”.

Uses complex numbers and large-D Hilbert spaces. Raises question of wavefunction ontology.

Heisenberg Cut: How/where/when does the classical world emerge?

Non-classical behaviors: Interference; Superposition; Entanglement; Nonlocality; Randomly Selected Interactions; Heisenberg Uncertainty.

QM is a group effort and basically a first cut at explaining observational data. Collection of theories with no direct connection to physical reality or experience. No major quantum revolutions.

No clear physical meaning. No clear interpretation of the math.

April 18th, 2022 at 1:08 pm

Reasons to Like QM

Extremely well tested.

April 20th, 2022 at 1:49 pm

A nice video about the Stern-Gerlach experiment:

June 7th, 2022 at 1:02 pm

[…] Earlier this year I posted a five-part series about the measurement problem in quantum mechanics (see Quantum Measurement, Wavefunction Collapse, Quantum Decoherence, Measurement Specifics, and Objective Collapse). […]

June 15th, 2022 at 9:18 pm

Another possible contributor to the Heisenberg Cut: the wave-nature of matter. In particular, the frequency of those waves [see What’s the Wavelength?].

Physics’s modern spherical cow, the 1 gram paperclip, sitting “motionless” on a desk (we’ll give it a velocity of one micron per second due to vibration), has a de Broglie wavelength of:

$$\lambda = \frac{h}{mv} = \frac{6.626\times10^{-34}\ \mathrm{J\,s}}{(10^{-3}\ \mathrm{kg})(10^{-6}\ \mathrm{m/s})} \approx 6.6\times10^{-25}\ \mathrm{m}$$

In comparison, the charge radius of a proton is a whopping 0.84 × 10⁻¹⁵ m. The paperclip’s wavelength is about nine orders of magnitude smaller than the radius of a proton. (OTOH, it’s still a vast ten-plus orders of magnitude larger than the Planck length, about 1.6 × 10⁻³⁵ m.)
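
A quick check of that arithmetic, using the same inputs (1 g at 1 μm/s) and standard values of the constants. The proton radius is the CODATA 2018 charge radius, and the script is only an illustration:

```python
import math

H = 6.62607015e-34            # Planck constant, J s (exact SI value)
PROTON_RADIUS = 0.8414e-15    # proton charge radius, m (CODATA 2018)
PLANCK_LENGTH = 1.616255e-35  # m


def de_broglie_wavelength(mass_kg: float, speed_m_per_s: float) -> float:
    """lambda = h / (m v) for a slow, non-relativistic object."""
    return H / (mass_kg * speed_m_per_s)


lam = de_broglie_wavelength(1e-3, 1e-6)   # 1 g paperclip drifting at 1 micron/s
print(f"wavelength: {lam:.2e} m")
print(f"orders below proton radius: {math.log10(PROTON_RADIUS / lam):.1f}")
print(f"orders above Planck length: {math.log10(lam / PLANCK_LENGTH):.1f}")
```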

To the extent that superposition and interference depend on the wave-nature of matter, the wavelengths of macro objects put such effects (at least in paperclips) in a domain much smaller than protons.

So, the wavelength of macro objects contributes to the Heisenberg Cut, is my point.

June 22nd, 2022 at 4:20 pm

[…] be just the de Broglie wavelength that determines the Heisenberg Cut. As I posted about in Objective Collapse, I like the Diósi–Penrose objective collapse model that depends on gravity from an […]

December 18th, 2022 at 1:47 pm

> In fact, applying an operator is the mathematical equivalent of making a measurement, so I’ve never been entirely clear why that doesn’t make measurement a non-problem. At least in terms of the non-linear vector jump.

What kind of “operator” do you mean here? Projection operators are used specifically to introduce non-linearity, and are applied “when measurement happens.” The precise definition of “measurement” is still left unaddressed.

> For example, when an electron absorbs a photon, the photon vanishes. Its wavefunction vector doesn’t just jump — it goes away entirely!

This is probably a tangent, but photons don’t truly have wavefunctions, AFAIK. And if you view it from the QFT perspective, the transition is still unitary. But my QFT is weak.

> Why would we expect the Schrödinger equation to evolve linearly when …

Because physicists tend to be reductionists, and every interaction at the quantum level has thus far proven to be unitary.

December 19th, 2022 at 7:28 pm

“Projection operators are used specifically to introduce non-linearity,”

Yes. If we grant that sudden interactions between disparate systems are fundamentally nonlinear (almost by definition), then an interaction of a quantum state, Ψ, must be such. I’ve never quite understood why applying that operator isn’t a valid reflection of the situation.

My analogy is that whether we use Newton, Lagrange, or Hamilton to predict the path of a thrown baseball, nothing in that mathematical system (essentially a parameterized parabola) accounts for the bat swinging and hitting the baseball. Or the pigeon that unexpectedly flew in front of it (true story). In both cases, extra math — some kind of operator — is necessary to describe that “collapse” of the ball’s predicted motion.

In this case, the equations for the ball’s flight are nonlinear (once air drag is included), unlike the linear Schrödinger equation, but both describe a smooth evolution ignorant of unexpected future interactions. (Unless those are built in from the beginning, making the equations much more complicated.)

So, I can’t help but wonder if projecting Ψ onto measurement eigenstates might not reflect the same reality as the math describing the bat (or pigeon) contact. Is it possible the Schrödinger equation, like Newton’s humble F=ma, applies to nicely isolated systems, but isn’t nearly enough of an answer in real world conditions? #justwondering

“The precise definition of ‘measurement’ is still left unaddressed.”

It’s probably messy and might not even be fully described by QM as we know it. As a realist, I think more about what’s ‘really’ happening than about the math that describes it. I think about tiny quantum systems encountering large macro systems (in what I believe to be a classical decohered state). I explored some of what I think happens in the previous post (and in others) and won’t go into it here.

“This is probably a tangent, but photons don’t truly have wavefunctions, AFAIK.”

Can you elaborate on that? How do we account for polarization, energy states, or multi-path experiments?

“Because physicists tend to be reductionists, and every interaction at the quantum level has thus far proven to be unitary”

And (perhaps in consequence?) quantum physics has been largely stalled for over 50 years. We know it can’t be the full picture, because gravity, so we’re definitely missing something. It’s bothersome to have a mathematical theory with no clear physical picture. Even GR is at least physical and sensible. QM just feels very incomplete to me.

December 20th, 2022 at 9:59 am

> If we grant that sudden interactions between disparate systems are fundamentally nonlinear (almost by definition)

Why should we grant this, let alone by definition? All interactions we’ve found thus far (at the quantum level) are governed by Hamiltonians, which produce unitary evolution. The fact that many such tiny interactions give rise to macroscopic classical behavior is already well explained by decoherence, with no non-unitary evolution needed.

I think much of our disagreement about interpretational issues may stem from issues related to this and our earlier conversations about entanglement, but I’m not sure it’s possible to make progress in a chain of WP comments!

> Can you elaborate on that? How do we account for polarization, energy states, or multi-path experiments?

I normally think of the word “wavefunction” to mean position-space function. See here: https://physics.stackexchange.com/questions/462565/why-doesnt-there-exist-a-wave-function-for-a-photon-whereas-it-exists-for-an-el/463454#463454

Anyway, to answer what happens when a photon is emitted or absorbed, non-relativistic QM can’t help. It’s one of the reasons QFT was invented in the first place.

December 20th, 2022 at 2:21 pm

“Why should we grant this, let alone by definition?”

How else can we characterize sudden interaction with an object not covered by math describing the evolution of the [baseball|electron|whatever]?

“All interactions we’ve found thus far (at the quantum level) are governed by Hamiltonians, which produce unitary evolution.”

Right. My point is that perhaps the faith in that is misplaced, and that’s why particle physics has been somewhat stalled for decades. That faith leads us to the measurement problem and the black hole information paradox, so maybe it’s not the full picture.

“I’m not sure it’s possible to make progress in a chain of WP comments!”

We have almost diametrically different metaphysics here, so I’m not sure what progress would even involve. I doubt either of us would much sway the other towards their views! No doubt we’ve both invested considerable time and effort into reaching those views. All we can really do in comment sections is exchange them — explore the contrasts and similarities.

I’m not convinced quantum descriptions are meaningful in macro-objects, a contrast, but we’re similar on why: decoherence. We contrast, though, in how that affects reality, collapse versus no-collapse. Where should we take it from here?

Hmmm. Maybe I should refine a point. Decoherence doesn’t explain collapse; that is a separate (admittedly knotty) issue. But it is why a (single) classical reality emerges from the quantum substrate. I’m less concerned with the mathematics, which I think depends on a better understanding, than with accounting for Einstein’s “spooky” apparent vanishing of the wavefunction (granting that it has some ontological reality) or for the apparent randomness of QM.

“I normally think of the word “wavefunction” to mean position-space function.”

Ah, okay. That link made for some interesting reading. I’d already begun to get the idea that photons are a lot more complicated than they appear at first light (so to speak). I’ve read how, in single-photon experiments, the image of a photon in flight is just a metaphor. Better to picture a weak EM field from which the detector sometimes extracts a quantum of energy.

FWIW, I use the broader version of wavefunction. In large part due to thinking about wavefunctions for macro-objects or entire worlds. What exactly is the wavefunction of, say, a piano?

I’m familiar with the Schrödinger equation and QM in general not accounting for particle creation and annihilation. My understanding of QFT mainly consists of knowing that it was created to account for relativistic physics (and that it’s a field theory — particles are field disturbances).

(It comes up sometimes in discussions about the MWI or other theories involving macro-object wavefunctions when those with less background invoke “the Schrödinger equation” as the central, and only, fact. But what they really mean is the wavefunction. And as a realist, the ontology of the wavefunction is high on my list of interests. What exactly is “waving” when it comes to a piano, world, or universe?)

December 20th, 2022 at 2:59 pm

> How else can we characterize sudden interaction with an object not covered by math describing the evolution of the [baseball|electron|whatever]?

I guess I don’t see the sense in which the evolution of a baseball is “not covered by math.” Sure, the math changes once it hits an object, but I don’t see that as a problem (and doesn’t seem to contradict the unitary evolution of the microscopic components, except for the measurement problem).

December 20th, 2022 at 4:29 pm

That’s kind of what I’m getting at, though. We can define a (classical) Hamiltonian for the pitch, and it describes the smooth flight of the baseball. We can also add terms for contact with the bat (or unexpected pigeon), but these are entirely arbitrary and outside the description of the ball’s dynamics. Adding them results in sudden changes to those dynamics. Sudden velocity and acceleration changes.
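
The baseball side of the analogy can be sketched numerically: smooth, drag-free flight under gravity, plus a bat modeled as an instantaneous impulse that replaces the velocity at a chosen moment. The impulse is supplied from outside the flight equations, which is the point of the analogy. All numbers (pitch speed, release height, contact time, post-contact velocity) are hypothetical.

```python
G = 9.81  # gravitational acceleration, m/s^2


def free_flight(t, x0, y0, vx, vy):
    """Smooth drag-free projectile motion: position and velocity at time t."""
    return (x0 + vx * t, y0 + vy * t - 0.5 * G * t * t), (vx, vy - G * t)


def pitch_with_bat(t, launch, t_hit, bat_velocity):
    """Ball state at time t. The bat is modeled as an instantaneous impulse
    at t_hit that replaces the velocity outright: a term from outside the
    flight equations."""
    x0, y0, vx, vy = launch
    if t < t_hit:
        return free_flight(t, x0, y0, vx, vy)
    (xh, yh), _ = free_flight(t_hit, x0, y0, vx, vy)
    bvx, bvy = bat_velocity
    return free_flight(t - t_hit, xh, yh, bvx, bvy)


# Hypothetical numbers: 40 m/s pitch released 1.8 m up, struck at t = 0.45 s.
launch = (0.0, 1.8, 40.0, 0.0)
t_hit, bat_velocity = 0.45, (-45.0, 15.0)

for t in (0.449, 0.450):
    (x, y), (vx, vy) = pitch_with_bat(t, launch, t_hit, bat_velocity)
    print(f"t = {t:.3f} s  position = ({x:6.2f}, {y:5.2f}) m  "
          f"velocity = ({vx:6.2f}, {vy:6.2f}) m/s")
```

The position stays continuous across the contact, but the velocity jumps: a kink in the trajectory that the parabola alone never predicts.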

By analogy, why isn’t something like that appropriate with the Schrödinger equation? Why isn’t applying a measurement operator, and the resulting wavefunction “collapse”, like applying the math to model bat or bird contact to the ball model?

December 20th, 2022 at 4:59 pm

I must admit I’m terribly confused at this point. I don’t see what makes them “arbitrary.” Something like that IS appropriate in the Schrodinger equation: before, the two systems were not entangled, and so we could apply the Schrodinger equation to them separately and take the resulting tensor product. The interaction Hamiltonian entangles them, but we still use the Schrodinger equation to describe the overall system. I don’t think any of this is surprising to physicists.
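
The point about interaction Hamiltonians can be illustrated with the textbook von Neumann measurement model in its smallest form. Here is a sketch under heavy simplifying assumptions: a system qubit in superposition, a one-qubit “pointer” starting in |0⟩, and a CNOT-style unitary that flips the pointer when the system is |1⟩. Evolution stays linear and unitary throughout, yet afterward the system on its own shows no interference terms. The model does not pick a single outcome, which is where the remaining measurement question lives.

```python
import math


def measurement_interaction(state):
    """CNOT-style unitary coupling: flip the pointer qubit if the system is 1.

    Basis ordering |system, pointer>: index = 2 * system + pointer.
    It only permutes amplitudes, so it is unitary and linear.
    """
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def reduced_system_density(state):
    """2x2 density matrix of the system alone (partial trace over the pointer)."""
    return [[sum(state[2 * s + p] * state[2 * t + p].conjugate() for p in range(2))
             for t in range(2)]
            for s in range(2)]


alpha = beta = 1 / math.sqrt(2)
before = [complex(alpha), 0j, complex(beta), 0j]   # (alpha|0> + beta|1>) x |pointer 0>
after = measurement_interaction(before)            # alpha|00> + beta|11>

print("system before:", reduced_system_density(before))
print("system after: ", reduced_system_density(after))
```

Before the interaction the off-diagonal (interference) entries are 0.5; afterward they are zero, while the diagonal probabilities stay at 0.5 each.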

Quite apart from our metaphysics, I’m not sure how to make progress on this point! I wonder if there’s a specific question you have that can be answered on physics stackexchange. (Not that I think you’re confused, but you mentioned a couple times that something about this is a sticking point.)

December 20th, 2022 at 5:55 pm

“The interaction Hamiltonian entangles them, but we still use the Schrodinger equation to describe the overall system.”

And yet there is a “measurement problem”. Arguably for about 100 years. I’m just an armchair amateur speculating on what direction a solution might take. All I’ve got is a metaphor in reach of an idea. Which is that state reduction might be as simple as it seems. Once we have a Hamiltonian that includes the “measurement” interaction, reduction follows naturally.

I just can’t help but wonder if the nonlinearity that’s seen as a problem with reduction becomes obvious once it’s included in the Hamiltonian. Just like it is with baseballs.

Not sure what you think is a sticking point. Far as I can tell, we just have different views. 🤷🏼♂️

December 21st, 2022 at 9:30 am

> Not sure what you think is a sticking point. Far as I can tell, we just have different views. 🤷🏼♂️

Oh, it was in reference to “I’ve never quite understood why applying that operator isn’t a valid reflection of the situation.” (There was also another comment about not understanding something related, which I can’t find now.) I think those are well-understood issues, and aren’t controversial. More specifically, if you believe it’s a route to solving the measurement problem, but haven’t found that any physicists have proposed it, there might be something to learn by posting the question where physicists can help point out potential gaps. I’m also just an amateur, and I could be wrong about all this!

December 21st, 2022 at 1:09 pm

Ah, okay, I see. For me the phrase, “I’ve never understood…” can express a hopefully polite sense of disbelief. It’s somewhat like when people say, “I’ve never understood why people like [baseball|boxing|golf|NASCAR racing|skydiving|mountain climbing|…].” 🤨 I’m aware of what conventional QM says, but — given its various long unsolved conundrums — I think what are taken as axioms (such as unitarity) are worth questioning.

“I think those are well-understood issues, and aren’t controversial.”

Exactly why I think they’re worth questioning and taking a second look at. Unitarity is axiomatic in conventional physics, but (as far as I’ve found) it’s not built on the same foundation (symmetry) as physical conservation laws. It’s an element of faith that information can’t be lost.

But — for example — what if it becomes so dispersed that its energy level at any one point is below the Planck limit for energy/space or energy/time? That would seem to cause the information to vanish below the “QM horizon”. And one (simple) solution to the BH information paradox is that information can be lost.

“…but haven’t found that any physicists have proposed it,…”

The things I talk about here are a synthesis of ideas by Roger Penrose, Lee Smolin, Sabine Hossenfelder, Peter Woit, Scott Aaronson, Jim Baggott, Philip Ball, and a variety of others whose books or papers (or blogs) I’ve read. There are a number of good QM lecture series on YouTube, including some from MIT (highly recommended). I’ve been interested in this sector of physics since before quarks!

Several of the people I just mentioned are iconoclasts with ideas contrary to established physics. Many share a belief that QM can’t be entirely right, and it may take a paradigm shift to progress. We need another Copernicus or Einstein with a new point of view.

Which would never be me! I’m just dabbling, but I enjoy seeing, for instance, Smolin writing about time being fundamental, or Penrose questioning unitarity, when I’ve pondered those things myself. Makes me think I’m not entirely at sea! 🤡⛵

December 21st, 2022 at 1:13 pm

p.s. That said, I’m always open to the possibility that I have my facts wrong. Theories, ideas, speculations, they are what they are, but if I get my facts wrong, that’s another matter. I may be fanciful, but I try to be factual.

December 22nd, 2022 at 1:41 pm

Ah, I see. In my experience, differences in metaphysics result in differences in beliefs around what even constitutes “facts.” So I think we may be at the limit of what we can fruitfully discuss! I thank you for our conversation, and happy to chat again should the desire arise!

December 23rd, 2022 at 1:19 am

It was a fun discussion, you’re welcome back any time!