There’s a discussion that’s long lurked in a dusty corner of my thinking about computationalism. It involves the definition and role of algorithms. The definition isn’t particularly tricky, but the question of what fits that definition can be. Their role in our modern life is undeniably huge — algorithms control vast swaths of human experience.

There’s a discussion that’s long lurked in a dusty corner of my thinking about computationalism. It involves the definition and role of algorithms. The definition isn’t particularly tricky, but the question of what fits that definition can be. Their role in our modern life is undeniably huge — algorithms control vast swaths of human experience.

Yet some might say even the ancient lowly thermostat implements an algorithm. In a real sense, any recipe is an algorithm, and any process has some algorithm that describes that process.

But the ultimate question involves algorithms and the human mind.

Since the definition isn’t tricky, let’s start with that. For a detailed look, see my posts Calculated Math and Coded Math, but basically:

An algorithm is an ordered list of instructions that can be executed by some engine. Algorithms, at minimum, entail: selection, state, and some notion of address.

Simple enough, but it probably requires some unpacking.

The wiki article shows there is quite a bit to it, as well as a long history. (Euclid’s algorithm is yet another thing the ancient Greeks already thought of.)

Sticking with a basic approach, the “ordered list” part means the instructions are in an order that matters. The steps of a recipe must be performed in order: first mix the ingredients; then bake the cake.

The “executed by some engine” part just means there is someone or something that understands the instructions and can do what they say (that part is kind of important). In ancient times, that was a person, but these days most algorithms are executed by CPUs.

Continuing the cooking analogy, with a recipe, the engine is the cook.

[Long ago, the term “computer” was a job title for a person who used mathematical algorithms (such as multiplication and division) to perform mathematical analysis. Now the term refers to a machine that sits on your desk (or in your pocket or on your wrist) and does the same thing.]

§

Now we get into something a little more technical, but which is important to understand.

Algorithms are usually deemed as having three general properties: selection, state, and address. (Many would call them required properties. I’m sympathetic to that view.)

The first, selection, is a combination of (unconditional) branching and conditional branching. We see the former in Go-To type instructions and the latter in If-Then instructions.

Note that while most instruction sets have more sophisticated versions of conditional and unconditional branches (Go-Sub and Switch, for example), any algorithm can be implemented with just the first two.

Even the If-Then-Else construct is optional. The combo of If-Then plus Go-To can do anything If-Then-Else can.

Essentially, selection is the ability for the instructions to say: At this point, IF such-and-such is true, THEN do this thing (ELSE do that other thing). The crucial point here is that it entails reacting to current conditions and jumping to an appropriate response.

Which appropriately jumps us to the third item, address. This just means we know the location to branch to. You may have seen this in certain forms:

If you don’t have children, skip to question #42.

That’s a tiny algorithm. There’s a conditional branch (on whether you have children) and an address (question #42).

The final item, state, refers to two things: Firstly, that the algorithm itself is always in some state. Secondly, that most algorithms need to preserve the state of certain pieces of information for some number of instruction steps.

For an example of the latter, consider the algorithm of counting the paper money you carry (assuming you carry paper money anymore). When you do that, you keep a running sum as you go through the bills. That running sum is an information state you maintain.

You can think of the algorithm state as the cook’s finger on the current instruction of the recipe. Or you can think of it as the program counter in the CPU. Basically the same thing.

§

Okay, so that’s a pretty clear (I hope) definition of an algorithm. Let’s apply it to some situations…

§

A thermostat seems to be an implementation of an underlying algorithm that describes a process of temperature homeostasis (if you’ll forgive the pun).

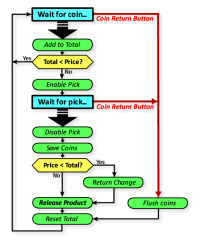

The apparent algorithm is a simple one:

If (temp-is-wrong) Then {do-something}

Which might be as simple as throwing another log on the fire or as complicated as running the HVAC of a large building. There is only a difference of scale.

That conditional branching satisfies a key requirement. What about address and state? A thermostat knows what state it’s in (doing something about the temp or not), so we have a “stateful” system.

Address seems like it might be missing. An actual algorithm would likely have a structure like this:

[start]

If (temp-is-okay) Then {Goto skip}

{do-something}

[skip]

Goto start

The code branches (“jumps”) around the do-something part if it doesn’t need to do anything. The [skip] is a “label” that creates a target address for the jump.

I’ve included the notion that a thermostat keeps on keeping on — in algorithm terms, it Loops over a sub-set of instructions. (Loop constructs also only require Go-To and If-Then to implement.)

Is there something analogous in the thermostat? When things are “okay” thermostats just don’t activate the switch. They may notice temps in the acceptable range — mechanical thermostats will move — but otherwise they don’t do much.

Specifically, they don’t seem to be skipping to the end and very rapidly going through the implied loop in the code above. There is no formal sense of the thermostat following a list of instructions that tell it:

- [start]

- Check the temp.

- Is it okay?

- Yeah? Then jump to [skip]

- Jump to [start]

Wash, rinse, repeat.

§

An algorithm usually has a list of instructions (a stored program) as well as an implicit “engine” that understands and executes them.

A recipe does. The algorithm for long division does. Euclid’s algorithm does. Actual computer algorithms all do.

But a thermostat operates more in virtue of what it is. We can view it as algorithmic, but the question is how literally to take that when there is no obvious program or engine in its operation.

I suppose one might see the machine’s design as the program, and the engine as the machine itself executing the program built into its design.

That seems a metaphorical view to me. Which is fine. I just wonder about the risk of conflating formal algorithm concepts what might only be analogous. Metaphors can break.

Part of it is that I think algorithms usually describe behaviors rather than objects. They tend to describe what a system does rather than what it is.

(That said, there are some objects that can be algorithmically defined as well as a few — such as the Mandelbrot set — that are only algorithmically defined.)

§

There is no question algorithms can describe any machine or natural process — that’s what modeling and simulation are all about.

Such algorithms can produce lists of numbers that can be mapped to real world systems, like weather or bridges. But that is not the same as the claim that a physical process is algorithmic.

For example, a while ago I had a discussion about whether an algorithm described or defined a brain neuron’s behavior.

An algorithm can describe a neuron, but is the neuron itself an algorithm?

A neuron algorithm might look a bit like this:

[start]

Set sum = 0

For Each input Do:

Set sum = sum + input

If (THRESHOLD < sum) Then:

Set output = FIRE

Goto start

The salient point is that an algorithm checks each input one-by-one, because the very nature of algorithms is step-by-step.

But a neuron responds holistically to all inputs at once; its nature is analog, not discrete.

§

This, I think is the key point.

Neither thermostats nor neurons are executing step-by-step loops. There is no obvious stored program providing the steps nor an executing engine performing them.

These are physical analog systems. Algorithms, by definition, are discrete information systems.

§

For a while, I’ve been considering a post about a very specific algorithm: One that solves the The Eight Queens puzzle.

I meant to do it when I wrote about virtual causal systems, but never got around to it. It’s a nice example of virtual rules as well as a great example of how algorithms can be realized in diverse ways.

It might make a nice companion to this post (and I just happen to have some code I can link to)…

Stay algorithmic, my friends!

∇

January 8th, 2020 at 5:54 pm

It seems like the distinction is in the underlying physical system. The mechanical thermostat is doing pretty much the only thing it can do. The algorithmic engine is performing one of many things it could be doing. While doing the thermostat algorithm, a computer chip is essentially a thermostat (or at least the controller portion).

This isn’t a black or white thing. Systems can be more or less flexible in varying ways. Nervous systems are very flexible in certain ways, but not in others. They are constantly rewiring themselves, although there are limits to that.

But a computer “rewires” itself every time it loads a new program. With that program loaded, it is, while holding that program, a physical manifestation of that algorithm, a system that, while holding the program, can only do what it can do. It’s like a Swiss army knife on steroids, that can quickly be reconfigured to perform work that previously required mechanisms specifically designed (or evolved) for that purpose.

“Neither thermostats nor neurons are executing step-by-step loops.”

Neurons don’t, just as transistors don’t. But neural circuits enable looping signals (referred to as recurrent or reentrant signaling in neuroscience). Binding between neural processes are generally recurrent. Actually, some neurons have been known to synapse on themselves, which essentially creates a looping mechanism.

January 8th, 2020 at 7:26 pm

“The algorithmic engine is performing one of many things it could be doing.”

I think there’s an important distinction here. On the one hand, the engine only does things defined in its instruction set, so in a sense, the engine only does one thing.

If we stood inside the CPU watching the instructions flow in and be executed, it would be hard to say what software was running. At that level, as a flow of instructions, it looks all the same.

It’s in doing those same things in different orders that different virtual systems arise. It’s like how we can build lots of different things with lots of (basic) LEGOs. (Or define lots of creatures with just DNA.)

Computers are fast, so we can build big things (with lots of tiny LEGOs) in reasonable time. But looked at closely, it’s just made of LEGOs.

“Systems can be more or less flexible in varying ways.”

Very true. The chip that runs my microwave might be special purpose or general purpose with microwave algorithms. There is even middle ground — special purpose general chips that are special in being for appliances, but general in applying to many kinds of appliance.

Quite a spectrum from TMs to UTMs!

“But a computer ‘rewires’ itself every time it loads a new program.”

Metaphorically, yes. … You might want to reconsider the terminology in light of research about, hopes for, and experiments with, computers that actually (literally) do rewire themselves (in one way or another). 😉

“It’s like a Swiss army knife on steroids,”

Ha. You know, all things considered, if not on sale already, how long before we have a Bluetooth-enabled programmable Swiss army knife? (Not nano-, but micro-machines allow it to reconfigure itself into a variety of tools, including new ones. 😀 )

“But neural circuits enable looping signals (referred to as recurrent or reentrant signaling in neuroscience).”

(As an aside, as you may know, “reentrant” is a term found in algorithm analysis. (If not, it refers to whether the thread of execution can safely reenter a function.))

I don’t know what your electronics background is, but I would see those more as oscillating circuits (something electronics designers have to fight to avoid). As such, I’d see that as an analog process.

However! Cyclic behavior can be a clock, and a step-by-step mechanism usually needs a clock, so oscillating circuits in the brain are a nice argument for algorithmic behavior.

If so, I think it would actually be more of a TM than a UTM — (at least so far) we can’t load and unload new “programs” into the brain. Not as data. We can learn, and we can forget, though.

But what I mean is, if there’s a mind algorithm, it’s an algorithm that itself, can grow and learn and change. It has to be self-reflective and self-modifying. It wouldn’t be an engine and code (a UTM), the way it treats the “tape” (input, life) is built in (a TM). Our TM literally does rewire itself.

A different view might be that what evolved in the brain is a biological non-TM approach to computation. Therefore it has aspects that resemble digital computing as we know it, but is something different.

An argument maybe favoring that last is: As far as we know, extremely complex computer systems demonstrate no signs of having phenomenal experience. As is often pointed out, modern systems are much better than us in terms of speed, memory, and bandwidth. Maybe it’s a serious parallelism, but so far consciousness seems to require something beyond a TM.

January 8th, 2020 at 9:02 pm

“Metaphorically, yes. … You might want to reconsider the terminology in light of research about, hopes for, and experiments with, computers that actually (literally) do rewire themselves (in one way or another).”

The “wiring” part is metaphorical since there aren’t any wires, but it’s also metaphorical in both nervous systems and neuromorphic hardware. In all three cases, it’s physical changes in the system. The changes in traditional hardware may be in RAM, making them very transitory, whereas in the others it might be more enduring, although the traditional versions we can get to hard drives and SSDs for the enduring aspects.

“As an aside, as you may know, “reentrant” is a term found in algorithm analysis.”

I do know about the thread version (along with race conditions, mutexes, semaphores, etc), and every time I see it mentioned in neuroscience context I briefly trip on it due to that meaning. What’s funny is that everyone has their preferred terminology, with some insisting on “feedback”, others “recurrence”, and others “reentrant.” It makes reading neuroscience papers a hassle sometimes.

“As far as we know, extremely complex computer systems demonstrate no signs of having phenomenal experience.”

I think that’s a matter of architecture rather than the exact mechanics of computation. But that’s the view of someone who sees qualia as information, in a deflated manner than many people would insist is illusionism.

January 9th, 2020 at 12:50 am

“The ‘wiring’ part is metaphorical since there aren’t any wires, but it’s also metaphorical in both nervous systems and neuromorphic hardware.”

I think we’re totally on the same page here, but I’m a little thrown by the phrasing of “aren’t any wires” and “it’s physical changes in the system,” so I’m not 100% sure.

Just to be clear, as a former electronics guy, “rewiring” to me does mean “physical changes” in the system, changing the network topology, whereas I don’t see reprogramming in the same light (despite also, of course, being physical).

These network pathways aren’t always wires, per se, which is why the “aren’t wires” threw me. I didn’t mean metaphorical in the sense of lacking wires. I meant that devices today generally can’t alter their topology, but there has been work on devices that can — CPUs that can turn themselves into a different CPU, for instance. It was just an aside about the term “rewire” — no biggie.

That said, correct me if I’m wrong, but do I understand neurons can form new connections, and that existing connections can wither and effectively permanently die? If so, the brain’s ability to rewire is literal, not metaphorical!

“I do know about the thread version…”

(As an irrelevant aside, a vanity language I invented (BOOL) was made hugely more complicated by the need for subroutines to be reentrant. A matter of where to put local variables when you’re not using a stack structure.)

I’ve run into that word games business in philosophy. It makes it a pain, too, sometimes.

“I think that’s a matter of architecture rather than the exact mechanics of computation.”

Could be. As I said, it might be the serious parallelism. And the very large very complex network with active connections.

The nagging question remains of why there is something it is like to be a conventional computing system. Call it an “illusion” or a “dashboard” or whatever, but the single Descartesean fact remains: there is something it is like to be a human.

That it remains a stubbornly hard problem seems like a clue that there is something special or unique or new going on, even if that turns out to be fully natural.

January 9th, 2020 at 7:23 am

“That said, correct me if I’m wrong, but do I understand neurons can form new connections, and that existing connections can wither and effectively permanently die? If so, the brain’s ability to rewire is literal, not metaphorical!”

You’re right about neurons, although most synapses are formed during development and the rest are predominantly in the form of strengthening or weakening of those connections. I would just note that when a computer system stores information, whether in registers, RAM, firmware, SSD, hard drive, etc, those are physical changes to the system. I don’t see any fundamental difference between those types of changes and changes in synapse strength.

January 9th, 2020 at 8:19 am

“…most synapses are formed during development…”

Right. Thanks for confirming the part about new connections; I wasn’t 100% sure about that, either, and I figured you’d know. I think that’s more recent knowledge that’s over-written old knowledge I had long ago that new (rewired) connections never happen?

(Or did we always know that, and I’ve conflated the idea with how new neurons don’t grow. Except there’s a flag in my memory that maybe we’ve even seen some cases of that, now? I don’t pay super close attention to neurophysics like I do quantum physics.)

“I would just note that when a computer system stores information, whether in registers, RAM, firmware, SSD, hard drive, etc, those are physical changes to the system.”

😀 😀 You keep touching that point, that memory is physical. Of course it it; I’ve never said otherwise. I agree completely.

There is an interesting difference in how ephemeral computer memory is — it’s another aspect of the speed computers bring to building with LEGOs. For us, the equivelent might be scratch paper? (An abacus is interesting in being a device with memory like RAM in this mode.)

The more persistent kinds of memory would be like books, records, tapes, CDs, etc, are to us. Physical in all cases. They have to be.

“I don’t see any fundamental difference between those types of changes and changes in synapse strength.”

Between RAM or disc and synapse learning potentials? I agree they’re similar. In some regards synapse learning, as I understand it, is more sophisticated than computer memory. It would take multiple memory locations and a bit of code to implement a “synapse learning algorithm” — the process is more algorithmically complex than reading or writing bytes.

Let me ask, are you also saying network topological changes are the same thing as dynamical changes to network nodes (which do not alter topology)?

January 9th, 2020 at 1:34 pm

“Or did we always know that, and I’ve conflated the idea with how new neurons don’t grow.”

Could be. I’m not aware that anyone thought new synapses didn’t form. There was a study a few years ago that explored what causes them to form in adults. (The neurons expect a certain level of activity. If they don’t get it, they’re more ready to form new synapses.) But most of the new synapses form early in development, then are pruned as we grow. It’s why there are so many critical periods in development such as in vision development.

“Let me ask, are you also saying network topological changes are the same thing as dynamical changes to network nodes (which do not alter topology)?”

It seems to me that whether something represents a change in topology or not depends on the level of organization we’re looking at. But regardless, in terms of functionality, I think they’re doing similar work.

January 9th, 2020 at 2:00 pm

No question about similar work. Consider a simple network with A-link-B and B-link-C. Presuming the network is capable of routing, A can have a virtual link to C. Alternately, we can change the topology to provide an actual A-link-C.

I agree they can (often) amount to the same thing functionally, but sometimes the difference matters. If node B goes down, the virtual link is lost, but the physical A-link-C is not. There is also the potential for node B to affect the traffic between nodes A and C — as a time delay, if nothing else.

Ha! We’re at one of those points where I’m pointing out differences and you’re seeing them the same. As far as the level of organization, here we just looking at the network level. Which can be the network of the brain, or the wiring of a motherboard, or the internal wiring of a CPU. In contrast, synapse learning or memory/routing changes.

It’s interesting… This is all a digression off what I meant as a passing comment about the word “rewiring”. What you meant was, of course, perfectly clear, and I was just being wry about some special cases that actually do rewire themselves (and so does the brain sometimes).

(I think I may have mentioned I lean towards restrictive word definitions… when you originally said “rewire” a part of my brain went, “Yeah,… but not really…” 😀 And, as I mentioned, some of that comes from having been an electronics guy before I was a software guy.)